Ad platforms don’t just react to your targeting and creatives — they actively learn from the signals your campaign produces. The issue is that those signals are often flawed, and once the system starts learning from them, it doesn’t correct the problem — it amplifies it.

This is where many campaigns quietly break.

Why Optimization Doesn’t “Fix” Weak Campaigns

A common assumption is that Meta will stabilize performance over time. In practice, the system optimizes toward whatever signals it receives, regardless of their quality.

If your campaign generates low-intent conversions early on, the algorithm treats them as successful outcomes to replicate. As a result, you may observe several patterns developing at the same time:

- Stable or improving CPL, because the platform identifies cheaper users who behave similarly to early converters.
- Declining lead quality, which becomes visible through sales feedback, lower acceptance rates, or reduced pipeline progression.
- Narrowing audience behavior, as delivery concentrates around segments that convert easily but lack real buying intent.

This often creates a false sense of performance improvement, a problem explored further in “How to analyze Facebook ad performance beyond CTR and CPC”.

How Bad Signals Enter the System

The problem usually starts earlier than most teams expect, at the interaction level rather than the conversion level.

Several mechanisms introduce misleading signals into the system:

-

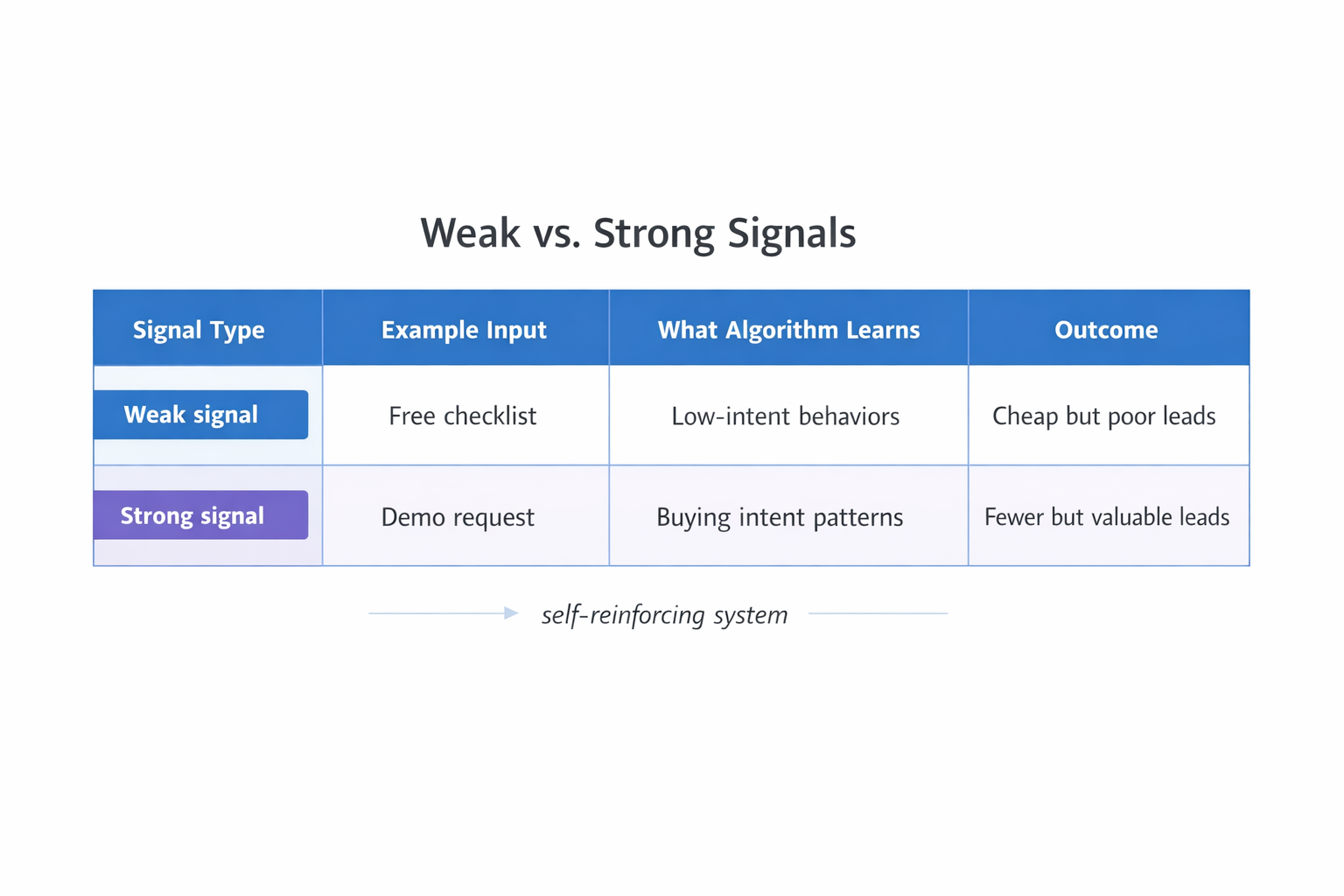

Low-friction offers that attract curiosity instead of intent, such as free tools, generic checklists, or broad webinars. These generate volume from users who are not actively evaluating solutions.

-

Over-simplified forms that remove qualification pressure, allowing a wider range of users to convert and signaling that these behaviors represent success.

-

Retargeting-heavy conversion volume, which biases the system toward warm users and limits its ability to learn from cold audiences.

-

Misaligned conversion events, where optimization focuses on form submissions instead of qualified leads or revenue-driving actions.

Each of these produces a dataset that appears valid inside Ads Manager but does not reflect actual business outcomes. This mismatch is closely related to the issue described in “Why your ads get clicks but no sales”.

What the Algorithm Actually Learns

The system does not understand “lead quality” in business terms. Instead, it identifies patterns in observable behavior.

When multiple conversions occur within similar behavioral clusters, the algorithm increases delivery toward those clusters in future auctions. Over time, this leads to:

- Higher bid density around similar users, increasing competition for the same behavioral profiles.
- Reduced exploration, as the system becomes less likely to test new audience segments.
- Faster delivery to easy converters, even when they do not translate into revenue.

This creates a reinforcing loop where weak signals become stronger simply because they are repeated more often.
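The reinforcing loop can be illustrated with a deliberately simplified toy model. This is not how Meta's delivery system works internally; it only assumes, for illustration, that each round's delivery share is proportional to the conversions each segment produced in the previous round. The segment names and conversion rates are made up.

```python
def simulate_delivery(rounds=10, impressions=10_000):
    """Toy model: delivery share follows the previous round's conversions."""
    # Hypothetical form-submission rates per impression (assumed values).
    conv_rate = {"easy_converters": 0.04, "qualified_buyers": 0.01}
    # Start with an even split between the two segments.
    share = {"easy_converters": 0.5, "qualified_buyers": 0.5}
    history = []
    for _ in range(rounds):
        # Expected conversions per segment in this round.
        conversions = {
            seg: impressions * share[seg] * conv_rate[seg] for seg in share
        }
        total = sum(conversions.values())
        # Next round's delivery is reallocated toward whatever converted.
        share = {seg: conversions[seg] / total for seg in share}
        history.append(dict(share))
    return history


if __name__ == "__main__":
    for i, s in enumerate(simulate_delivery(), start=1):
        print(f"round {i}: easy={s['easy_converters']:.3f}")
```

With these assumed rates, the easy-converting segment goes from a 50% share to 80% after one round and above 99% by round ten, even though nothing in the model says those conversions are worth more.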

Observable Signs of Signal Degradation

You can usually detect signal issues by looking beyond surface metrics in Ads Manager and comparing them with downstream sales data.

Common indicators include:

- High CTR combined with weak downstream performance, where ads attract attention but fail to produce meaningful pipeline movement.
- Stable CPL alongside declining acceptance rate, indicating that cost efficiency is holding steady while lead quality deteriorates.
- Increasing audience overlap over time, as the system repeatedly targets the same behavioral segments, a pattern explained in “The role of audience overlap in Facebook ads performance”.
- Rising frequency without proportional gains, suggesting that delivery favors familiarity rather than expansion.
- Unstable performance when scaling budgets, where results drop once the system is forced outside its learned patterns.

These are not random fluctuations — they point to a structural signal problem.
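One of these indicators, stable CPL alongside a declining acceptance rate, is easy to check once ad spend is joined with sales feedback. The sketch below is one possible way to do it, assuming weekly totals exported by hand; the `WeeklyStats` fields, the function name, and both thresholds are hypothetical and should be tuned to your own data.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    week: str
    spend: float
    leads: int           # assumed to be greater than zero
    accepted_leads: int  # leads accepted by sales (from the CRM, not Ads Manager)

    @property
    def cpl(self) -> float:
        return self.spend / self.leads

    @property
    def acceptance_rate(self) -> float:
        return self.accepted_leads / self.leads


def signal_degradation(weeks, cpl_tolerance=0.05, acceptance_drop=0.10):
    """Return True if CPL held steady or improved while acceptance rate fell.

    Compares the first and last period. Both thresholds are relative
    changes and are illustrative assumptions, not benchmarks.
    """
    first, last = weeks[0], weeks[-1]
    cpl_change = (last.cpl - first.cpl) / first.cpl
    acceptance_change = (
        last.acceptance_rate - first.acceptance_rate
    ) / first.acceptance_rate
    return cpl_change <= cpl_tolerance and acceptance_change <= -acceptance_drop


if __name__ == "__main__":
    weeks = [
        WeeklyStats("week 1", spend=1000.0, leads=50, accepted_leads=20),
        WeeklyStats("week 4", spend=1000.0, leads=55, accepted_leads=11),
    ]
    print(signal_degradation(weeks))
```

In the sample data, CPL falls from 20.00 to roughly 18.18 while the acceptance rate halves from 40% to 20%, so the check flags the campaign.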

Why Scaling Makes It Worse

Scaling does not fix weak signals; it exposes them.

When you increase budget, the algorithm must find more users who match the learned patterns. If those patterns are low quality, expansion leads to weaker matches and declining performance.

In practice, this results in:

- Rising CPA after initial scaling, as the system struggles to maintain efficiency outside core segments.
- Increased volatility in performance, with inconsistent delivery and unstable results.
- Faster degradation of conversion rates, particularly in new audience segments.

This is why many campaigns that look strong at small budgets fail when scaled, a pattern also discussed in “The science of scaling Facebook ads without killing performance”.

How to Break the Feedback Loop

The solution is not to adjust bids or budgets, but to change the signals the system learns from.

This requires deliberate intervention:

- Introduce friction intentionally, by adding qualifying questions, requiring business emails, or gating access to higher-value content.
- Shift optimization events closer to revenue, using qualified leads, booked calls, or pipeline-stage conversions instead of raw submissions.
- Separate campaigns by intent level, ensuring that prospecting and retargeting signals do not contaminate each other.
- Control early conversion sources, especially during the first few days, when the system is forming initial patterns.
- Scrutinize early performance, since fast success often indicates weak signals rather than strong ones.

Although these changes may increase CPL in the short term, they improve the quality of the data the algorithm uses, which leads to more reliable performance over time.
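As a rough illustration of the first two interventions, the sketch below decides whether a form submission should count as the conversion you optimize toward. It assumes a lead is a plain dictionary with hypothetical `email` and `booked_call` fields, and the list of free email domains is a small illustrative sample. How the qualified event is then reported back to the ad platform is outside the scope of this example.

```python
# Illustrative sample only; a real list would be much longer.
FREE_EMAIL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com", "hotmail.com"}


def is_business_email(email: str) -> bool:
    """Treat any well-formed address outside the free-mail list as business."""
    local, sep, domain = email.strip().lower().rpartition("@")
    if not (local and sep and domain):
        return False  # missing "@" or an empty local part / domain
    return domain not in FREE_EMAIL_DOMAINS


def should_count_as_conversion(lead: dict) -> bool:
    """Count only leads that pass both qualification checks.

    The `email` and `booked_call` keys are assumptions for this sketch.
    """
    return is_business_email(lead.get("email", "")) and bool(
        lead.get("booked_call", False)
    )


if __name__ == "__main__":
    leads = [
        {"email": "ana@acme-corp.example", "booked_call": True},
        {"email": "someone@gmail.com", "booked_call": True},
        {"email": "li@acme-corp.example", "booked_call": False},
    ]
    print([should_count_as_conversion(lead) for lead in leads])
```

Only the first sample lead passes both checks. The point of the design is that the stricter definition, not the raw submission, becomes the signal the campaign is optimized toward.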

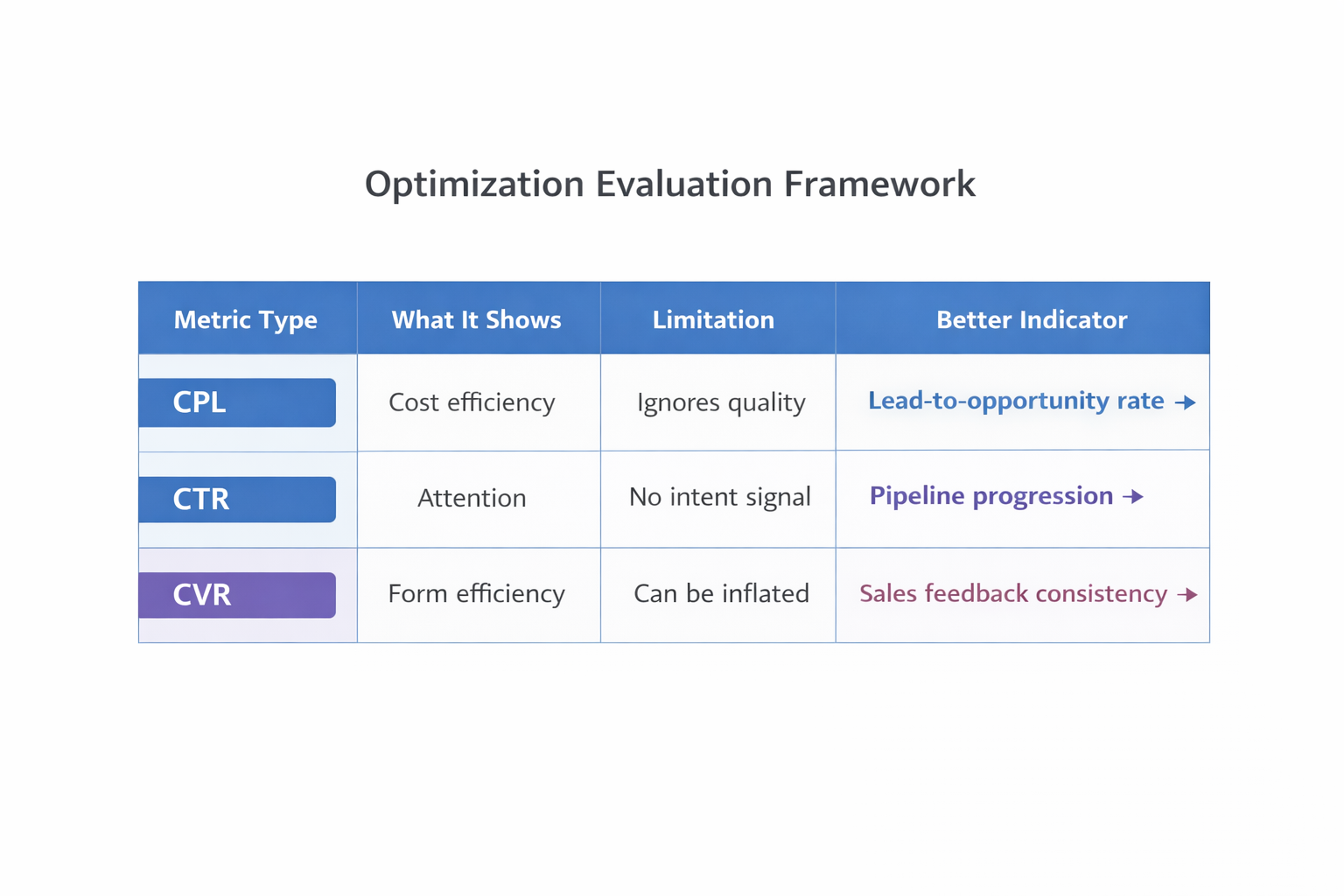

A More Accurate Way to Evaluate Optimization

Instead of asking whether performance is improving, it is more useful to evaluate whether the system is learning from better signals.

This can be assessed by tracking:

- Lead-to-opportunity rate, rather than focusing only on conversion rate.
- Consistency in sales feedback, across different campaigns and audiences.
- Performance stability after scaling, which indicates whether learning generalizes beyond initial segments.
- Audience diversity over time, reflecting whether the system continues to explore new users.

If these improve, optimization is aligned with business outcomes rather than surface metrics.
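The first of these metrics can be computed per campaign from a CRM export. The following is a minimal sketch that assumes each lead record carries hypothetical `campaign` and `became_opportunity` fields; the field names and sample data are illustrative.

```python
from collections import defaultdict


def lead_to_opportunity_rate(leads):
    """Return {campaign: opportunities / leads} for each campaign seen."""
    totals = defaultdict(int)
    opportunities = defaultdict(int)
    for lead in leads:
        totals[lead["campaign"]] += 1
        if lead["became_opportunity"]:
            opportunities[lead["campaign"]] += 1
    return {name: opportunities[name] / totals[name] for name in totals}


if __name__ == "__main__":
    sample = [
        {"campaign": "prospecting", "became_opportunity": False},
        {"campaign": "prospecting", "became_opportunity": True},
        {"campaign": "prospecting", "became_opportunity": False},
        {"campaign": "prospecting", "became_opportunity": False},
        {"campaign": "retargeting", "became_opportunity": True},
        {"campaign": "retargeting", "became_opportunity": False},
    ]
    print(lead_to_opportunity_rate(sample))
```

Tracking this figure next to CPL for each campaign makes it visible when cheaper leads are also less likely to become opportunities.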

Final Perspective

Ad platforms do not distinguish between good and bad outcomes — they respond to repetition. When a campaign generates consistent signals, the system assumes those signals are valuable and scales them accordingly.

If those signals are weak, optimization will reinforce them and gradually reduce performance quality. The only reliable way to improve results is to control what the algorithm is allowed to learn from.

Once that shifts, performance does not just improve — it becomes more stable and scalable.