You usually don’t notice data problems when everything is “working.”

Leads are coming in. CPL looks acceptable. The dashboard doesn’t raise any alarms. Then a few weeks later, sales starts pushing back — leads aren’t relevant, calls don’t convert, pipeline quality drops.

At that point, most teams look at targeting or creatives.

But the issue often started earlier — in how the data was collected.

When the Algorithm Learns the Wrong Behavior

Meta doesn’t understand what a “good lead” is unless you show it.

It reacts to patterns. If a certain type of user fills out your form quickly, the system will try to find more users like that. It doesn’t ask whether those users actually buy.

You can see this mismatch when you put Ads Manager numbers next to what sales reports.

A common situation: CPL trends down over 5–7 days, while sales acceptance rate drops at the same time. From the platform’s perspective, performance improved. From a business perspective, it got worse.

That gap almost always points to a signal problem, not a targeting issue.

Where Data Starts Losing Integrity

The breakdown rarely comes from something extreme. It’s usually small “performance optimizations” that slowly distort the dataset.

For example, removing form fields to increase volume.

On day one, results look better — more leads at a lower cost. But within a few days, you start seeing patterns:

- submission time drops to just a few seconds;
- duplicate or incomplete information increases;
- follow-up response rates fall sharply.

What changed isn’t just volume — it’s the type of user entering the funnel. The algorithm picks up on that immediately.

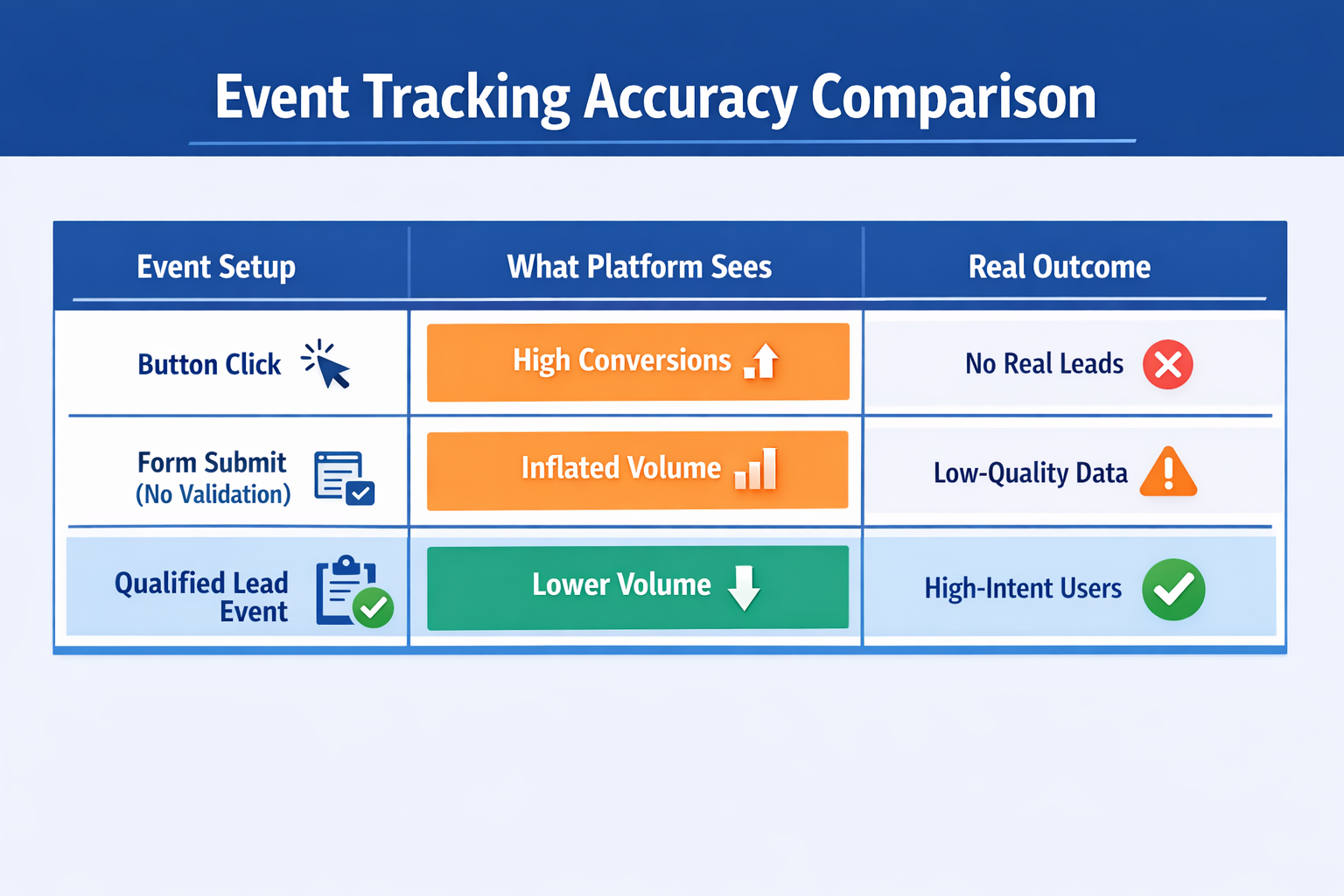

Another example is event tracking.

If you fire a conversion event on a button click instead of a confirmed submission, the system starts optimizing for clicks, not actual leads. You’ll often see inflated results that don’t translate into business outcomes — something closely related to what’s discussed in Ad Metrics That Lie: When Good Numbers Hide Bad Performance.
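One way to picture the difference is a small gate in front of the tracking call. This is a minimal sketch, not Meta's API: `FormSubmission`, `is_confirmed_lead`, `track_lead`, and the `send_conversion_event` callback are hypothetical names, and the rule for what counts as "confirmed" (stored in the CRM with at least one contact field) is an assumption you would replace with your own.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class FormSubmission:
    """Hypothetical record of what the backend received for one form attempt."""
    email: Optional[str]
    phone: Optional[str]
    saved_to_crm: bool  # True only once the lead was actually stored


def is_confirmed_lead(sub: FormSubmission) -> bool:
    """Assumed rule: the lead was stored and has at least one contact field."""
    has_contact = bool(sub.email) or bool(sub.phone)
    return sub.saved_to_crm and has_contact


def track_lead(sub: FormSubmission,
               send_conversion_event: Callable[[str], None]) -> bool:
    """Fire the conversion event only for confirmed submissions.

    `send_conversion_event` stands in for whatever tracking call you use;
    a button click alone never reaches it.
    """
    if not is_confirmed_lead(sub):
        return False
    send_conversion_event("Lead")
    return True
```

With this shape, a click on the submit button that fails validation or never reaches the CRM produces no event, so the platform only sees submissions that exist on your side.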

How Distorted Signals Scale

Once low-quality signals enter the system, they don’t stay contained.

The algorithm groups users based on shared behavior. If your initial conversions come from low-intent users, Meta builds audiences around those patterns.

You can watch this happen:

- frequency climbs quickly, but engagement quality drops;
- lookalike audiences scale fast, then stall;
- performance becomes inconsistent across days, even with stable budgets.

At that point, increasing spend usually makes things worse, not better.

The system is doing exactly what it’s designed to do — it just has the wrong input.

This is also why many advertisers struggle with scaling, even when early metrics look strong, as explained in The Science of Scaling Facebook Ads Without Killing Performance.

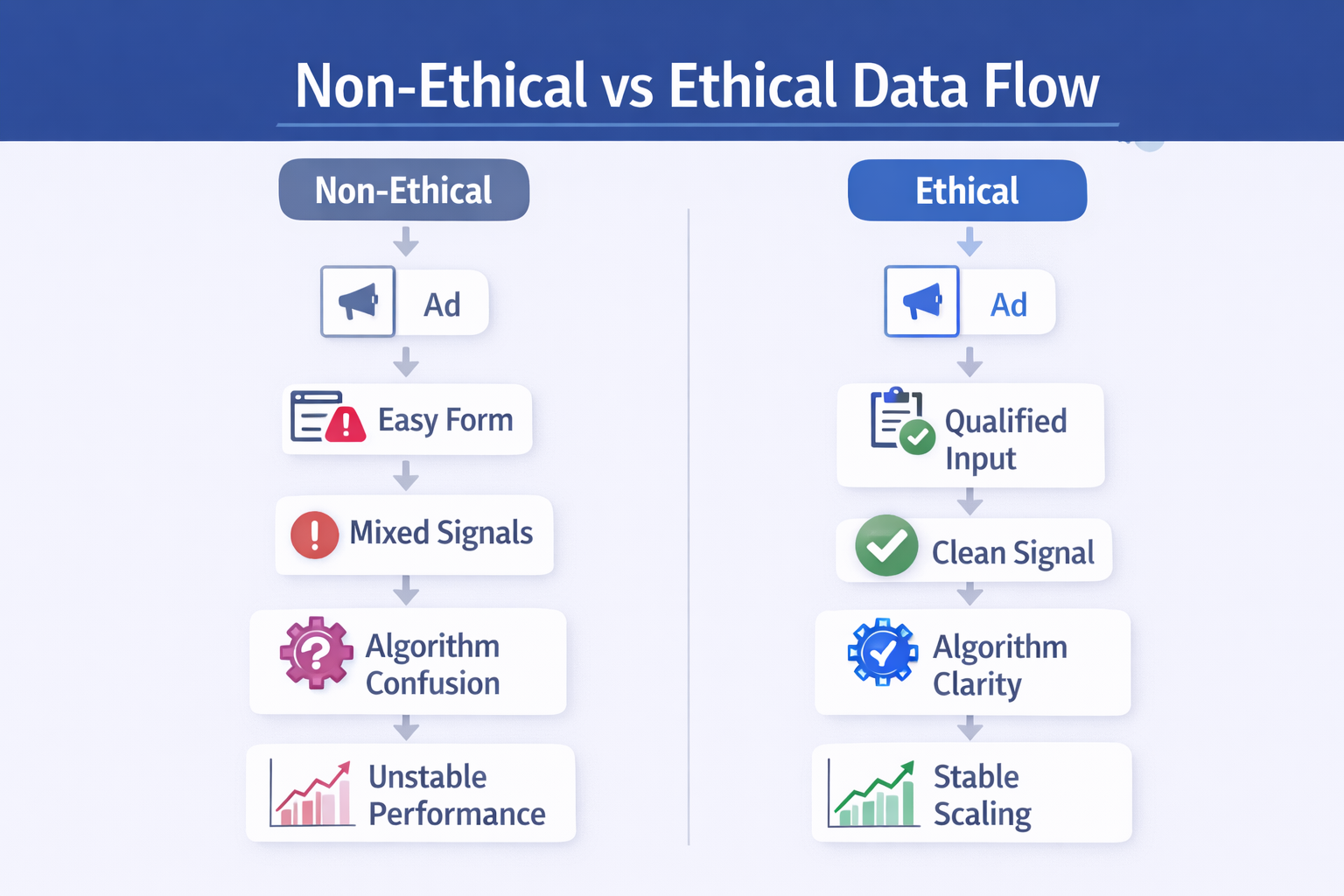

What “Ethical” Actually Means in Campaign Terms

In practice, ethical data collection is about signal accuracy.

It means the action you track should represent real intent, not just an easy interaction.

That often requires pushing against short-term performance pressure.

Instead of removing friction, you might add it. Instead of maximizing volume, you narrow the entry point.

A few adjustments that tend to change outcomes quickly:

- Match events to real outcomes: If your sales team qualifies only a portion of leads, your tracking should reflect that. Otherwise, the algorithm learns from noise.
- Slow the user down slightly: Adding qualifying questions filters out low-intent users. Volume drops, but downstream metrics improve.
- Make the value exchange explicit: When users understand what happens after submission, response quality improves.
- Bring sales data back into analysis: Campaigns that generate accepted leads often look very different from those that only generate form fills.

This connects closely with how advertisers use stronger data inputs in Why First-Party Data Is the Key to Future-Proofing Your Facebook Ads.

The Tradeoff That Feels Wrong at First

When you clean up data collection, performance often looks worse before it improves.

CPL goes up. Conversion rate drops. Volume decreases.

This is where many teams revert to the old setup.

But if you track what happens after the lead, the picture changes.

You might go from 100 leads to 60, while qualified leads increase from 20 to 35. The platform shows a decline. The business sees improvement.
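The arithmetic behind that example is worth spelling out. The sketch below uses the lead counts from the paragraph above; the spend figure of 1,000 is an assumption added purely for illustration (the same budget in both periods), and the function names are hypothetical.

```python
def cost_per_lead(spend: float, leads: int) -> float:
    """What the platform reports: spend divided by all form fills."""
    return spend / leads


def qualified_rate(leads: int, qualified: int) -> float:
    """Share of leads that sales actually accepts."""
    return qualified / leads


def cost_per_qualified_lead(spend: float, qualified: int) -> float:
    """What the business pays for each lead sales can work with."""
    return spend / qualified


SPEND = 1000.0  # assumed, identical before and after the cleanup

before = {"leads": 100, "qualified": 20}
after = {"leads": 60, "qualified": 35}

for label, period in (("before", before), ("after", after)):
    cpl = cost_per_lead(SPEND, period["leads"])
    rate = qualified_rate(period["leads"], period["qualified"])
    cpql = cost_per_qualified_lead(SPEND, period["qualified"])
    print(f"{label}: CPL={cpl:.2f}  qualified rate={rate:.0%}  "
          f"cost per qualified lead={cpql:.2f}")
```

Under that assumed budget, CPL rises from 10.00 to about 16.67, while the qualified rate moves from 20% to about 58% and the cost per qualified lead falls from 50.00 to about 28.57.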

Over time, campaigns built on cleaner signals behave differently:

- delivery stabilizes instead of fluctuating daily;
- scaling doesn’t immediately break performance;
- optimization becomes more predictable.

The system simply has better data to work with.

A Quick Way to Check If Data Is the Problem

You don’t need a full audit to spot issues.

Look at one campaign and compare two things:

- platform-reported performance (CPL, CVR);
- sales outcomes (acceptance rate, pipeline progression).

If those move in opposite directions, your signal is misaligned.
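If you export daily numbers, the comparison can be reduced to two trend lines. The following is a rough sketch under stated assumptions: the inputs are plain lists of daily values for one campaign, the trend is a simple least-squares slope, and the function names and sample data are made up for illustration. Note that a falling CPL counts as the platform "improving," so the warning case is both series sloping downward.

```python
from statistics import mean
from typing import Sequence


def slope(values: Sequence[float]) -> float:
    """Least-squares slope of values against their day index (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points")
    x_mean = (n - 1) / 2
    y_mean = mean(values)
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def platform_up_sales_down(cpl_by_day: Sequence[float],
                           acceptance_by_day: Sequence[float]) -> bool:
    """True when CPL is trending down while sales acceptance also trends down."""
    return slope(cpl_by_day) < 0 and slope(acceptance_by_day) < 0


# Illustrative data only: seven days of CPL and sales acceptance rate.
cpl = [12.0, 11.5, 11.0, 10.2, 9.8, 9.1, 8.7]
acceptance = [0.30, 0.29, 0.27, 0.24, 0.22, 0.20, 0.18]
print(platform_up_sales_down(cpl, acceptance))
```

A slope over a week of data is noisy, so treat a positive result as a prompt to look closer rather than as proof.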

Another simple check: look at how long it takes a user to convert.

If most submissions happen within a few seconds, you’re likely capturing low-intent actions.
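The same check can be run on exported timing data. This sketch assumes you can measure, per lead, the seconds between the form opening and the submission. The 5-second threshold and the "more than half" cutoff are assumptions that stand in for "a few seconds" and "most"; the function names and sample values are hypothetical.

```python
from typing import Sequence


def fast_submission_share(seconds_to_submit: Sequence[float],
                          threshold_seconds: float = 5.0) -> float:
    """Fraction of submissions completed within the threshold."""
    if not seconds_to_submit:
        return 0.0
    fast = sum(1 for s in seconds_to_submit if s <= threshold_seconds)
    return fast / len(seconds_to_submit)


def likely_low_intent(seconds_to_submit: Sequence[float],
                      threshold_seconds: float = 5.0,
                      majority: float = 0.5) -> bool:
    """True when more than `majority` of submissions are faster than the threshold."""
    return fast_submission_share(seconds_to_submit, threshold_seconds) > majority


# Illustrative data only: seconds from form open to submit for eight leads.
times = [2, 3, 4, 3, 40, 2, 55, 3]
print(fast_submission_share(times))  # share of submissions at or under 5 seconds
print(likely_low_intent(times))
```

Pre-filled forms can legitimately be submitted in seconds, so the threshold needs to be set with the form type in mind.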

Final Takeaway

Ad platforms don’t optimize for truth. They optimize for signals.

If the signal is easy to generate but disconnected from real outcomes, the system will scale it anyway — very efficiently.

Ethical data collection is simply a more disciplined way to control what the algorithm learns from.

Once that improves, many performance issues stop being “optimization problems” — and start resolving on their own.