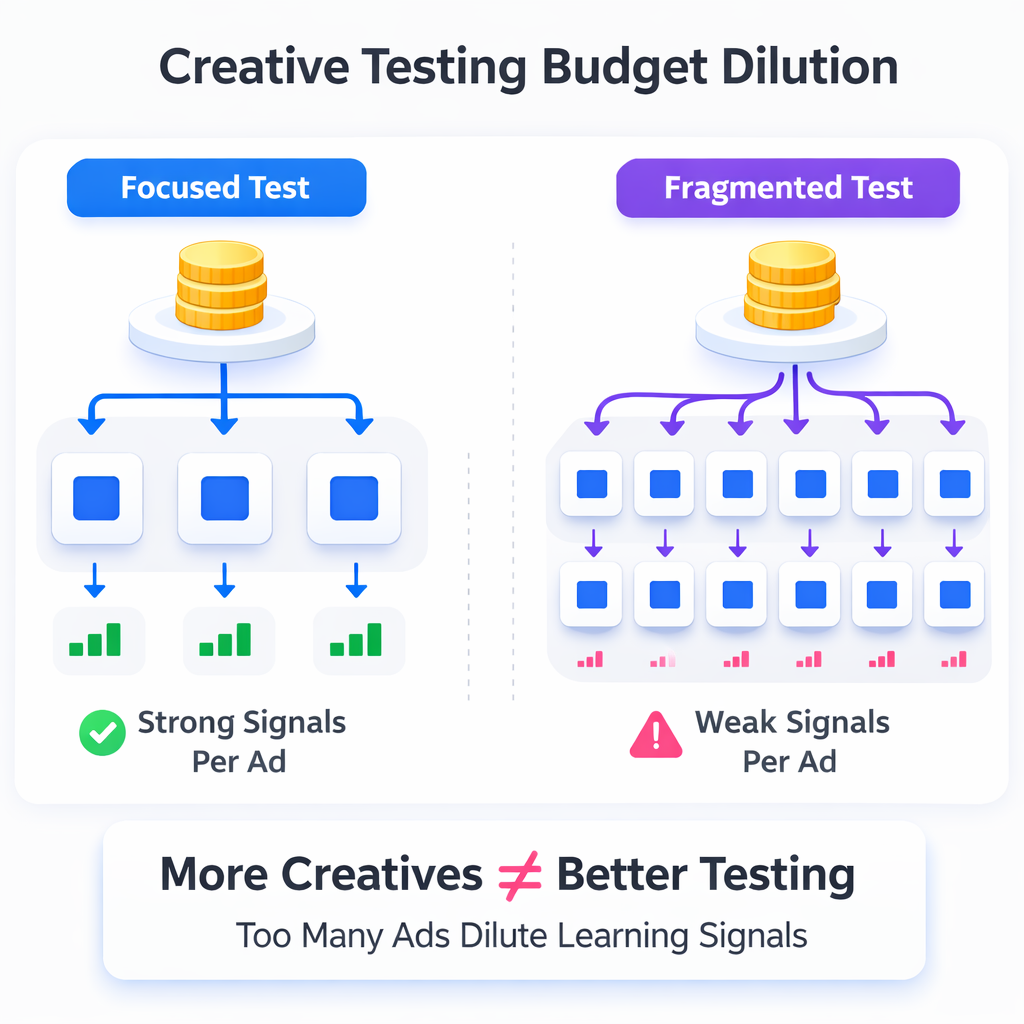

Creative testing often fails for a simple reason: the budget is fragmented across too many variations. Instead of producing clear signals, the campaign generates weak, inconclusive data.

If you test ten creatives with a limited budget, each ad receives too few impressions for the algorithm to properly evaluate performance. The result is unstable delivery, misleading metrics, and premature conclusions about what actually works.

This article explains how to structure creative tests so Meta’s delivery system can generate meaningful learning signals while still allowing you to compare different creative concepts.

Why Most Creative Tests Produce Inconclusive Results

A common testing mistake appears when advertisers attempt to test many variations at once. The logic seems sound: more creatives should produce faster learning. In practice, the opposite happens.

When the budget is divided across too many ads, several operational issues appear inside Ads Manager:

- Insufficient impression volume per creative. Meta’s delivery system needs repeated exposure to determine which users respond to a specific ad. If each creative receives only a few thousand impressions, the model cannot reliably estimate conversion probability.

- Delivery skew toward early signals. The algorithm quickly reallocates spend toward creatives that generate the first engagement events. Early clicks or reactions can cause a creative to dominate spend before meaningful conversion data appears.

- Delayed learning phase completion. Campaigns often remain in the learning phase longer when events are distributed across many ad variations. This leads to unstable CPM and inconsistent delivery patterns.

Many advertisers notice this problem when running large tests without a clear structure. If you want a deeper look at common setup errors, see Top Facebook Ad Mistakes That Drain Your Budget.

Testing structure — not just creative quality — determines whether the results are trustworthy.

The Budget Dilution Problem in Creative Testing

The core constraint in any test is event density. Meta’s optimization models learn from clusters of conversion events that occur within a specific audience segment.

If conversions are distributed across too many creatives, the model receives fragmented signals. For example, imagine a campaign generating 40 purchases per week:

- Testing 2 creatives means each creative can receive roughly 20 purchase signals per week.

- Testing 8 creatives spreads those same signals across many ads, leaving each with only about 5 purchases per week.

At low event counts, the algorithm cannot determine whether performance differences are real or random. You may pause a creative that could have scaled if it had received sufficient traffic.
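The arithmetic behind this example can be sketched in a few lines. The snippet below is only an illustration: it assumes conversions are split evenly across creatives (real delivery is rarely even) and uses a Poisson-style approximation, 1/sqrt(n), as a rough stand-in for how noisy a count of n events is. The function names are hypothetical and do not correspond to anything in Meta’s tooling.

```python
import math


def conversions_per_creative(weekly_conversions: float, n_creatives: int) -> float:
    """Average weekly conversions per creative, assuming an even split."""
    if n_creatives < 1:
        raise ValueError("n_creatives must be at least 1")
    return weekly_conversions / n_creatives


def relative_noise(event_count: float) -> float:
    """Rough relative standard deviation of a count (Poisson assumption)."""
    if event_count <= 0:
        return float("inf")
    return 1 / math.sqrt(event_count)


# The 40-purchases-per-week campaign from the example above.
for n in (2, 4, 8):
    per_ad = conversions_per_creative(40, n)
    noise = relative_noise(per_ad)
    print(f"{n} creatives: ~{per_ad:.0f} purchases each, noise ~{noise:.0%}")
```

Under these assumptions, 20 purchases per creative carries roughly 22% relative noise, while 5 purchases carries roughly 45%. At that level, a creative that looks far worse than another may simply be unlucky.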

This is why structured testing matters just as much as creative quality. Many marketers underestimate how strongly campaign structure affects results, which is discussed in The Role of Campaign Structure in Predictable Ad Performance.

A Practical Structure for Creative Testing

A reliable testing structure limits the number of variables per test cycle. Instead of testing many small variations, focus on comparing distinct creative concepts.

A typical structure might look like this:

- Test 3–4 clearly different creative angles. Each creative should represent a different message or positioning. For example, one ad might emphasize product benefits, another social proof, and another urgency. Testing small visual tweaks at this stage rarely produces meaningful insight.

- Allocate enough budget for each ad to gather events. A simple rule used by many performance teams is to ensure each creative can realistically generate multiple conversion signals per day. If your campaign budget cannot support that, reduce the number of creatives.

- Run the test long enough to stabilize delivery. Meta’s algorithm reallocates spend dynamically during the first days of delivery. Early results can be misleading because the system is still exploring audience clusters.

- Evaluate results using conversion metrics first. CTR and CPC can help explain behavior, but the main evaluation signal should remain conversion rate or cost per purchase.

This structure keeps the test focused while allowing the algorithm to gather meaningful behavioral signals.
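The budget rule above can be turned into a quick sanity check before launch. The sketch below is a rough planning aid, not a Meta formula: it assumes you already have a realistic cost-per-purchase estimate, reads "multiple conversion signals per day" as at least 2 (an assumption you should adjust), and treats spend as evenly divided across creatives. The function and parameter names are hypothetical.

```python
import math


def max_supported_creatives(
    daily_budget: float,
    cost_per_purchase: float,
    min_daily_conversions: float = 2,  # assumed reading of "multiple per day"
) -> int:
    """Estimate how many creatives a daily budget can feed with enough events."""
    if daily_budget < 0:
        raise ValueError("daily_budget must not be negative")
    if cost_per_purchase <= 0 or min_daily_conversions <= 0:
        raise ValueError("cost_per_purchase and min_daily_conversions must be positive")
    # Conversions the whole campaign can expect per day at this cost.
    expected_daily_conversions = daily_budget / cost_per_purchase
    # Each creative needs its own share of those conversions.
    return math.floor(expected_daily_conversions / min_daily_conversions)


# Example: a 400/day budget at an estimated 25 per purchase.
print(max_supported_creatives(400, 25))
```

If the result is below the number of creatives you planned to launch, the rule in the list applies: cut the creative count rather than stretching the budget thinner.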

Separate Concept Testing From Iteration

Another mistake happens when advertisers mix concept testing and creative iteration in the same campaign.

Concept testing answers a strategic question — Which message resonates with the audience?

Iteration answers a tactical question — Which version of the winning concept performs best?

Combining both stages dilutes signals.

A more reliable process looks like this:

Stage 1 — Concept validation

- Test 3–4 distinct creative angles.

- Focus on major differences in message, hook, or offer.

- Identify the concept that generates the strongest conversion behavior.

Stage 2 — Iteration and scaling

Once a concept proves effective, create multiple variations within that idea:

- different opening hooks

- alternate visuals

- modified calls to action

Because the underlying message is already validated, these iterations refine performance rather than searching blindly for a winning idea.

If you want to dive deeper into structured experimentation frameworks, review How to Run Split Tests That Reveal Real Insights.

Watch These Signals During Creative Tests

Creative tests often fail because advertisers focus only on final performance metrics. Observing delivery signals inside Ads Manager can reveal problems earlier.

Several indicators suggest the test structure may be too fragmented:

- Rapid spend concentration in one ad. If one creative receives most of the budget within the first day, the algorithm likely reacted to early engagement signals rather than conversion data.

- Large impression gaps between ads. When some creatives receive three or four times more impressions than others, the comparison becomes unreliable.

- Learning phase resets. Frequent resets indicate the system keeps adjusting delivery due to insufficient or inconsistent event signals.

When these patterns appear repeatedly, the problem often lies in the testing structure rather than the creatives themselves. In many cases, the underlying issue is exactly what the article "Why Testing Too Many Ads at Once Hurts Your Campaign Results" explains.
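The first two indicators are easy to check against per-ad numbers copied out of a report. The sketch below is a minimal illustration, not an Ads Manager feature: the dictionary keys (`name`, `spend`, `impressions`) are hypothetical, and the thresholds simply mirror the wording above, with "most of the budget" read as more than 50% and a "large gap" as 3x or more. Learning phase resets are not covered, since they depend on status information rather than on these two metrics.

```python
def fragmentation_flags(ads, spend_share_limit=0.5, impression_ratio_limit=3.0):
    """Return warnings for spend concentration and impression gaps.

    `ads` is a list of dicts with hypothetical keys: name, spend, impressions.
    """
    flags = []

    # Indicator 1: one ad takes most of the spend.
    total_spend = sum(ad["spend"] for ad in ads)
    if total_spend > 0:
        top = max(ads, key=lambda ad: ad["spend"])
        share = top["spend"] / total_spend
        if share > spend_share_limit:
            flags.append(f"spend concentrated in {top['name']} ({share:.0%})")

    # Indicator 2: the most-shown ad has several times the impressions
    # of the least-shown ad.
    impressions = [ad["impressions"] for ad in ads]
    if impressions:
        low, high = min(impressions), max(impressions)
        if (low == 0 and high > 0) or (low > 0 and high / low >= impression_ratio_limit):
            flags.append("large impression gap between ads")

    return flags


# Made-up numbers for illustration only.
report = [
    {"name": "Benefits", "spend": 80, "impressions": 9000},
    {"name": "Social proof", "spend": 10, "impressions": 2000},
    {"name": "Urgency", "spend": 10, "impressions": 2500},
]
for warning in fragmentation_flags(report):
    print(warning)
```

With these sample figures both warnings fire. If that happens on day one of a real test, it is usually a sign to reduce the number of creatives before reading anything into the results.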

When It Makes Sense to Test More Creatives

There are cases where broader testing becomes possible. Larger advertisers with high event volume can support more creative variations because the algorithm receives abundant data.

Testing more creatives becomes viable when:

- The campaign generates high daily conversion volume, allowing each creative to accumulate meaningful signals.

- Budgets support meaningful traffic distribution, ensuring each ad still receives substantial impressions.

- Creative tests are separated into multiple ad sets, giving the algorithm clearer optimization paths.

Even in these scenarios, successful teams rarely launch dozens of creatives simultaneously. Testing still happens in controlled phases.

The Real Goal of Creative Testing

Creative testing is not about identifying a single “winning ad.” The real objective is to uncover patterns about what drives user response.

A well-structured testing process reveals:

- which hooks attract attention

- which value propositions trigger clicks

- which narratives lead to purchases

When tests are structured correctly, each cycle generates insights that guide the next round of creative production.

Practical Takeaway

If your creative tests feel inconsistent or inconclusive, the issue may not be the creatives themselves. The testing structure may simply be spreading the budget too thin.

Limit each test to a small number of distinct concepts, ensure each ad receives enough impressions and conversions, and separate concept discovery from iteration.

This approach gives Meta’s delivery system the signals it needs to identify real performance differences — and gives you creative insights that actually inform the next campaign.