Meta is simplifying how campaigns are set up. Fewer ad sets, broader targeting, more automation.

On the surface, that looks like a shift toward easier media buying. In reality, it shifts the burden somewhere else.

Performance now depends far more on how much meaningful creative variation you can feed into the system.

Most advertisers haven’t adjusted to that yet.

Campaigns Stall for a Very Specific Reason

A campaign performs well early, then starts to level off. You increase budget and results get worse instead of better.

When you look inside the ad set, there’s usually a clear pattern. One or two creatives are taking most of the spend, while the rest barely deliver. Frequency rises, but conversions don’t follow.
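One way to put a number on that pattern is to compute how much of the ad set's spend goes to the top few ads. The sketch below is only an illustration: the ad names and spend figures are made up, and it assumes you have already exported per-ad spend from your reporting into a plain dictionary.

```python
def spend_shares(spend_by_ad):
    """Return (ad, share of total spend) pairs, largest share first."""
    total = sum(spend_by_ad.values())
    if total <= 0:
        return []
    shares = [(ad, amount / total) for ad, amount in spend_by_ad.items()]
    return sorted(shares, key=lambda pair: pair[1], reverse=True)


def top_n_share(spend_by_ad, n=2):
    """Fraction of ad-set spend taken by the n highest-spending ads."""
    return sum(share for _, share in spend_shares(spend_by_ad)[:n])


# Hypothetical export: ad name -> spend over the reporting window.
example_spend = {
    "ad_a": 600.0,
    "ad_b": 250.0,
    "ad_c": 90.0,
    "ad_d": 40.0,
    "ad_e": 20.0,
}

print(f"Top 2 ads take {top_n_share(example_spend):.0%} of spend")
```

There is no official threshold here. The point is to track the number over time: if the top-two share keeps climbing while results flatten, delivery is concentrating on a narrow set of messages.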

This is the system hitting a limit.

The system found a segment of users who respond to a specific message. Once that group is saturated, it needs a different angle to continue expanding. If that angle doesn’t exist in your creative set, delivery has nowhere to go.

This is the same dynamic behind ad fatigue and performance decline over time, except now it happens faster because campaigns reach saturation sooner.
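A rough check for this kind of saturation is to compare two reporting periods: frequency (impressions divided by reach) going up while conversions per impression go down. The sketch below assumes hypothetical weekly totals. `PeriodStats` and `looks_saturated` are illustrative names, not part of any Meta tool.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    impressions: int
    reach: int
    conversions: int

    @property
    def frequency(self) -> float:
        # Average number of times each reached person saw the ad.
        return self.impressions / self.reach if self.reach else 0.0

    @property
    def conversion_rate(self) -> float:
        # Conversions per impression.
        return self.conversions / self.impressions if self.impressions else 0.0


def looks_saturated(earlier: PeriodStats, later: PeriodStats) -> bool:
    """True when frequency rose while conversions per impression fell."""
    return (
        later.frequency > earlier.frequency
        and later.conversion_rate < earlier.conversion_rate
    )


# Hypothetical numbers for two consecutive weeks of the same ad.
week_1 = PeriodStats(impressions=10_000, reach=8_000, conversions=200)
week_2 = PeriodStats(impressions=18_000, reach=9_000, conversions=270)

print(week_1.frequency, week_2.frequency)
print(looks_saturated(week_1, week_2))
```

This is a simple two-point comparison, so it is sensitive to noise. On small volumes it is safer to look at several periods before concluding anything.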

What Actually Changed in How Meta Delivers Ads

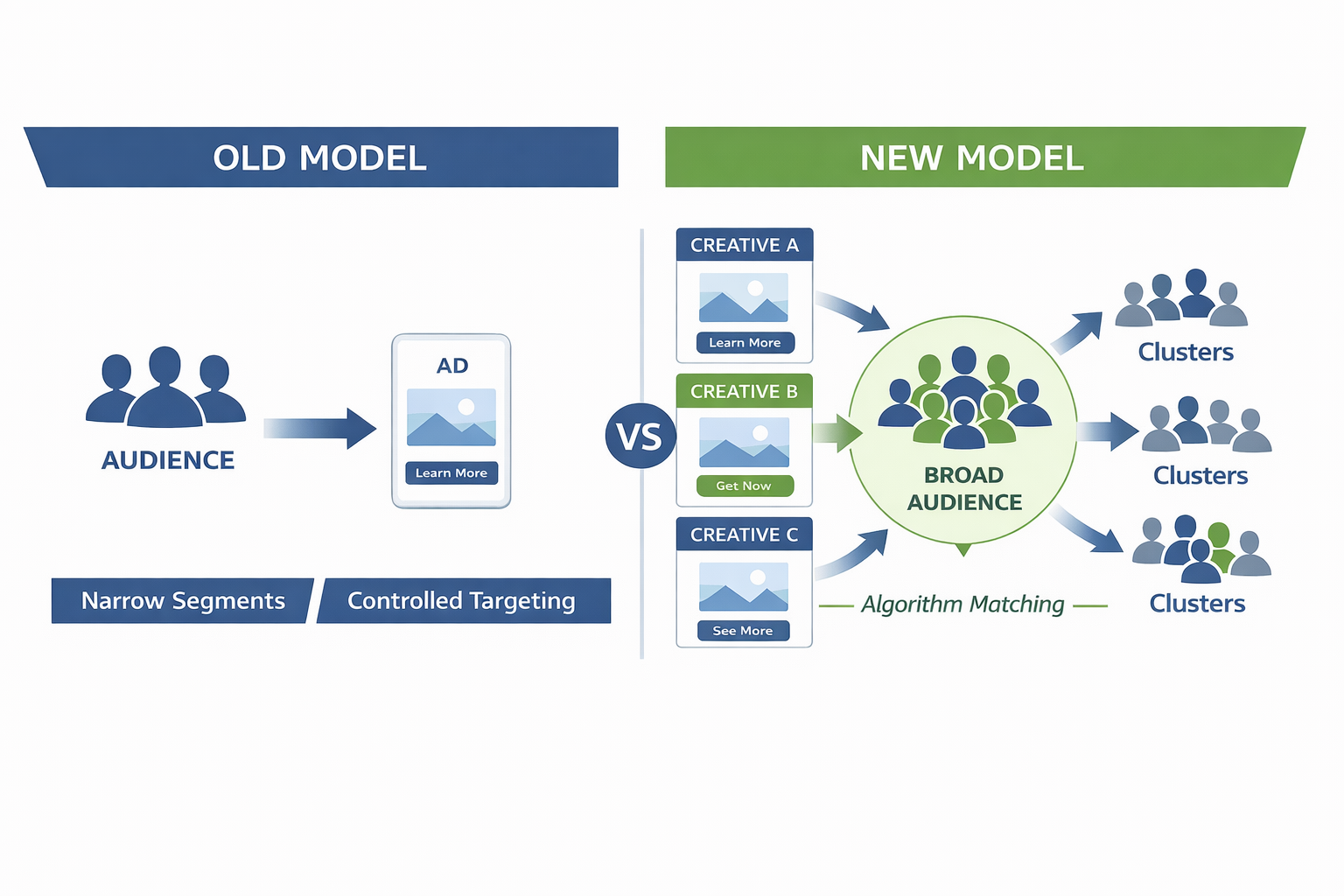

The shift isn’t just more automation — it’s how ads are matched to users.

With systems like Andromeda, Meta doesn’t rely heavily on advertiser-defined segments anymore. Instead, it distributes creatives across a broad audience and looks for patterns in how different users respond.

You can verify this in Ads Manager:

- changing audiences often has minimal impact once a campaign has data,
- introducing a new creative can immediately change delivery distribution,
- performance differences between ads inside the same ad set are often larger than differences between audiences.

That’s because each creative is functioning as a separate signal. The system uses those signals to identify which types of users to prioritize in auctions.

This aligns with the broader shift explained in targeting updates and how to adapt — control is decreasing because the system is doing more of the matching.

Why “More Creatives” Usually Doesn’t Work

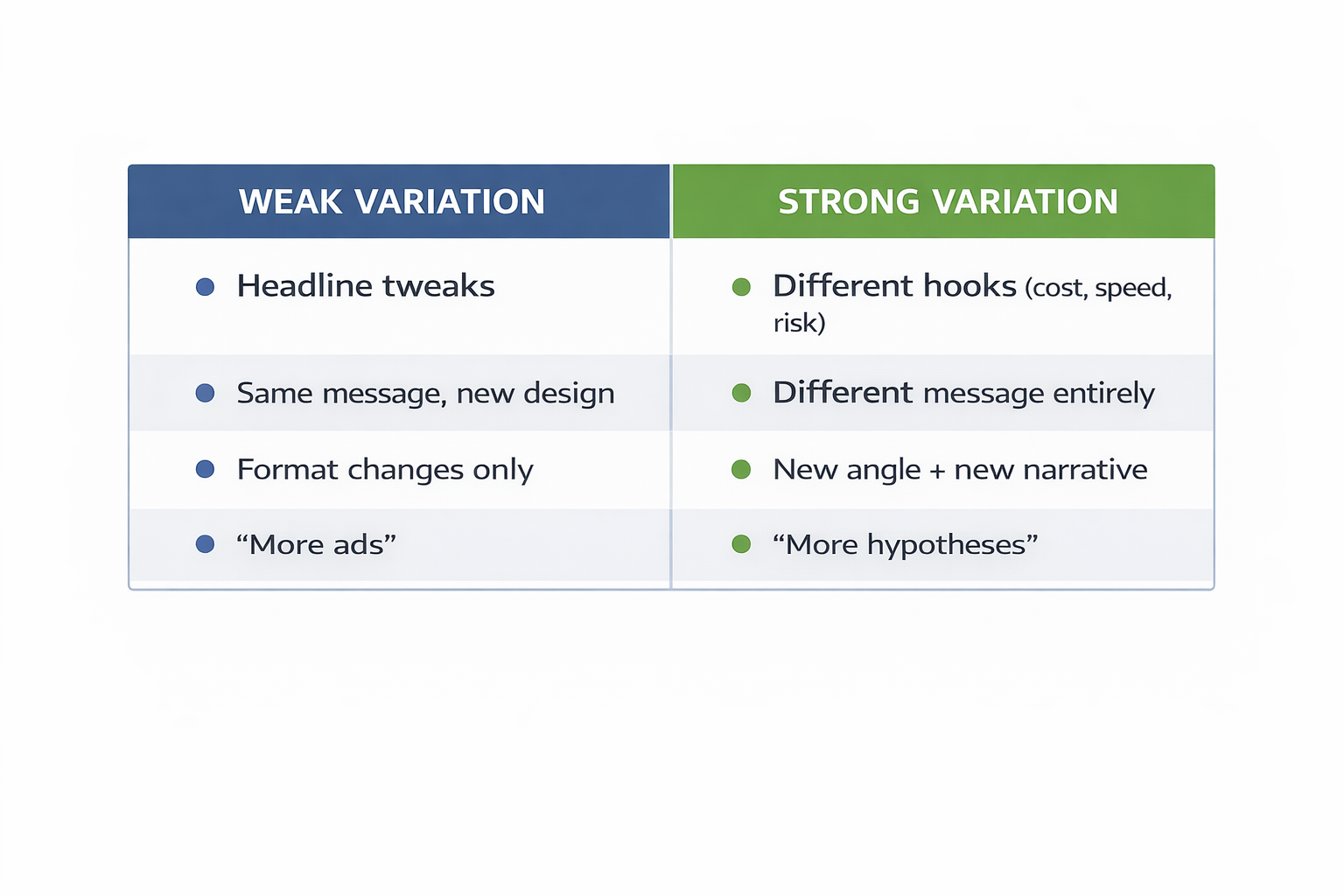

A lot of teams respond to this shift by increasing output, but not in a way the system actually benefits from.

They produce variations like:

- minor headline rewrites that keep the same core promise,
- visual tweaks that don’t change the underlying message,
- different formats built around the same idea.

From the system’s perspective, these are highly correlated inputs. They don’t open new paths for delivery.

What tends to work better is variation that changes the reason someone would respond. For example, an ad focused on cost reduction will pull a different user group than one focused on speed or implementation risk.
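A quick audit can make the difference between "many creatives" and "many angles" visible. The sketch below assumes each creative has been tagged by hand with the core reason it gives someone to respond. The creative names and the `angle` labels are hypothetical, and the tagging itself is a judgment call rather than something the platform provides.

```python
from collections import Counter

# Hypothetical creative inventory, tagged manually by core message.
creatives = [
    {"name": "static_v1", "angle": "cost reduction"},
    {"name": "static_v2", "angle": "cost reduction"},
    {"name": "video_v1", "angle": "cost reduction"},
    {"name": "carousel_v1", "angle": "speed"},
    {"name": "video_v2", "angle": "implementation risk"},
]


def angle_coverage(items):
    """Count how many creatives share each angle."""
    return Counter(item["angle"] for item in items)


coverage = angle_coverage(creatives)
print(f"{len(creatives)} creatives, {len(coverage)} distinct angles")
for angle, count in coverage.most_common():
    print(f"  {angle}: {count}")
```

In this made-up inventory, five creatives collapse to three angles, and three of the five carry the same promise. Producing a sixth cost-reduction variant would add volume without adding a new reason to respond.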

This is why creative testing fails in many accounts — not because testing is wrong, but because the inputs are too similar. A deeper breakdown of this issue is covered in why your creative testing strategy isn’t working.

Without real variation, delivery collapses onto a small number of ads, and scaling becomes unstable.

The Tradeoff Behind Meta’s Push for Automation

The reduction in manual controls is not just a product decision. It reflects how the system performs best.

When advertisers heavily segment campaigns or apply strict targeting constraints, they limit the number of interactions the system can observe. That slows down learning and reduces the effectiveness of optimization.

Broad targeting does the opposite. It increases the pool of available users and allows the system to test more combinations of user behavior and creative response.

But there’s a dependency here.

Broad targeting without sufficient creative variation leads to faster fatigue. The same message gets shown to a larger audience, but it doesn’t resonate beyond the initial segment that responded.

That’s where most campaigns break.

The Real Bottleneck: Creative Throughput

The practical issue isn’t that advertisers don’t understand this shift. It’s that most teams aren’t set up to support it.

Creative production is still handled in cycles:

- ideas are developed,
- assets are produced and reviewed,
- campaigns launch,
- results are analyzed before the next round begins.

That process worked when campaigns were stable. It doesn’t work when the system is constantly reallocating spend.

The gap shows up as a timing problem. By the time new creatives are ready, the previous ones have already saturated their effective audience.
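The timing problem can be reduced to simple arithmetic. The sketch below assumes two numbers you would have to estimate yourself: how long one production cycle takes, and how many days a batch of creatives typically lasts before saturating. Both function names and the example figures are hypothetical.

```python
import math


def uncovered_days(production_cycle_days, days_to_saturation):
    """Days spent with saturated creatives if cycles run back to back."""
    return max(0, production_cycle_days - days_to_saturation)


def staggered_batches_needed(production_cycle_days, days_to_saturation):
    """Batches that must be in production at once so a fresh batch
    is ready each time the live one saturates."""
    if production_cycle_days <= 0 or days_to_saturation <= 0:
        raise ValueError("both durations must be positive")
    return math.ceil(production_cycle_days / days_to_saturation)


# Hypothetical estimates: a 21-day production cycle, 8 days to saturation.
print(uncovered_days(21, 8))
print(staggered_batches_needed(21, 8))
```

With these example figures, a single sequential cycle leaves 13 days without fresh creative, and closing the gap would take three overlapping batches. The model ignores review delays and uneven fatigue, so treat it as a planning estimate only.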

This is also why many advertisers struggle with scaling, even when metrics look good early. The limitation isn’t budget; it’s a lack of new signals.

Why AI Hasn’t Closed the Gap

Meta’s push into AI-generated creative is meant to address this constraint.

In practice, adoption is uneven.

Teams are comfortable using automation for budget allocation or audience expansion, where results are easier to validate. Creative is different.

There are a few consistent concerns:

- outputs can drift from brand positioning,
- legal questions around generated assets remain unresolved,
- most tools generate variations better than they generate new ideas.

So AI helps with speed, but not with direction.

That’s why many teams still rely on human input to define messaging — even if production becomes partially automated.

What Changes When Creative Input Increases

When campaigns include a wider range of distinct creative angles, the system behaves differently.

Instead of concentrating spend on a single ad, delivery spreads across multiple messages that resonate with different users. Frequency grows more slowly, and scaling becomes more stable.
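To compare how spread out delivery is before and after adding new angles, one option is an "effective number of ads": the inverse of the Herfindahl concentration index, computed over spend shares. This is a general concentration measure borrowed from economics, not a metric Meta reports, and the spend figures below are invented for illustration.

```python
def effective_ad_count(spend_by_ad):
    """Inverse Herfindahl index over spend shares.

    Equals the number of ads when spend is split evenly, and approaches 1
    as spend concentrates on a single ad.
    """
    total = sum(spend_by_ad.values())
    if total <= 0:
        return 0.0
    return 1.0 / sum((amount / total) ** 2 for amount in spend_by_ad.values())


# Hypothetical ad sets with five ads each.
concentrated = {"ad_a": 600, "ad_b": 250, "ad_c": 90, "ad_d": 40, "ad_e": 20}
spread = {"ad_a": 200, "ad_b": 200, "ad_c": 200, "ad_d": 200, "ad_e": 200}

print(round(effective_ad_count(concentrated), 2))
print(round(effective_ad_count(spread), 2))
```

Both example ad sets contain five ads, but the concentrated one behaves as if it had a little over two. A rising effective count after launching new angles suggests the system is finding distinct audiences for them.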

You also start to see patterns that are easier to act on:

- certain angles consistently attract specific types of users,
- performance doesn’t collapse when one creative fatigues,
- new creatives extend performance instead of replacing it.

This is the same principle behind scaling Facebook campaigns without killing performance — growth comes from expanding inputs, not forcing spend.

Final Takeaway

Meta’s direction toward automation is real, but it doesn’t remove the need for input — it increases it.

The system can handle targeting, bidding, and distribution with minimal guidance. It still depends on advertisers to supply enough distinct creative signals to explore the market effectively.

Right now, that’s where most accounts fall short.

Campaigns don’t stall because the algorithm stops working. They stall because the system runs out of new signals to act on.