Most Meta campaigns don’t fail because they’re ignored. They fail because they’re adjusted too often.

If you’ve ever seen performance drop right after “improving” a campaign, you’ve already experienced this. The issue isn’t optimization itself — it’s the frequency and timing of it.

This is where many advertisers misread how Meta’s system actually works.

Why Constant Optimization Feels Right (But Isn’t)

When performance fluctuates, the instinct is to intervene. CPM goes up, CPL increases, conversion rate dips — so changes follow.

In practice, this creates a loop:

- Performance shifts slightly, and you edit the campaign.
- The system resets learning signals, which introduces instability.
- That instability triggers another round of edits.
- The cycle repeats, often with diminishing returns.

From the outside, it looks like active management. Inside the system, it looks like continuous disruption.

Meta’s delivery algorithm depends on pattern recognition across auctions. Every time you change inputs, you reduce its ability to build those patterns in a stable way.

What Actually Happens After Each Edit

Not all changes are equal, but most meaningful edits trigger recalibration.

Typical examples include:

- Budget adjustments beyond small increments. Increasing the budget by 20–30% in one step forces the system to re-enter auctions at a different scale.
- Creative swaps or frequent refreshes. New ads reset engagement history and need to rebuild performance signals.
- Audience or targeting changes. The algorithm must relearn which user clusters convert.
- Bid or optimization event changes. This resets how the system evaluates value in auctions.

After these edits, you’ll often observe:

- Spend volatility across hours.
- Higher CPM during re-entry.
- Lower short-term efficiency.
- Learning phase resets.

This is also why many campaigns struggle to stabilize. For a deeper look at that dynamic, see “How to Finish the Facebook Learning Phase Quickly.”
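The budget example above can be turned into a simple pre-edit check. This is a minimal sketch, not a Meta API call: the function names are hypothetical, and the 20% default threshold is taken from the range mentioned in this article rather than from any documented platform limit.

```python
def budget_change_ratio(old_budget: float, new_budget: float) -> float:
    """Return the relative change between two budgets (0.25 means +25%)."""
    if old_budget <= 0:
        raise ValueError("old_budget must be positive")
    return (new_budget - old_budget) / old_budget


def is_disruptive_budget_edit(
    old_budget: float, new_budget: float, threshold: float = 0.20
) -> bool:
    """Flag edits whose relative change meets or exceeds the threshold.

    The 20% default is an assumption based on this article's example,
    not an official Meta rule. Decreases count the same as increases.
    """
    return abs(budget_change_ratio(old_budget, new_budget)) >= threshold


if __name__ == "__main__":
    print(is_disruptive_budget_edit(100.0, 110.0))  # +10% change
    print(is_disruptive_budget_edit(100.0, 130.0))  # +30% change
```

A check like this is only a prompt to pause before a large change; it says nothing about whether the change is justified.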

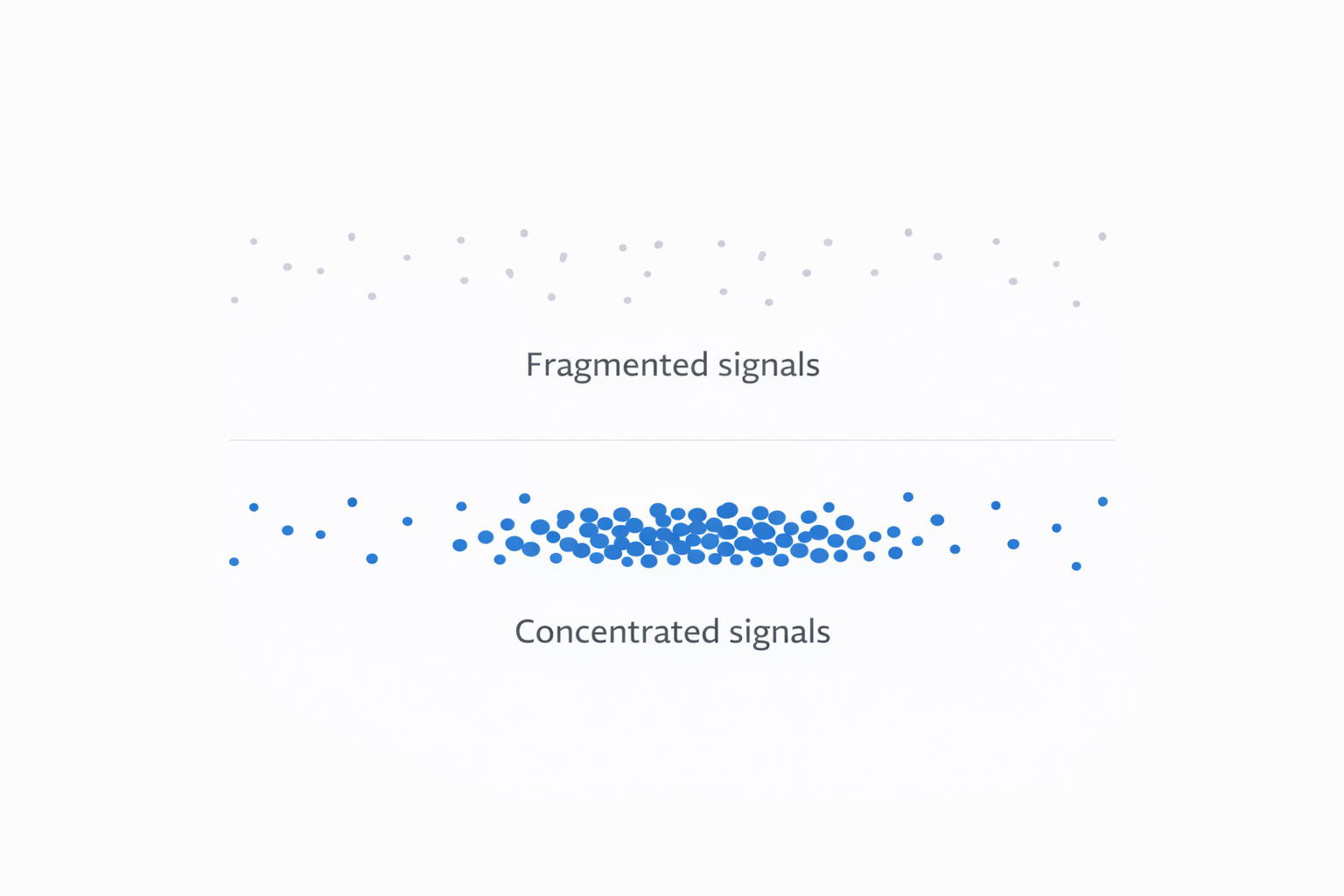

The Hidden Cost: Signal Fragmentation

The real damage from constant optimization isn’t visible in a single metric.

Instead of building strong datasets, you spread signals across too many variations. That leads to:

- Weak optimization signals. The system lacks enough consistent data to prioritize high-quality users.
- False negatives. Good creatives or audiences get paused too early.
- Short-term bias. Decisions rely on CTR or CPC instead of actual outcomes.

If you’re evaluating campaigns this way, you’re likely missing deeper performance drivers, as explained in “How to Analyze Facebook Ad Performance Beyond CTR and CPC.”
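The fragmentation effect is easy to see with simple arithmetic. The sketch below assumes conversions split evenly across variations and uses 50 optimization events per week as the target for each one. That figure is the commonly cited learning-phase guideline and is treated here as an assumption; the function names are hypothetical.

```python
def events_per_variant(weekly_events: int, variants: int) -> float:
    """Average weekly optimization events per variant, assuming an even split."""
    if variants < 1:
        raise ValueError("variants must be at least 1")
    return weekly_events / variants


def max_variants_with_enough_signal(
    weekly_events: int, min_events_per_variant: int = 50
) -> int:
    """How many variants the account can feed at the assumed weekly minimum."""
    if min_events_per_variant < 1:
        raise ValueError("min_events_per_variant must be at least 1")
    return weekly_events // min_events_per_variant


if __name__ == "__main__":
    weekly_conversions = 120  # illustrative number, not real data
    for n in (1, 2, 4):
        print(n, events_per_variant(weekly_conversions, n))
    print(max_variants_with_enough_signal(weekly_conversions))
```

With 120 weekly conversions, four variations average 30 events each, which falls below the assumed minimum, while two variations stay above it.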

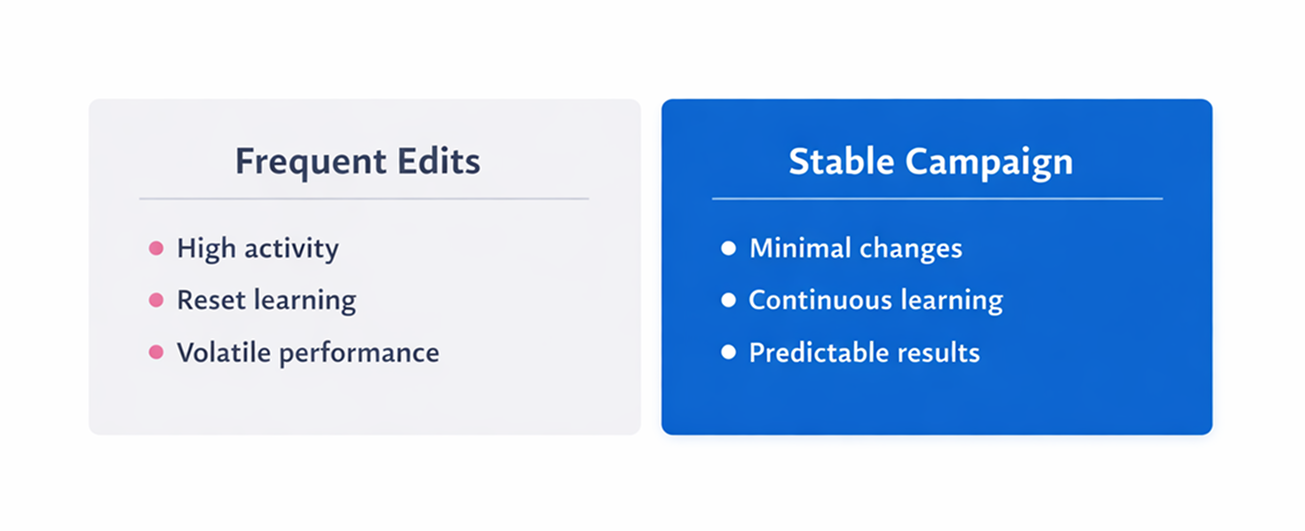

Why Stability Outperforms Activity

Campaigns that look “inactive” often perform better over time.

You’ll typically see:

- CPM stabilizing after a few days.
- Conversion rates improving gradually.
- More consistent spend distribution.
- Lower cost per result without changes.

This happens because the algorithm finally has stable inputs and enough data to optimize effectively.

When Optimization Actually Makes Sense

Optimization should be deliberate, not constant.

It makes sense when:

- Performance declines consistently over several days.
- Metrics conflict (e.g., high CTR, low conversions).
- Spend hits a ceiling despite strong results.
- Creative fatigue appears (rising frequency, falling CTR).

In these cases, changes solve a clear problem.
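Two of the conditions above, a consistent multi-day decline and creative fatigue, can be expressed as explicit checks on daily metrics. This is a rough sketch: the function names are hypothetical, the three-day window is an assumed reading of “several days”, and the input lists stand in for whatever daily export you use.

```python
from typing import List, Sequence, Tuple


def _recent_steps(values: Sequence[float], min_days: int) -> List[Tuple[float, float]]:
    """Return (earlier, later) pairs for the last `min_days` day-over-day steps."""
    recent = list(values)[-(min_days + 1):]
    return list(zip(recent, recent[1:]))


def is_consistent_decline(values: Sequence[float], min_days: int = 3) -> bool:
    """True if the metric fell on each of the last `min_days` daily steps."""
    if min_days < 1 or len(values) < min_days + 1:
        return False
    return all(later < earlier for earlier, later in _recent_steps(values, min_days))


def is_consistent_rise(values: Sequence[float], min_days: int = 3) -> bool:
    """True if the metric rose on each of the last `min_days` daily steps."""
    if min_days < 1 or len(values) < min_days + 1:
        return False
    return all(later > earlier for earlier, later in _recent_steps(values, min_days))


def shows_creative_fatigue(
    frequency: Sequence[float], ctr: Sequence[float], min_days: int = 3
) -> bool:
    """Rising frequency together with falling CTR over the same window."""
    return is_consistent_rise(frequency, min_days) and is_consistent_decline(ctr, min_days)


if __name__ == "__main__":
    # Illustrative values only, not real campaign data.
    conversion_rate = [0.040, 0.041, 0.038, 0.035, 0.031]
    noisy_rate = [0.040, 0.036, 0.041, 0.039, 0.040]
    print(is_consistent_decline(conversion_rate))  # steady decline
    print(is_consistent_decline(noisy_rate))       # normal fluctuation
    print(shows_creative_fatigue([1.8, 2.1, 2.5, 2.9], [0.012, 0.011, 0.009, 0.008]))
```

Requiring every step in the window to move the same way is deliberately strict: a single-day dip does not count as a trend, which matches the point that ordinary fluctuation alone should not trigger an edit.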

A More Stable Operating Model

Instead of reacting constantly, use structured decision timing:

- Daily: monitor only. Check delivery and anomalies, but don’t edit.
- Every 3–5 days: evaluate patterns. Look at trends across key metrics.
- Weekly: make strategic changes. Adjust budgets, creatives, or audiences.

This creates consistency, which the algorithm can actually learn from.
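One way to enforce this cadence is to tie the permitted action to the number of days since the last edit. The sketch below is one interpretation of the schedule above, with a hypothetical function name and assumed thresholds of 3 and 7 days; adjust them to your own review rhythm.

```python
def allowed_action(days_since_last_edit: int) -> str:
    """Map days since the last campaign edit to the most active step allowed.

    Thresholds (3 and 7 days) are assumptions drawn from the cadence in
    this article, not platform rules.
    """
    if days_since_last_edit < 0:
        raise ValueError("days_since_last_edit cannot be negative")
    if days_since_last_edit >= 7:
        return "edit"      # weekly: strategic changes are allowed
    if days_since_last_edit >= 3:
        return "evaluate"  # every 3-5 days: review trends, no edits
    return "monitor"       # daily: check delivery and anomalies only


if __name__ == "__main__":
    for day in (1, 4, 7):
        print(day, allowed_action(day))
```

Reaching the “edit” stage only means a change is permitted, not required. The campaign should still meet one of the conditions that justify an edit.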

The Core Shift Most Advertisers Miss

Meta doesn’t reward activity.

It rewards stable, consistent signals.

Frequent edits break those signals.

Once you shift your mindset:

- you make fewer changes,
- you test more deliberately,
- you get more predictable results.

Final Takeaway

If you’re adjusting campaigns every day, you’re likely slowing them down.

The goal isn’t to optimize more.

It’s to interfere less — and only when the data actually justifies it.