Optimizing campaigns for revenue sounds like the obvious choice. If the ad system knows how much each customer spends, it should prioritize users who generate higher order values.

In practice, many advertisers see performance decline after switching to value optimization.

Campaigns that previously generated steady purchases may start delivering fewer conversions. Spend becomes uneven, learning phases reset more often, and daily results fluctuate.

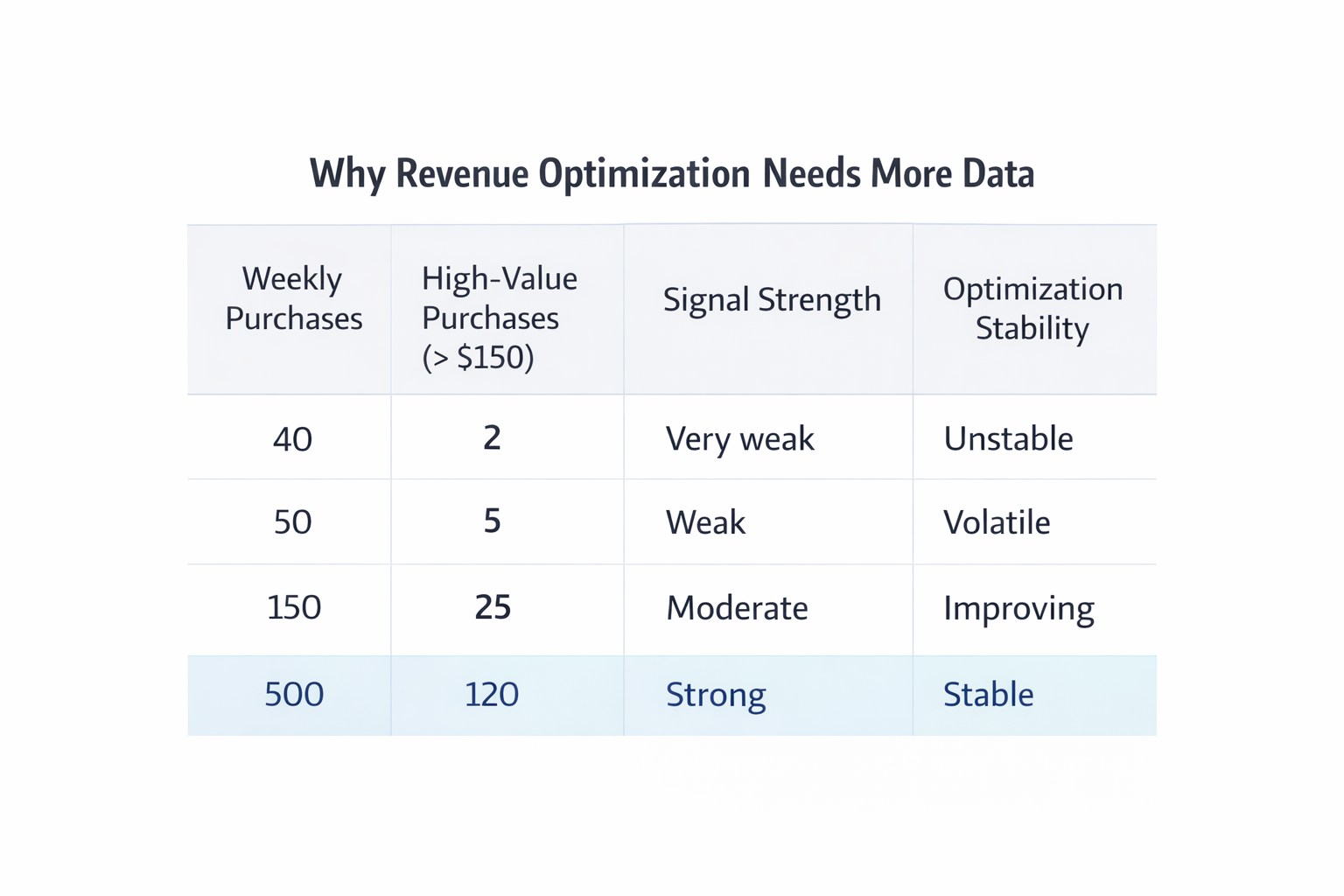

The reason is simple: revenue optimization requires far more data than conversion optimization. When the dataset is small, the algorithm struggles to identify reliable patterns among high-value buyers.

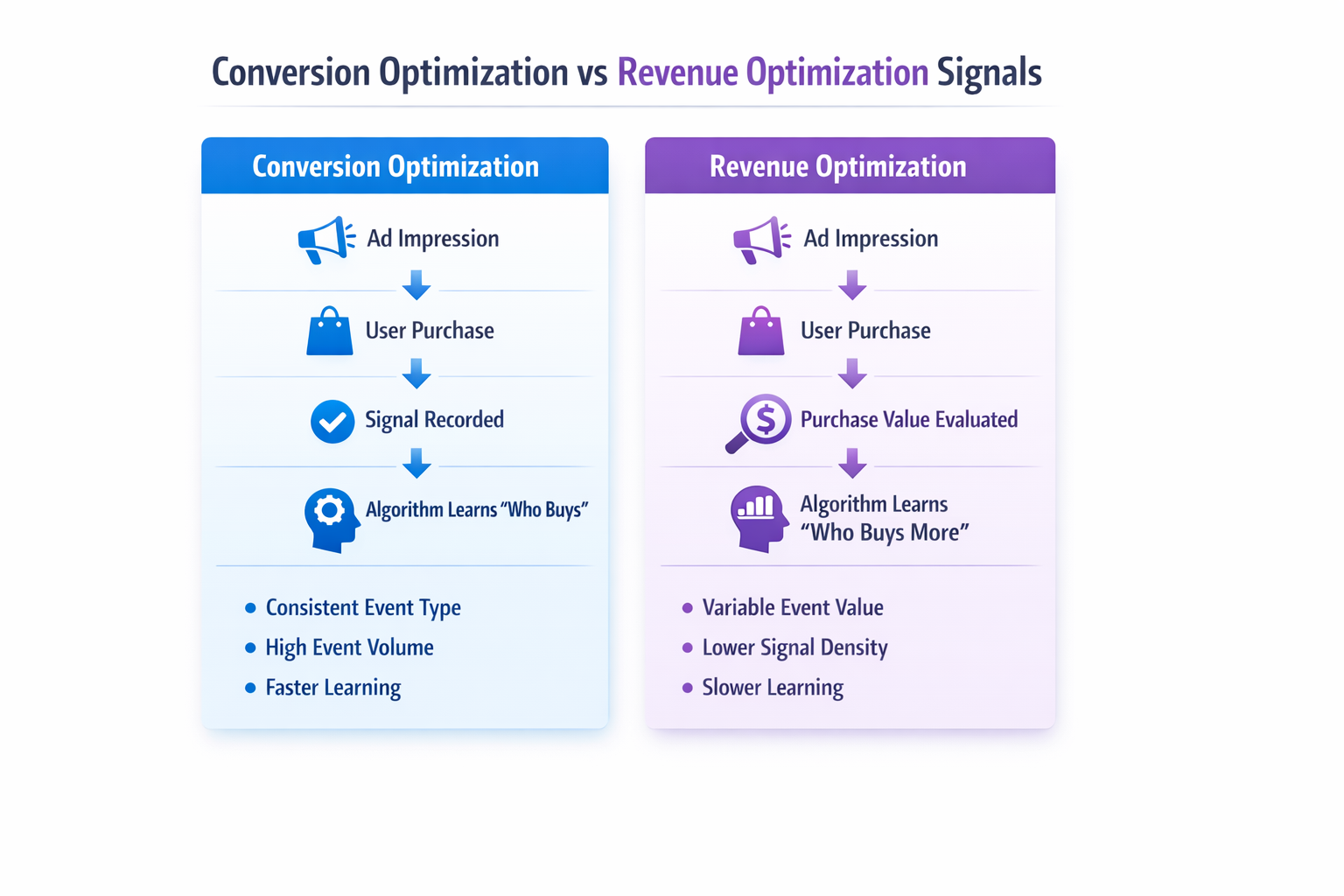

Conversion Optimization Creates a Clear Learning Signal

Meta’s delivery system learns by analyzing repeated events. Each conversion helps the algorithm identify patterns among users who complete the same action.

When a campaign optimizes for Purchase, every order is treated as the same signal.

The system only needs to answer one question: which users are likely to buy?

This creates a stable learning environment for the algorithm.

Several practical effects follow.

- Every purchase strengthens the same behavioral pattern. A $20 order and a $200 order both confirm that the campaign reached a converting user. The algorithm focuses on identifying shared characteristics among buyers rather than modeling order value.
- Event volume accumulates quickly. Even low-value purchases contribute useful data. This allows the system to identify converting user clusters faster.
- Delivery stabilizes earlier. Because feedback arrives frequently, campaigns often exit the learning phase faster and maintain more consistent daily spend.

If you review stable campaigns in Ads Manager, you will typically see steady CPA ranges and predictable delivery patterns.

Revenue Optimization Makes the Signal Much Harder to Model

When you switch to Value Optimization, the system must estimate two things simultaneously:

- the probability that a user will purchase;
- the expected value of that purchase.

Instead of simply identifying buyers, the algorithm tries to predict which buyers will spend more.

This creates a much noisier dataset.

A campaign might produce events such as:

- a $20 order from a first-time customer;
- a $45 order driven by a discount ad;
- a $110 order after several site visits;
- a $250 order from a returning customer.

Each outcome carries a different signal.

To model those differences reliably, the algorithm needs many examples of each type of buyer. Most campaigns do not generate enough purchases in each value range to provide them.
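To see why the value signal is harder to learn from, it can help to write the four example orders above as training targets. The snippet below is only an illustrative Python sketch: the variable names are made up for this example, and it does not describe how Meta's models work internally. It simply compares how much the target varies under each optimization goal.

```python
from statistics import mean, pstdev

# The four example orders listed above, in USD.
order_values = [20.0, 45.0, 110.0, 250.0]

# Purchase optimization: every order is the same event, so every label is 1.
purchase_labels = [1 for _ in order_values]

# Value optimization: each order carries its own amount as the target.
value_labels = order_values

purchase_spread = pstdev(purchase_labels)  # identical labels have no spread
value_spread = pstdev(value_labels)
relative_value_spread = value_spread / mean(value_labels)

print(f"Purchase label spread: {purchase_spread}")
print(f"Value label spread: ${value_spread:.2f} "
      f"({relative_value_spread:.0%} of the mean order value)")
```

With purchase labels there is nothing to explain beyond "this user bought." With value labels the spread is more than 80% of the mean, and four events are far too few to tell which differences are systematic and which are chance.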

Rare High-Value Orders Cause Instability

In most ecommerce accounts, large purchases occur infrequently.

For example, a campaign might generate:

- around 50 purchases per week;
- an average order value near $60;
- only a few purchases above $150.

When optimizing for revenue, those rare high-value orders influence the algorithm heavily. However, the sample size is too small for stable modeling.

As a result, delivery becomes unpredictable.

In Ads Manager, this often appears as:

- uneven daily spend;
- fluctuating CPA;
- campaigns returning to the learning phase after small changes.

The system is trying to identify high-value buyer patterns that the data cannot clearly support.
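The sample-size problem can be made concrete with a little arithmetic. The Python sketch below builds one hypothetical week that matches the example above: 50 purchases, a $60 average order value, and four orders above $150. The individual order amounts are invented for illustration and do not come from a real account.

```python
# Hypothetical week: 46 orders at $50 and 4 orders at $175 (invented numbers).
orders = [50.0] * 46 + [175.0] * 4

total_revenue = sum(orders)
average_order_value = total_revenue / len(orders)

high_value = [v for v in orders if v > 150]
share_of_orders = len(high_value) / len(orders)
share_of_revenue = sum(high_value) / total_revenue

# Relative change in each sample if one high-value order had not happened.
purchase_count_change = 1 / len(orders)
high_value_count_change = 1 / len(high_value)

print(f"AOV: ${average_order_value:.2f}")
print(f"High-value orders: {share_of_orders:.0%} of orders, "
      f"{share_of_revenue:.0%} of revenue")
print(f"One order fewer changes the purchase count by {purchase_count_change:.0%}")
print(f"One order fewer changes the high-value count by {high_value_count_change:.0%}")
```

In this made-up week, four orders account for close to a quarter of revenue. Yet one of them appearing or not changes the high-value sample by 25%, compared with 2% for the purchase count. A signal that depends on so few events will swing from week to week.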

Conversion Volume Often Declines

Revenue optimization also makes the algorithm more selective about auctions.

Users predicted to generate small purchases receive fewer bids. Even if they are highly likely to convert, the campaign may avoid competing for those impressions.

Two delivery changes typically follow.

- The reachable audience becomes smaller. Users resembling low-value buyers are deprioritized, which reduces overall reach.
- The campaign enters fewer auctions. If expected purchase value is low, the system bids less aggressively.

This frequently leads to fewer conversions overall, even if average order value increases slightly.
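A toy model shows how this trade-off can arise. The Python sketch below assumes six hypothetical users with made-up purchase probabilities and order values, and assumes the budget covers only three impressions. Ranking users by probability times value is a simplification chosen for illustration, not a description of Meta's actual bidding logic.

```python
# Hypothetical users: (estimated purchase probability, estimated order value in USD).
users = [
    (0.08, 20.0),
    (0.06, 30.0),
    (0.05, 45.0),
    (0.03, 110.0),
    (0.02, 150.0),
    (0.01, 250.0),
]

IMPRESSIONS_WON = 3  # assumption: budget covers three of the six users


def pick(candidates, score):
    """Return the top IMPRESSIONS_WON candidates ranked by the given score."""
    return sorted(candidates, key=score, reverse=True)[:IMPRESSIONS_WON]


def summarize(chosen):
    """Return (expected purchases, expected average order value)."""
    conversions = sum(p for p, _ in chosen)
    revenue = sum(p * v for p, v in chosen)
    return conversions, revenue / conversions


# Purchase-style ranking: purchase probability only.
by_purchase = pick(users, score=lambda u: u[0])
# Value-style ranking: probability times expected order value.
by_value = pick(users, score=lambda u: u[0] * u[1])

purchase_conversions, purchase_aov = summarize(by_purchase)
value_conversions, value_aov = summarize(by_value)

print(f"Ranked by purchase: {purchase_conversions:.2f} expected purchases, "
      f"AOV ${purchase_aov:.2f}")
print(f"Ranked by value:    {value_conversions:.2f} expected purchases, "
      f"AOV ${value_aov:.2f}")
```

In this toy setup, the value-based ranking skips the three users most likely to buy, so expected purchases fall from 0.19 to 0.06 while average order value rises. The size of the gap comes from the invented numbers; the direction of the trade-off is the point.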

Strong Audiences Matter More Than Optimization Type

Audience structure plays a major role in campaign performance. High-quality audiences provide clearer behavioral signals for the algorithm to learn from.

For example, building segments from engaged users can significantly improve targeting accuracy. Guides such as “Using Facebook Engagement Custom Audiences to Find Your Best Leads” explain how engagement-based audiences reveal strong buyer intent.

The same principle applies when expanding reach with lookalike audiences. If the seed dataset is strong, Meta can identify similar users more effectively. Articles like “Lookalike Audiences: How to Seed, Train, and Scale” describe how proper seeding improves audience modeling.

For advertisers looking to understand how different audience types fit into campaign structure, “The Complete Guide to Warm, Cold, and Custom Audiences in Meta Ads” outlines how Meta categorizes users by intent.

In many cases, improving audience structure produces a larger performance improvement than switching optimization goals.

Final Takeaway

Revenue optimization introduces a more complex objective for the algorithm. Instead of only predicting who will purchase, the system must also estimate purchase value.

That approach works best when campaigns generate large amounts of conversion data.

For many advertisers, especially those running moderate budgets, optimizing for conversions produces stronger results because it provides the algorithm with a simpler and more reliable signal.