When a Facebook Ads campaign underperforms, marketers often debate whether the problem lies in the audience or the creative. Both influence performance, but they do not affect Meta’s delivery system in the same way.

Testing the wrong variable first can lead to misleading conclusions. You may pause an audience because conversions look weak, even though the real issue is that the creative never generated strong engagement signals.

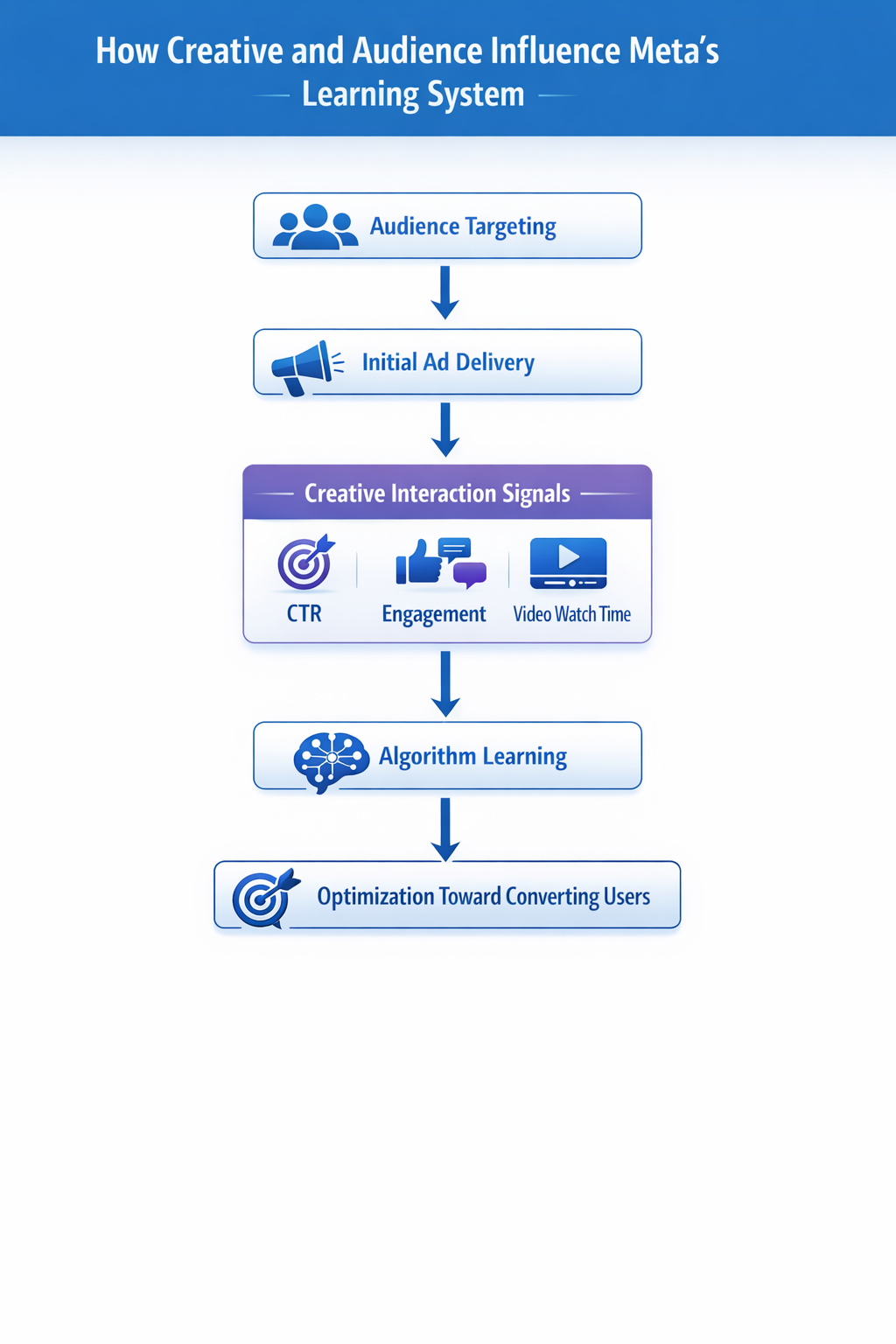

Understanding how Meta evaluates ads during the learning phase helps determine which element should be tested first.

Why the Question Often Leads to Confusion

Audience and creative testing are frequently treated as equal levers. In practice, they affect different parts of the delivery process.

Meta’s algorithm evaluates new ads primarily through early behavioral signals such as:

- Click-through rate (CTR). This signal indicates whether users find the message relevant enough to interact with. If CTR remains low after several thousand impressions, the system assumes the ad is not compelling.
- Engagement behavior. Likes, comments, shares, and saves help the algorithm identify clusters of users who respond positively to the content.
- Content consumption signals. For video creatives, watch time and completion rate show whether users actually engage with the message instead of scrolling past it.

These signals originate from the creative, not the audience. The audience simply defines who receives the first impressions.

If the creative fails to attract attention, the system receives weak feedback regardless of how accurate the targeting might be.
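If you export delivery data and want to compare ads on the same footing, it helps to compute these signals from raw counts yourself. The sketch below assumes hypothetical field and function names (`AdStats`, `ctr`, `cpm` and so on). They are not Meta Marketing API fields, and the CTR shown is a simplified clicks-per-impression ratio. Ads Manager reports more than one CTR variant, for example link clicks versus all clicks.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    """Raw delivery counts for one ad. Field names are illustrative, not Meta API fields."""
    impressions: int
    clicks: int
    engagements: int          # likes + comments + shares + saves
    video_plays: int = 0      # stays 0 for static creatives
    video_completions: int = 0
    spend: float = 0.0


def _ratio(numerator: float, denominator: float) -> float:
    """Safe division that returns 0.0 while there is no data yet."""
    return numerator / denominator if denominator else 0.0


def ctr(s: AdStats) -> float:
    """Click-through rate: clicks per impression."""
    return _ratio(s.clicks, s.impressions)


def engagement_rate(s: AdStats) -> float:
    """Interactions (reactions, comments, shares, saves) per impression."""
    return _ratio(s.engagements, s.impressions)


def completion_rate(s: AdStats) -> float:
    """Share of video plays that were watched to the end."""
    return _ratio(s.video_completions, s.video_plays)


def cpm(s: AdStats) -> float:
    """Cost per 1,000 impressions."""
    return _ratio(s.spend * 1000, s.impressions)


if __name__ == "__main__":
    # Hypothetical numbers for one video ad.
    video_ad = AdStats(impressions=5000, clicks=60, engagements=90,
                       video_plays=2000, video_completions=300, spend=40.0)
    print(f"CTR {ctr(video_ad):.2%}, engagement {engagement_rate(video_ad):.2%}, "
          f"completion {completion_rate(video_ad):.0%}, CPM {cpm(video_ad):.2f}")
```

Tracking all four numbers per ad makes the later diagnostics easier to apply. For example, the section below on creative warning signs pairs rising CPM with falling engagement.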

Why Creative Testing Usually Comes First

In most campaigns, creative testing should happen before audience testing. Creative determines whether the algorithm receives enough engagement signals to begin optimization.

If you test audiences while the creative performs poorly, the results will not reveal which audience works best. Every ad set will struggle for the same reason.

Starting with creative testing offers several advantages:

- Clearer performance signals. When multiple creatives compete within the same audience, differences in CTR and engagement reflect the strength of the messaging rather than targeting variation.
- Faster learning cycles. One audience containing several creatives collects data faster than multiple audiences using identical ads.
- Cleaner diagnostics. If each ad set uses a different audience and creative combination, it becomes impossible to determine which variable actually caused the performance change.

For this reason, experienced media buyers often begin with creative testing inside a broad or stable audience.

A Practical Creative Testing Structure

A useful creative test isolates messaging while keeping other variables stable. One simple structure includes the following elements:

- A single broad audience. Use a large interest group, a lookalike audience, or open targeting. The goal is to generate impressions quickly so that engagement signals appear within the first few thousand impressions.
- Several distinct creative concepts. Avoid testing minor design edits. Instead, compare clearly different messaging angles, such as:
  - a problem-focused hook explaining the pain point;
  - a testimonial or customer story;
  - a product demonstration showing how the product works;
  - a comparison-based ad highlighting advantages over alternatives.
- Identical campaign settings. Budgets, placements, and optimization events should remain consistent across all ads. This prevents delivery bias from distorting the test results.
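How many impressions "the first few thousand" actually means depends on how large a CTR difference you need to detect. The minimal sketch below uses the standard normal-approximation sample-size formula for comparing two proportions. The baseline and target CTRs, the 5% significance level, and the 80% power are placeholder assumptions. The formula also assumes independent impressions split evenly between creatives, which Meta's delivery does not guarantee.

```python
import math
from statistics import NormalDist


def impressions_per_creative(ctr_a: float, ctr_b: float,
                             alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate impressions each creative needs to detect a CTR gap.

    Normal-approximation formula for a two-sided test of two proportions:
        n = (z_{1-alpha/2} + z_{power})^2 * (p_a(1-p_a) + p_b(1-p_b)) / (p_a - p_b)^2
    """
    if ctr_a == ctr_b:
        raise ValueError("CTRs must differ to size a test")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for power = 0.8
    variance = ctr_a * (1 - ctr_a) + ctr_b * (1 - ctr_b)
    n = (z_alpha + z_power) ** 2 * variance / (ctr_a - ctr_b) ** 2
    return math.ceil(n)


if __name__ == "__main__":
    # Hypothetical: detect a lift from 1.0% to 1.5% CTR.
    print(impressions_per_creative(0.010, 0.015))
```

For a lift from 1.0% to 1.5%, this comes out at roughly 7,700 impressions per creative. Smaller gaps need far more impressions, which is one reason to compare clearly different concepts rather than minor design edits.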

Once one or two creatives consistently outperform the others, you can begin evaluating audiences with much stronger signals.
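To check whether a creative "consistently" outperforms instead of leading by chance, one common approach is a two-proportion z-test on CTR. The sketch below uses hypothetical counts. It treats each impression as an independent trial, but Meta shifts delivery toward the ads it predicts will perform better. Treat the p-value as a rough guide, not the result of a controlled experiment.

```python
import math


def ctr_z_test(clicks_a: int, imps_a: int, clicks_b: int, imps_b: int) -> tuple[float, float]:
    """Pooled two-proportion z-test for H0: CTR_a == CTR_b.

    Returns (z, two-sided p-value).
    """
    p_a = clicks_a / imps_a
    p_b = clicks_b / imps_b
    pooled = (clicks_a + clicks_b) / (imps_a + imps_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / imps_a + 1 / imps_b))
    if se == 0:  # no clicks at all (or every impression clicked): no evidence either way
        return 0.0, 1.0
    z = (p_a - p_b) / se
    # Two-sided p-value: P(|Z| >= |z|) = erfc(|z| / sqrt(2)) for a standard normal Z.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical: problem-focused hook vs. testimonial, same audience and budget.
    z, p = ctr_z_test(clicks_a=90, imps_a=5000, clicks_b=50, imps_b=5000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

With these hypothetical counts, z is about 3.4 and p is well below 0.01. A lead that holds up across repeated checks is a better basis for moving on to audience tests than a single snapshot.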

When Audience Testing Should Come First

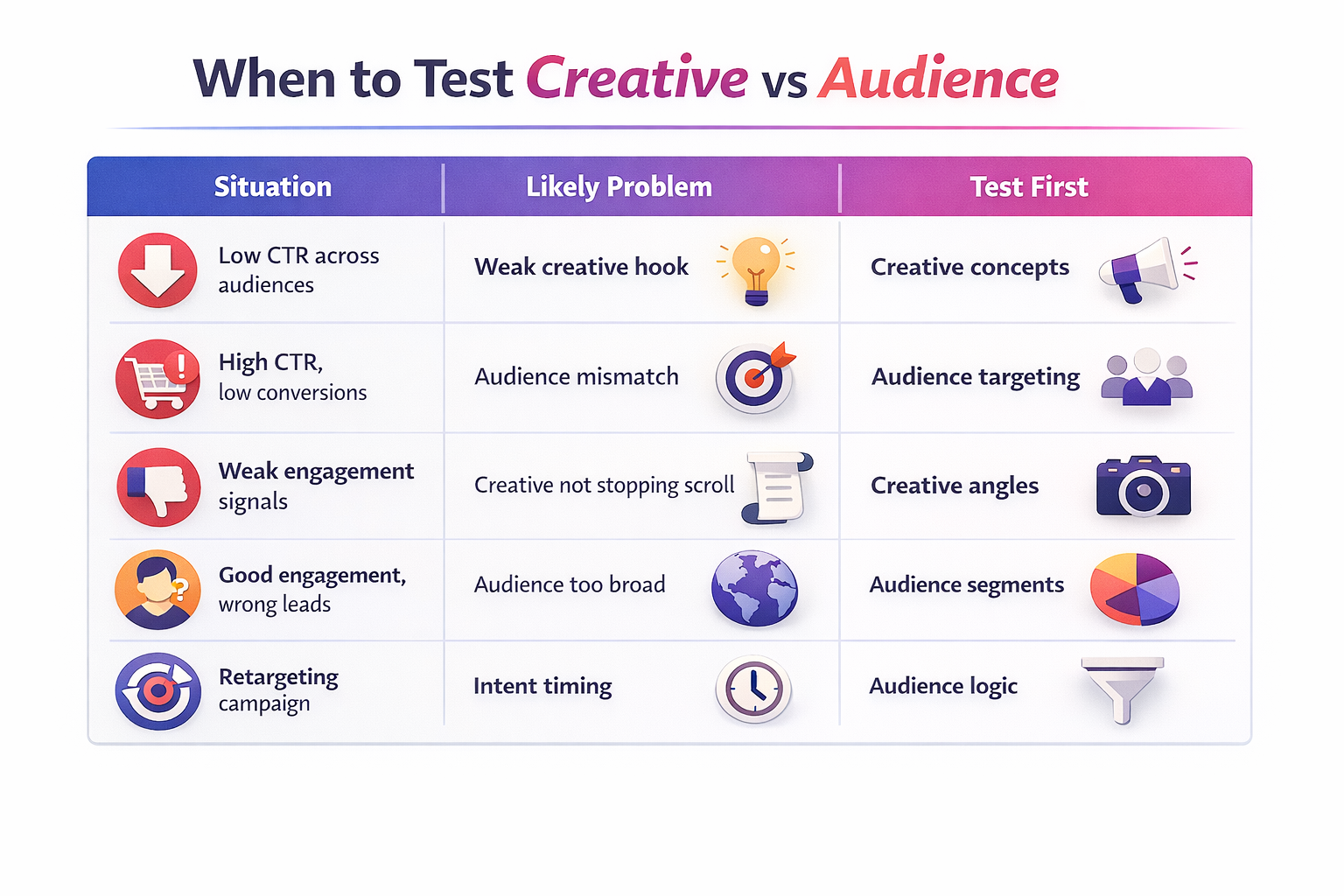

Although creative testing often takes priority, there are situations where audience testing becomes the logical starting point.

Highly specialized markets

Some campaigns target narrow professional segments or technical buyers. Broad targeting may reach many users who have no relevance to the product.

In this case, the creative may appear weak simply because it reaches the wrong people. Testing different audience definitions can reveal which segment actually responds to the offer.

If you are experimenting with different targeting sources, it can also help to understand how Facebook audience targeting works and how Meta interprets behavioral signals inside each audience pool.

Retargeting-heavy campaigns

Retargeting audiences already contain users who demonstrated interest in the product. Creative differences tend to produce smaller performance gaps.

Performance improvements often come from adjusting the audience logic instead. For example:

- separating visitors who viewed pricing pages from casual blog readers;
- isolating users who added products to the cart but did not purchase;
- excluding recent buyers from retargeting campaigns.

These structural adjustments can produce larger improvements than launching new creatives.
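In Ads Manager, these segments are built as website custom audiences from pixel events. The sketch below only illustrates the set logic behind the three adjustments above, applied to a hypothetical event log. The event names, the 30-day recency window, and the decision to keep segments mutually exclusive are all assumptions.

```python
from datetime import datetime, timedelta


def retargeting_segments(events, now, recent_days=30):
    """Split users into non-overlapping retargeting pools.

    events: iterable of (user_id, event_name, timestamp) tuples.
    Event names ("view_blog", "view_pricing", "add_to_cart", "purchase") are hypothetical.
    """
    seen = {"view_blog": set(), "view_pricing": set(), "add_to_cart": set(), "purchase": set()}
    recent_buyers = set()
    cutoff = now - timedelta(days=recent_days)
    for user, event, ts in events:
        if event in seen:
            seen[event].add(user)
        if event == "purchase" and ts >= cutoff:
            recent_buyers.add(user)

    # Added to cart but never purchased (within this log).
    cart_abandoners = seen["add_to_cart"] - seen["purchase"]
    # Viewed pricing but never reached the cart; recent buyers are excluded.
    pricing_viewers = seen["view_pricing"] - seen["add_to_cart"] - recent_buyers
    # Blog only: no pricing view, no cart activity, no recent purchase.
    casual_readers = (seen["view_blog"] - seen["view_pricing"]
                      - seen["add_to_cart"] - recent_buyers)
    return {
        "cart_abandoners": cart_abandoners,
        "pricing_viewers": pricing_viewers,
        "casual_blog_readers": casual_readers,
        "excluded_recent_buyers": recent_buyers,
    }


if __name__ == "__main__":
    now = datetime(2024, 6, 1)
    log = [
        ("u1", "view_pricing", now - timedelta(days=2)),
        ("u2", "add_to_cart", now - timedelta(days=1)),
        ("u3", "add_to_cart", now - timedelta(days=3)),
        ("u3", "purchase", now - timedelta(days=3)),
        ("u4", "view_blog", now - timedelta(days=5)),
    ]
    for name, users in retargeting_segments(log, now).items():
        print(name, sorted(users))
```

Keeping the pools disjoint means each ad set receives a distinct intent level, so a performance gap between them points at the audience logic rather than at overlapping users.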

Weak lookalike seed data

Lookalike audiences rely on patterns found in the seed list. If the seed contains inconsistent or low-quality data, the resulting audience may not represent your real buyers.

Typical warning signs include:

- consistently low CTR across all creatives;
- poor engagement even when the message is clear;
- high CPM without conversion improvement.

In these cases, improving the seed or rebuilding the lookalike often matters more than creative experimentation. If lookalikes are central to your strategy, it helps to understand how to build lookalike audiences that actually convert.

Signs the Creative Is the Real Issue

Before launching new audience tests, check whether the creative itself is already sending clear warning signals.

Common indicators include:

- Low CTR across multiple audiences. If several ad sets show the same weak engagement pattern, the problem is likely the message or visual hook.
- Minimal interaction with the ad. Ads that generate impressions but almost no reactions or comments usually fail to capture attention in the feed.
- Rising CPM with declining engagement. When users scroll past the ad quickly, Meta reduces delivery efficiency, which often increases CPM.

In these situations, improving the creative concept will typically produce faster gains than searching for new audiences.

When Creative Works but Conversions Stay Low

Sometimes the opposite problem appears: the creative attracts clicks and engagement, but conversions remain weak.

This pattern often indicates a mismatch between the message and the audience.

Typical symptoms include:

- high CTR but poor purchase rates;
- strong landing page traffic with little downstream action;
- engagement from users who are curious but not qualified buyers.

At this stage, audience testing becomes valuable because the creative already proves it can generate interest.

Exploring more precise targeting strategies—such as high-converting Facebook custom audiences or segmented audience pools—can help identify the users who actually convert.

You may also benefit from refining segmentation approaches, which are explained in Maximizing ROI through Facebook Audience Segmentation.
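The two patterns described in this section and the previous one can be condensed into a rough rule of thumb. The sketch below is a hypothetical heuristic, not a Meta feature. The CTR and conversion-rate thresholds and the minimum impression count are placeholders that should come from your own account benchmarks.

```python
from dataclasses import dataclass


@dataclass
class AdSetResult:
    """Results for one creative in one audience (hypothetical structure)."""
    audience: str
    impressions: int
    clicks: int
    conversions: int


def diagnose(results, min_ctr=0.01, min_cvr=0.02, min_impressions=3000):
    """Suggest which variable to test next for one creative run across several audiences.

    Thresholds are placeholder assumptions; tune them to your own benchmarks.
    """
    # Ignore ad sets that have not yet collected enough impressions to judge.
    mature = [r for r in results if r.impressions >= max(min_impressions, 1)]
    if not mature:
        return "insufficient data"

    engaging = [r for r in mature if r.clicks / r.impressions >= min_ctr]
    if not engaging:
        # Weak CTR in every audience: the message or hook is the likely bottleneck.
        return "creative"

    converting = [r for r in engaging if r.clicks and r.conversions / r.clicks >= min_cvr]
    if not converting:
        # Clicks arrive but nobody converts: likely an audience/message mismatch.
        return "audience"

    # Some audiences convert: compare ad sets and shift budget toward the winners.
    return "mixed"


if __name__ == "__main__":
    runs = [AdSetResult("broad", 5000, 100, 1), AdSetResult("interest", 5000, 90, 0)]
    print(diagnose(runs))
```

The "creative" verdict overlaps with the weak-lookalike warning signs described earlier. If every audience in the test is a lookalike built from the same seed, rule out seed quality before rewriting the creative.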

A Practical Testing Sequence for Most Campaigns

For most advertisers, the most reliable workflow follows three stages:

- Creative exploration. Launch several distinct creative concepts within a broad or stable audience. Identify which messages consistently generate engagement.
- Audience validation. Take the strongest creatives and test them across multiple audiences, including interest groups, lookalikes, and retargeting segments.
- Optimization and scaling. Allocate more budget to winning combinations while periodically introducing new creatives to prevent fatigue.

This sequence ensures the algorithm receives strong signals early while still revealing which audience segments produce conversions.
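One way to sketch the budget side of the third stage is a simple proportional rule. Most of the budget is split by conversions per unit of spend, and a fixed share is held back for new creative tests. This is a hypothetical illustration, not how Meta's own campaign-level budget optimization works. The 20% test share and the efficiency metric are assumptions.

```python
def allocate_budget(total: float, combos: dict[str, tuple[float, float]],
                    test_share: float = 0.2) -> dict[str, float]:
    """Split a budget across winning creative/audience combinations.

    combos maps a combination name to (spend, conversions) from the validation stage.
    A fixed test_share is reserved for new creatives to counter fatigue.
    """
    if not combos:
        return {"new_creative_tests": total}
    test_budget = total * test_share
    scale_budget = total - test_budget
    # Conversions per unit of spend; combinations with no spend get zero weight.
    efficiency = {name: (conv / spend if spend else 0.0)
                  for name, (spend, conv) in combos.items()}
    total_eff = sum(efficiency.values())
    if total_eff == 0:
        # No conversions anywhere yet: fall back to an even split.
        alloc = {name: scale_budget / len(combos) for name in combos}
    else:
        alloc = {name: scale_budget * eff / total_eff for name, eff in efficiency.items()}
    alloc["new_creative_tests"] = test_budget
    return alloc


if __name__ == "__main__":
    # Hypothetical validation-stage results: (spend, conversions).
    plan = allocate_budget(1000.0, {"hook x broad": (100.0, 10.0),
                                    "demo x lookalike": (100.0, 5.0)})
    for name, amount in plan.items():
        print(f"{name}: {amount:.2f}")
```

A purely proportional split reacts quickly to noisy early numbers, so you might also cap how much any one combination can gain in a single adjustment.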

The Key Takeaway

Creative and audience both influence campaign performance, but they operate at different stages of the delivery process.

Creative determines whether the algorithm receives useful engagement signals. Without those signals, audience tests often produce misleading conclusions.

For most campaigns, start by testing multiple creative concepts inside a broad audience. Once a strong message emerges, audience testing becomes far more reliable.

The most useful diagnostic question is not “Should we test audience or creative?” but rather: which variable is currently preventing the algorithm from learning?