Most campaign performance problems don’t start in targeting or creative. They start in tracking.

When conversion events are incomplete, misfiring, or delayed, the ad platform receives a distorted picture of what actually works. The campaign may still spend normally, but the optimization engine begins steering budget toward the wrong signals.

This rarely causes an immediate crash in results. Instead, performance slowly drifts — CPAs rise, learning phases repeat, and scaling attempts stall without an obvious explanation.

Understanding how event tracking feeds the ad delivery system helps explain why these issues quietly compound over time.

Ad Platforms Optimize Only the Signals They Can See

When a campaign is set to optimize for purchases, the platform is not evaluating revenue directly. It is reacting to the conversion events that your tracking sends back.

If those events are incomplete or inaccurate, the system begins modeling the wrong behavioral patterns.

Inside platforms like Meta or Google Ads, the optimization loop typically works like this:

- An impression enters an auction. The system predicts the probability that a user will complete the selected conversion event.
- The platform bids based on that predicted probability. Higher predicted conversion likelihood leads to higher bids in competitive auctions.
- The campaign observes actual outcomes. When a tracked event fires (purchase, lead, signup), the system updates its prediction models.
- Future auctions adjust based on those outcomes. Users with similar behavioral signals receive higher delivery priority.

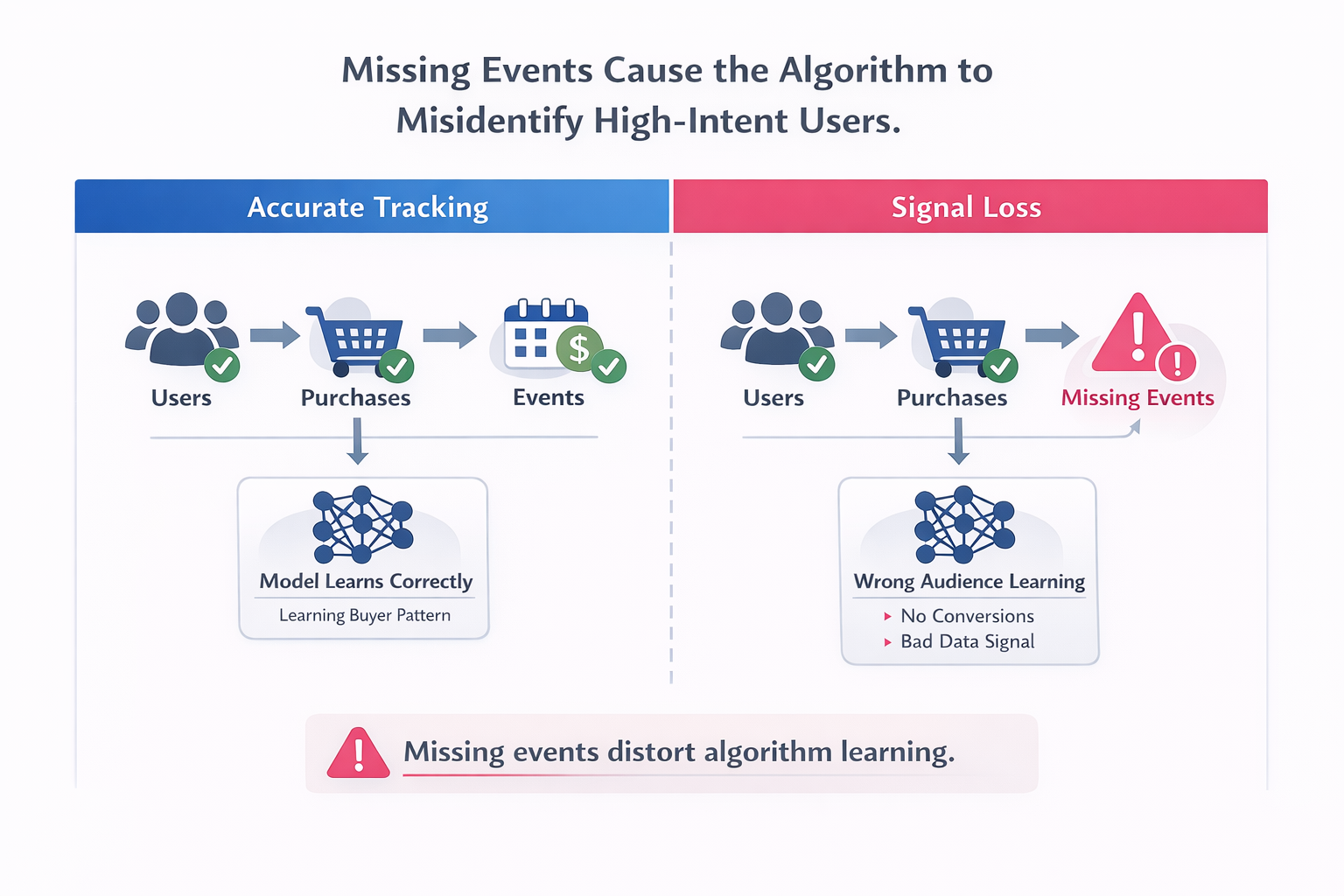

If tracking fails to capture a portion of real conversions, the system incorrectly assumes those users did not convert. Over time, the platform reduces delivery toward similar users because the model believes they underperform.

The result is a campaign that gradually drifts away from high-value audiences.
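The following Python model makes the loop concrete. It is deliberately tiny and does not reflect how Meta or Google actually predict conversions. The predicted conversion rate is nudged toward the rate that the tracked events show, and the bid equals that prediction multiplied by a conversion value. The function names `update_estimate`, `simulate_feedback`, and `expected_value_bid`, the learning rate, and all numbers are illustrative assumptions.

```python
def update_estimate(estimate: float, observed_rate: float, learning_rate: float = 0.2) -> float:
    """Nudge the predicted conversion rate toward the rate observed in the latest batch."""
    return estimate + learning_rate * (observed_rate - estimate)


def simulate_feedback(true_rate: float, capture_rate: float, batches: int = 50, start: float = 0.05) -> float:
    """Return the predicted conversion rate after repeated feedback batches.

    capture_rate is the (hypothetical) share of real conversions that tracking reports.
    Only tracked conversions reach the model, so it only ever sees true_rate * capture_rate.
    """
    estimate = start
    for _ in range(batches):
        observed = true_rate * capture_rate
        estimate = update_estimate(estimate, observed)
    return estimate


def expected_value_bid(estimate: float, value_per_conversion: float) -> float:
    """A simple expected-value bid: predicted conversion probability times conversion value."""
    return estimate * value_per_conversion


full = simulate_feedback(true_rate=0.04, capture_rate=1.0)
partial = simulate_feedback(true_rate=0.04, capture_rate=0.65)
print(f"full tracking: estimate {full:.4f}, bid {expected_value_bid(full, 80):.2f}")
print(f"65% captured:  estimate {partial:.4f}, bid {expected_value_bid(partial, 80):.2f}")
```

When only 65% of purchases are captured, the estimate settles near 0.026 rather than the true 0.04. Every bid built on that estimate shrinks by the same proportion, even though the audience itself never changed.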

Missing Events Cause the Algorithm to Misidentify High-Intent Users

A common scenario appears when server-side tracking or pixel events fail intermittently.

Imagine a campaign generating 100 real purchases per day, but the tracking setup records only 65 of them.

The missing 35 conversions create two problems inside the optimization model:

- Some converting users appear as non-converters.
- The algorithm learns incorrect patterns about who actually buys.
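The arithmetic behind the 100-versus-65 example deserves a closer look, because reported CPA is simply spend divided by tracked conversions. The sketch below assumes a hypothetical daily spend of 5,000. Only the purchase counts come from the example.

```python
def tracking_coverage(tracked: int, actual: int) -> float:
    """Share of real conversions that reach the ad platform."""
    return tracked / actual


def cpa(spend: float, conversions: int) -> float:
    """Cost per acquisition for a given conversion count."""
    return spend / conversions


spend = 5000.0          # hypothetical daily spend, not from the article
actual, tracked = 100, 65

coverage = tracking_coverage(tracked, actual)
real_cpa = cpa(spend, actual)
reported_cpa = cpa(spend, tracked)

print(f"coverage {coverage:.0%}, real CPA {real_cpa:.2f}, reported CPA {reported_cpa:.2f}")
# prints: coverage 65%, real CPA 50.00, reported CPA 76.92
```

The reported CPA is inflated by a factor of 100 / 65, roughly 1.54, with no change in real performance.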

This distortion compounds quickly.

For example, suppose many of the missing events come from Safari or iOS devices because of the tracking restrictions on those platforms. From the algorithm’s perspective:

- Those users click ads.
- They visit the site.
- But they rarely convert.

The system therefore reduces delivery to those device segments, even though they might contain some of the most valuable buyers.
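A small sketch illustrates how uneven tracking loss can reverse the apparent ranking of two segments. The segment names, click counts, and capture rates are invented for illustration. In practice you would not know a segment's capture rate directly.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    clicks: int
    real_purchases: int
    capture_rate: float  # hypothetical share of real purchases that tracking reports

    @property
    def tracked_purchases(self) -> int:
        return round(self.real_purchases * self.capture_rate)

    @property
    def real_cvr(self) -> float:
        return self.real_purchases / self.clicks

    @property
    def observed_cvr(self) -> float:
        # The optimization model only ever sees this number.
        return self.tracked_purchases / self.clicks


segments = [
    Segment("desktop_chrome", clicks=2000, real_purchases=60, capture_rate=0.9),
    Segment("ios_safari", clicks=2000, real_purchases=70, capture_rate=0.5),
]

for s in segments:
    print(f"{s.name}: real CVR {s.real_cvr:.2%}, observed CVR {s.observed_cvr:.2%}")
```

In this example, iOS Safari converts better in reality (3.50% vs 3.00%) but worse in the tracked data (1.75% vs 2.70%). The tracked data is the only version the optimization model ever sees.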

A media buyer reviewing Ads Manager might see:

- Rising CPMs.
- Lower conversion rate.
- Stable click-through rate.

Nothing obvious points directly to tracking failure. Yet the underlying signal guiding optimization has already shifted.

If your pixel configuration is incomplete, this problem becomes even worse. Proper event implementation — like the process described in How to Create Facebook Pixel and Track Conversions — is essential because the optimization engine relies entirely on those event signals.

Poor Event Deduplication Creates False Conversion Signals

Another subtle tracking issue appears when client-side pixels and server events both fire without proper deduplication.

In a hybrid setup, both sources are expected to send the same event, but the platform needs a shared identifier to merge the two copies. Without that identifier, it counts the same conversion twice.

This can temporarily make campaigns look stronger than they actually are.
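Conceptually, deduplication keeps one event per shared key. Meta's documentation describes matching browser and server events on the event name together with an event ID. The Python function below is a simplified sketch of that idea under this assumption, not the platform's actual implementation. The event dictionaries and their `source` key are a hypothetical shape.

```python
from typing import Iterable


def deduplicate(events: Iterable[dict]) -> list[dict]:
    """Keep the first event seen for each (event_name, event_id) pair.

    Events without an event_id cannot be matched, so every copy is kept:
    this is how one purchase turns into two counted conversions.
    """
    seen: set[tuple[str, str]] = set()
    kept = []
    for event in events:
        event_id = event.get("event_id")
        if not event_id:
            kept.append(event)
            continue
        key = (event["event_name"], event_id)
        if key not in seen:
            seen.add(key)
            kept.append(event)
    return kept


with_ids = [
    {"event_name": "Purchase", "event_id": "order-1001", "source": "browser"},
    {"event_name": "Purchase", "event_id": "order-1001", "source": "server"},
]
without_ids = [
    {"event_name": "Purchase", "source": "browser"},
    {"event_name": "Purchase", "source": "server"},
]

print(len(deduplicate(with_ids)))     # 1: the server copy merges into the browser copy
print(len(deduplicate(without_ids)))  # 2: the same purchase is counted twice
```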

The side effects appear later during scaling:

- Reported conversion volume becomes inflated. The platform believes more conversions are occurring than reality supports.
- Bid models become overly aggressive. Because the campaign appears profitable, the system increases bids and expands delivery.
- Real CPA rises during scale attempts. The algorithm begins entering auctions that the campaign cannot actually sustain.
- Learning phases repeat unexpectedly. The platform recalibrates once it detects inconsistencies between predicted and observed results.

A common diagnostic sign inside Ads Manager is this pattern:

- Strong results at low spend.
- Rapid CPA increases once budgets double.
- Conversion counts fluctuate unpredictably across days.

When event duplication exists, the campaign is effectively optimizing against fictional performance data.
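A quick calculation shows why inflated counts mislead scaling decisions. Suppose, hypothetically, that 40% of real conversions are counted twice. Reported CPA can then sit comfortably under target while real CPA is well above it. The spend, conversion count, and target below are illustrative values.

```python
def reported_conversions(real: int, duplicated_share: float) -> float:
    """Conversions counted when a share of real conversions arrives twice without a shared ID."""
    return real * (1 + duplicated_share)


spend = 3000.0        # hypothetical spend
real = 50             # conversions that actually happened (hypothetical)
target_cpa = 50.0     # hypothetical CPA target

reported = reported_conversions(real, duplicated_share=0.4)
reported_cpa = spend / reported
real_cpa = spend / real

print(f"reported CPA {reported_cpa:.2f}, real CPA {real_cpa:.2f}, target {target_cpa:.2f}")
```

The platform sees a CPA of about 42.86 against a 50.00 target and keeps pushing delivery, even though each real conversion costs 60.00.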

Modern tracking setups typically combine pixel events with server signals sent through Meta’s Conversions API. If that infrastructure is configured incorrectly, duplication or signal loss becomes likely. This is why many advertisers move toward server-side implementations such as those explained in Server-Side Tracking for Facebook Ads: A Beginner’s Guide.
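For orientation, here is roughly what a server-side purchase event looks like before it is sent to the Conversions API. The field names (`event_name`, `event_time`, `event_id`, `action_source`, `user_data.em`, `custom_data`) are based on Meta's documented parameters. Treat them as assumptions and verify them against the current reference before relying on this sketch. The HTTP request itself is omitted, and the `order-<id>` event ID scheme is hypothetical. The browser pixel would need to send the same value, for example `fbq('track', 'Purchase', {value: 59, currency: 'USD'}, {eventID: 'order-1001'})`, so that the two copies can be merged.

```python
import hashlib
import json
import time
from typing import Optional


def sha256_normalized(value: str) -> str:
    """Trim and lowercase an identifier, then return its SHA-256 hex digest."""
    return hashlib.sha256(value.strip().lower().encode("utf-8")).hexdigest()


def build_purchase_event(order_id: str, email: str, value: float, currency: str,
                         event_time: Optional[int] = None) -> dict:
    """Build one server-side Purchase event shaped like a Conversions API payload entry."""
    return {
        "event_name": "Purchase",
        "event_time": event_time if event_time is not None else int(time.time()),
        # Hypothetical ID scheme; the browser pixel must send the same value as its eventID.
        "event_id": f"order-{order_id}",
        "action_source": "website",
        "user_data": {"em": [sha256_normalized(email)]},
        "custom_data": {"currency": currency, "value": value},
    }


payload = {"data": [build_purchase_event("1001", " Jane@Example.com ", 59.0, "USD", 1700000000)]}
print(json.dumps(payload, indent=2))
```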

Delayed Events Break the Optimization Feedback Loop

Ad delivery models rely heavily on timing signals.

If purchase events arrive hours after the conversion occurs, the system receives feedback too late to influence upcoming auctions.

This often happens with:

- Server events queued incorrectly.
- Third-party tracking tools introducing processing delays.
- Offline conversion uploads with inconsistent schedules.

From the platform’s perspective, the campaign looks like it is converting slowly or inconsistently.

Consider a typical feedback timeline:

| Event Stage | Ideal Timing | Delayed Scenario |
|---|---|---|
| Ad click | Immediate | Immediate |
| Site visit | Immediate | Immediate |
| Purchase event received | Within minutes | Several hours later |
| Optimization update | Same learning cycle | Next learning cycle |

When events arrive late, the model cannot correctly associate recent impressions with successful outcomes.

The consequences appear in several campaign metrics:

- Learning phases last longer than expected.
- Daily spend fluctuates heavily.
- Conversion volume appears uneven across days.

In many cases, this behavior is tied to attribution delays rather than actual performance changes. Understanding how attribution timing works — as explained in Meta Ads Attribution: What to Know About Windows, Delays, and Data Accuracy — helps distinguish tracking lag from real campaign problems.
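The last row of the timeline table can be illustrated with a toy model that treats optimization updates as fixed-length cycles. This is a simplification, because platforms do not publish how often their models refresh. The six-hour cycle, the timestamps, and the helper names are all hypothetical.

```python
from datetime import datetime, timedelta


def cycle_of(ts: datetime, start: datetime, length: timedelta) -> int:
    """Index of the fixed-length update cycle that contains ts."""
    return int((ts - start) / length)


def share_missing_cycle(events: list[tuple[datetime, datetime]], start: datetime, length: timedelta) -> float:
    """Share of (occurred, received) pairs whose report lands in a later cycle than the conversion."""
    missed = sum(
        1 for occurred, received in events
        if cycle_of(received, start, length) > cycle_of(occurred, start, length)
    )
    return missed / len(events)


day = datetime(2024, 5, 1)
cycle = timedelta(hours=6)  # hypothetical update cadence
events = [
    (day.replace(hour=9, minute=10), day.replace(hour=9, minute=15)),
    (day.replace(hour=10), day.replace(hour=10, minute=5)),
    (day.replace(hour=11, minute=30), day.replace(hour=16, minute=30)),  # five-hour delay
    (day.replace(hour=13), day.replace(hour=13, minute=4)),
]
print(f"{share_missing_cycle(events, day, cycle):.0%} of conversions missed their cycle")
```

Only the five-hour delay crosses a cycle boundary, so one conversion in four reaches the model a full update late.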

Low Event Volume Weakens the Model’s Predictive Accuracy

Platforms require a minimum volume of conversion events to train their optimization models effectively.

Meta, for example, says an ad set typically needs around 50 optimization events within a 7-day period to exit the learning phase. When event tracking reduces the recorded volume below that threshold, the campaign enters a weaker learning state.

This produces several delivery patterns that operators often misdiagnose.

The campaign resets learning repeatedly

When event volume drops too low, the model cannot stabilize. Each structural change — budget adjustment, creative swap, audience expansion — forces another learning reset.

The result is unstable performance across days.
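Tracking loss alone can push an ad set under Meta's roughly 50-event weekly guideline. To show this, the sketch below applies the 65% coverage from the earlier example to hypothetical weekly purchase counts. The ad set names and counts are invented, and the threshold is a rule of thumb rather than a hard limit.

```python
WEEKLY_GUIDELINE = 50  # Meta's rough per-ad-set guideline cited earlier in this section


def below_guideline(weekly_events: dict[str, int], threshold: int = WEEKLY_GUIDELINE) -> list[str]:
    """Ad sets whose weekly optimization events fall under the threshold."""
    return sorted(name for name, count in weekly_events.items() if count < threshold)


real_weekly = {"prospecting": 70, "retargeting": 120}   # hypothetical real purchases per week
capture_rate = 0.65                                     # the 65% coverage from the earlier example

tracked_weekly = {name: int(count * capture_rate) for name, count in real_weekly.items()}

print(below_guideline(real_weekly))     # []
print(below_guideline(tracked_weekly))  # ['prospecting']  (70 real -> 45 tracked)
```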

Delivery concentrates on a narrow audience pocket

Without enough signals, the algorithm becomes conservative. Instead of exploring broadly, it prioritizes a small group of users that previously converted.

This often causes:

- Rising frequency.
- Audience fatigue.
- Rising CPMs.

Scaling stalls earlier than expected

When the model lacks confidence in its predictions, it becomes reluctant to expand delivery. Budgets increase, but spend does not grow proportionally.

From the outside, the campaign appears “capped,” even though the market still contains available buyers.

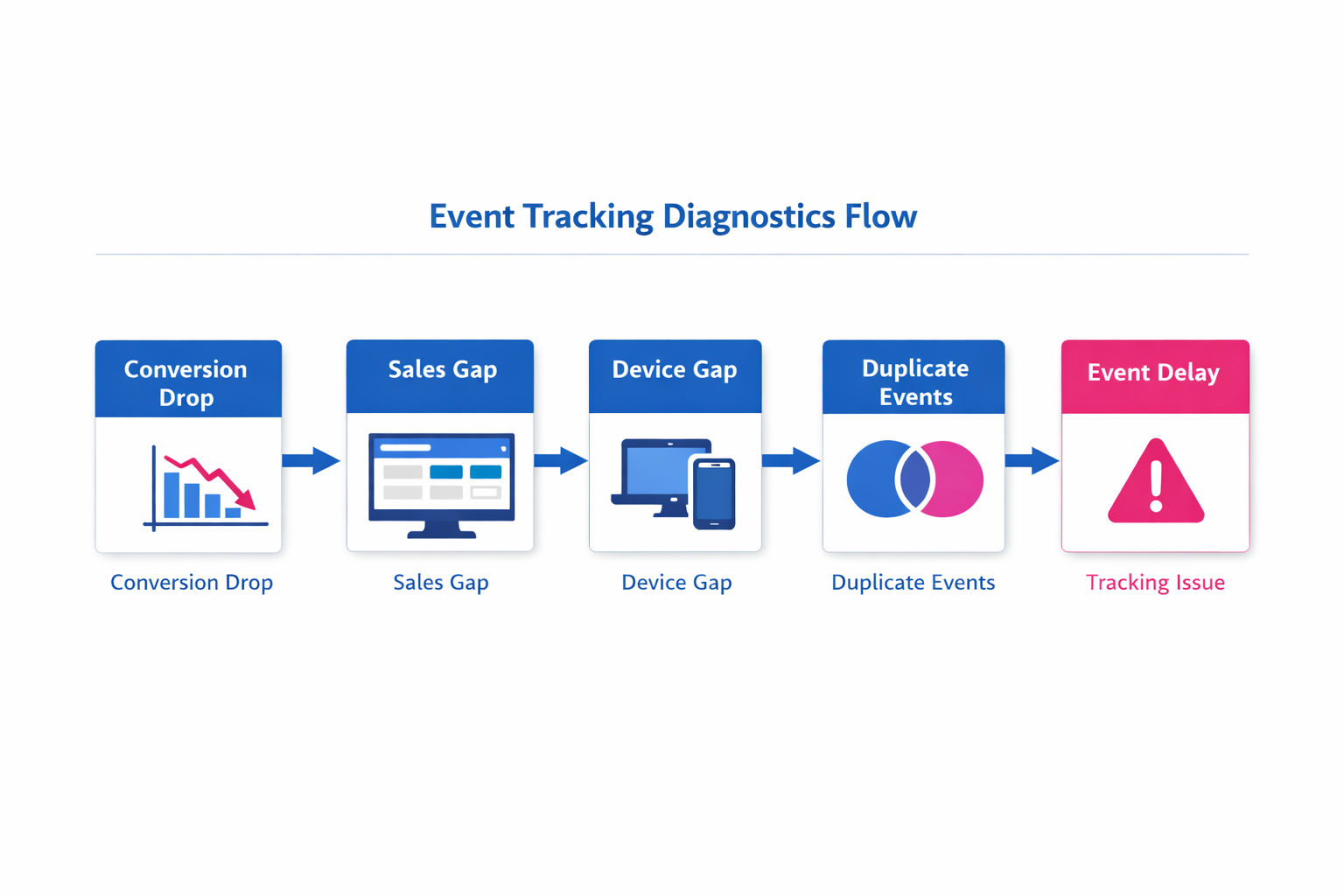

Diagnosing Event Tracking Problems in Active Campaigns

Tracking issues rarely appear as explicit platform errors. Most of the time they surface indirectly through campaign behavior.

A few diagnostic checks reveal many hidden problems.

Compare platform conversions to backend sales

Export a daily report from the ad platform and compare it against your store or CRM data.

Look for patterns such as:

- A consistent gap between reported and actual conversions.
- Sudden drops in tracked events after site changes.
- Device-specific discrepancies in recorded purchases.

If real sales remain stable while tracked conversions fall, the problem likely sits inside the tracking layer rather than the campaign.
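Here is a minimal way to run this comparison in Python, assuming you have already exported both sources as per-day counts keyed by date. The function name and the 0.8 and 1.0 ratio bounds are hypothetical. Tune the bounds to the gap your account normally shows, since platform-reported conversions also depend on attribution settings.

```python
def compare_daily(platform: dict[str, int], backend: dict[str, int],
                  min_ratio: float = 0.8, max_ratio: float = 1.0) -> list[tuple[str, int, int, str]]:
    """Label each day by its tracked-to-real ratio.

    'under' suggests signal loss, 'over' suggests duplication, 'ok' is within bounds.
    """
    rows = []
    for day in sorted(backend):
        actual = backend[day]
        tracked = platform.get(day, 0)
        if actual == 0:
            status = "over" if tracked else "ok"
        elif tracked / actual < min_ratio:
            status = "under"
        elif tracked / actual > max_ratio:
            status = "over"
        else:
            status = "ok"
        rows.append((day, tracked, actual, status))
    return rows


backend = {"2024-05-01": 100, "2024-05-02": 98, "2024-05-03": 101, "2024-05-04": 97}   # store orders
platform = {"2024-05-01": 95, "2024-05-02": 93, "2024-05-03": 60, "2024-05-04": 118}  # tracked purchases

for day, tracked, actual, status in compare_daily(platform, backend):
    print(f"{day}: tracked {tracked} / real {actual} -> {status}")
```

In this made-up data, the drop on 2024-05-03 looks like a tracking break after a site change. The jump on 2024-05-04 is worth checking for duplicate events.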

Review event distribution across devices and browsers

Inside the event manager or analytics tool, examine how conversions distribute across environments.

Warning signals include:

- Unusually low events from Safari or iOS devices.
- A large imbalance between desktop and mobile conversions.
- Sudden shifts in browser share after tracking changes.

These patterns often indicate that privacy restrictions or script conflicts are blocking events.
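The same ratio can be computed for each browser or device segment and compared against the overall ratio. This sketch assumes both exports use identical segment labels. The labels, counts, and 15-point tolerance are illustrative.

```python
def coverage_by_segment(platform: dict[str, int], backend: dict[str, int]) -> dict[str, float]:
    """Tracked-to-real ratio per segment (segments with no real orders are skipped)."""
    return {seg: platform.get(seg, 0) / n for seg, n in backend.items() if n}


def lagging_segments(platform: dict[str, int], backend: dict[str, int], gap: float = 0.15) -> list[str]:
    """Segments whose coverage sits more than `gap` below the overall coverage."""
    overall = sum(platform.get(seg, 0) for seg in backend) / sum(backend.values())
    return sorted(seg for seg, cov in coverage_by_segment(platform, backend).items() if cov < overall - gap)


backend = {"chrome_desktop": 400, "safari_ios": 300, "chrome_android": 300}   # hypothetical orders
platform = {"chrome_desktop": 380, "safari_ios": 150, "chrome_android": 270}  # hypothetical tracked
print(lagging_segments(platform, backend))  # ['safari_ios']
```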

Check for duplicate event IDs

Platforms typically provide diagnostics for deduplication errors.

Signs of duplication include:

- Conversion counts that exceed real sales.
- Event manager warnings about missing deduplication parameters.
- Large discrepancies between pixel events and server events.
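If you can export raw browser and server events with their IDs, a few counts summarize deduplication health. The sketch below rests on three assumptions: every event is the same type (for example, Purchase), each source sends a given ID at most once, and the platform merges only events whose IDs match. The function name and data shapes are hypothetical.

```python
def dedup_diagnostics(browser: list[dict], server: list[dict], real_sales: int) -> dict:
    """Summarize deduplication health for one event type."""
    missing_ids = sum(1 for e in browser + server if not e.get("event_id"))
    browser_ids = {e["event_id"] for e in browser if e.get("event_id")}
    server_ids = {e["event_id"] for e in server if e.get("event_id")}
    # IDs seen in both sources merge into one conversion; events without an ID never merge.
    counted = len(browser_ids | server_ids) + missing_ids
    return {
        "missing_ids": missing_ids,
        "matched_pairs": len(browser_ids & server_ids),
        "counted": counted,
        "counted_per_sale": counted / real_sales,
    }


browser = [{"event_id": "order-1"}, {"event_id": "order-2"}, {}]   # order-3 fired without an ID
server = [{"event_id": "order-1"}, {"event_id": "order-2"}, {"event_id": "order-3"}]
print(dedup_diagnostics(browser, server, real_sales=3))
```

A single missing ID is enough to turn three real sales into four counted conversions. A `counted_per_sale` value persistently above 1 is the numeric version of the first warning sign listed above.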

Examine event timing

Many event debugging tools show the delay between the user action and the recorded event.

Look for:

- Purchases appearing several hours after they occur.
- Large batches of conversions uploading simultaneously.
- Inconsistent event timestamps across sources.

These timing gaps can distort attribution and reporting — especially if your campaigns rely on platform attribution models like those explained in How to Use the Facebook Attribution Tool to Optimize Your Facebook Ad Performance.
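Given an event log that records both the action time and the receipt time, delays and batch uploads are easy to surface. The field names `occurred_at` and `received_at`, the 20-events-per-minute batch threshold, and the sample data are assumptions for illustration. Real debugging tools expose this information in their own formats.

```python
from collections import Counter
from datetime import datetime, timedelta
from statistics import median


def delays_minutes(events: list[dict]) -> list[float]:
    """Minutes between the user action and the moment the event was received."""
    return [(e["received_at"] - e["occurred_at"]).total_seconds() / 60 for e in events]


def batch_minutes(events: list[dict], min_batch: int = 20) -> list[datetime]:
    """Minutes in which at least min_batch events arrived together, a hint of batched uploads."""
    per_minute = Counter(e["received_at"].replace(second=0, microsecond=0) for e in events)
    return sorted(minute for minute, count in per_minute.items() if count >= min_batch)


start = datetime(2024, 5, 1, 9, 0)
# Hypothetical log: 10 events sent within two minutes, 25 events uploaded together at 23:00.
realtime = [{"occurred_at": start + timedelta(minutes=30 * i),
             "received_at": start + timedelta(minutes=30 * i + 2)} for i in range(10)]
batched = [{"occurred_at": start + timedelta(minutes=20 * i),
            "received_at": datetime(2024, 5, 1, 23, 0)} for i in range(25)]
events = realtime + batched

delays = delays_minutes(events)
print(f"median delay {median(delays):.0f} min, max {max(delays):.0f} min")
print(batch_minutes(events))
```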

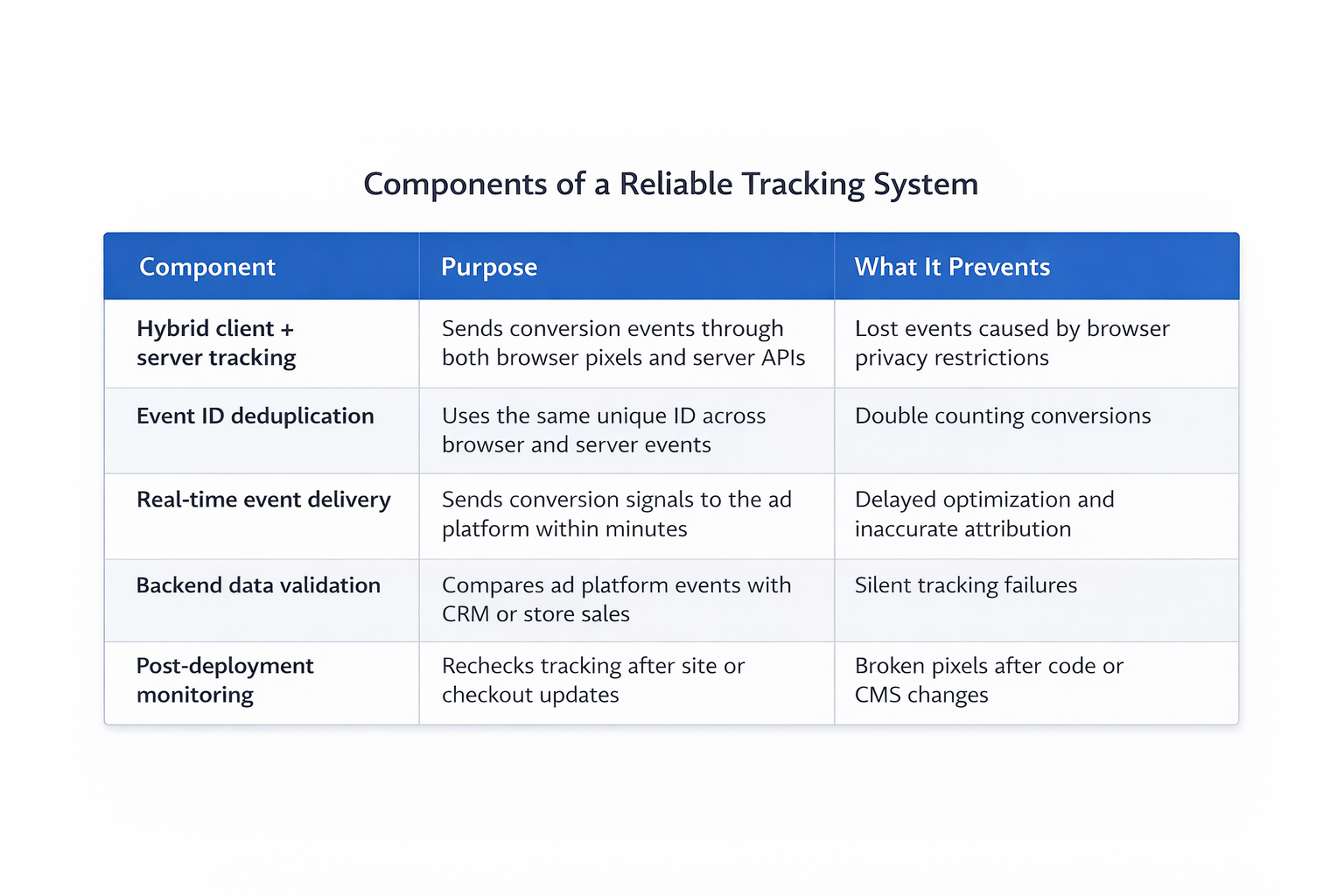

Structuring a More Reliable Tracking System

Preventing these issues requires treating tracking as operational infrastructure rather than a one-time setup.

A stable system usually includes several components working together.

- Hybrid client and server tracking. Combining browser pixels with server-side events improves resilience when privacy restrictions block client scripts.
- Consistent event identifiers for deduplication. Every purchase or lead event should carry a unique ID shared between browser and server sources.
- Real-time event delivery. Conversion signals should reach the ad platform within minutes rather than hours.
- Periodic validation against backend data. Regular comparisons between ad platform events and internal sales data reveal discrepancies early.
- Monitoring after site updates. Tracking frequently breaks after checkout changes, CMS updates, or script modifications. Post-deployment verification prevents silent failures.

These steps do not directly increase campaign performance. They ensure the optimization system receives an accurate picture of reality.

Without that accuracy, every other improvement — creative testing, targeting changes, budget adjustments — rests on unstable data.
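The components listed above can be rolled into one post-deployment health check. It takes a snapshot of tracking after a site release and compares it against expected values. Everything here is a hypothetical sketch: the snapshot fields, the thresholds, and the idea of feeding it from your own exports are assumptions, not a platform feature.

```python
from dataclasses import dataclass


@dataclass
class TrackingSnapshot:
    backend_orders: int          # orders in your store or CRM for the window
    tracked_purchases: int       # platform purchase events after deduplication
    events_with_id: int          # raw events that carried an event ID
    total_events: int            # all raw purchase events received
    median_delay_minutes: float  # action-to-receipt delay


def health_issues(s: TrackingSnapshot, min_coverage: float = 0.85, max_coverage: float = 1.05,
                  min_id_share: float = 0.98, max_delay: float = 30.0) -> list[str]:
    """Return human-readable problems; an empty list means every check passed."""
    issues = []
    coverage = s.tracked_purchases / s.backend_orders
    if coverage < min_coverage:
        issues.append(f"coverage {coverage:.0%} is below {min_coverage:.0%}: possible signal loss")
    elif coverage > max_coverage:
        issues.append(f"coverage {coverage:.0%} is above {max_coverage:.0%}: possible duplication")
    id_share = s.events_with_id / s.total_events
    if id_share < min_id_share:
        issues.append(f"only {id_share:.0%} of events carry an event ID")
    if s.median_delay_minutes > max_delay:
        issues.append(f"median delay {s.median_delay_minutes:.0f} min exceeds {max_delay:.0f} min")
    return issues


before = TrackingSnapshot(backend_orders=200, tracked_purchases=188, events_with_id=392,
                          total_events=395, median_delay_minutes=4.0)
after = TrackingSnapshot(backend_orders=210, tracked_purchases=120, events_with_id=230,
                         total_events=260, median_delay_minutes=6.0)
print(health_issues(before))  # []
print(health_issues(after))
```

Running the same check before and after each release turns a silent tracking break into an explicit failure.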

Why Tracking Quality Determines Scaling Potential

Campaign optimization is fundamentally a feedback system.

Ads generate user actions, those actions create event signals, and the platform uses those signals to refine delivery in future auctions.

When event tracking weakens that feedback loop, the platform continues spending but loses the ability to learn effectively.

This explains why poorly tracked campaigns often show the same symptoms:

- Gradual CPA inflation.
- Inconsistent learning cycles.
- Difficulty scaling beyond early spend levels.

The underlying problem is not creative or targeting. It is the data guiding the optimization engine.

Fixing event tracking does not produce an immediate spike in results. What it restores is the platform’s ability to correctly identify valuable users.

Once the algorithm sees accurate signals again, the campaign can finally optimize toward the outcomes you actually care about.