Testing new audience segments inside Meta is deceptively expensive.

What looks like a simple expansion often turns into unstable delivery, noisy signals, and misleading early results.

The issue is not just which audiences you test — it’s how you validate them.

If your validation process is slow or structurally flawed, you end up scaling segments that never had real potential — or killing ones that needed a different setup to prove themselves.

This article breaks down how to validate new audience segments quickly, without sacrificing signal quality or making premature decisions.

Why Most Audience Tests Produce Unreliable Results

A typical scenario: you launch a new audience, it spends for 2–3 days, CPA spikes, then drops, then spikes again. Nothing looks stable enough to trust.

That instability is not a reflection of audience quality. It’s a reflection of how Meta’s system behaves under low signal conditions.

Three things are happening under the surface:

- Low event density distorts performance: If an ad set produces only 3–5 conversions, one extra conversion at the same spend lowers CPA by roughly 17–25%, and one fewer raises it by 25–50%. That’s not improvement; it’s variance.
- Exploration phase dominates delivery: The system tests multiple sub-clusters inside the audience. CPM and CTR fluctuate because the algorithm is still probing where performance exists.
- Feedback loops are incomplete: Until the system sees repeated conversion patterns, it cannot prioritize the right users in auctions.
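To make the variance point concrete, here is a minimal arithmetic sketch of how far a single extra conversion moves CPA when spend is held fixed. The function names are illustrative, not part of any Meta tooling.

```python
def cpa(spend: float, conversions: int) -> float:
    """Cost per acquisition: total spend divided by conversions."""
    if conversions <= 0:
        raise ValueError("conversions must be positive")
    return spend / conversions


def cpa_drop_from_one_more(conversions: int) -> float:
    """Relative CPA drop if one more conversion arrives at the same spend.

    spend/n -> spend/(n+1) is a drop of 1/(n+1), whatever the spend is.
    """
    if conversions <= 0:
        raise ValueError("conversions must be positive")
    return 1 / (conversions + 1)


for n in (1, 3, 5, 20):
    drop = cpa_drop_from_one_more(n)
    print(f"{n:>2} conversions: one more lowers CPA by {drop:.0%}")
```

At 20 conversions the same single event moves CPA by about 5%, which is why readings at that volume are far easier to trust.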

This is why early results often feel random.

If you want a deeper breakdown of early-stage evaluation, read What Facebook metrics really matter when testing new audiences.

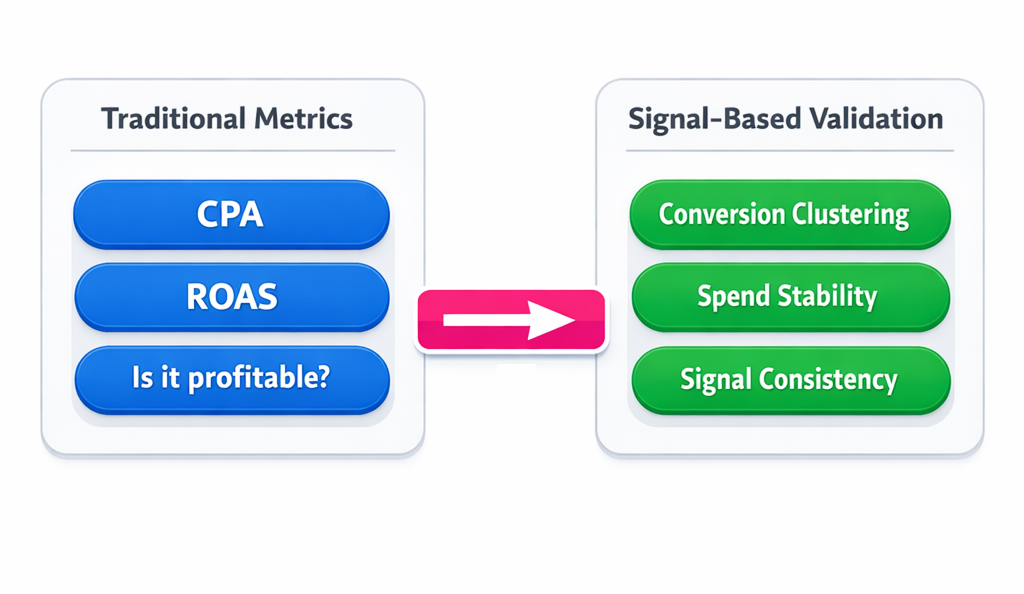

The Core Principle: Validate Signals, Not Outcomes

Fast validation requires a different evaluation model.

Instead of asking: “Is this audience profitable yet?”

Ask: “Is this audience producing stable optimization signals?”

Profitability is downstream. Signal quality determines whether the system can ever optimize.

Strong audiences show:

- Conversion clustering: You’ll notice conversions happening in bursts, in the same time windows and with similar user behavior patterns.
- Smooth spend distribution: Budget is allocated consistently throughout the day, not in isolated spikes.
- Controlled variability: CPA may be high, but it doesn’t swing wildly without reason.
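One possible way to put numbers on "smooth spend" and "controlled variability" is sketched below, assuming you have exported hourly spend and daily CPA from Ads Manager into plain lists. The two metrics and the sample figures are illustrative assumptions, not measures Meta reports.

```python
from statistics import mean, pstdev


def coefficient_of_variation(values: list[float]) -> float:
    """Standard deviation relative to the mean; lower means steadier."""
    avg = mean(values)
    if avg == 0:
        raise ValueError("mean is zero, variation is undefined")
    return pstdev(values) / avg


def peak_hour_share(hourly_spend: list[float]) -> float:
    """Share of the day's spend that landed in the single busiest hour."""
    total = sum(hourly_spend)
    return max(hourly_spend) / total if total else 0.0


# Made-up daily CPA series for two ad sets (illustration only)
steady_cpa = [42.0, 38.0, 45.0, 40.0]
erratic_cpa = [15.0, 90.0, 22.0, 110.0]

print(f"steady CV:  {coefficient_of_variation(steady_cpa):.2f}")
print(f"erratic CV: {coefficient_of_variation(erratic_cpa):.2f}")
```

A high CPA with a low coefficient of variation matches the "controlled variability" case: expensive, but predictable enough for the system to optimize.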

This aligns with how Meta evaluates users internally — increasingly based on behavior signals rather than static segments.

For a deeper conceptual shift, see Signals, Not Segments: The New Way Meta Measures Users.

Structuring Fast Validation Tests

Speed doesn’t come from rushing decisions.

It comes from removing noise.

1. Keep Creative Constant

If you change audience and creative at the same time, you lose interpretability.

Lock variables:

- Same copy: keeps message intent stable.
- Same visual format: avoids engagement bias.
- Same landing page: ensures conversion consistency.

Now, performance differences reflect audience behavior, not creative variance.

2. Concentrate Budget for Signal Density

Low budgets delay validation more than they save money.

Instead of testing 6 audiences with €20/day each, test 2 audiences with €60/day each. Total spend is the same €120/day, but each ad set collects three times the data.

Aim for:

- 15–25 conversion events within 3–5 days
- Avoid datasets with fewer than 5 conversions
- Reduce parallel tests if budget is limited
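The budget trade-off above can be worked through with a rough planning sketch. The expected CPA of €20 is an assumption chosen for illustration; substitute your own account's figure.

```python
import math


def expected_conversions(daily_budget: float, days: int, expected_cpa: float) -> float:
    """Rough conversion count for one ad set over the test window."""
    return daily_budget * days / expected_cpa


def max_parallel_audiences(total_daily_budget: float, days: int,
                           expected_cpa: float, target_conversions: int = 15) -> int:
    """How many audiences can each reach the target within the window."""
    # Daily budget one audience needs to hit the conversion target
    per_audience_daily = target_conversions * expected_cpa / days
    return math.floor(total_daily_budget / per_audience_daily)


EXPECTED_CPA = 20.0  # assumption: EUR per conversion, not from the article

print(expected_conversions(20, 5, EXPECTED_CPA))      # 6 audiences at EUR 20/day
print(expected_conversions(60, 5, EXPECTED_CPA))      # 2 audiences at EUR 60/day
print(max_parallel_audiences(120, 5, EXPECTED_CPA))   # audiences EUR 120/day supports
```

Under that assumed CPA, €20/day yields about 5 conversions in 5 days, which sits right at the "too thin to read" floor, while €60/day reaches the 15-conversion target, so €120/day supports 2 parallel tests.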

If you’re working with constrained budgets, this becomes critical; see Campaign optimization for Facebook Ads with small daily budgets.

3. Reduce Structural Fragmentation

Too many ad sets = diluted signals.

Common mistake:

- 5 similar audiences
- each in separate ad sets
- each getting minimal data

Better approach:

- Combine related audiences
- Use exclusions instead of splitting
- Let the algorithm find sub-patterns internally

Over-segmentation slows learning and creates false negatives.

This is closely tied to audience overlap issues; see The role of audience overlap in Facebook Ads performance.

Diagnostic Signals That Indicate a Valid Audience

You don’t need weeks to evaluate an audience.

You need the right signals in the first few days.

Focus on what you can actually observe in Ads Manager:

- Spend consistency: If the ad set spends 80–100% of its daily budget consistently, the system is confident entering auctions.
- Repeated conversion timing: Look for patterns such as the same hours, the same days, and similar user paths.
- CTR stability vs account baseline: Large swings often indicate a mismatch between audience and message.
- CPM behavior: High and unstable CPM usually signals poor relevance or weak auction competitiveness.
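Two of these checks are easy to script once daily figures are exported from Ads Manager. The sketch below assumes plain lists of daily spend and a known account baseline CTR; the 80% floor comes from the spend range above, while the helper names and sample numbers are hypothetical.

```python
def budget_utilization(daily_spend: list[float], daily_budget: float) -> list[float]:
    """Fraction of the daily budget actually spent on each day."""
    if daily_budget <= 0:
        raise ValueError("daily_budget must be positive")
    return [spend / daily_budget for spend in daily_spend]


def is_spend_consistent(daily_spend: list[float], daily_budget: float,
                        floor: float = 0.8) -> bool:
    """True if every day used at least `floor` of the budget."""
    return all(r >= floor for r in budget_utilization(daily_spend, daily_budget))


def ctr_deviation(ad_set_ctr: float, baseline_ctr: float) -> float:
    """Relative gap between the ad set's CTR and the account baseline."""
    return (ad_set_ctr - baseline_ctr) / baseline_ctr


# Made-up three-day samples against a EUR 60 daily budget
print(is_spend_consistent([55.0, 58.0, 60.0], 60.0))  # steady delivery
print(is_spend_consistent([20.0, 60.0, 35.0], 60.0))  # stalls on two days
print(f"{ctr_deviation(0.009, 0.012):+.0%} vs baseline CTR")
```

Run the CTR comparison per day rather than on the test average if you want to see swings, since an average can hide exactly the instability you are looking for.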

These are early indicators of scalability — not just performance.

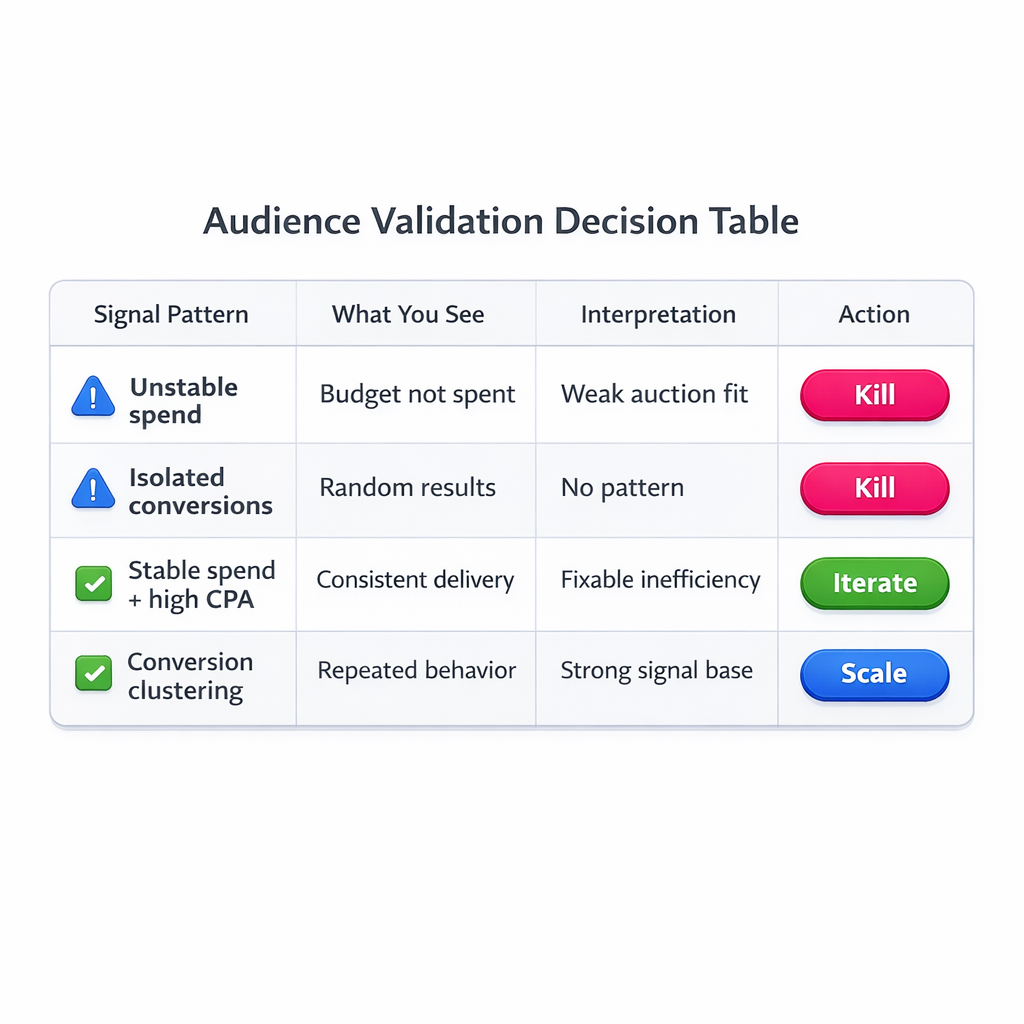

When to Kill vs When to Iterate

Not all underperformance is equal.

You need to separate structural failure from early inefficiency.

Kill the audience when:

- Spend frequently stalls or underdelivers.
- Conversions are isolated with no pattern.
- CPM is consistently inflated with no stabilization.

This means the system cannot find enough viable users.

Iterate when:

- Conversions are happening but CPA is high.
- Spend is stable but efficiency fluctuates.
- Engagement metrics are within normal ranges.

This means the audience is viable — just not optimized yet.

In these cases, adjust conditions instead of abandoning the segment:

- Switch optimization event temporarily.
- Adjust bidding constraints.
- Refine exclusions to remove low-quality clusters.
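The kill-versus-iterate criteria can be written down as an explicit rule so that every test is judged the same way. This is a sketch under stated assumptions: the field names are hypothetical, each flag is something you assess by eye in Ads Manager, and the "two or more failure signs" cutoff is an illustrative choice rather than something the criteria above specify.

```python
from dataclasses import dataclass


@dataclass
class AudienceSignals:
    spend_stalls: bool          # spend frequently stalls or underdelivers
    conversions: int            # conversions recorded so far
    conversions_isolated: bool  # no repeating pattern in timing or behavior
    cpm_inflated: bool          # CPM stays high with no stabilization
    cpa_above_target: bool      # efficiency is not there yet


def decide(s: AudienceSignals) -> str:
    """Return 'kill', 'iterate', 'confirm', or 'watch' for one audience."""
    failure_signs = sum([s.spend_stalls, s.conversions_isolated, s.cpm_inflated])
    if failure_signs >= 2:  # assumption: two signs count as structural failure
        return "kill"
    if s.conversions > 0 and not s.spend_stalls:
        # Viable delivery: tune it if CPA is high, otherwise wait for repeats
        return "iterate" if s.cpa_above_target else "confirm"
    return "watch"


stalled = AudienceSignals(True, 2, True, False, True)
viable = AudienceSignals(False, 8, False, False, True)
print(decide(stalled), decide(viable))
```

Writing the rule down matters more than the exact thresholds: it stops a single bad CPA day from overriding delivery signals that point the other way.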

Speed Comes From Decision Clarity

Most delays in validation don’t come from the algorithm.

They come from unclear evaluation criteria.

If you rely only on CPA, you need:

- more time
- more data
- more spend

If you evaluate:

- signal stability,
- delivery consistency,
- and behavioral repetition,

you can make confident decisions within days.

A Practical Validation Workflow

Use this as a repeatable process:

- Step 1: Launch a controlled test. Standardized creatives, structured budget, minimal segmentation.
- Step 2: Observe the first 72 hours. Ignore CPA spikes. Watch spend patterns and conversion clustering.
- Step 3: Evaluate signal quality. Focus on stability, not efficiency.
- Step 4: Decide the path. Kill unstable audiences. Iterate viable ones.
- Step 5: Scale only after confirmation. Increase budget once patterns repeat.

Final Takeaway

Audience validation is not about waiting for results — it’s about filtering signal quality.

Strong segments stabilize early. Weak ones remain inconsistent no matter how long they run.

Once you start evaluating how the system behaves, not just what it reports, validation becomes faster, clearer, and far more reliable.