If you’re running both the Meta Pixel and Conversions API (CAPI), you’re strengthening your tracking architecture. Server-side tracking improves signal resilience, reduces browser dependency, and increases match quality.

But when deduplication is misconfigured, performance data becomes structurally unreliable. You can see inflated conversions, distorted ROAS, unstable learning phases, or unexplained volatility. All of that can stem from a simple synchronization failure.

This is not a reporting nuance. It directly affects how Meta’s optimization engine interprets performance.

If you’re serious about audience performance and signal quality — especially when building advanced Custom Audiences — this foundation matters. For a broader structural view of audience mechanics, see Facebook Custom Audiences Guide: Everything You Need to Know.

Let’s break down what actually happens, why errors occur, and how to fix them properly.

Why Deduplication Exists in the First Place

When you send events from both of the following sources, you are often sending two representations of the same real-world action:

- The Meta Pixel (browser-side tracking).
- The Conversions API (server-side tracking).

For example, a Purchase event may fire in the browser when the thank-you page loads and simultaneously be sent from your backend when the transaction is confirmed.

Meta must decide whether these two signals represent one conversion or two. That decision depends on deduplication.

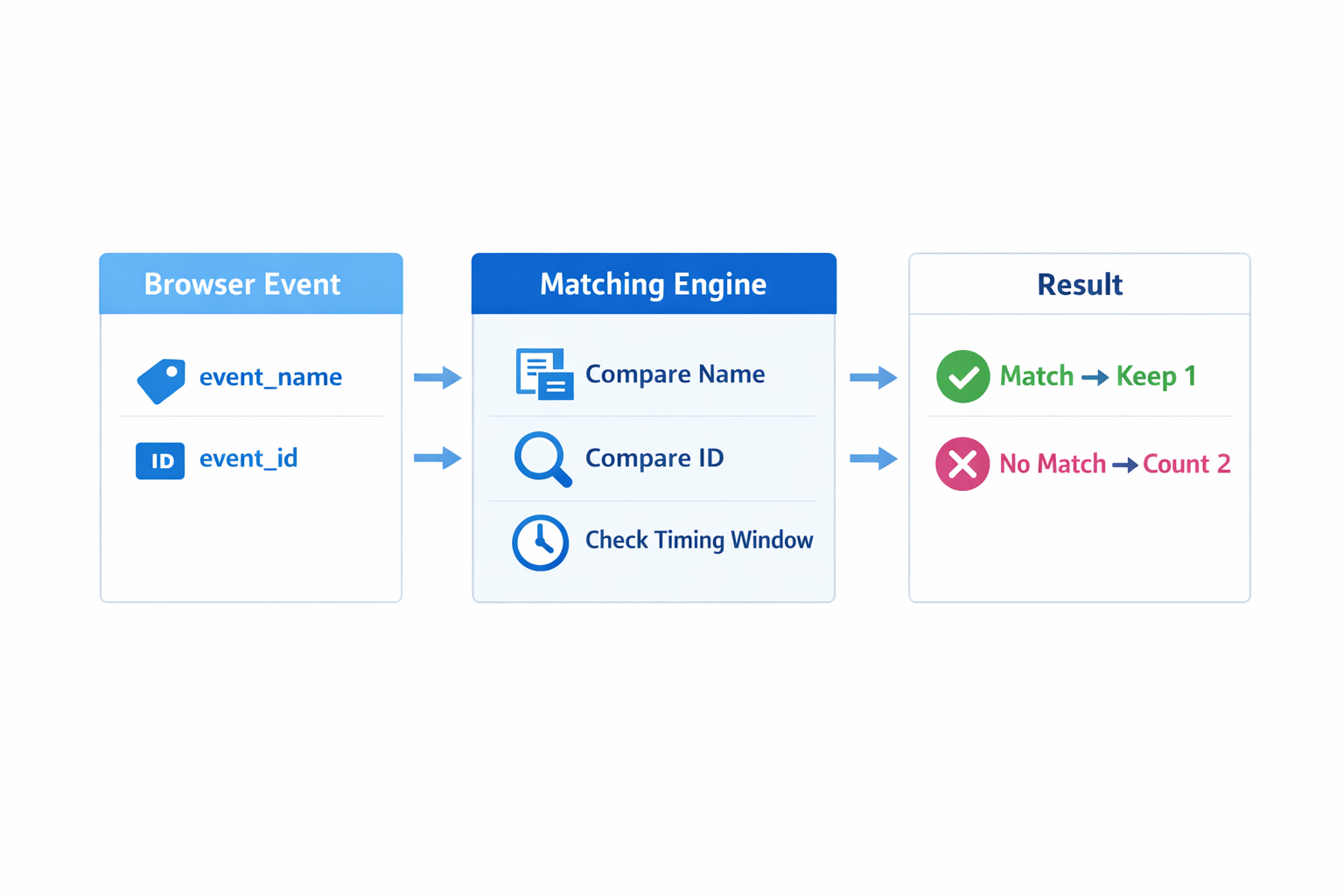

The matching relies primarily on:

- event_name
- event_id

If both fields are identical between browser and server events, Meta keeps one and discards the duplicate. If they do not match, Meta treats them as separate conversions.
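The matching rule can be pictured with a small model. This is only a sketch of the behavior described above, not Meta's actual implementation; the `Event` class, the `source` label, and the sample IDs are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple


@dataclass(frozen=True)
class Event:
    event_name: str
    event_id: Optional[str]
    source: str  # "browser" or "server"; a label used only in this sketch


def deduplicate(events: List[Event]) -> List[Event]:
    """Keep the first event seen for each (event_name, event_id) pair."""
    seen: Set[Tuple[str, str]] = set()
    kept: List[Event] = []
    for event in events:
        if event.event_id is None:
            # Without an ID there is nothing to match on, so the event is kept.
            kept.append(event)
            continue
        key = (event.event_name, event.event_id)
        if key not in seen:
            seen.add(key)
            kept.append(event)
    return kept


events = [
    Event("Purchase", "order-1001", "browser"),
    Event("Purchase", "order-1001", "server"),  # same pair: merged
    Event("Purchase", "order-1002", "browser"),
    Event("Purchase", "srv-9f3a", "server"),    # different ID: counted again
]
print(len(deduplicate(events)))  # 3
```

Two real purchases end up counted as three, because the second server event carries an ID the browser never sent.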

At scale, that changes your optimization data set. It also affects how reliably you can build high-quality seed audiences for retargeting and lookalikes.

What Actually Goes Wrong

Most deduplication failures fall into four structural categories.

1. Event ID Mismatch

This is the most frequent and most damaging issue.

The browser event may send one event_id, while the server event sends:

- A different randomly generated ID. Meta sees two independent events. Even if they occur at the same time with the same user parameters, they cannot be merged without a shared identifier.
- A static ID reused across multiple conversions. Meta may incorrectly merge unrelated events or discard them because the ID does not represent a unique transaction.
- No event_id at all. Without an ID, deduplication cannot occur. Meta has no deterministic way to confirm the events are identical.

Fix: generate the event_id once in the browser at the moment the event fires. Store it in the data layer or request payload and forward that exact value to your server before the CAPI call is made. The server must reuse that same ID, not create a new one.
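On the server side, the fix might look like the sketch below. It assumes the browser forwards a small payload containing `event_name` and `event_id`; that payload shape and the `build_capi_event` helper are hypothetical. The returned fields follow the server event parameters as we understand them, so check them against the current Conversions API reference before relying on them.

```python
import time
from typing import Any, Dict


def build_capi_event(browser_payload: Dict[str, Any], user_data: Dict[str, Any]) -> Dict[str, Any]:
    """Build a server event that reuses the ID generated in the browser."""
    event_id = browser_payload.get("event_id")
    if not event_id:
        # Generating a fresh ID here would recreate the mismatch described above.
        raise ValueError("browser payload carries no event_id")
    return {
        "event_name": browser_payload["event_name"],
        "event_time": int(time.time()),  # Unix timestamp in seconds
        "event_id": event_id,            # exact value the Pixel event used
        "action_source": "website",
        "user_data": user_data,          # assumed to be hashed upstream
    }


server_event = build_capi_event(
    {"event_name": "Purchase", "event_id": "order-1001"},
    {"em": ["<sha256 of normalized email>"]},
)
print(server_event["event_id"])  # order-1001
```

Raising an error when the ID is missing is a deliberate choice in this sketch: a silent fallback to a new ID would hide the exact problem you are trying to detect.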

2. Event Name Inconsistency

Deduplication requires identical naming conventions.

If the Pixel sends Purchase and CAPI sends purchase or OrderCompleted, Meta treats these as separate events. Deduplication fails even if the event_id matches.

This becomes especially problematic when those events are used to build retargeting segments or high-intent Custom Audiences. Inconsistent naming creates fragmented audience pools.

Fix: standardize event naming across all tracking layers. Use canonical Meta event names consistently unless you have a defined custom architecture.
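One way to enforce this is a single lookup that every tracking layer passes through before sending. The mapping below is a hypothetical example (your internal names will differ), and treating OrderCompleted as Purchase is an assumption made for illustration.

```python
# Hypothetical mapping from internal or legacy names to canonical Meta event names.
CANONICAL_EVENT_NAMES = {
    "purchase": "Purchase",
    "ordercompleted": "Purchase",
    "order_completed": "Purchase",
    "addtocart": "AddToCart",
    "add_to_cart": "AddToCart",
}


def canonical_event_name(raw_name: str) -> str:
    """Return the canonical name, or fail loudly for names nobody has mapped."""
    key = raw_name.strip().lower()
    if key not in CANONICAL_EVENT_NAMES:
        raise ValueError(f"unmapped event name: {raw_name!r}")
    return CANONICAL_EVENT_NAMES[key]


print(canonical_event_name("purchase"))        # Purchase
print(canonical_event_name("OrderCompleted"))  # Purchase
```

Rejecting unmapped names, rather than passing them through, keeps a new spelling from quietly creating a second event stream.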

3. Timing Window Misalignment

Meta deduplicates events received within a specific time window. Problems arise when:

- The browser event fires immediately when the confirmation page loads.
- The server event is delayed due to backend processing or queue systems.

In that case, Meta may receive the events too far apart in time to confidently merge them.

This issue often appears in privacy-focused setups or advanced server implementations. If you are building durable, privacy-resilient tracking systems, timing consistency is just as important as data depth. For a deeper look at sustainable audience infrastructure, see How to Build Privacy-Safe Facebook Audiences Without Cookies.

Fix: ensure CAPI events fire in near real time. Avoid batch-processing critical conversion events.
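To see whether delays are a problem in practice, you could log both timestamps per event_id and flag the slow ones. This is a minimal sketch: the `EventTiming` record is hypothetical, and `max_delay_seconds` is a threshold your team chooses, not Meta's deduplication window.

```python
from typing import List, NamedTuple


class EventTiming(NamedTuple):
    event_id: str
    browser_time: int  # Unix seconds when the Pixel event fired
    server_time: int   # Unix seconds when the CAPI call was sent


def find_late_server_events(timings: List[EventTiming], max_delay_seconds: int) -> List[str]:
    """Return the event_ids whose server event lagged the browser event too long."""
    return [
        t.event_id
        for t in timings
        if t.server_time - t.browser_time > max_delay_seconds
    ]


timings = [
    EventTiming("order-1001", 1_700_000_000, 1_700_000_002),  # 2 s delay
    EventTiming("order-1002", 1_700_000_100, 1_700_005_500),  # 90 min delay (queued)
]
print(find_late_server_events(timings, max_delay_seconds=60))  # ['order-1002']
```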

4. Partial Coverage Setup

Some advertisers send:

- Browser + Server for Purchase.
- Browser only for AddToCart.
- Server only for certain transactions.

The problem is not partial implementation itself. The problem is inconsistency within the same event type.

For example:

- Some Purchase events are deduplicated correctly.
- Others are sent only via server.
- Others are sent only via browser.

This creates irregular signal density. Meta’s learning model expects consistent input. If event transmission patterns vary, performance becomes unstable.

It also weakens the reliability of Custom Audience building. If your Purchase pool contains duplicated or fragmented events, your retargeting and lookalike seeds degrade. This becomes especially relevant when turning backend data into scalable audience assets, as explained in How to Turn CRM and Email Lists into High-Quality Facebook Audiences.

Fix: audit each event type individually. Confirm that every Purchase event follows the same transmission path. Consistency matters more than complexity.
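A per-event audit of this kind can be sketched as follows, assuming you can export a log of (event_name, event_id, source) rows from your own tracking layer. The log format and the `audit_coverage` helper are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Set, Tuple


def audit_coverage(records: Iterable[Tuple[str, str, str]]) -> Dict[str, Dict[str, int]]:
    """Count, per event name, how many events arrived via both paths or only one."""
    sources_by_event: Dict[Tuple[str, str], Set[str]] = defaultdict(set)
    for event_name, event_id, source in records:
        if source not in ("browser", "server"):
            raise ValueError(f"unknown source: {source!r}")
        sources_by_event[(event_name, event_id)].add(source)

    report: Dict[str, Counter] = defaultdict(Counter)
    for (event_name, _), sources in sources_by_event.items():
        if sources == {"browser", "server"}:
            label = "both"
        elif sources == {"browser"}:
            label = "browser_only"
        else:
            label = "server_only"
        report[event_name][label] += 1
    return {name: dict(counts) for name, counts in report.items()}


records = [
    ("Purchase", "order-1001", "browser"),
    ("Purchase", "order-1001", "server"),
    ("Purchase", "order-1002", "server"),
    ("Purchase", "order-1003", "browser"),
    ("AddToCart", "cart-77", "browser"),
]
print(audit_coverage(records))
```

In this sample, Purchase shows one event in each of the three categories, which is the mixed pattern described above. A consistent setup would show a single category per event name.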

How Deduplication Errors Distort Optimization

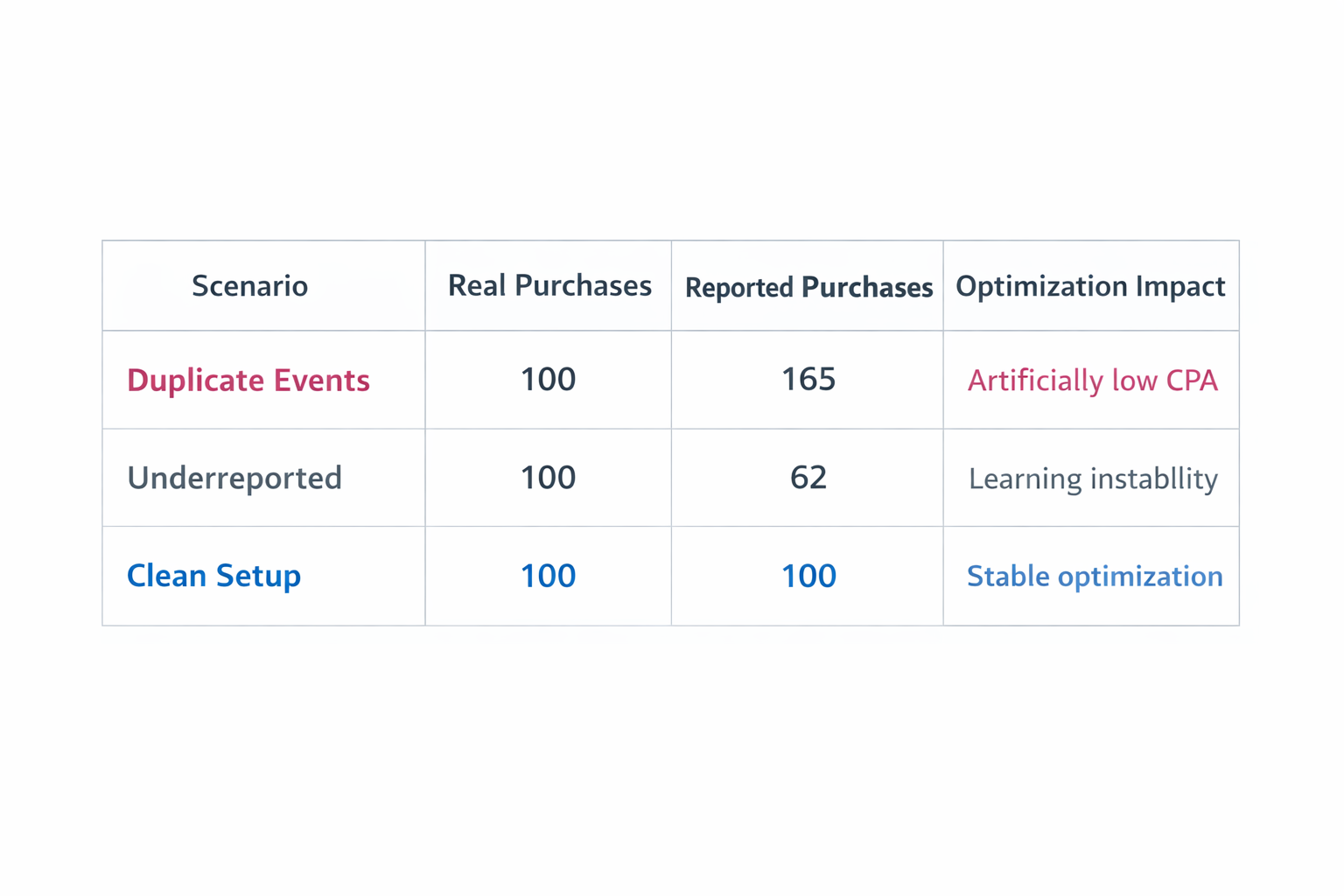

When conversions are inflated:

- Cost per result appears artificially low because duplicate events reduce calculated CPA.
- ROAS looks stronger because revenue may be attributed twice.
- Scaling decisions are based on perceived efficiency rather than real profitability.

Meta may increase budget toward segments that appear to convert frequently, even if those conversions are duplicated artifacts.
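A quick calculation shows how large the distortion can be. All numbers below are hypothetical and chosen only to illustrate the arithmetic.

```python
from typing import Dict


def summarize(spend: float, real_purchases: int, duplicate_purchases: int,
              order_value: float) -> Dict[str, float]:
    """Compare real CPA and ROAS with what duplicated events would report."""
    reported_purchases = real_purchases + duplicate_purchases
    return {
        "real_cpa": round(spend / real_purchases, 2),
        "reported_cpa": round(spend / reported_purchases, 2),
        "real_roas": round(real_purchases * order_value / spend, 2),
        "reported_roas": round(reported_purchases * order_value / spend, 2),
    }


# Hypothetical campaign: $1,000 spend, 50 real purchases at $80 each,
# and 20 of them counted twice because deduplication failed.
print(summarize(spend=1000.0, real_purchases=50, duplicate_purchases=20, order_value=80.0))
# {'real_cpa': 20.0, 'reported_cpa': 14.29, 'real_roas': 4.0, 'reported_roas': 5.6}
```

In this example the reported CPA is about 29% lower than the real one and ROAS appears 40% higher, even though no additional revenue exists.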

When conversions are underreported:

- Ad sets may struggle to exit the learning phase because event thresholds are not reached.
- Stable performance can appear volatile due to inconsistent signal flow.
- Budget allocation becomes conservative because campaigns seem inefficient.

In both scenarios, the algorithm is being trained on distorted data.

If Custom Audiences are meant to be the structural core of your strategy, signal accuracy is not optional. For the strategic layer behind this approach, see Why Custom Audiences Should Be the Core of Your Ad Strategy.

Final Takeaway

Deduplication is not a reporting cleanup task. It is a signal integrity requirement.

Meta’s optimization engine depends on:

- Event frequency.
- Event consistency.
- Event credibility.

If your event architecture is unstable, targeting and creative adjustments cannot compensate for it.

Clean synchronization between Pixel and CAPI restores signal trust. Once that foundation is solid, audience building becomes reliable, optimization becomes rational, and scaling decisions align with actual revenue rather than tracking artifacts.