Many Facebook Ads accounts struggle not because of weak creatives or insufficient budget, but because optimization decisions disrupt how Meta’s delivery system learns.

The algorithm relies on stable conversion signals to predict which users are most likely to convert. When campaign structure, budgets, or targeting change too frequently, the system loses the continuity it needs to refine those predictions.

Below are several optimization mistakes that appear repeatedly in real accounts — and the mechanisms that cause them to damage performance.

Constantly Editing Campaigns During Delivery

A campaign that receives frequent edits rarely stabilizes. Many advertisers underestimate how disruptive these adjustments can be. As explained in "Should I Edit My Facebook Ads?", even small changes to an active campaign can interrupt the algorithm’s learning process and temporarily reduce delivery efficiency.

When you change core delivery variables — such as budget, audience targeting, optimization event, or creative combinations — Meta recalculates delivery predictions. The system temporarily loses confidence in which users convert and begins testing again.

This becomes visible in Ads Manager through several signals:

- Learning phase resets. When a campaign re-enters the learning phase, the model no longer has enough recent conversion data tied to the current configuration. The algorithm must run new delivery experiments before it can narrow targeting again.
- Uneven spend distribution. Campaigns with unstable learning often spend irregularly. You may see several hours with little delivery followed by sudden bursts of spending when the system tests new audience clusters.
- Cost volatility. CPA and CPC fluctuate because the campaign is no longer optimizing within a stable behavioral pattern. The algorithm cycles through different user segments trying to rebuild performance signals.

What to do instead

Allow the system to accumulate meaningful data before making structural adjustments.

Practical guidelines many operators follow include:

- Wait for sufficient conversion volume; roughly 50 optimization events per ad set usually give the algorithm enough signal density to identify early behavioral patterns;
- Group edits into scheduled optimization windows; instead of reacting continuously, evaluate campaigns every few days so delivery remains stable between adjustments;
- Change one variable at a time; if budget, targeting, and creatives all change simultaneously, it becomes impossible to determine which change influenced performance.

Stable delivery allows the algorithm to gradually refine audience selection instead of restarting its learning process.
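The first two guidelines can be read as a simple gate that an ad set must pass before anyone edits it. The sketch below is illustrative only: `AdSetSnapshot`, `ready_for_edit`, the 3-day review interval, and the sample ad set names are assumptions made for this example and are not part of Ads Manager or any Meta API. The 50-event threshold is the rough guideline from the list above, not a guarantee.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed values for illustration: 50 events is the rough guideline from the
# text, and 3 days stands in for "every few days".
MIN_OPTIMIZATION_EVENTS = 50
REVIEW_INTERVAL = timedelta(days=3)


@dataclass
class AdSetSnapshot:
    """Hypothetical record of an ad set, e.g. built from a reporting export."""
    name: str
    optimization_events: int  # events collected since the last edit
    last_edit: date


def ready_for_edit(ad_set: AdSetSnapshot, today: date) -> bool:
    """Return True only when both the volume and the timing guideline hold."""
    enough_signal = ad_set.optimization_events >= MIN_OPTIMIZATION_EVENTS
    window_elapsed = today - ad_set.last_edit >= REVIEW_INTERVAL
    return enough_signal and window_elapsed


ad_sets = [
    AdSetSnapshot("prospecting-broad", 64, date(2024, 5, 1)),
    AdSetSnapshot("retargeting-30d", 18, date(2024, 5, 1)),
    AdSetSnapshot("lookalike-1pct", 72, date(2024, 5, 6)),
]
today = date(2024, 5, 7)

editable = [a.name for a in ad_sets if ready_for_edit(a, today)]
print(editable)
```

In this made-up data only the first ad set passes: the second has too few events, and the third was edited the day before.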

Over-Segmenting Campaign Structure

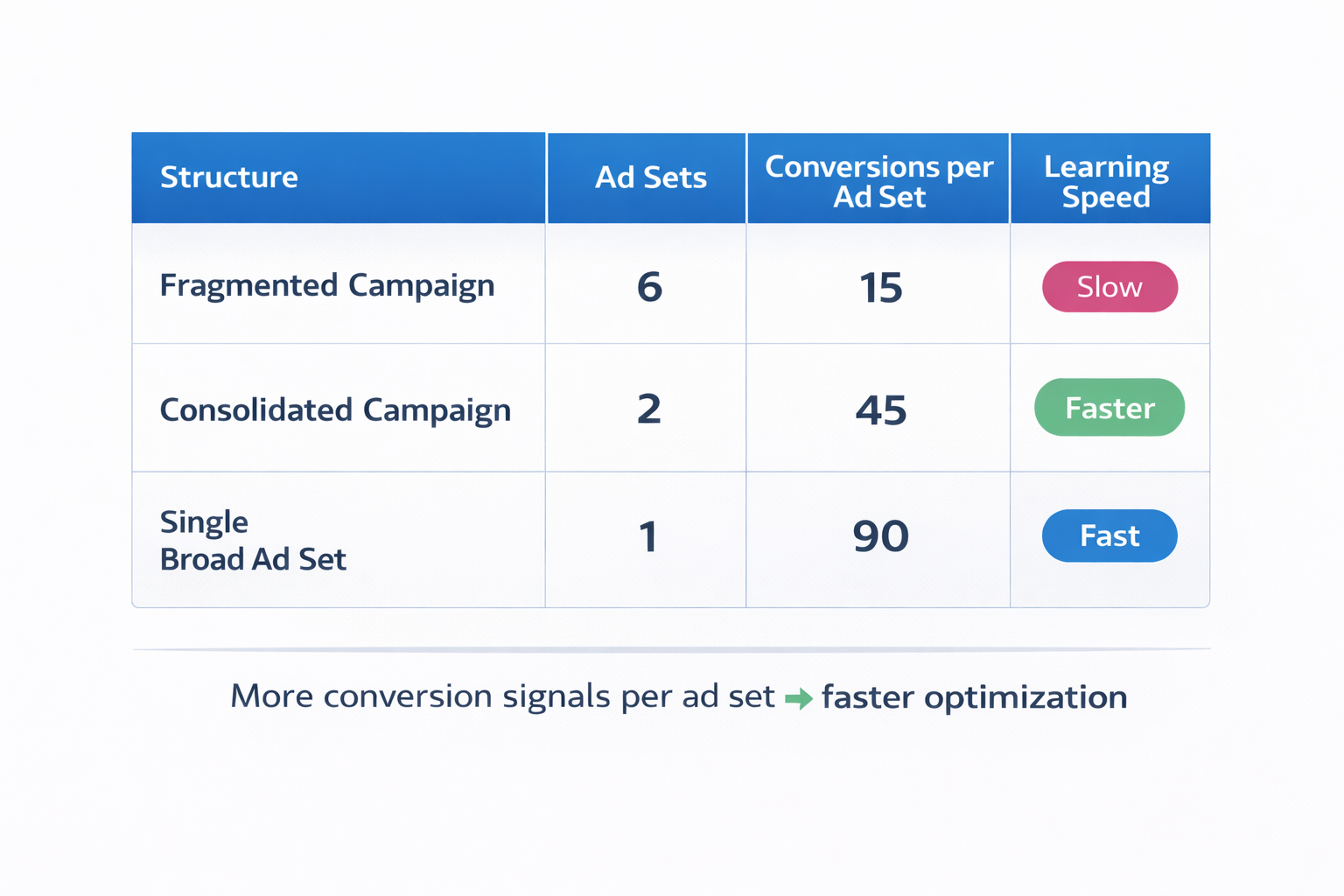

Some advertisers divide campaigns into many narrowly targeted ad sets — separate audiences for interests, demographics, devices, or small behavioral groups.

This approach fragments data and slows optimization.

Three operational problems typically appear.

1. Data fragmentation

Each ad set collects only a small portion of total conversion signals.

For example, instead of one ad set receiving 100 conversions in a week, five ad sets may receive 15–20 conversions each. With such small datasets, the algorithm struggles to identify statistically reliable patterns between user behavior and conversions.

As a result, delivery decisions remain uncertain and optimization progresses slowly.

When audience pools become too fragmented, campaigns can also struggle to exit the learning phase, a situation discussed in "Facebook Ads Audience Too Narrow? How to Troubleshoot a Limited Audience".
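A rough back-of-the-envelope sketch shows why splitting the same conversions across more ad sets weakens each signal. It assumes conversion counts behave like Poisson counts, so relative noise is about 1/sqrt(n). That is a simplifying statistical assumption for illustration, not a description of how Meta's delivery model works, and `relative_noise` is a hypothetical helper name.

```python
import math


def relative_noise(conversions: int) -> float:
    """Approximate relative standard error of a count under a Poisson assumption."""
    if conversions <= 0:
        raise ValueError("conversions must be positive")
    return 1 / math.sqrt(conversions)


# Same weekly total (100 conversions) under the two structures described above.
consolidated = [100]
fragmented = [20, 20, 20, 20, 20]

for label, ad_sets in (("consolidated", consolidated), ("fragmented", fragmented)):
    noise = [relative_noise(c) for c in ad_sets]
    print(f"{label}: {len(ad_sets)} ad set(s), "
          f"~{max(noise):.0%} relative noise per ad set")
```

Under this assumption, each of the five ad sets works with roughly twice the relative noise of the single consolidated one (about 22% versus 10%), even though total conversions are identical.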

2. Internal auction competition

Multiple ad sets targeting similar users often enter the same auctions.

When this happens, your campaigns effectively compete against themselves. Meta’s system selects the ad set with the highest predicted value in each auction, but the overlap increases bidding pressure and can raise CPM unnecessarily.

The account spends more to reach the same users. This dynamic is explored in more detail in "Facebook Ad Auction: Do Ad Sets Compete Against Each Other?"

3. Slower optimization cycles

Because each ad set receives limited data, performance signals stabilize much later.

Instead of quickly learning which users convert, the algorithm runs longer testing cycles across multiple small datasets.

A more efficient structure

Many accounts perform better when campaigns are simplified:

- broader audiences;
- fewer ad sets;
- multiple creatives within each ad set.

This structure concentrates conversion data, allowing the algorithm to detect behavioral patterns faster and allocate impressions more efficiently.

Optimizing Too Early Based on Small Data Samples

A frequent optimization mistake occurs when advertisers evaluate campaigns after only a few conversions.

A typical pattern looks like this:

- Early CPA misinterpretation; when only two or three conversions occur, the average CPA is extremely sensitive to outliers, and one expensive purchase can double the apparent cost;
- Premature ad set shutdown; advertisers often pause ad sets that appear expensive early on, even though the algorithm may still be testing which audience clusters respond to the ads;
- Repeated learning cycles; pausing and launching new ad sets prevents campaigns from accumulating stable conversion signals.
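The outlier effect in the first point is easy to check with arithmetic. The sketch below uses made-up numbers, where each value is the spend attributed to one conversion; the figures and the helper name `cpa` are assumptions for illustration only.

```python
from statistics import mean


def cpa(cost_per_conversion: list[float]) -> float:
    """Average cost per acquisition over a list of per-conversion costs."""
    return mean(cost_per_conversion)


# Hypothetical data: typical conversions cost about $20-22, one costs $80.
small = [20.0, 22.0]
small_with_outlier = small + [80.0]

large = [20.0, 22.0] * 25          # 50 conversions at the same typical cost
large_with_outlier = large + [80.0]

small_shift = cpa(small_with_outlier) / cpa(small)
large_shift = cpa(large_with_outlier) / cpa(large)

print(f"3 conversions:  CPA changes by a factor of {small_shift:.2f}")
print(f"51 conversions: CPA changes by a factor of {large_shift:.2f}")
```

With three conversions, the single expensive purchase nearly doubles the average. With about fifty, the same purchase moves it by only a few percent, which is why early CPA readings are a weak basis for pausing an ad set.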

Observable signals in Ads Manager

Accounts affected by early optimization often show:

- numerous paused ad sets with fewer than 10 conversions;
- campaigns repeatedly entering the learning phase;
- inconsistent daily spend patterns.

These signals indicate the algorithm never receives enough data to stabilize.

A more reliable evaluation method

Instead of reacting to the first conversions, analyze patterns across time:

- conversion volume trends over several days;
- cost distribution throughout the day;
- frequency growth relative to reach.

Often a campaign that initially appears inefficient becomes stable once the algorithm identifies stronger behavioral clusters inside the audience.

Ignoring Frequency and Audience Saturation

Campaigns that run long enough eventually exhaust their most responsive users.

Frequency is the average number of times each person sees your ad (impressions divided by reach). As frequency rises, engagement typically declines because the campaign repeatedly reaches users who have already decided not to convert.

Several recognizable delivery signals appear when saturation begins.

- Declining click-through rate; when the same audience repeatedly sees identical ads, curiosity fades and CTR gradually drops;
- Rising CPA despite stable CPM; when fewer users click and convert, cost per acquisition increases even though auction costs remain similar;
- Plateauing reach; the campaign stops expanding into new users and continues serving impressions to the same group.
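These signals can be combined into a rough period-over-period check. The sketch below is one possible heuristic, not a Meta feature: `PeriodStats`, `looks_saturated`, the 10% CPM tolerance, the 5% reach-growth cutoff, and all sample figures are assumptions chosen for illustration. Frequency is computed as impressions divided by reach.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Hypothetical per-period totals, e.g. copied from a weekly report."""
    impressions: int
    reach: int
    clicks: int
    spend: float
    conversions: int

    @property
    def frequency(self) -> float:
        return self.impressions / self.reach

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions

    @property
    def cpm(self) -> float:
        return self.spend / self.impressions * 1000

    @property
    def cpa(self) -> float:
        return self.spend / self.conversions


def looks_saturated(prev: PeriodStats, curr: PeriodStats,
                    cpm_tolerance: float = 0.10,
                    max_reach_growth: float = 0.05) -> bool:
    """Flag saturation when frequency and CPA rise, CTR falls,
    CPM stays roughly flat, and reach barely grows."""
    cpm_stable = abs(curr.cpm - prev.cpm) / prev.cpm <= cpm_tolerance
    reach_flat = (curr.reach - prev.reach) / prev.reach <= max_reach_growth
    return (curr.frequency > prev.frequency
            and curr.ctr < prev.ctr
            and curr.cpa > prev.cpa
            and cpm_stable
            and reach_flat)


week1 = PeriodStats(100_000, 50_000, 1_500, 1_000.0, 50)
week2 = PeriodStats(151_500, 50_500, 1_515, 1_515.0, 50)    # same people, more often
healthy = PeriodStats(120_000, 60_000, 1_800, 1_200.0, 60)  # reach still growing

print(looks_saturated(week1, week2))
print(looks_saturated(week1, healthy))
```

Requiring every signal at once keeps the check conservative. Depending on the account, a looser rule (for example, any two of the signals) might be more useful.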

How to respond to saturation

Structural changes usually solve the issue more effectively than budget adjustments alone:

- expand audience targeting to introduce new users into the delivery pool;
- launch new creatives that reset user attention;
- test additional prospecting audiences to replace saturated segments.

Scaling campaigns requires periodically refreshing both audiences and creative assets.

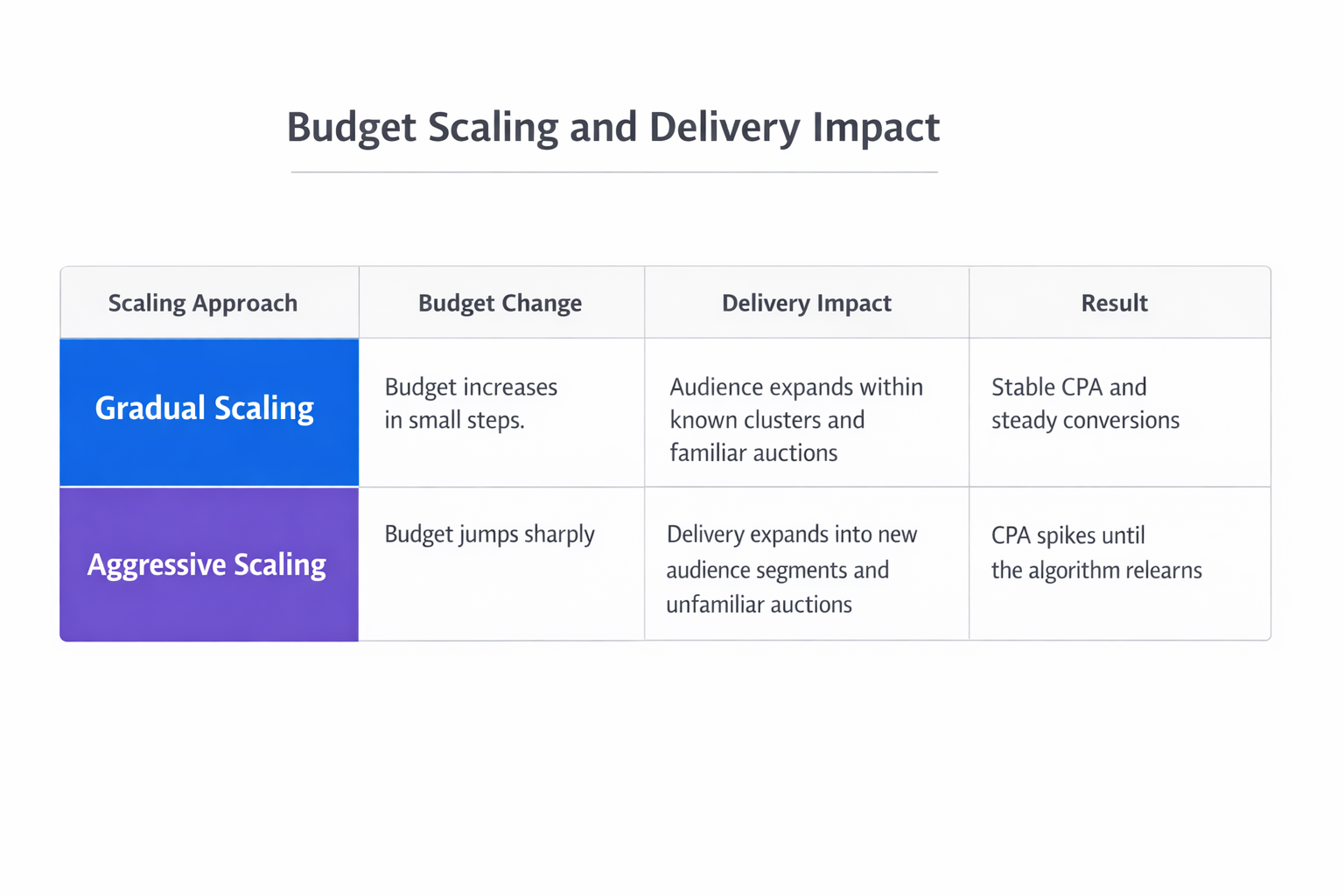

Scaling Budgets Too Aggressively

Budget increases directly influence how often your campaign enters auctions.

When budgets increase gradually, the algorithm expands delivery while preserving learned targeting patterns. When budgets jump sharply, the system suddenly enters many additional auctions where it has less predictive confidence.

This creates several delivery effects.

- Expansion into weaker audience segments; to spend the larger budget, the campaign must reach users beyond the high-intent clusters it previously identified;
- Participation in more expensive auctions; larger budgets push the campaign into auctions that require higher bids to win impressions;
- Temporary CPA spikes; conversion efficiency drops until the algorithm gathers enough new data to refine targeting again.

A deeper breakdown of safe scaling mechanics can be found in "The Science of Scaling Facebook Ads Without Killing Performance".

Safer scaling methods

To maintain stability during growth, many advertisers scale budgets incrementally.

Common approaches include:

- 20–30% budget increases every few days; this allows delivery models to adapt gradually without destabilizing targeting;
- duplicating successful ad sets; launching additional ad sets with similar configurations distributes budget growth across multiple delivery models;
- expanding audiences alongside budget growth; larger budgets work best when the campaign has access to a wider user pool.

Controlled scaling prevents the algorithm from being forced into low-confidence delivery.
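To make the incremental approach concrete, the following sketch computes a stepped budget schedule. It assumes a 20% increase every 3 days, which is one reading of the "20–30% every few days" guideline. The function name `scaling_schedule` and the sample budgets are hypothetical.

```python
def scaling_schedule(start_budget: float, target_budget: float,
                     step_pct: float = 0.20,
                     days_between_steps: int = 3) -> list[tuple[int, float]]:
    """Return (day, daily_budget) pairs that grow the budget in fixed
    percentage steps until the target is reached."""
    if start_budget <= 0 or step_pct <= 0 or days_between_steps <= 0:
        raise ValueError("budget, step_pct and days_between_steps must be positive")

    schedule = [(0, round(start_budget, 2))]
    budget, day = start_budget, 0
    while budget < target_budget:
        # Never overshoot the target on the final step.
        budget = min(budget * (1 + step_pct), target_budget)
        day += days_between_steps
        schedule.append((day, round(budget, 2)))
    return schedule


plan = scaling_schedule(100.0, 300.0)
for day, budget in plan:
    print(f"day {day:>2}: ${budget:.2f}")
```

With these assumed values, tripling a $100 daily budget takes seven steps over 21 days, compared with a single 200% jump on day one. The trade-off is slower growth in exchange for smaller changes at each step.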

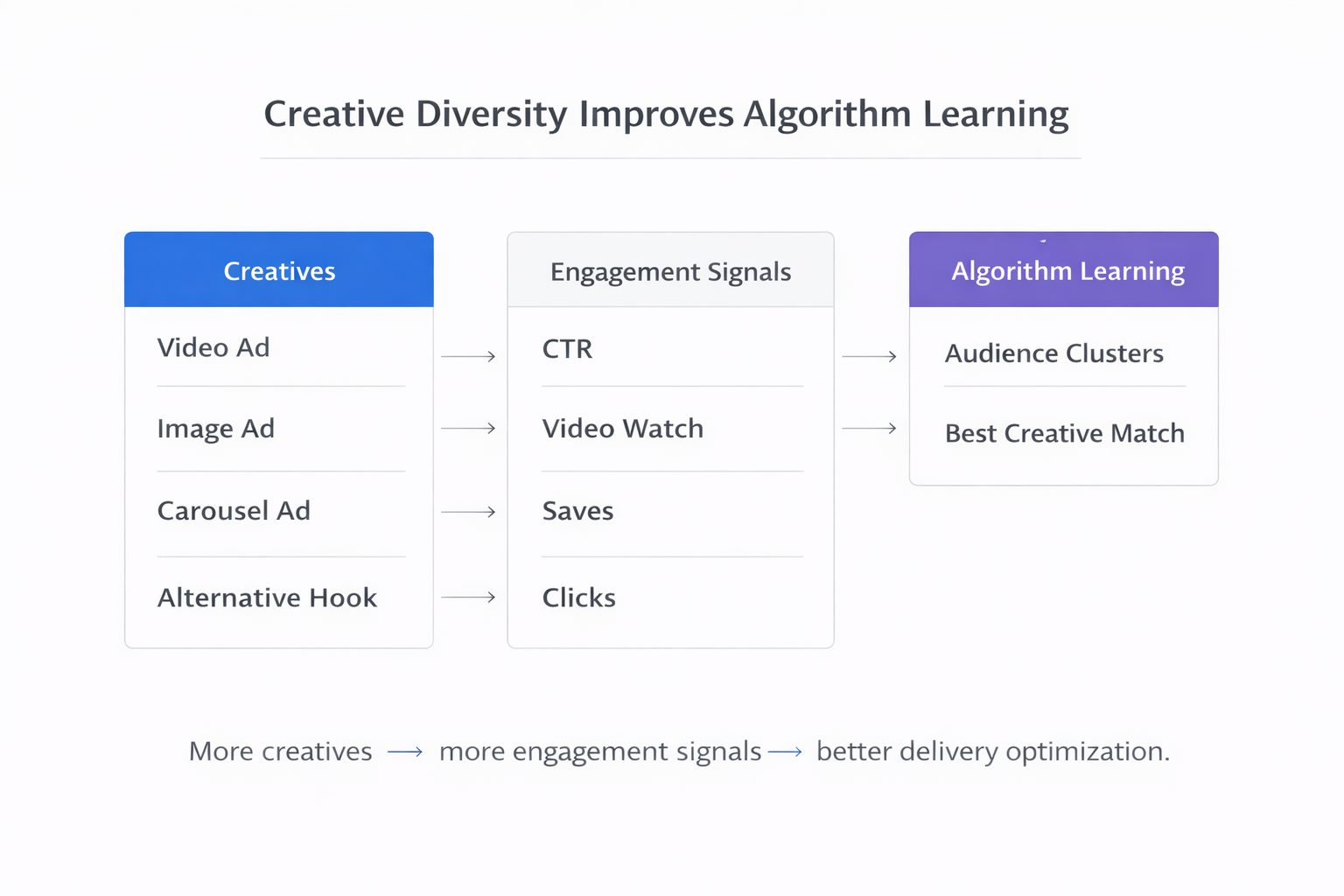

Relying on Too Few Creatives

Targeting often receives most of the optimization attention, but creative diversity strongly influences delivery performance.

When an ad set runs only one or two creatives, the algorithm has limited engagement signals to evaluate. Creative variation helps the system determine which messaging resonates with different behavioral clusters.

Accounts with minimal creative diversity often show the following patterns.

- Creative concentration of impressions; one ad receives nearly all impressions because the system has no meaningful alternatives to test;
- Rapid creative fatigue; users repeatedly see the same ad, reducing engagement and lowering CTR;
- Limited learning signals; with only one messaging angle, the algorithm cannot identify which value propositions attract different segments of the audience.

Practical creative structure

Campaigns typically perform better when each ad set includes several creative variations:

- different hooks or opening frames;
- alternative visual formats such as video, static images, or carousels;
- multiple value propositions.

Creative diversity increases the volume of engagement signals that guide delivery optimization.

Final Takeaway

Most Facebook Ads optimization problems are not caused by weak targeting or insufficient budget. They arise when optimization decisions interfere with how Meta’s delivery system learns from conversion signals.

Campaigns usually perform best when they maintain:

- stable structures;
- concentrated conversion data;
- gradual budget scaling;
- diverse creative testing;
- consistent optimization signals.

When these conditions exist, the algorithm can refine targeting over time and allocate impressions toward the users most likely to convert.