Most performance issues don’t come from weak optimization. They come from flawed inputs.

You can see this when two campaigns use identical structures — same budget, same objective, same bidding — but produce completely different results. The difference usually sits upstream: in the data, the audience signals, or the creative entering the auction.

Optimization adjusts direction. Inputs determine where you’re allowed to go.

Optimization Only Works Within Input Constraints

Meta doesn’t improve a campaign in isolation. It reallocates delivery based on the signals it receives and reinforces whatever patterns appear most efficient.

If those signals are weak or misleading, optimization becomes a redistribution of inefficiency.

A typical example appears in lead generation campaigns. You launch with broad targeting, early metrics look acceptable, and the system begins scaling. Over time, however, delivery concentrates around users who complete forms quickly, not those who convert into pipeline.

At that point, optimization is working correctly. But the input signal — low-friction form fills — pushes the system toward low-intent traffic.

This is the same mechanism described in “Why clicks don’t equal demand,” where surface engagement creates a false sense of performance while actual demand remains unchanged.

The algorithm isn’t underperforming. It’s following instructions.

The Three Inputs That Shape Performance

Every campaign outcome is constrained by three core inputs. When results drift, one of these is usually misaligned. The important part is not just identifying them, but understanding how they shape delivery behavior inside the auction.

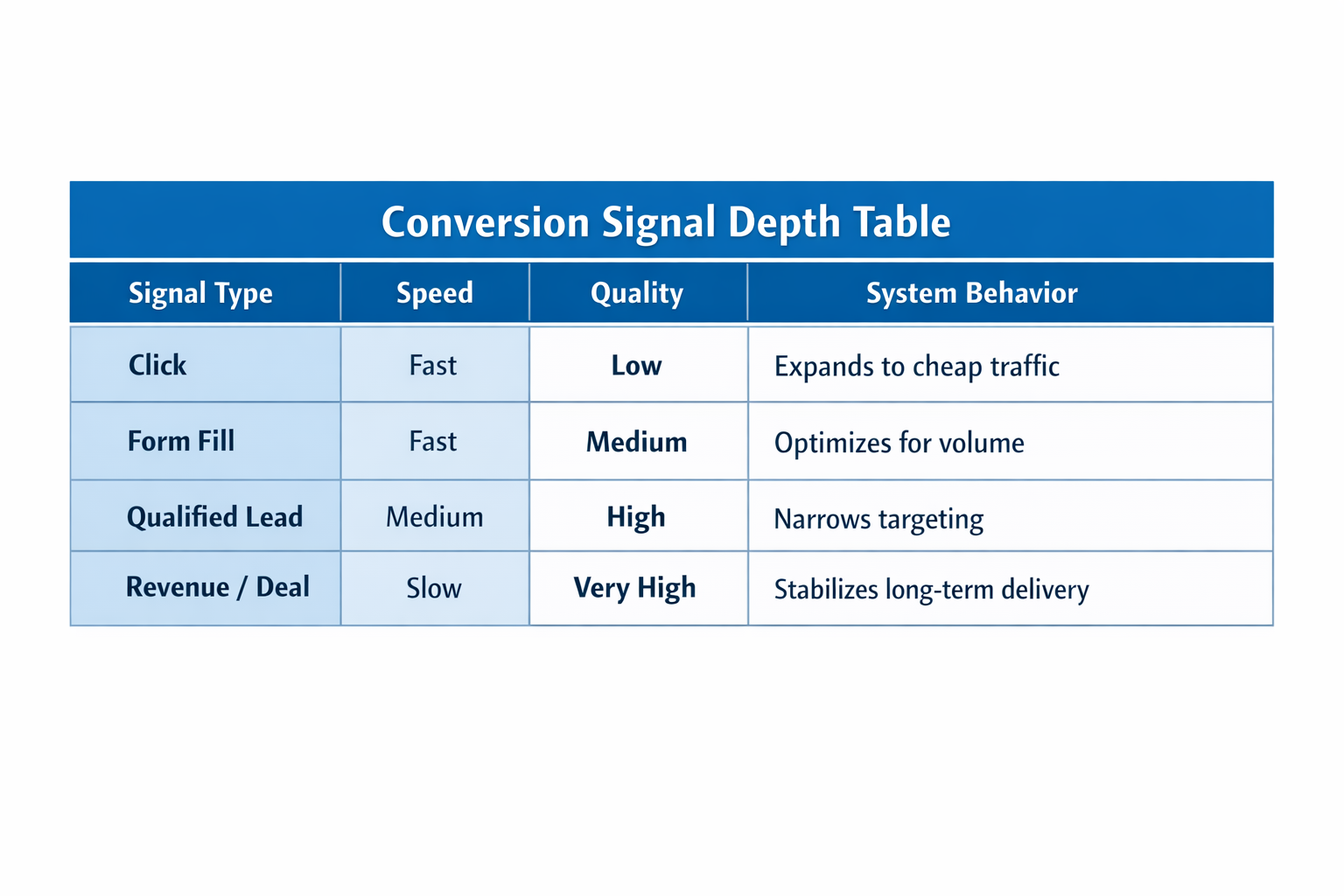

1. Conversion Signal Quality

The algorithm optimizes for the event you define. That event becomes the proxy for value, regardless of whether it reflects real business outcomes.

In practice, weak signals introduce systematic bias:

- Form submissions with no filtering: the system prioritizes speed and ease of conversion. Over time, delivery shifts toward users who complete forms impulsively, not those with real intent.
- Top-of-funnel events: optimization expands into cheaper inventory where engagement is easy to generate but intent is low.
- Delayed or inconsistent signals: unstable feedback loops prevent the model from converging, which leads to erratic spend patterns and learning resets.

You can validate this directly in campaign data. If CPL improves while sales quality declines, the signal is too shallow. If conversion volume fluctuates heavily, the system lacks consistency in what it’s optimizing toward.

This is closely related to how platforms evaluate outcomes beyond surface metrics, as explained in “How to analyze Facebook ad performance beyond CTR and CPC.”

In both cases, optimization isn’t broken. The input definition is.

2. Audience Signal Density

Audience quality is not determined by size alone. It depends on how consistent and interpretable the underlying data is.

Two audiences of the same size can behave completely differently:

- A small dataset built from high-value conversions produces clear behavioral patterns.
- A large dataset with mixed-quality leads creates noise and conflicting signals.

The second case leads to unstable delivery because the system cannot identify a reliable pattern to follow.

You’ll typically observe:

- Higher CPM variance: the system keeps exploring different auctions because it is uncertain which users will convert.
- Extended learning phases: inconsistent feedback slows convergence.
- Fluctuating conversion rates: performance is unstable from day to day.

This aligns with a broader principle discussed in “Audience quality vs quantity: what drives better long-term results?” More data does not automatically mean better performance if that data lacks structure.

The audience isn’t too broad. It’s structurally unclear.
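
The symptoms listed above can be quantified with the coefficient of variation (standard deviation divided by mean) of daily CPM and daily conversion rate. This is a minimal sketch with hypothetical numbers for two audiences; the 0.25 cutoff is an arbitrary placeholder, not a threshold Meta publishes, so calibrate it against your own account history. The same measure works for daily conversion volume if you want to check how heavily it fluctuates.

```python
from statistics import mean, pstdev


def coefficient_of_variation(values: list[float]) -> float:
    """Population standard deviation divided by the mean."""
    avg = mean(values)
    if avg == 0:
        raise ValueError("mean is zero, so the coefficient of variation is undefined")
    return pstdev(values) / avg


def audience_stability(daily_cpm: list[float], daily_cvr: list[float],
                       max_cv: float = 0.25) -> dict:
    """Flag an audience as unstable if either daily series varies too much."""
    cpm_cv = coefficient_of_variation(daily_cpm)
    cvr_cv = coefficient_of_variation(daily_cvr)
    return {"cpm_cv": cpm_cv, "cvr_cv": cvr_cv,
            "unstable": cpm_cv > max_cv or cvr_cv > max_cv}


# Hypothetical week of data for two audiences of similar size.
focused_cpm = [11.8, 12.1, 12.4, 11.9, 12.0, 12.3, 12.2]
focused_cvr = [0.041, 0.043, 0.040, 0.042, 0.044, 0.041, 0.042]
mixed_cpm = [9.5, 15.2, 8.1, 17.9, 10.4, 16.3, 11.0]
mixed_cvr = [0.060, 0.018, 0.052, 0.011, 0.047, 0.020, 0.055]

print(audience_stability(focused_cpm, focused_cvr)["unstable"])  # False
print(audience_stability(mixed_cpm, mixed_cvr)["unstable"])      # True
```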

3. Creative Input Strength

Creative determines whether your campaign can compete before optimization even begins.

Every impression is an auction decision. Your ad must generate enough early signals to justify continued delivery.

This happens across three layers:

- Attention capture (CTR): without early engagement, the system reduces exposure quickly.
- Signal generation: meaningful interactions reinforce delivery to similar users.
- Relative ranking: your ad competes directly against other ads targeting the same audience.

If your creative underperforms relative to competitors, delivery declines even if everything else remains unchanged.

This is often misdiagnosed as fatigue: performance drops even though frequency is still low, so the audience has not been saturated, yet teams rotate creatives expecting a recovery.

In reality, the problem is competitive positioning, a concept explored in “Why certain ad creatives fail on Facebook and Instagram.” The system simply reallocates impressions to ads that generate stronger signals.

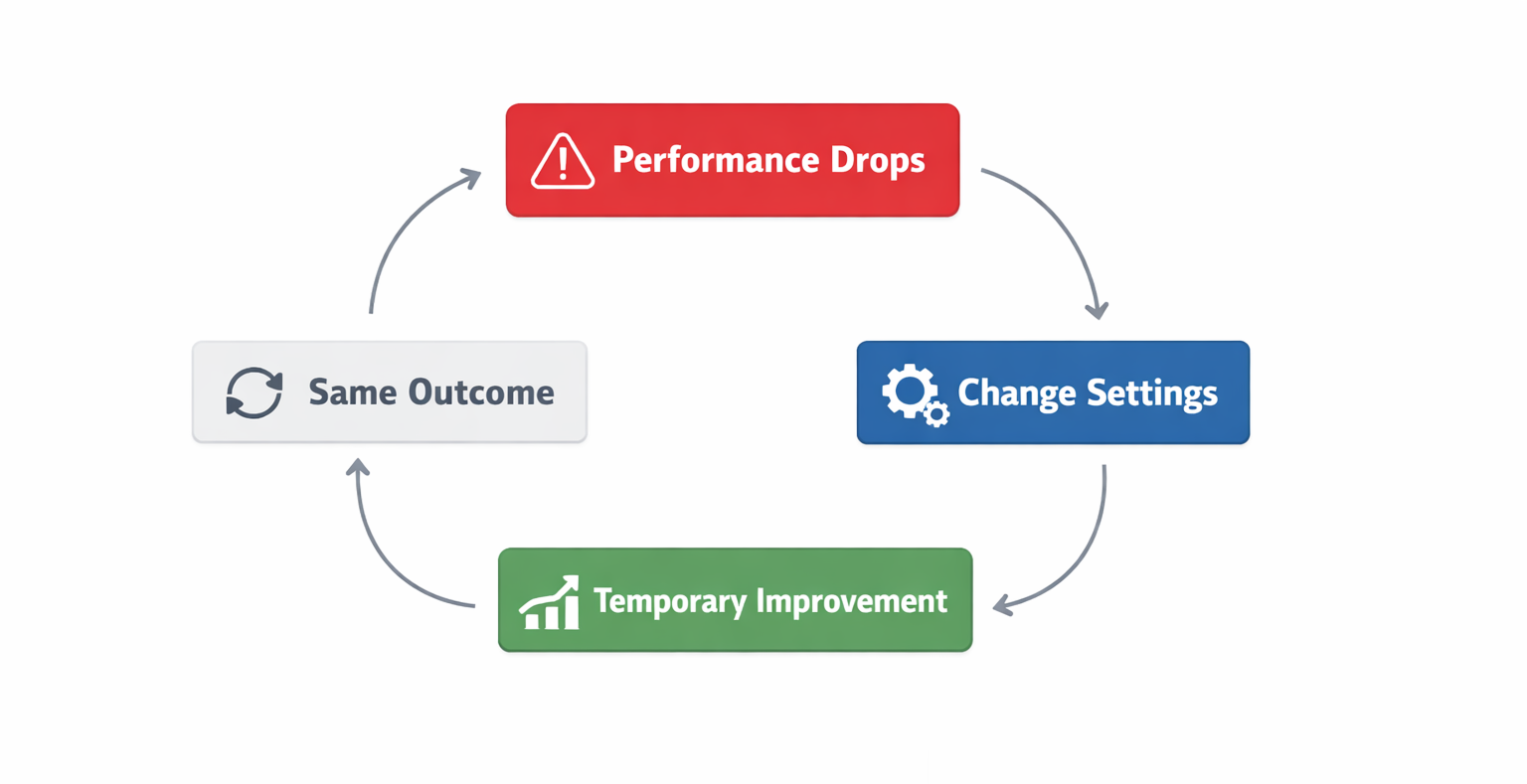

Why Optimization Often Becomes a Distraction

When performance declines, most teams focus on what they can change quickly inside Ads Manager.

Typical adjustments include:

- Changing bid strategies: switching between cost models without improving the underlying signal quality.
- Redistributing budgets: shifting spend across ad sets, which changes exposure but not direction.
- Duplicating campaigns: resetting learning to force short-term variation.

These actions create movement, but not necessarily improvement.

A common pattern emerges. Performance drops, changes are made, results briefly improve, then regress. The temporary improvement comes from resetting the system, not fixing the inputs.

Once the same signals re-enter, the algorithm converges back to the same outcome.

Without changing inputs, optimization has no new path to follow.

Diagnosing Input Problems Inside Campaign Data

Input issues rarely appear as obvious errors. They show up in how performance behaves over time.

Certain patterns are strong indicators:

- Stable CTR + declining CVR: the creative attracts attention, but the traffic lacks intent. The issue sits in signal definition or audience quality.
- High CPM volatility across days: the system cannot identify consistent high-value segments, so it keeps exploring.
- Learning phase resets after small changes: signal density is too low to support stability.
- Volume increases while downstream quality drops: optimization is scaling the wrong outcome.

Each of these patterns is observable inside Ads Manager, which makes them useful diagnostic tools rather than abstract ideas.
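
Because these four patterns show up in exported metrics, they can be encoded as simple rules. The sketch below is hypothetical throughout: the `CampaignTrends` fields, the thresholds, and how you compute each input (for example, CTR change over the last two weeks, or whether learning restarted after a minor edit) are assumptions to adapt to your own reporting.

```python
from dataclasses import dataclass


@dataclass
class CampaignTrends:
    """Hypothetical summary of one campaign over a review window."""
    ctr_change: float             # relative change, e.g. -0.05 means CTR fell 5%
    cvr_change: float             # relative change in conversion rate
    cpm_daily_cv: float           # coefficient of variation of daily CPM
    reset_after_small_edit: bool  # learning restarted after a minor change
    volume_change: float          # relative change in conversion volume
    quality_change: float         # relative change in downstream lead quality


def diagnose(t: CampaignTrends, flat: float = 0.05, drop: float = -0.10,
             volatile_cpm: float = 0.25) -> list[str]:
    """Map observed trends to the input problems they most likely indicate."""
    findings = []
    if abs(t.ctr_change) <= flat and t.cvr_change <= drop:
        findings.append("Stable CTR + declining CVR: review signal definition or audience quality")
    if t.cpm_daily_cv > volatile_cpm:
        findings.append("High CPM volatility: no consistent high-value segment yet")
    if t.reset_after_small_edit:
        findings.append("Learning resets after small edits: signal density too low")
    if t.volume_change > 0 and t.quality_change <= drop:
        findings.append("Volume up, quality down: optimization is scaling the wrong outcome")
    return findings


trends = CampaignTrends(ctr_change=0.02, cvr_change=-0.18, cpm_daily_cv=0.31,
                        reset_after_small_edit=False, volume_change=0.25,
                        quality_change=-0.12)
for finding in diagnose(trends):
    print(finding)
```

The rules only point at where to look; confirming which input is misaligned still means inspecting the conversion event, the audience source, and the creatives themselves.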

What Strong Inputs Actually Look Like

High-performing campaigns share structural consistency at the input level. Optimization becomes easier because the system receives clear direction.

Clear and consistent conversion signals

Strong setups define events that reflect actual business outcomes:

- Revenue-linked events: qualified leads, opportunities, or purchases.
- Stable signal flow: consistent daily volume that supports learning.
- Minimal noise: limited inclusion of low-intent actions.
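
One way to test for stable signal flow before switching to a deeper event is to count how often a rolling seven-day window reaches a target volume. This sketch assumes a target of 50 events per week, in line with the roughly 50 optimization events per week that Meta's help documentation has cited for exiting the learning phase; treat both that figure and the hypothetical `min_share` parameter as adjustable assumptions rather than platform rules.

```python
def rolling_weekly_totals(daily_events: list[int]) -> list[int]:
    """Sum of events in every complete 7-day window."""
    return [sum(daily_events[i:i + 7]) for i in range(len(daily_events) - 6)]


def supports_learning(daily_events: list[int], weekly_target: int = 50,
                      min_share: float = 0.8) -> bool:
    """True if at least `min_share` of 7-day windows reach `weekly_target` events."""
    windows = rolling_weekly_totals(daily_events)
    if not windows:
        raise ValueError("need at least 7 days of data")
    hits = sum(1 for total in windows if total >= weekly_target)
    return hits / len(windows) >= min_share


# Hypothetical daily counts for two candidate optimization events.
steady_events = [8, 7, 9, 6, 8, 7, 8, 9, 7, 8]
sparse_events = [2, 0, 5, 1, 3, 0, 4, 2, 1, 3]

print(supports_learning(steady_events))  # True
print(supports_learning(sparse_events))  # False
```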

Focused audience data

Effective audience construction prioritizes clarity over scale:

- High-quality seed data: smaller but more relevant datasets.
- Consistent behavioral patterns: users share meaningful traits.
- Controlled segmentation: avoiding mixed-intent groups.

Competitive creatives

Creative strength determines access to efficient delivery:

- High relative CTR: performance measured against competitors, not internal benchmarks.
- Message alignment: the ad's promise matches the conversion action.
- Strong early signals: engagement that supports expansion.
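
Relative CTR can be expressed as a ratio: your ad's CTR divided by the median CTR of a comparison set. Competitors' CTRs are not visible in Ads Manager, so the `market_ctrs` list below is a hypothetical stand-in for whatever external benchmark you trust. The sketch shows how the same ad can look strong against internal history and only average against the market.

```python
from statistics import median


def relative_ctr(clicks: int, impressions: int, benchmark_ctrs: list[float]) -> float:
    """Ad CTR divided by the median benchmark CTR; 1.0 means at par."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    if not benchmark_ctrs:
        raise ValueError("benchmark_ctrs must not be empty")
    return (clicks / impressions) / median(benchmark_ctrs)


# Hypothetical figures: one ad measured against two different baselines.
internal_ctrs = [0.008, 0.010, 0.011]              # your own past ads
market_ctrs = [0.009, 0.012, 0.014, 0.018, 0.021]  # assumed external benchmark

print(round(relative_ctr(180, 12_000, internal_ctrs), 2))  # 1.5
print(round(relative_ctr(180, 12_000, market_ctrs), 2))    # 1.07
```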

When these inputs are aligned, optimization becomes a natural continuation of the system, not a separate lever.

A Practical Shift: Optimize Inputs First

Instead of asking how to improve performance through settings, it’s more useful to evaluate what the system is optimizing for.

This leads to different operational decisions:

- Replace shallow conversion events: move toward qualified signals, even if volume drops initially.
- Rebuild audiences from high-quality subsets: prioritize clarity over scale.
- Evaluate creatives against market competition, not just internal performance.

These adjustments often reduce short-term efficiency. CPL may increase, and volume can decline.

But the system starts optimizing toward outcomes that actually drive revenue.
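
The trade-off is easiest to see in arithmetic. With hypothetical numbers, compare a setup optimized for raw form fills against one optimized for a qualified-lead event at the same spend: CPL doubles, but the cost of each lead that sales actually accepts halves. The function names and qualification rates are illustrative assumptions, not benchmarks.

```python
def cost_per_lead(spend: float, leads: int) -> float:
    """Spend divided by all leads, qualified or not."""
    return spend / leads


def cost_per_qualified_lead(spend: float, leads: int, qualified_rate: float) -> float:
    """Spend divided by the number of leads that pass sales qualification."""
    qualified = leads * qualified_rate
    if qualified <= 0:
        raise ValueError("no qualified leads")
    return spend / qualified


# Hypothetical month at the same 5,000 spend.
shallow = {"spend": 5_000, "leads": 250, "qualified_rate": 0.10}  # raw form fills
deep = {"spend": 5_000, "leads": 125, "qualified_rate": 0.40}     # qualified-lead event

for name, s in (("shallow", shallow), ("deep", deep)):
    cpl = cost_per_lead(s["spend"], s["leads"])
    cpql = cost_per_qualified_lead(s["spend"], s["leads"], s["qualified_rate"])
    print(f"{name}: CPL {cpl:.2f}, cost per qualified lead {cpql:.2f}")
# shallow: CPL 20.00, cost per qualified lead 200.00
# deep: CPL 40.00, cost per qualified lead 100.00
```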

Final Takeaway

Optimization doesn’t create performance. It amplifies whatever inputs you provide.

If those inputs are misaligned, the algorithm will scale the problem with precision. Once the inputs are corrected, optimization becomes predictable — because the system finally has the right direction.