Creative drives performance in Meta campaigns more than targeting tweaks. Yet most accounts test randomly and call it strategy. Stable results require a structured system that controls variables, budgets, and evaluation timing.

This guide explains how to design creative testing that produces predictable gains instead of temporary spikes.

Why Creative Testing Fails in Most Accounts

Many advertisers confuse activity with experimentation. They launch several ads at once and hope the algorithm finds a winner.

The problem is uncontrolled variation. When multiple elements change at once, results become impossible to interpret.

Common structural mistakes include:

- Testing different hooks, formats, and audiences in the same test; performance differences then reflect targeting shifts, not creative strength.
- Killing ads too early; early volatility often reflects learning-phase instability rather than weak messaging.
- Declaring winners based on CTR alone; strong clicks do not guarantee qualified leads or sales.
- Rotating new creatives into scaling campaigns; this resets stability and contaminates performance data.

If this pattern sounds familiar, review why your creative testing strategy isn’t working and compare it with your current setup.

Stable results come from isolation and sequencing. Each test must answer one clear question.

The Foundation: Control Before Creativity

Creative testing begins with account architecture. Without control, even strong ads produce misleading data.

Separate Testing From Scaling

Testing and scaling have different objectives. Mixing them destabilizes both.

Create a dedicated testing campaign with fixed budgets and broad targeting. Keep your scaling campaign isolated and untouched by experimental ads.

This separation achieves three things:

- Budget control; test results are not inflated by high spend from scaling campaigns.
- Signal clarity; winning ads enter scaling only after validation.
- Performance protection; strong performers do not compete with unstable variations.

If you struggle with campaign organization, study how to structure ad accounts for scale and compare it to your current hierarchy.

Testing becomes a filter, not a gamble.

Fix the Variable You Are Measuring

Every test must isolate one dimension. Otherwise, you measure noise.

You can structure tests around:

- Hook; change only the first three seconds or headline while keeping visuals identical.
- Format; compare static versus video while preserving the same messaging angle.
- Angle; test problem-focused messaging against outcome-focused messaging with identical structure.
- Offer framing; keep visuals constant while shifting value emphasis.

If more than one element changes, the data loses meaning. Many advertisers ignore this and end up in the trap described in why testing too many ads at once hurts your campaign results.

Designing a Creative Testing Framework

A framework defines when to launch, evaluate, and promote creatives. Without timing rules, decisions become emotional.

Step 1: Define the Testing Hypothesis

Each test starts with a hypothesis, not a design idea.

For example:

- Problem: Lead quality is weak despite high CTR.
- Hypothesis: The hook attracts curiosity instead of buyers.
- Test: Introduce qualification language in the first line.

This approach ties creative to business metrics rather than vanity engagement.
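If your team logs tests in a tracker, a record like the one below makes the problem, hypothesis, and deciding metric mandatory fields. This is a minimal sketch; `CreativeHypothesis` and its fields are hypothetical names, and the CTR rejection simply encodes this guide's rule that CTR is diagnostic rather than decisive.

```python
from dataclasses import dataclass

CREATIVE_DIMENSIONS = {"hook", "format", "angle", "offer_framing"}


@dataclass(frozen=True)
class CreativeHypothesis:
    """Hypothetical record that forces a test to name a problem and a business metric."""
    problem: str
    hypothesis: str
    change: str           # the single creative change under test
    variable: str         # which dimension the change touches
    decision_metric: str  # the metric that decides the test

    def __post_init__(self) -> None:
        if self.variable not in CREATIVE_DIMENSIONS:
            raise ValueError(f"variable must be one of {sorted(CREATIVE_DIMENSIONS)}")
        if self.decision_metric.lower() == "ctr":
            raise ValueError("CTR is diagnostic; choose a lead-quality or revenue metric")


lead_quality_test = CreativeHypothesis(
    problem="Lead quality is weak despite high CTR.",
    hypothesis="The hook attracts curiosity instead of buyers.",
    change="Introduce qualification language in the first line.",
    variable="hook",
    decision_metric="cost_per_qualified_lead",
)
```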

Step 2: Use Controlled Volume

Testing requires enough data to reach signal stability. Low budgets create false winners.

Instead of launching ten ads with minimal spend, limit variation and fund each test properly. Two to four creatives per batch often produce clearer conclusions.
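The arithmetic behind "fewer creatives, properly funded" is simple division. The sketch below assumes an even budget split and a steady cost per result; the budget, cost, and `min_results` threshold are illustrative placeholders, not benchmarks.

```python
def results_per_creative(test_budget: float, n_creatives: int, cost_per_result: float) -> float:
    """Expected optimization events per creative if the budget splits evenly."""
    if n_creatives < 1 or cost_per_result <= 0:
        raise ValueError("need at least one creative and a positive cost per result")
    return test_budget / n_creatives / cost_per_result


def max_creatives(test_budget: float, cost_per_result: float, min_results: int) -> int:
    """Largest batch in which every creative can still reach min_results."""
    return int(test_budget // (cost_per_result * min_results))


# Illustrative numbers, not benchmarks: 1,500 per week at a 30 cost per lead.
for n in (3, 10):
    print(n, round(results_per_creative(1500, n, 30), 1))
print(max_creatives(1500, 30, min_results=15))
```

With these assumed inputs, three creatives get roughly 16.7 results each, while ten creatives get 5.0 each, which is rarely enough to separate a real winner from noise.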

Evaluate based on:

- Cost per qualified lead; connect results with CRM stages.
- Lead-to-meeting rate; reveals whether messaging aligns with intent.
- Cost per opportunity; filters out surface engagement bias.

If you rely only on surface metrics, revisit why click-through rate can be misleading before promoting any ad.

CTR is diagnostic. Revenue metrics are decisive.
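To make the distinction concrete, here is a minimal scorecard that joins ad spend with CRM counts and ranks creatives by cost per qualified lead rather than CTR. The `CreativeResults` fields and the sample numbers are hypothetical; in practice the lead, meeting, and opportunity counts would come from your CRM.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CreativeResults:
    spend: float
    impressions: int
    clicks: int
    leads: int
    qualified_leads: int  # leads that reached a qualified CRM stage
    meetings: int
    opportunities: int


def ratio(num: float, den: float) -> Optional[float]:
    return num / den if den else None


def scorecard(r: CreativeResults) -> dict:
    return {
        "ctr": ratio(r.clicks, r.impressions),  # diagnostic only
        "cost_per_qualified_lead": ratio(r.spend, r.qualified_leads),
        "lead_to_meeting_rate": ratio(r.meetings, r.leads),
        "cost_per_opportunity": ratio(r.spend, r.opportunities),
    }


def pick_winner(results: dict) -> str:
    """Choose the lowest cost per qualified lead; creatives with none rank last."""
    def cpql(name: str) -> float:
        value = scorecard(results[name])["cost_per_qualified_lead"]
        return float("inf") if value is None else value
    return min(results, key=cpql)


# Hypothetical results for two creatives with equal spend.
results = {
    "A": CreativeResults(1000, 50_000, 1_500, 40, 10, 4, 2),
    "B": CreativeResults(1000, 50_000, 700, 25, 16, 8, 4),
}
print(pick_winner(results))  # B, despite A's higher CTR
```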

Step 3: Respect Evaluation Windows

Meta performance fluctuates during the learning phase. Early results rarely represent steady-state behavior.

Allow sufficient time and spend before judging performance. Premature decisions reduce statistical reliability and increase volatility.

If you often pause and relaunch ads, understand why pausing and restarting Facebook ads can hurt results and adjust your workflow.

Avoid daily micro-optimizations. Weekly review cycles create consistency.
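A simple gate can turn "allow sufficient time and spend" plus "weekly review cycles" into an explicit rule. This is a sketch: `MIN_DAYS`, `MIN_SPEND`, `MIN_RESULTS`, and the Monday review day are hypothetical thresholds you would set from your own volume and sales cycle.

```python
import datetime as dt
from dataclasses import dataclass

# Hypothetical thresholds; set them from your own volume and sales cycle.
MIN_DAYS = 7
MIN_SPEND = 500.0
MIN_RESULTS = 20


@dataclass
class ExperimentStatus:
    days_live: int
    spend: float
    results: int  # optimization events, e.g. leads


def ready_to_evaluate(s: ExperimentStatus) -> bool:
    """True only when every threshold is met; until then, leave the test alone."""
    return s.days_live >= MIN_DAYS and s.spend >= MIN_SPEND and s.results >= MIN_RESULTS


def is_review_day(day: dt.date, review_weekday: int = 0) -> bool:
    """Decisions happen on one fixed weekday (0 = Monday), not daily."""
    return day.weekday() == review_weekday


def decide_today(today: dt.date, s: ExperimentStatus) -> str:
    if not is_review_day(today):
        return "wait: not a review day"
    if not ready_to_evaluate(s):
        return "wait: evaluation window not complete"
    return "evaluate"


# 2024-01-01 is a Monday, but three days is too early to judge.
print(decide_today(dt.date(2024, 1, 1), ExperimentStatus(days_live=3, spend=800.0, results=25)))
```

Requiring all three thresholds at once means that neither a high-spend day nor a lucky run of cheap leads can trigger an early decision.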

Advanced Creative Testing Approaches

Once the foundation is stable, you can refine methodology.

Sequential Hook Testing

Hooks decide whether anyone stops scrolling long enough to see the rest of the ad. Test hooks before redesigning entire ads.

Keep the body identical. Change only the opening message. This isolates attention impact from persuasion depth.

After identifying a strong hook, test body variations underneath it.
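The two stages can be sketched as two batch builders: the first holds the body constant while hooks vary, and the second holds the winning hook constant while bodies vary. The hook copy, body labels, and stage-one costs below are hypothetical placeholders.

```python
def stage_one(hooks: list[str], control_body: str) -> list[dict]:
    """Stage 1: every variant shares one body; only the hook changes."""
    return [{"hook": h, "body": control_body} for h in hooks]


def stage_two(winning_hook: str, bodies: list[str]) -> list[dict]:
    """Stage 2: the validated hook stays fixed; only the body changes."""
    return [{"hook": winning_hook, "body": b} for b in bodies]


def best(cost_by_variant: dict) -> str:
    """Lowest cost wins, e.g. cost per qualified lead from a full review cycle."""
    return min(cost_by_variant, key=cost_by_variant.get)


hooks = ["Still guessing?", "Cut CPA in 30 days", "For teams spending 10k+/month"]
batch_1 = stage_one(hooks, control_body="Body v1")
# Hypothetical stage 1 results keyed by hook.
winner = best({"Still guessing?": 84.0, "Cut CPA in 30 days": 61.0, "For teams spending 10k+/month": 58.0})
batch_2 = stage_two(winner, ["Body v1", "Body v2 (case study)", "Body v3 (process)"])
print(winner)  # For teams spending 10k+/month
```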

Message Qualification Layers

Stable accounts filter poor-fit users early.

Introduce qualification elements such as:

- Price anchors; mention budget ranges to deter low-intent clicks.
- Specific use cases; narrow appeal to high-value segments.
- Explicit constraints; state who the offer is not for.

Higher CTR is not the goal. Lower-volume but higher-quality traffic stabilizes CPA.

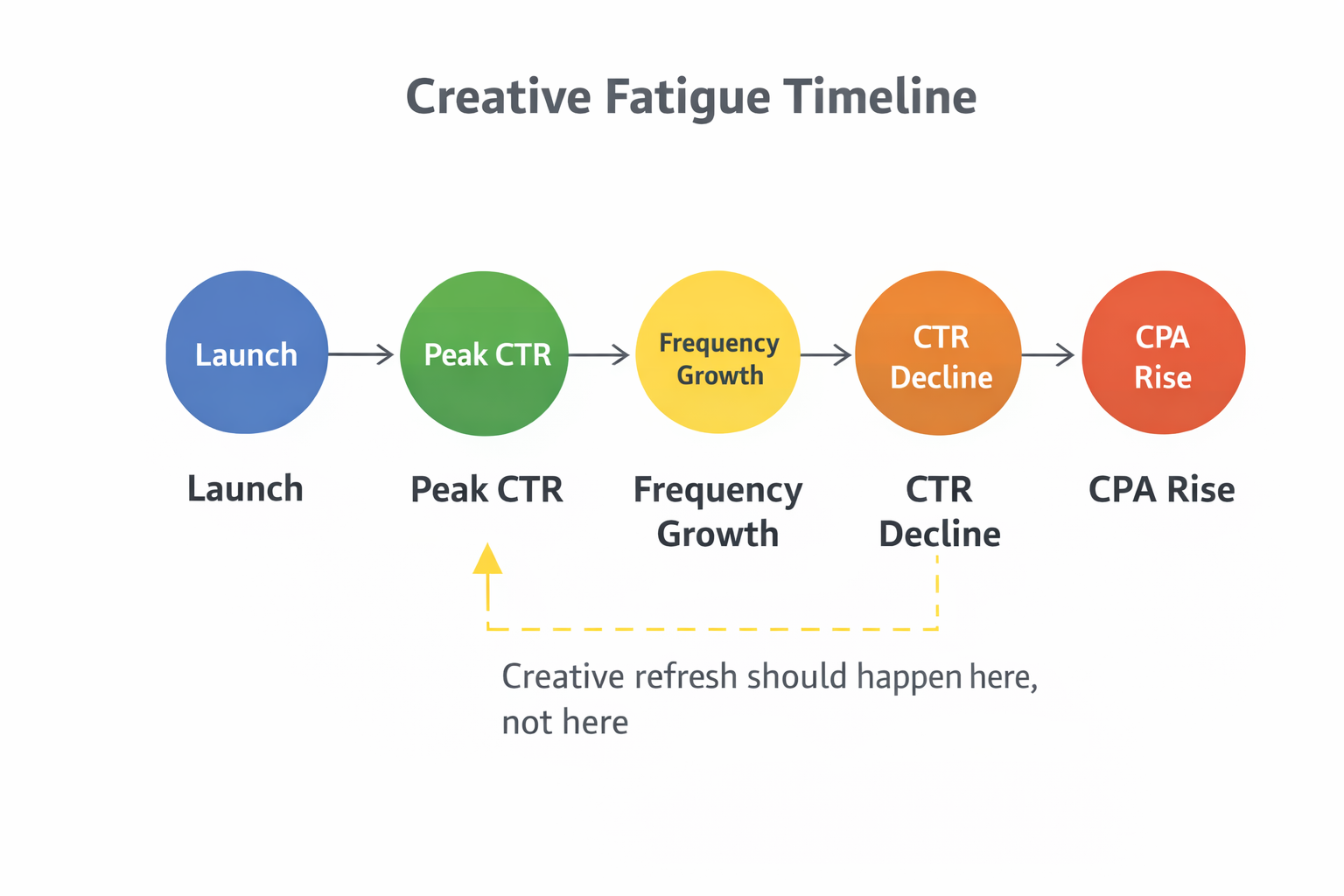

Fatigue Mapping Instead of Creative Rotation

Many advertisers replace ads once performance drops. This masks deeper signals.

Instead, map fatigue patterns:

- Track frequency against declining lead quality; detect saturation thresholds.
- Monitor rising CPM alongside falling conversion rates; identify audience exhaustion.
- Compare new audience segments using the same creative; isolate message fatigue from targeting fatigue.

Creative decay often reflects audience saturation rather than poor design.
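The first two fatigue patterns can be flagged from weekly exports with a week-over-week comparison. This sketch assumes hypothetical `WeeklyStats` rows and a frequency ceiling of 3.0 that is a placeholder, not a recommendation. It does not cover the third check (same creative on a new segment), which requires a separate test.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    week: int
    frequency: float
    cpm: float
    conversion_rate: float  # conversions / clicks
    qualified_share: float  # qualified leads / all leads


def fatigue_flags(rows: list[WeeklyStats], frequency_ceiling: float = 3.0) -> list[tuple[int, str]]:
    """Compare each week with the one before it and name the fatigue pattern."""
    flags = []
    for prev, cur in zip(rows, rows[1:]):
        if cur.cpm > prev.cpm and cur.conversion_rate < prev.conversion_rate:
            flags.append((cur.week, "audience exhaustion: CPM up, conversion rate down"))
        if cur.frequency > frequency_ceiling and cur.qualified_share < prev.qualified_share:
            flags.append((cur.week, "saturation: frequency above ceiling, lead quality down"))
    return flags


# Hypothetical weekly data for one creative.
weeks = [
    WeeklyStats(1, 1.8, 12.0, 0.050, 0.40),
    WeeklyStats(2, 2.6, 12.5, 0.052, 0.41),
    WeeklyStats(3, 3.4, 14.0, 0.041, 0.30),
]
for week, reason in fatigue_flags(weeks):
    print(week, reason)
```

Week 2 is not flagged because CPM rose while conversion rate also rose. Week 3 triggers both patterns.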

Structuring Testing Campaigns for Stability

Campaign structure affects algorithm behavior. Small adjustments create significant variance.

Broad Targeting for Testing

Broad targeting improves signal density. Narrow audiences distort results and exaggerate volatility.

Testing aims to evaluate message strength, not micro-segmentation. Once validated, creatives can enter more refined campaigns.

Consistent Optimization Events

Changing optimization goals mid-test invalidates comparisons.

Keep the same objective and event across all variants. This ensures consistent auction behavior and delivery logic.

Switching from leads to conversions mid-cycle resets learning and compromises evaluation integrity.

Promotion Rules: When a Creative Graduates

A creative should enter scaling only after proving stability.

Graduation criteria may include:

- Sustained cost per qualified lead below account average; measured over a full review cycle.
- Stable lead-to-meeting rate; no sharp declines across consecutive periods.
- Predictable spend efficiency; no extreme volatility under incremental budget increases.

Promotion without these checks often transfers instability into scaling campaigns.
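The three graduation criteria can be written as one boolean check. This is a sketch under stated assumptions: "sharp decline" is modeled as a drop of more than `max_rate_drop` (20%) between consecutive periods, and "extreme volatility" as cost per qualified lead drifting more than `max_cpql_swing` (30%) from the first budget step. Both tolerances are hypothetical and should be tuned to your account.

```python
def graduates(
    cpql_by_period: list[float],
    account_avg_cpql: float,
    meeting_rate_by_period: list[float],
    cpql_by_budget_step: list[float],
    max_rate_drop: float = 0.2,
    max_cpql_swing: float = 0.3,
) -> bool:
    """Apply the three graduation checks; all must pass."""
    if not cpql_by_period or not cpql_by_budget_step:
        raise ValueError("need at least one review period and one budget step")
    # 1. Sustained cost per qualified lead below the account average.
    sustained = all(c < account_avg_cpql for c in cpql_by_period)
    # 2. Stable lead-to-meeting rate: no period drops more than max_rate_drop vs the last.
    stable = all(
        cur >= prev * (1 - max_rate_drop)
        for prev, cur in zip(meeting_rate_by_period, meeting_rate_by_period[1:])
    )
    # 3. Predictable efficiency: CPQL stays within max_cpql_swing of the first budget step.
    base = cpql_by_budget_step[0]
    predictable = all(abs(c - base) / base <= max_cpql_swing for c in cpql_by_budget_step)
    return sustained and stable and predictable


print(graduates([60, 58, 62], 75, [0.30, 0.29, 0.31], [60, 66, 70]))  # True
```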

Maintaining Long-Term Stability

Creative testing is continuous. Stability does not mean stagnation.

Maintain a rolling pipeline:

- Allocate a fixed monthly budget for experimentation; protect it from scaling pressure.
- Archive losing variations with documented insights; avoid repeating failed angles.
- Track theme performance over time; identify which narratives consistently attract buyers.

Over time, patterns emerge. Certain angles align with revenue stages better than others.
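A lightweight archive makes those patterns visible. In the minimal sketch below, each finished test is stored with a theme label, outcome, cost per qualified lead, and a written insight. The archive can then be summarized by theme and checked before relaunching an angle that has already lost. `ArchivedTest` and the theme labels are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ArchivedTest:
    theme: str                      # narrative or angle label, e.g. "roi"
    won: bool
    cost_per_qualified_lead: float
    insight: str                    # documented lesson from the test


def theme_summary(archive: list[ArchivedTest]) -> dict:
    """Group archived tests by theme and report volume, win rate, and average CPQL."""
    by_theme = defaultdict(list)
    for entry in archive:
        by_theme[entry.theme].append(entry)
    return {
        theme: {
            "tests": len(entries),
            "win_rate": sum(e.won for e in entries) / len(entries),
            "avg_cpql": sum(e.cost_per_qualified_lead for e in entries) / len(entries),
        }
        for theme, entries in by_theme.items()
    }


def has_failed_before(archive: list[ArchivedTest], theme: str) -> bool:
    """Check before relaunching an angle that already lost."""
    return any(e.theme == theme and not e.won for e in archive)


archive = [
    ArchivedTest("roi", True, 55.0, "Concrete savings figures qualified buyers."),
    ArchivedTest("roi", False, 80.0, "Vague ROI claims drew tire-kickers."),
    ArchivedTest("fear", False, 120.0, "Loss framing raised CTR but not meetings."),
]
print(theme_summary(archive))
```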

Accounts that treat creative testing as an operational system achieve smoother CPAs and fewer performance shocks. Results stop depending on luck and start reflecting structure.

Stable results are not accidental. They are engineered through disciplined experimentation and controlled promotion.