You publish multiple posts that look equally strong, yet performance varies without a clear pattern. One post scales, another stalls, and CPC gradually increases.

Inside Insights, this shows up as uneven reach distribution and inconsistent engagement rates. Meta is trying to optimize delivery, but it lacks a direct comparison between your content variations.

A/B content testing solves this by forcing structured competition between posts before full distribution.

How A/B content testing works in Meta Business Suite

Meta allows you to test up to four variants of a post or reel in a controlled environment before publishing anything to your Page.

The mechanics are straightforward but important:

- Each variant is shown to a subset of your audience. Meta splits delivery so different users see different versions, allowing clean comparison of engagement signals.

- You define the duration of the test. For example, a 30-minute test captures early engagement signals, while longer tests reflect more stable behavior.

- You choose the metric that determines the winner. This could be 1-minute video views, engagement, or clicks, depending on your objective.

- The winning variant is automatically published. After the test ends, Meta distributes the best-performing version to your full audience.

During the test, variants are not visible on your Page. They only exist inside the test environment until a winner is selected.
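To make the moving parts concrete, here is a minimal Python sketch of a test setup: up to four variants, a duration, and one metric that decides the winner. This is not Meta's API. The `Variant` and `ContentTest` classes, the metric names, and the numbers are all hypothetical and exist only to model the settings described above.

```python
from dataclasses import dataclass, field

MAX_VARIANTS = 4  # the article states that a test holds up to four variants


@dataclass
class Variant:
    name: str
    # Hypothetical metric counts collected during the test window.
    metrics: dict[str, int] = field(default_factory=dict)


@dataclass
class ContentTest:
    variants: list[Variant]
    duration_minutes: int
    winning_metric: str  # hypothetical keys, e.g. "clicks" or "one_minute_views"

    def __post_init__(self) -> None:
        if not 2 <= len(self.variants) <= MAX_VARIANTS:
            raise ValueError(f"a test needs 2 to {MAX_VARIANTS} variants")
        if self.duration_minutes <= 0:
            raise ValueError("duration must be positive")

    def pick_winner(self) -> Variant:
        # The variant with the highest count for the chosen metric wins.
        return max(
            self.variants,
            key=lambda v: v.metrics.get(self.winning_metric, 0),
        )


# Illustrative numbers only, not real campaign data.
test = ContentTest(
    variants=[
        Variant("A", {"clicks": 8, "one_minute_views": 120}),
        Variant("B", {"clicks": 21, "one_minute_views": 95}),
    ],
    duration_minutes=30,
    winning_metric="clicks",
)
print(test.pick_winner().name)
```

With `winning_metric="clicks"` the sketch selects variant B. Switching the metric to `"one_minute_views"` would select A, which is why the metric choice deserves as much attention as the creative itself.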

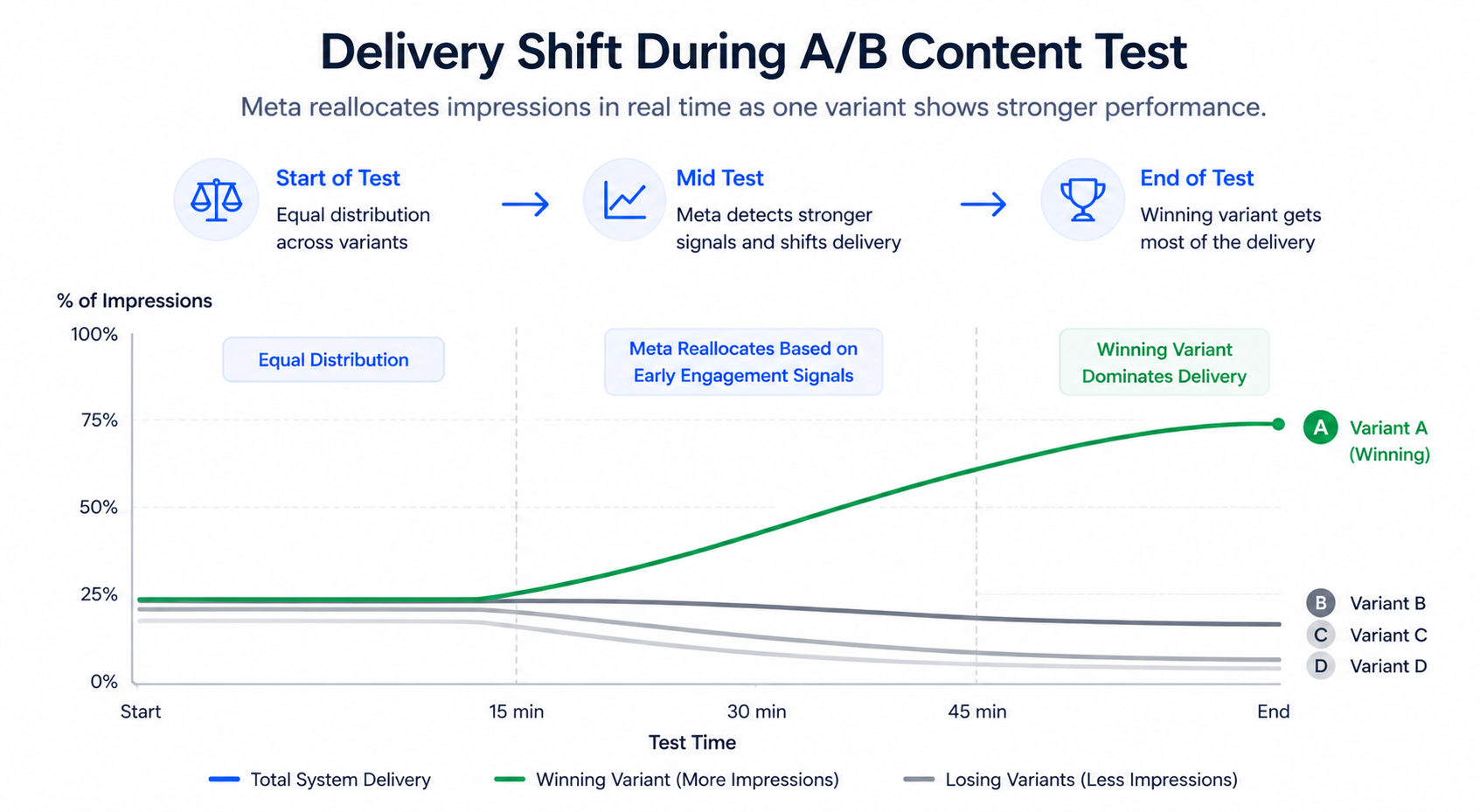

What Meta actually evaluates during the test

Meta doesn’t rely on estimates. It uses real behavioral signals from users exposed to each variant.

The most influential signals include:

- Early engagement velocity. If one version generates more interactions within the first few hundred impressions, Meta increases its delivery weight quickly.

- Retention signals for video content. Metrics like 1-minute views indicate deeper engagement and influence ranking.

- Engagement efficiency relative to reach. A post with fewer impressions but stronger interaction rate often wins.

In practice, you’ll see this as uneven impression distribution during the test. One variant starts receiving more delivery as Meta identifies it as stronger.
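Engagement efficiency is simply interactions divided by impressions. The short sketch below ranks variants by that rate rather than by raw counts. The snapshot numbers are invented for illustration, and the ranking rule is an assumption about how to read the data, not a description of Meta's internal scoring.

```python
def engagement_rate(interactions: int, impressions: int) -> float:
    """Interactions per impression; 0.0 when nothing has been delivered yet."""
    if impressions <= 0:
        return 0.0
    return interactions / impressions


# Hypothetical mid-test snapshot, not real data.
snapshot = {
    "A": {"impressions": 1200, "interactions": 48},
    "B": {"impressions": 800, "interactions": 44},
}

rates = {
    name: engagement_rate(stats["interactions"], stats["impressions"])
    for name, stats in snapshot.items()
}

# Rank by rate, not by raw interaction count.
ranked = sorted(rates, key=rates.get, reverse=True)

for name in ranked:
    print(f"{name}: {rates[name]:.1%}")
```

Variant A has more total interactions (48 versus 44), but B converts a larger share of its impressions (5.5% versus 4.0%), so B ranks first.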

Why your chosen metric can lead to the wrong outcome

The test result is only as good as the metric you define.

A common situation:

- Variant A generates high reactions and comments.

- Variant B generates fewer interactions but drives more clicks and conversions.

If you optimize for engagement, Meta will select A. From a business standpoint, B is often more valuable.

This is why the metric has to match the business goal. Even click-through rate can be misleading, so decide what “winning” actually means before the test starts.
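The A versus B situation can be reproduced in a few lines of Python. The figures below are made up, and the `engagement` and `clicks` functions are hypothetical stand-ins for whichever metric you select. The point is only that the same data yields different winners under different definitions.

```python
from typing import Callable

Stats = dict[str, int]

# Hypothetical results for two variants with equal delivery.
results: dict[str, Stats] = {
    "A": {"impressions": 1000, "reactions": 90, "comments": 30, "clicks": 8},
    "B": {"impressions": 1000, "reactions": 40, "comments": 10, "clicks": 21},
}


def engagement(stats: Stats) -> int:
    # Assumed definition for this sketch: reactions plus comments.
    return stats["reactions"] + stats["comments"]


def clicks(stats: Stats) -> int:
    return stats["clicks"]


def winner(data: dict[str, Stats], metric: Callable[[Stats], int]) -> str:
    """Name of the variant with the highest value for the given metric."""
    return max(data, key=lambda name: metric(data[name]))


print("Winner by engagement:", winner(results, engagement))
print("Winner by clicks:", winner(results, clicks))
```

Optimizing for engagement picks A and optimizing for clicks picks B, even though nothing about the content changed between the two runs.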

Real campaign example: small change, measurable cost impact

A SaaS team tests two video hooks:

- Hook A introduces the product immediately.

- Hook B starts with a problem and builds tension before the solution.

Within 45 minutes:

- Hook B generates significantly higher 1-minute views.

- Engagement is stronger within the first 1,000 impressions.

After Meta selects Hook B:

- CTR increases from 0.9% to 1.4%.

- CPC drops by over 20%.

- Lead quality improves due to better message alignment.

Without testing, the team would likely have chosen the weaker version.
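The CTR and CPC figures are linked by simple arithmetic: CPC equals CPM divided by (1000 × CTR). The sketch below computes the relative CTR lift from the example and the CPC change that lift would imply if CPM stayed constant. The constant-CPM assumption and the `HYPOTHETICAL_CPM` value are illustrative only, since the example does not report CPM.

```python
def relative_change(before: float, after: float) -> float:
    """Fractional change from `before` to `after` (0.25 means +25%)."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


def cpc_from(cpm: float, ctr: float) -> float:
    """Cost per click implied by a CPM and a CTR expressed as a fraction."""
    # Cost per impression is cpm / 1000; clicks per impression is ctr.
    return cpm / (1000 * ctr)


CTR_BEFORE = 0.009  # 0.9%, from the example
CTR_AFTER = 0.014   # 1.4%, from the example
HYPOTHETICAL_CPM = 12.0  # assumed; cancels out of the relative change

ctr_lift = relative_change(CTR_BEFORE, CTR_AFTER)
cpc_change = relative_change(
    cpc_from(HYPOTHETICAL_CPM, CTR_BEFORE),
    cpc_from(HYPOTHETICAL_CPM, CTR_AFTER),
)

print(f"CTR lift: {ctr_lift:+.1%}")
print(f"CPC change at constant CPM: {cpc_change:+.1%}")
```

The CTR lift works out to roughly 56% in relative terms. At a constant CPM that would correspond to a CPC drop of about 36%, which is consistent with the "over 20%" reported above. The real figure depends on how CPM moved over the same period.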

What happens after the test ends

The end of the test doesn’t mean all variants disappear immediately.

There are two key behaviors to understand:

- Users who saw variants during the test may still see them later. This means engagement data continues accumulating even after the test ends.

- Only the winning variant is published on your Page. Your feed remains clean, but internally multiple versions were evaluated.

This often explains discrepancies between test results and post-level metrics.

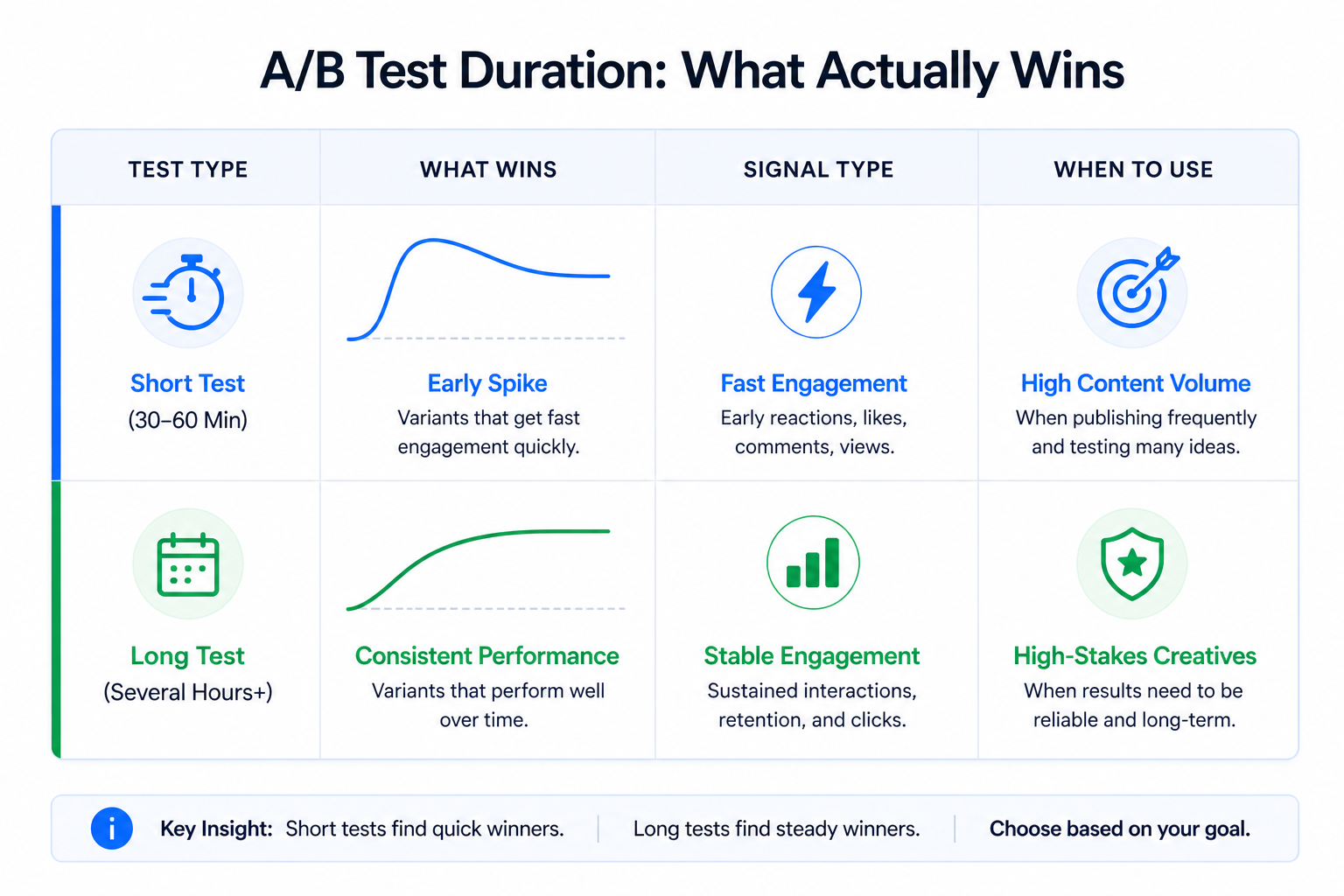

How test duration changes what “winning” means

Short and long tests produce different types of winners.

Short tests (30–60 minutes):

- Prioritize fast engagement signals.

- Reduce wasted impressions on weaker variants.

- Work well for frequent content publishing.

Longer tests:

- Capture more stable engagement behavior.

- May favor consistency over initial performance spikes.

If your goal is efficiency and cost control, shorter tests are usually more effective.

What can break your test results

Even with Meta controlling distribution, several factors can distort outcomes:

- Testing too many variables at once. If multiple elements change simultaneously, Meta cannot isolate the cause. It is the same reason that testing too many ads at once hurts performance.

- Poor test structure. Without isolating variables, results become unreliable. Teams that follow a framework for structuring reliable A/B tests for paid traffic consistently get clearer insights.

- Weak or inconsistent audience signals. If engagement is unstable, Meta cannot confidently determine a winner.

These issues typically show up as inconsistent impression distribution and unclear performance gaps.
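One way to judge whether a performance gap is clear or just noise is a two-proportion z-test on the engagement rates. This is a generic statistical check that you can run on exported numbers yourself. It is not something the article attributes to Meta, and the 1.96 threshold (about 95% confidence) and the sample figures are assumptions.

```python
import math


def two_proportion_z(success_a: int, n_a: int, success_b: int, n_b: int) -> float:
    """z-score for the difference between two rates (B minus A)."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled rate under the hypothesis that both variants perform equally.
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0
    return (p_b - p_a) / std_err


def is_clear_gap(z: float, threshold: float = 1.96) -> bool:
    # 1.96 corresponds to roughly 95% confidence in a two-sided test.
    return abs(z) >= threshold


# Hypothetical counts: interactions out of impressions for A and B.
noisy = two_proportion_z(40, 1000, 44, 1000)
clear = two_proportion_z(30, 1000, 60, 1000)

print(f"4.0% vs 4.4%: z = {noisy:.2f}, clear gap: {is_clear_gap(noisy)}")
print(f"3.0% vs 6.0%: z = {clear:.2f}, clear gap: {is_clear_gap(clear)}")
```

A gap of 4.0% versus 4.4% on 1,000 impressions each does not pass the threshold, while 3.0% versus 6.0% does. Small differences on small samples are the "unclear performance gaps" described above.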

How A/B testing improves paid campaign performance

Winning organic variants often translate into stronger ad creatives.

Because they already proved engagement efficiency, they enter paid campaigns with stronger signals:

- Faster learning phase exit.

- Lower CPM due to higher relevance.

- More efficient budget allocation.

This reduces the trial-and-error phase in paid campaigns.

Final takeaway

Meta A/B content testing is not just a publishing feature. It’s a way to control how the algorithm learns from your content.

By testing variations before full distribution, you reduce wasted impressions and send stronger signals into the system. That leads to lower CPC, more stable performance, and more predictable scaling.