Algorithmic bias in advertising occurs when a platform’s optimization system repeatedly prioritizes one creative over others, often before sufficient performance data has been collected. Instead of testing all variants fairly, the system quickly pushes budget toward one asset.

According to industry research, many programmatic platforms shift more than 70% of impressions to a single ad variant within the first 24–48 hours of a campaign. While early optimization can improve performance, premature bias can prevent advertisers from discovering higher-performing creatives.

A study by the Interactive Advertising Bureau found that campaigns with balanced creative testing can improve conversion rates by up to 30% compared with campaigns where delivery algorithms concentrate impressions too early.

Why Algorithms Favor One Creative

Several factors influence why algorithms begin favoring a specific creative:

Early Engagement Signals

Algorithms rely on early signals such as click-through rate (CTR), view time, or other engagement metrics. If one creative receives slightly stronger initial engagement, the system may treat that small lead as a strong signal and rapidly scale its delivery.

Learning Phase Limitations

Many ad platforms operate in a learning phase where limited data guides early optimization decisions. With small sample sizes, even random performance fluctuations can cause the algorithm to prematurely commit to one creative.
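
To illustrate how random fluctuation alone can produce an apparent winner, here is a minimal simulation sketch. It assumes two creatives with an identical true CTR and counts how often one of them appears to lead the other by 25% or more. The function names, the 2% CTR, the 25% lead, and the sample sizes are all hypothetical values chosen for illustration, not figures from any ad platform.

```python
import random


def simulate_clicks(rng, impressions, ctr):
    """Simulate the number of clicks from a given number of impressions."""
    return sum(1 for _ in range(impressions) if rng.random() < ctr)


def false_lead_rate(impressions_per_creative, true_ctr=0.02, lead=1.25,
                    trials=300, seed=0):
    """Fraction of trials in which one of two identical creatives
    appears to beat the other by the given relative lead."""
    rng = random.Random(seed)
    false_leads = 0
    for _ in range(trials):
        a = simulate_clicks(rng, impressions_per_creative, true_ctr)
        b = simulate_clicks(rng, impressions_per_creative, true_ctr)
        low, high = min(a, b), max(a, b)
        # Both creatives have equal impressions, so comparing clicks
        # is equivalent to comparing observed CTR.
        if high > 0 and high >= lead * low:
            false_leads += 1
    return false_leads / trials


# Assumed sample sizes: a small early-learning sample versus a larger one.
small_sample_rate = false_lead_rate(200)
large_sample_rate = false_lead_rate(5000)
print(f"False lead rate at 200 impressions:  {small_sample_rate:.0%}")
print(f"False lead rate at 5000 impressions: {large_sample_rate:.0%}")
```

Because both simulated creatives are identical, every "lead" counted here is noise. The rate should drop noticeably as the sample grows, which is the reason early commitments made on small samples are unreliable.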

Budget Constraints

Smaller campaign budgets provide fewer impressions for testing. When the algorithm has limited data, it tends to concentrate delivery on the variant that appears most promising.

Audience Segmentation Effects

If one creative resonates with a specific segment that receives early impressions, the algorithm may overestimate its effectiveness and shift delivery disproportionately.

Signs of Algorithmic Bias in Campaign Data

Detecting bias requires monitoring distribution patterns across creatives. The following indicators often reveal imbalanced delivery:

Disproportionate Impression Distribution

If one creative receives more than 60–70% of impressions shortly after launch, the campaign may already be experiencing algorithmic bias.

Incomplete Creative Testing

When multiple creatives are uploaded but only one accumulates meaningful data, it becomes impossible to determine which asset truly performs best.

Sudden Delivery Acceleration

A rapid spike in impressions for a single creative within the first few hours or days often signals that the algorithm has already committed to that variant.

Limited Performance Differentiation

If the favored creative does not significantly outperform others in CTR or conversion rate but still dominates delivery, the algorithm may be optimizing prematurely.

Methods to Detect Bias Systematically

To identify algorithmic favoritism more reliably, advertisers should analyze campaign data using structured evaluation methods.

Impression Share Analysis

Compare impression distribution across all creatives. Ideally, early testing phases should distribute impressions relatively evenly until statistically meaningful performance differences appear.
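
As a rough sketch of this check, the snippet below computes each creative's impression share and flags any creative above a threshold. The creative names, the counts, and the 60% threshold are assumptions for illustration; real data would come from a platform report export.

```python
def impression_shares(impressions):
    """Return each creative's share of total impressions (0.0 to 1.0)."""
    total = sum(impressions.values())
    if total == 0:
        return {name: 0.0 for name in impressions}
    return {name: count / total for name, count in impressions.items()}


def dominant_creatives(impressions, threshold=0.6):
    """List creatives whose impression share exceeds the threshold."""
    shares = impression_shares(impressions)
    return [name for name, share in shares.items() if share > threshold]


# Hypothetical counts from the first day of a campaign.
early_impressions = {"creative_a": 7200, "creative_b": 1500, "creative_c": 1300}

shares = impression_shares(early_impressions)
flagged = dominant_creatives(early_impressions, threshold=0.6)

for name, share in shares.items():
    print(f"{name}: {share:.1%}")
print("Flagged as dominant:", flagged)
```

The threshold is a judgment call. With three creatives an even split is about 33% each, so a lower threshold may be appropriate when more variants are in the test.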

Performance Confidence Intervals

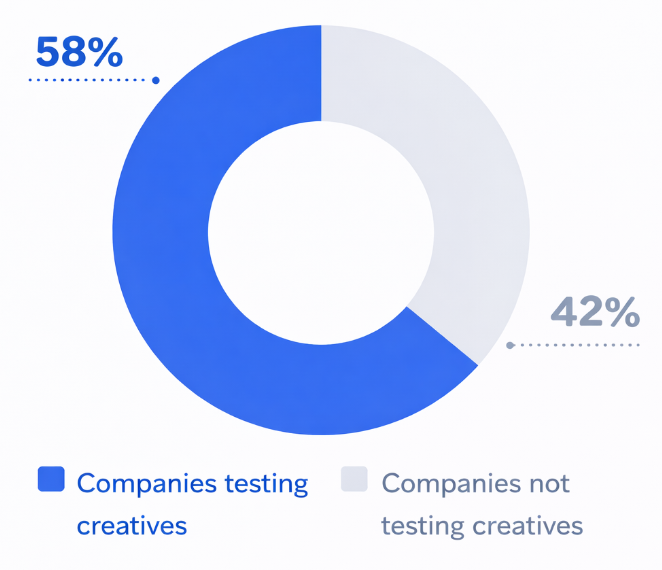

Ad Creative Testing Adoption Among Companies

Statistical confidence intervals help determine whether performance differences between creatives are meaningful or simply the result of random variation.
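
One possible way to apply this is to compute a Wilson score interval for each creative's CTR and check whether the intervals overlap. This is a sketch under stated assumptions: the Wilson interval is one of several valid interval methods, the 95% level (z = 1.96) is a conventional default, and the click and impression counts are hypothetical.

```python
import math


def wilson_interval(clicks, impressions, z=1.96):
    """Wilson score confidence interval for a click-through rate."""
    if impressions == 0:
        return (0.0, 1.0)  # No data: the rate could be anything.
    p = clicks / impressions
    denom = 1 + z ** 2 / impressions
    center = (p + z ** 2 / (2 * impressions)) / denom
    half_width = z * math.sqrt(
        p * (1 - p) / impressions + z ** 2 / (4 * impressions ** 2)
    ) / denom
    return (max(0.0, center - half_width), min(1.0, center + half_width))


def intervals_overlap(first, second):
    """True if two (low, high) intervals share any values."""
    return first[0] <= second[1] and second[0] <= first[1]


# Hypothetical early data: the favored creative has far more impressions
# but a nearly identical observed CTR.
favored = wilson_interval(clicks=90, impressions=7200)
neglected = wilson_interval(clicks=18, impressions=1500)

print(f"Favored CTR interval:   {favored[0]:.2%} to {favored[1]:.2%}")
print(f"Neglected CTR interval: {neglected[0]:.2%} to {neglected[1]:.2%}")
print("Intervals overlap:", intervals_overlap(favored, neglected))
```

Overlap is a conservative check. Non-overlapping intervals suggest a real difference, while overlapping intervals mean the data so far cannot separate the creatives, which is a reason to keep testing rather than proof that they perform equally.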

Time-Based Delivery Monitoring

Track how impression share changes over time. If one creative dominates within the first few hours or days, early optimization may be distorting the testing process.
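
A minimal sketch of this kind of monitoring follows. It assumes impressions are available per creative for each time window; the window labels, counts, and 60% threshold are hypothetical.

```python
def share_over_time(snapshots):
    """Convert per-window impression counts into per-window shares.

    snapshots: list of (window_label, {creative: impressions}) tuples.
    """
    timeline = []
    for label, counts in snapshots:
        total = sum(counts.values())
        shares = {
            name: (count / total if total else 0.0)
            for name, count in counts.items()
        }
        timeline.append((label, shares))
    return timeline


def first_dominance(snapshots, threshold=0.6):
    """Return (window, creative, share) for the first window in which
    any creative exceeds the threshold, or None if that never happens."""
    for label, shares in share_over_time(snapshots):
        for name, share in shares.items():
            if share > threshold:
                return (label, name, share)
    return None


# Hypothetical impressions delivered within each window after launch.
windows = [
    ("0-6h", {"creative_a": 340, "creative_b": 330, "creative_c": 330}),
    ("6-12h", {"creative_a": 500, "creative_b": 260, "creative_c": 240}),
    ("12-24h", {"creative_a": 1300, "creative_b": 400, "creative_c": 300}),
]

result = first_dominance(windows)
print("First dominant window:", result)
```

Knowing when dominance first appears helps judge whether the platform had enough data at that point to justify the shift.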

Cross-Audience Comparison

Analyze whether the same creative dominates across multiple audience segments. If dominance appears only in a specific segment, the imbalance may reflect a genuine audience preference rather than algorithmic bias.
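
The comparison could be sketched as follows, assuming impressions can be broken down by audience segment. The segment names, counts, threshold, and the three result labels ("balanced", "global", "segment_specific") are hypothetical choices made for this example.

```python
def dominant_by_segment(segment_impressions, threshold=0.6):
    """Map each segment to its dominant creative, or None if no creative
    exceeds the threshold share in that segment."""
    result = {}
    for segment, counts in segment_impressions.items():
        total = sum(counts.values())
        if total == 0:
            result[segment] = None
            continue
        top = max(counts, key=counts.get)
        result[segment] = top if counts[top] / total > threshold else None
    return result


def classify_dominance(segment_impressions, threshold=0.6):
    """Label the overall pattern of dominance across segments."""
    winners = dominant_by_segment(segment_impressions, threshold)
    dominated = [w for w in winners.values() if w is not None]
    if not dominated:
        return "balanced"
    if len(dominated) == len(winners) and len(set(dominated)) == 1:
        return "global"  # Same creative dominates every segment.
    return "segment_specific"


# Hypothetical breakdown by age segment.
by_segment = {
    "18-24": {"creative_a": 800, "creative_b": 200},
    "25-34": {"creative_a": 450, "creative_b": 550},
    "35-44": {"creative_a": 500, "creative_b": 500},
}

pattern = classify_dominance(by_segment)
print("Dominance pattern:", pattern)
```

A "segment_specific" result points toward an audience effect worth investigating, while a "global" result is more consistent with the delivery system favoring one creative regardless of audience.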

How to Reduce the Impact of Algorithmic Bias

Although algorithms cannot be completely controlled, several tactics can improve fairness in creative testing.

Use Structured Creative Testing

Separate testing campaigns from scaling campaigns. In the testing phase, ensure that each creative receives sufficient impressions before the algorithm begins optimizing.
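
What counts as "sufficient impressions" depends on the size of the difference you want to detect. As a rough guide, the sketch below uses the standard sample-size approximation for comparing two proportions. The baseline CTR, the CTR you hope to detect, the 5% significance level, and the 80% power are all assumed inputs for illustration.

```python
import math
from statistics import NormalDist


def impressions_needed(baseline_ctr, expected_ctr, alpha=0.05, power=0.8):
    """Approximate impressions per creative needed to detect the
    difference between two CTRs with a two-sided test."""
    if baseline_ctr == expected_ctr:
        raise ValueError("The two CTRs must differ.")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = (baseline_ctr * (1 - baseline_ctr)
                + expected_ctr * (1 - expected_ctr))
    effect = expected_ctr - baseline_ctr
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical goal: tell a 2.0% CTR apart from a 2.5% CTR.
needed = impressions_needed(0.02, 0.025)
print(f"Impressions needed per creative: {needed}")
```

Smaller expected differences require far more impressions, which is why low-budget tests often cannot separate similar creatives.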

Increase Creative Volume

Campaigns with a larger set of creatives often reduce the chance that the algorithm locks onto a single variant too quickly.

Monitor Early Campaign Data Closely

Review campaign performance during the first 24–72 hours. Early monitoring allows advertisers to pause overly dominant creatives and rebalance delivery.

Segment Testing Campaigns

Running separate campaigns for different creative groups or audiences can prevent algorithms from making premature global optimization decisions.

Why Detecting Bias Matters

Creative diversity is essential for discovering winning advertising messages. When algorithms favor one creative too early, campaigns may miss valuable insights.

Research shows that advertisers who systematically test multiple creatives can achieve long-term performance improvements up to 50% stronger than those of campaigns that rely on automated creative selection alone.

Identifying algorithmic bias allows marketers to maintain a more controlled testing environment, ensuring that campaign optimization is based on reliable performance data rather than early algorithmic assumptions.

Conclusion

Automated advertising systems can significantly improve campaign efficiency, but they also introduce the risk of premature optimization. Detecting algorithmic bias toward a single creative requires careful analysis of impression distribution, performance data, and delivery patterns.

By monitoring early campaign behavior and implementing structured testing strategies, advertisers can prevent algorithms from skewing results and ensure that creative performance is evaluated fairly.