Creative testing is a cornerstone of modern digital marketing. Marketers routinely experiment with different headlines, images, formats, and calls to action in order to improve engagement and conversions. However, when dozens of creative variations are launched simultaneously, campaigns can quickly lose analytical clarity.

Instead of identifying clear winners, marketers may see fragmented results, inconsistent performance data, and slow optimization cycles. Understanding the consequences of excessive creative competition is essential for running efficient and data-driven campaigns.

Why Marketers Launch Many Creative Variations

There are several reasons marketing teams test many creatives at once:

- They want to accelerate learning and identify high-performing assets quickly.
- Multiple teams or stakeholders contribute ideas simultaneously.
- Platforms encourage experimentation through automated testing tools.

While experimentation is beneficial, too many concurrent variations often produce unintended consequences.

The Data Dilution Problem

When a campaign distributes impressions across too many creatives, each variation receives only a small share of the total audience. This weakens the statistical reliability of performance metrics.
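To make the dilution concrete, here is a minimal Python sketch. It assumes a hypothetical fixed budget of 100,000 impressions split evenly across creatives and a hypothetical 2% baseline click-through rate, and it uses the standard normal approximation to estimate how precisely each creative's CTR can be measured.

```python
import math

def ctr_margin_of_error(total_impressions: int, num_creatives: int,
                        baseline_ctr: float, z: float = 1.96) -> tuple[int, float]:
    """Return (impressions per creative, approximate 95% margin of error on each CTR)."""
    # Even split of a fixed impression budget across all creatives
    per_creative = total_impressions // num_creatives
    # Normal approximation to the binomial: z * sqrt(p * (1 - p) / n)
    margin = z * math.sqrt(baseline_ctr * (1 - baseline_ctr) / per_creative)
    return per_creative, margin

for k in (3, 5, 20, 50):
    n, moe = ctr_margin_of_error(100_000, k, baseline_ctr=0.02)
    print(f"{k:>2} creatives: {n:>6,} impressions each, a 2.00% CTR measured to within ±{moe:.2%}")
```

Because each creative's sample shrinks in proportion to the number of creatives, the margin of error grows roughly with the square root of that number: under these assumptions, the uncertainty around a 2% CTR is about four times wider with 50 creatives than with 3.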

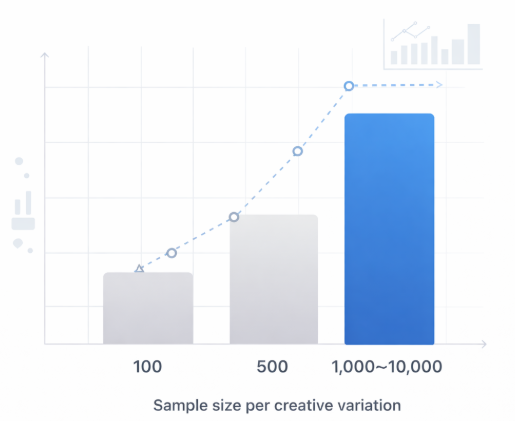

Research in marketing experimentation suggests that reliable A/B test results typically require thousands of impressions per variation, with the exact number depending on the baseline conversion rate and the size of the difference you want to detect. When impressions are divided among a large number of creatives, none of them accumulates enough data to support meaningful conclusions.

Minimum sample size required for reliable A/B testing results across creative variations
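The usual way to estimate that minimum is the sample-size formula for a two-sided, two-proportion z-test. The sketch below assumes a 5% significance level and 80% power (common defaults, not figures from this article); the 2% baseline and 20% relative lift in the usage line are hypothetical.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variation(baseline_rate: float, relative_lift: float,
                              alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate impressions needed per variation to detect `relative_lift`
    over `baseline_rate` with a two-sided two-proportion z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

print(sample_size_per_variation(baseline_rate=0.02, relative_lift=0.20))
```

Under these assumptions, detecting a lift from 2.0% to 2.4% needs roughly 21,000 impressions per variation, which suggests that "thousands" is best read as a floor rather than a target when baselines are low and expected lifts are modest.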

Industry studies indicate that campaigns with fewer than 1,000 impressions per creative often produce statistically unstable performance signals. In these conditions, minor fluctuations in engagement can falsely appear as meaningful trends.
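A quick simulation shows how noise alone can produce an apparent winner. The sketch below assumes 20 hypothetical creatives that all share exactly the same true CTR of 2% and receive 500 impressions each; any spread between their observed rates is therefore pure chance.

```python
import random

def simulate_identical_creatives(num_creatives: int, impressions_each: int,
                                 true_ctr: float, seed: int = 42) -> list[float]:
    """Simulate creatives with the SAME true CTR and return each one's observed CTR."""
    rng = random.Random(seed)
    observed = []
    for _ in range(num_creatives):
        # Each impression is an independent click / no-click draw
        clicks = sum(rng.random() < true_ctr for _ in range(impressions_each))
        observed.append(clicks / impressions_each)
    return observed

rates = simulate_identical_creatives(num_creatives=20, impressions_each=500, true_ctr=0.02)
print(f"best: {max(rates):.2%}, worst: {min(rates):.2%}, true CTR of every creative: 2.00%")
```

Whatever gap appears between the best and worst creative here is exactly the kind of fluctuation that can be mistaken for a meaningful trend.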

Slower Optimization Cycles

Another consequence of excessive creative competition is slower optimization. When many creatives run simultaneously, campaign algorithms require more time to determine which assets perform best.

Advertising platforms often rely on machine learning systems that prioritize high-performing creatives. If too many options are introduced at once, the algorithm must evaluate each one before confidently allocating budget.

Some digital advertising benchmarks report that campaigns with controlled creative testing cycles reach optimized performance up to 30–40% faster than campaigns that launch large creative batches.
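Ad platforms do not publish the details of their delivery algorithms, so the sketch below swaps in Thompson sampling, a widely used multi-armed bandit method, as a stand-in. It assumes hypothetical click-through rates (one creative at 3%, every other at 2%) and a fixed budget of 10,000 impressions, and reports what share of that budget reaches the genuinely best creative.

```python
import random

def thompson_share_to_best(true_ctrs: list[float], impressions: int,
                           seed: int = 7) -> float:
    """Allocate impressions with Beta-Bernoulli Thompson sampling and return the
    share of all impressions that went to the truly best creative."""
    rng = random.Random(seed)
    clicks = [0] * len(true_ctrs)
    misses = [0] * len(true_ctrs)
    best = max(range(len(true_ctrs)), key=lambda i: true_ctrs[i])
    shown_best = 0
    for _ in range(impressions):
        # Draw a plausible CTR for each creative from its Beta(1 + clicks, 1 + misses) posterior
        samples = [rng.betavariate(1 + c, 1 + m) for c, m in zip(clicks, misses)]
        arm = max(range(len(samples)), key=lambda i: samples[i])
        # Simulate whether this impression produced a click
        if rng.random() < true_ctrs[arm]:
            clicks[arm] += 1
        else:
            misses[arm] += 1
        shown_best += int(arm == best)
    return shown_best / impressions

few = [0.03] + [0.02] * 3    # 4 creatives, one of them better
many = [0.03] + [0.02] * 29  # 30 creatives, same single winner
share_few = thompson_share_to_best(few, 10_000)
share_many = thompson_share_to_best(many, 10_000)
print(f"4 creatives:  {share_few:.0%} of impressions went to the best one")
print(f"30 creatives: {share_many:.0%} of impressions went to the best one")
```

The exact percentages depend on the seed and the assumed rates and are not a reproduction of any published benchmark; the point is the direction. With the same budget, the 30-creative setup spends far more impressions exploring weaker options before it can favor the winner.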

Audience Fragmentation

Too many creatives can also fragment audience exposure. Instead of repeatedly reinforcing a strong message, audiences encounter many slightly different messages.

Marketing research consistently shows that repeated exposure improves recall and brand recognition. Studies in advertising effectiveness suggest that audiences typically require multiple exposures to the same message before responding.

When creative variation is excessive, no single message achieves sufficient frequency, which can reduce campaign effectiveness.
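A rough way to quantify this is to model exposures per user as Poisson. The sketch below assumes, hypothetically, that each user sees an average of six impressions from the campaign and that every impression shows a creative chosen uniformly at random, so exposures to any one creative are Poisson with mean 6 / N. The three-exposure threshold is an illustrative choice, not a figure from this article.

```python
import math

def share_reaching_frequency(avg_impressions_per_user: float, num_creatives: int,
                             min_exposures: int) -> float:
    """Probability that a user sees ONE specific creative at least `min_exposures`
    times, assuming per-creative exposures ~ Poisson(avg / num_creatives)."""
    lam = avg_impressions_per_user / num_creatives
    # P(X < min_exposures) for a Poisson(lam) variable
    below = sum(math.exp(-lam) * lam ** k / math.factorial(k) for k in range(min_exposures))
    return 1 - below

for n in (1, 3, 10, 30):
    share = share_reaching_frequency(avg_impressions_per_user=6, num_creatives=n, min_exposures=3)
    print(f"{n:>2} creatives: {share:.1%} of users see a given message 3+ times")
```

Under these assumptions, about 94% of users see the message three or more times when it runs alone, about 32% when it shares delivery with two other creatives, and only around 2% when it is one of ten.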

Decision-Making Becomes Difficult

Large creative test sets produce complex and sometimes contradictory datasets. A campaign may show:

- Slight performance differences between many creatives
- Rapid changes in top-performing variations
- Conflicting metrics across different audience segments

These conditions make it difficult for marketing teams to confidently select winning creatives or determine why certain variations perform better.

Instead of clear insights, teams may end up making subjective decisions based on incomplete data.
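Part of what makes many small differences hard to read is the multiple-comparisons problem: each extra comparison is another chance for noise to clear the significance bar. The sketch below assumes each challenger is compared against one control at a 5% significance level and treats those comparisons as independent, which is a simplification because comparisons sharing a control are correlated. It also shows the Bonferroni correction, one conservative way to tighten the per-comparison threshold.

```python
def family_wise_error_rate(num_comparisons: int, alpha: float = 0.05) -> float:
    """Chance of at least one false 'winner' when every creative is truly identical,
    treating comparisons as independent: 1 - (1 - alpha) ** m."""
    return 1 - (1 - alpha) ** num_comparisons

def bonferroni_alpha(num_comparisons: int, alpha: float = 0.05) -> float:
    """Per-comparison threshold that keeps the family-wise error rate at or below alpha."""
    return alpha / num_comparisons

for creatives in (3, 5, 20, 50):
    m = creatives - 1  # each challenger compared against one control
    print(f"{creatives:>2} creatives: {family_wise_error_rate(m):.0%} chance of a false winner; "
          f"Bonferroni threshold p < {bonferroni_alpha(m):.4f}")
```

Under these assumptions, 3 creatives carry about a 10% chance of crowning a false winner, while 20 creatives push that chance to about 62%.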

How to Structure Creative Testing More Effectively

To avoid the problems caused by excessive creative competition, marketers should structure their testing frameworks carefully.

1. Limit Concurrent Variations

Testing 3–5 variations at a time gives each creative a much better chance of accumulating enough impressions to produce statistically meaningful results.
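One way to choose the number is to work backwards from the budget. The sketch assumes an even split of impressions and a per-variation sample size you have already calculated; the 7,000 daily impressions, 14-day window, and 20,000-impression requirement are hypothetical inputs.

```python
def max_concurrent_variations(daily_impressions: int, test_days: int,
                              required_per_variation: int) -> int:
    """Largest number of creatives that can each reach the required sample
    within the test window, assuming impressions are split evenly."""
    if required_per_variation <= 0:
        raise ValueError("required_per_variation must be positive")
    total_impressions = daily_impressions * test_days
    return total_impressions // required_per_variation

print(max_concurrent_variations(7_000, 14, 20_000))  # → 4
```

If the result is below two, the budget cannot support a comparison at that sample size, and either the test window or the minimum lift you aim to detect needs to change.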

2. Test One Variable at a Time

When multiple creative elements change simultaneously, it becomes impossible to determine what caused performance differences. Structured testing isolates variables such as headlines, visuals, or call-to-action text.
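A simple way to enforce this is to generate every variant from a fixed control by changing a single field. The sketch below uses a hypothetical `Creative` record with `headline`, `visual`, and `cta` fields; the example copy and file name are placeholders.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Creative:
    headline: str
    visual: str
    cta: str

def single_variable_variants(control: Creative, field: str,
                             alternatives: list[str]) -> list[Creative]:
    """Build test variants that differ from the control in exactly one field."""
    # dataclasses.replace copies the control and overrides only the named field
    return [replace(control, **{field: alt}) for alt in alternatives]

control = Creative(headline="Save 20% today", visual="product_photo.jpg", cta="Shop now")
variants = single_variable_variants(
    control, "headline", ["Free shipping on all orders", "New spring collection"])
for v in [control, *variants]:
    print(v)
```

Because every variant shares the control's visual and call to action, any performance gap can be attributed to the headline alone.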

3. Use Sequential Testing

Instead of launching dozens of variations at once, marketers can test smaller groups in stages. Winning creatives from one round move into the next test cycle, gradually refining performance.
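The staged approach can be sketched as an elimination bracket: split the pool into small groups, keep each group's observed winner, and repeat until one creative remains. This is one possible structure, not the only one; the creative names, true click-through rates, group size, and impressions per creative below are hypothetical, and clicks are simulated rather than measured.

```python
import random

def run_group(true_ctrs: dict[str, float], impressions_each: int,
              rng: random.Random) -> str:
    """Show each creative `impressions_each` times; return the highest observed CTR."""
    observed = {}
    for name, ctr in true_ctrs.items():
        clicks = sum(rng.random() < ctr for _ in range(impressions_each))
        observed[name] = clicks / impressions_each
    return max(observed, key=observed.get)

def sequential_test(pool: dict[str, float], group_size: int,
                    impressions_each: int, seed: int = 1) -> str:
    """Run small groups in stages; each group's winner advances to the next round."""
    if group_size < 2:
        raise ValueError("group_size must be at least 2 so each round shrinks the pool")
    rng = random.Random(seed)
    names = list(pool)
    while len(names) > 1:
        winners = []
        for start in range(0, len(names), group_size):
            group = {n: pool[n] for n in names[start:start + group_size]}
            winners.append(run_group(group, impressions_each, rng))
        names = winners
    return names[0]

pool = {f"creative_{i:02d}": 0.020 + 0.001 * i for i in range(12)}
print(sequential_test(pool, group_size=4, impressions_each=20_000))
```

Because each round tests at most four creatives, every creative in a round receives its full allocation of impressions instead of competing with eleven others for the same budget.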

Key Takeaways

Creative experimentation is essential for improving marketing performance, but uncontrolled variation can undermine the entire testing process. When too many creatives compete simultaneously, marketers face diluted data, slower optimization, fragmented messaging, and unclear insights.

By limiting the number of concurrent variations, isolating variables, and structuring sequential experiments, marketing teams can generate reliable insights and improve campaign outcomes.
