Meta has steadily expanded automation across Ads Manager. Campaign setup now encourages automated placements, automated audience expansion, automated bidding, and even automated creative combinations.

In many cases, these systems work well. They reduce manual configuration and allow the delivery algorithm to react faster than a human operator.

But automation is not neutral.

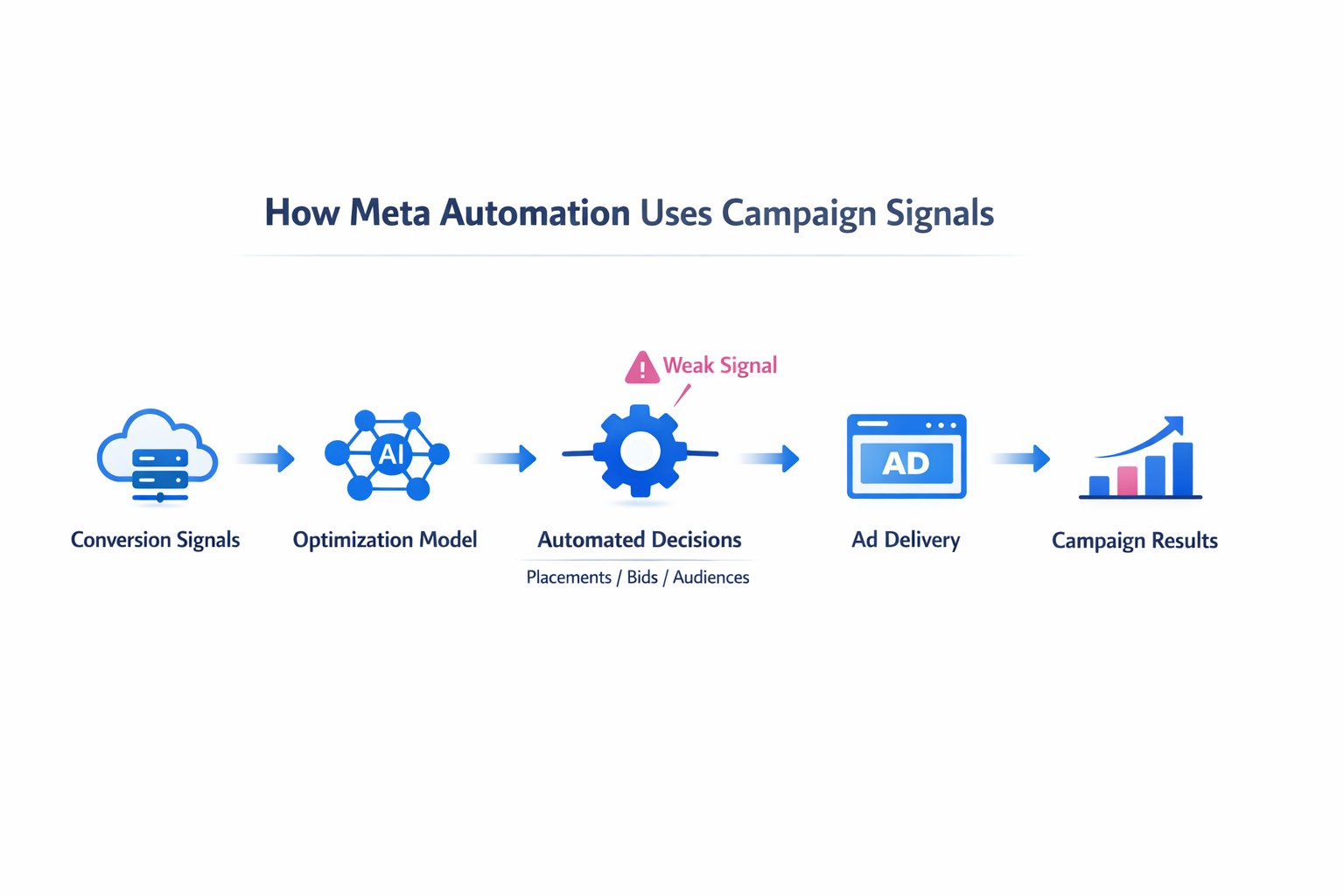

Under certain campaign conditions, these features can quietly push delivery in the wrong direction. The platform follows statistical signals, not business context. If the signals are weak, distorted, or misaligned with the objective, automation can amplify the problem rather than solve it.

Understanding when this happens helps prevent campaigns from drifting into inefficient spending patterns.

Automation Works Only When the Signal Environment Is Stable

Meta’s delivery system continuously analyzes behavioral patterns and conversion signals to decide where to allocate impressions. The algorithm increases bids and delivery toward users who statistically resemble recent converters.

When the signal environment is stable, automation performs well.

This usually requires:

- a consistent flow of conversion events,
- a relatively stable audience pool, and
- predictable user behavior patterns.

Under these conditions, the system receives enough feedback to reinforce the correct targeting clusters.

Problems begin when one of these inputs becomes unstable. The algorithm still reacts to signals, but those signals may represent noise rather than meaningful user intent.

For example, if only a handful of conversions occur per week, the model often overweights individual events. One purchase from an unusual user segment can temporarily redirect delivery toward similar users who are unlikely to convert.

Automation does not recognize this as an anomaly. It treats it as data.

This dynamic is closely related to the signal distortion problem explained in “Signal Loss in Digital Ads: What Marketers Can Do,” where missing or inconsistent events cause optimization models to learn incorrect patterns.
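To make the low-volume problem concrete, the sketch below compares the uncertainty around the same observed conversion rate at two data volumes. It uses a standard Wilson score interval. The function name and the example counts are illustrative assumptions, and nothing here reflects how Meta's delivery model works internally.

```python
import math


def wilson_interval(conversions: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a conversion rate."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = conversions / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Illustrative numbers only: the same 1.25% observed rate at two volumes.
low_volume = wilson_interval(5, 400)         # a handful of conversions
high_volume = wilson_interval(500, 40_000)   # 100x the data

low_width = low_volume[1] - low_volume[0]
high_width = high_volume[1] - high_volume[0]
print(f"low volume:  {low_volume[0]:.4f} to {low_volume[1]:.4f}")
print(f"high volume: {high_volume[0]:.4f} to {high_volume[1]:.4f}")
```

With these example counts, the low-volume interval is about ten times wider than the high-volume one, which is why a single unusual purchase can shift the apparent pattern so much.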

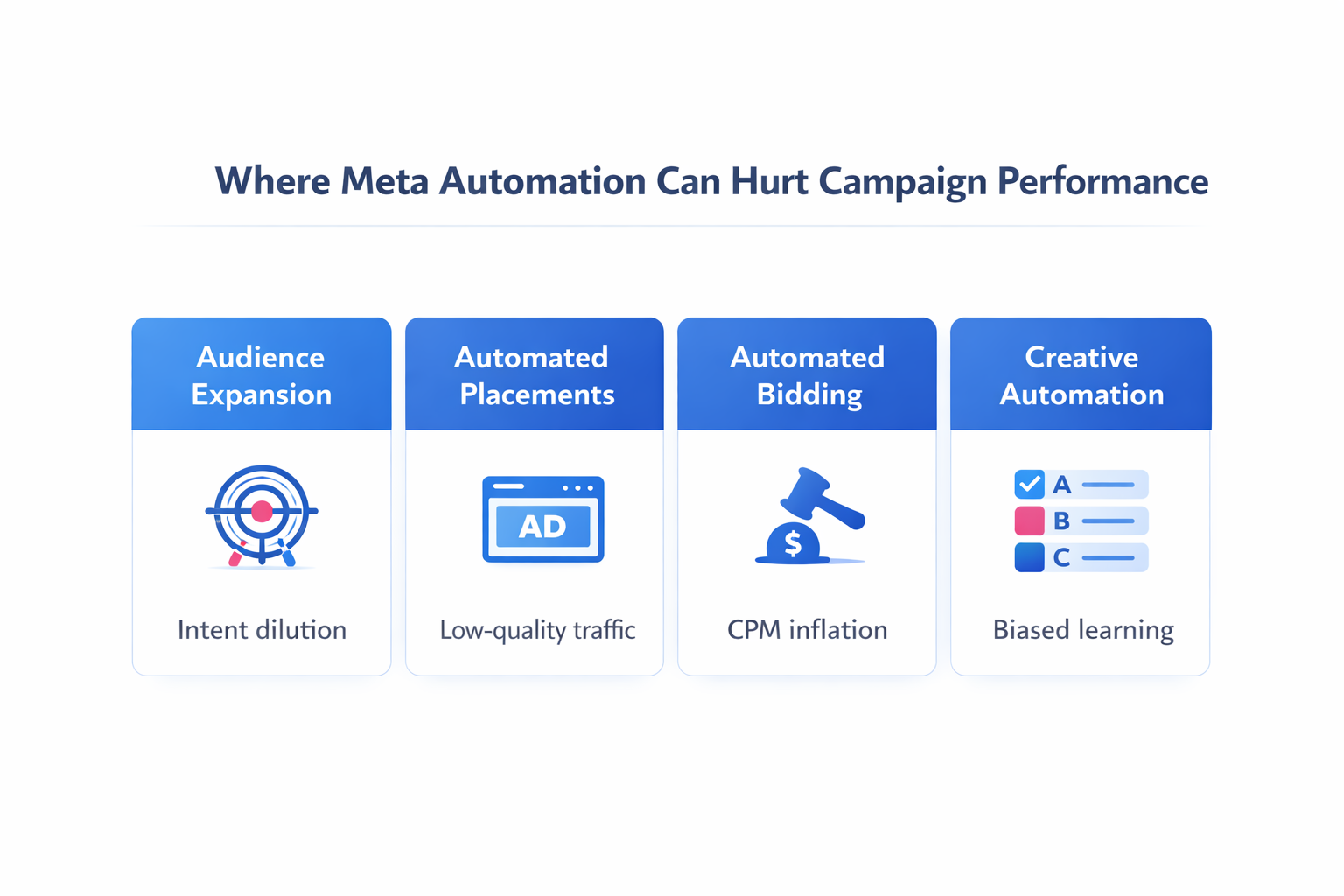

Advantage+ Audience Expansion Can Dilute High-Intent Targeting

Audience expansion allows Meta to extend delivery beyond the targeting constraints you initially define. If the system believes additional users may convert, it gradually widens the audience.

In theory, this increases scale.

In practice, the expansion often moves away from the original behavioral signal that made the audience effective.

Consider a campaign targeting users who recently visited a pricing page. That group carries strong purchase intent because their behavior signals evaluation of the product.

When expansion activates, Meta may start delivering ads to users with weaker signals:

- people who engaged with related content,
- users who interacted with similar brands,
- or broad interest clusters.

From the algorithm’s perspective, this still resembles the seed audience. From a purchase-intent perspective, the signal has already weakened.

A typical campaign pattern looks like this:

- Early performance appears strong because delivery focuses on the high-intent core.
- After several days, expansion begins to reach adjacent audiences.
- CTR often stays stable because the broader audience still interacts with the ad.
- Conversion rate gradually declines as behavioral intent weakens.

Inside Ads Manager, the shift often appears as steady spend growth paired with falling cost efficiency.
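The pattern described above can be checked against exported daily numbers. The following is a minimal sketch, assuming you have daily spend, impressions, clicks, and conversions for an ad set. The `DailyStats` structure, the function names, and the 15% and 25% thresholds are all hypothetical choices for illustration, not Ads Manager fields or recommended values.

```python
from dataclasses import dataclass


@dataclass
class DailyStats:
    spend: float
    impressions: int
    clicks: int
    conversions: int


def summarize(days: list[DailyStats]) -> dict[str, float]:
    """Aggregate a window of days into spend, CTR, and click-to-conversion rate."""
    spend = sum(d.spend for d in days)
    impressions = sum(d.impressions for d in days)
    clicks = sum(d.clicks for d in days)
    conversions = sum(d.conversions for d in days)
    return {
        "spend": spend,
        "ctr": clicks / impressions if impressions else 0.0,
        "cvr": conversions / clicks if clicks else 0.0,
    }


def looks_like_expansion_drift(early, late, ctr_tolerance=0.15, cvr_drop=0.25) -> bool:
    """True when spend grew, CTR stayed within tolerance, and CVR fell sharply."""
    a, b = summarize(early), summarize(late)
    if a["ctr"] == 0 or a["cvr"] == 0:
        return False  # not enough baseline signal to compare against
    spend_grew = b["spend"] > a["spend"]
    ctr_stable = abs(b["ctr"] - a["ctr"]) / a["ctr"] <= ctr_tolerance
    cvr_fell = (a["cvr"] - b["cvr"]) / a["cvr"] >= cvr_drop
    return spend_grew and ctr_stable and cvr_fell


# Illustrative data: first three days versus the following three days.
early_days = [DailyStats(100.0, 10_000, 150, 9)] * 3
late_days = [DailyStats(160.0, 18_000, 265, 9)] * 3
drifting = looks_like_expansion_drift(early_days, late_days)
```

A flag from a check like this is a prompt to inspect the audience breakdown, not proof that expansion caused the decline.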

If you want to scale audiences without destroying targeting quality, the mechanics are discussed in “Audience Expansion Without Losing Relevance.”

Automated Placements Can Push Spend Into Low-Intent Inventory

Automated placements allow Meta to distribute impressions across Facebook, Instagram, Audience Network, and other surfaces.

The system chooses placements based on predicted conversion probability and available auction inventory.

However, placement economics vary widely.

Some placements provide cheap impressions but historically produce weaker conversion signals. If the campaign objective emphasizes low-cost delivery rather than high-value conversions, the algorithm may move spend toward those areas.

Common examples include:

- Audience Network inventory, which often generates inexpensive clicks but lower post-click engagement quality.
- Reels inventory during early scaling, where engagement can be high but purchase intent varies widely depending on the offer.
- In-stream environments, where users interact with ads while primarily focused on other content.

When automation detects lower CPMs in these placements, it may allocate a growing portion of the budget there.

Performance metrics can appear acceptable at the top of the funnel:

- CTR remains stable.
- CPC decreases.
- Reach increases.

The real impact appears deeper in the funnel as declining conversion rates or weaker lead quality.
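One way to surface this gap is to compare cost per click with cost per acquisition for each placement. The sketch below assumes a placement breakdown exported as rows with `placement`, `spend`, `clicks`, and `conversions` keys. Those key names and all the numbers are made up for illustration.

```python
def placement_report(rows: list[dict]) -> list[dict]:
    """Compute CPC and CPA per placement, sorted from best to worst CPA."""
    report = []
    for row in rows:
        cpc = row["spend"] / row["clicks"] if row["clicks"] else None
        cpa = row["spend"] / row["conversions"] if row["conversions"] else None
        report.append({"placement": row["placement"], "cpc": cpc, "cpa": cpa})
    # Placements with no conversions (cpa is None) sort last.
    return sorted(report, key=lambda r: (r["cpa"] is None, r["cpa"] or 0.0))


# Illustrative rows, not real benchmark data.
rows = [
    {"placement": "feed", "spend": 500.0, "clicks": 400, "conversions": 20},
    {"placement": "audience_network", "spend": 300.0, "clicks": 1000, "conversions": 4},
    {"placement": "in_stream", "spend": 120.0, "clicks": 200, "conversions": 0},
]

report = placement_report(rows)
cheapest_clicks = min((r for r in report if r["cpc"] is not None), key=lambda r: r["cpc"])
for r in report:
    print(r)
```

In this made-up example the placement with the cheapest clicks is not the one with the best cost per acquisition, which is the mismatch that top-of-funnel metrics hide.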

Automated Bidding Can Escalate Auction Costs

Meta’s bidding system adjusts bids dynamically to win impressions that appear likely to convert.

In competitive audience segments, this can push bids higher than expected.

Automation reacts to short-term patterns in the auction environment. If a cluster of users produces several conversions in quick succession, the system may aggressively increase bids to secure additional impressions from similar profiles.

The result is a rapid rise in CPM.

Campaign operators often notice this pattern in Ads Manager:

- CPM increases sharply over several days.
- Conversion volume remains flat.
- Cost per acquisition begins to rise.

The system is not malfunctioning. It is simply competing harder for users it believes resemble recent buyers.
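The link between CPM and cost per acquisition follows from basic funnel arithmetic: spend equals impressions times CPM divided by 1,000, and conversions equal impressions times CTR times click-to-conversion rate. The sketch below works through that identity. The function name and the example values are illustrative assumptions.

```python
def cpa_from_funnel(cpm: float, ctr: float, cvr: float) -> float:
    """Cost per acquisition implied by CPM, click-through rate, and click-to-conversion rate.

    spend       = impressions * cpm / 1000
    conversions = impressions * ctr * cvr
    cpa         = spend / conversions = cpm / (1000 * ctr * cvr)
    """
    if ctr <= 0 or cvr <= 0:
        raise ValueError("ctr and cvr must be positive")
    return cpm / (1000 * ctr * cvr)


# Illustrative values: CPM rises 50% while CTR and CVR stay the same.
before = cpa_from_funnel(cpm=12.0, ctr=0.015, cvr=0.04)
after = cpa_from_funnel(cpm=18.0, ctr=0.015, cvr=0.04)
print(f"CPA before: {before:.2f}, after: {after:.2f}")
```

If CTR and conversion rate do not improve, any increase in CPM passes through to cost per acquisition in the same proportion.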

This situation resembles the dynamics described in “How to Scale Facebook Ads Without Increasing CPMs,” where uncontrolled auction competition gradually erodes efficiency during campaign growth.

Automated Creative Optimization Can Distort Learning

Meta frequently rotates multiple creatives within the same ad set to identify the highest-performing combinations.

This approach works well when creative differences are clear and performance gaps are meaningful.

Problems appear when:

- creatives are extremely similar, or
- the campaign generates limited conversion volume.

In these situations, the system may declare a “winner” based on minimal statistical differences.

Once that happens, delivery concentrates heavily on one creative variant. Other variations receive little exposure, which prevents the algorithm from collecting additional comparison data.

The campaign effectively stops testing.

A common diagnostic pattern appears in the breakdown view:

- One creative receives the majority of impressions.
- Other creatives remain stuck in low delivery despite comparable engagement signals.

When running creative experiments, structured testing frameworks like the “Creative Testing Matrix for Faster Wins” often produce clearer insights than relying purely on automated rotation.
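Before accepting a "winner," it can help to check whether the gap between two creatives is distinguishable from noise. The sketch below uses a standard pooled two-proportion z-test on conversions per click. This is a generic statistical check chosen for illustration, not the method Meta uses to pick creatives, and the normal approximation is itself rough at very low conversion counts. The function name and the example counts are assumptions.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for a difference in conversion rates (pooled z-test)."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (p_a - p_b) / se
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


# Illustrative counts: the same 1.5% vs. roughly 1.03% rates at two volumes.
p_small = two_proportion_p_value(12, 800, 8, 780)
p_large = two_proportion_p_value(1200, 80_000, 800, 78_000)
print(f"small sample p-value: {p_small:.3f}")
print(f"large sample p-value: {p_large:.3g}")
```

With the small example counts the difference is not significant at the conventional 0.05 level, while the same rates at 100 times the volume are. A creative that "wins" on a few dozen conversions may simply have been lucky.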

Automation Amplifies Tracking Errors

Automation systems assume the conversion data they receive is accurate.

If the tracking infrastructure contains gaps or inconsistencies, the algorithm learns from flawed signals.

Typical examples include:

- incomplete purchase tracking after checkout redirects,
- duplicated events caused by pixel misconfiguration,
- delayed server-side signals arriving long after the user interaction.

When these issues occur, the algorithm builds targeting patterns around incorrect behavioral feedback.

A distorted signal environment can cause the system to:

- expand into irrelevant audiences,
- increase bids for low-quality traffic, or
- reduce delivery toward genuine buyers whose conversions were never recorded.

Reliable event tracking is therefore a prerequisite for effective automation.
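As one example of a tracking audit, duplicated events can be estimated from an exported event log. The sketch below assumes each logged event carries an `event_name` and an `event_id`. Those field names, the log format, and the sample records are hypothetical, and the code does not call any Meta API.

```python
def deduplicate_events(events: list[dict]) -> list[dict]:
    """Keep the first occurrence of each (event_name, event_id) pair."""
    seen = set()
    unique = []
    for event in events:
        key = (event["event_name"], event["event_id"])
        if key in seen:
            continue  # same event reported more than once
        seen.add(key)
        unique.append(event)
    return unique


# Illustrative log: one purchase reported by both browser and server.
event_log = [
    {"event_name": "Purchase", "event_id": "order-1001", "source": "browser"},
    {"event_name": "Purchase", "event_id": "order-1001", "source": "server"},
    {"event_name": "Purchase", "event_id": "order-1002", "source": "server"},
    {"event_name": "AddToCart", "event_id": "order-1002", "source": "browser"},
]

unique_events = deduplicate_events(event_log)
duplicate_share = 1 - len(unique_events) / len(event_log)
print(f"{len(event_log) - len(unique_events)} duplicate(s), {duplicate_share:.0%} of logged events")
```

A persistently high duplicate share suggests the reported conversion count is inflated, which means the optimization signal is inflated too.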

The Core Tradeoff Behind Meta’s Automation

Meta’s automation systems are designed to scale campaigns quickly across enormous audiences.

To achieve this, the platform prioritizes statistical signals over contextual judgment.

The system does not evaluate:

- whether a lead fits your sales process,
- whether a click came from accidental engagement, or
- whether a placement environment encourages real purchase intent.

It simply reacts to patterns in the data it receives.

When those patterns represent genuine buyer behavior, automation can dramatically improve campaign efficiency.

When the signals are weak or distorted, the same automation can accelerate performance decline.

Understanding that tradeoff allows advertisers to decide when to trust the system and when to guide it with deliberate constraints.