If you’ve been running Meta campaigns long enough, you’ve seen it: one week looks efficient and predictable, the next feels unstable. CPA jumps. ROAS compresses. Lead volume swings without obvious changes on your side.

The natural reaction is to assume something broke. In reality, week-to-week fluctuation is often the combined result of auction dynamics, signal distribution, and structural decisions inside your account. Once you understand how these forces interact, performance variance becomes something you can diagnose rather than fear.

Let’s break down what’s actually happening.

The Auction Is Not Static

Every impression in Meta is the result of a live auction. You’re not bidding against a fixed market; you’re competing against a moving one.

From week to week, three things shift:

- Competitor activity. Other advertisers increase budgets, launch promotions, or test new creatives. When more aggressive bids enter the same audience pool, CPM rises. Even if your conversion rate stays stable, higher CPM alone can push CPA up.
- Seasonal micro-cycles. Demand doesn’t only change quarter to quarter. It shifts inside the month. Payroll cycles, industry events, and short promotional windows affect buyer intent in subtle but measurable ways.
- Platform inventory mix. Available impressions vary by placement and user activity. A week with heavier low-quality inventory can reduce conversion efficiency without any change to targeting.

If you treat performance swings as isolated anomalies, you miss the structural reality: you are buying from a dynamic marketplace.

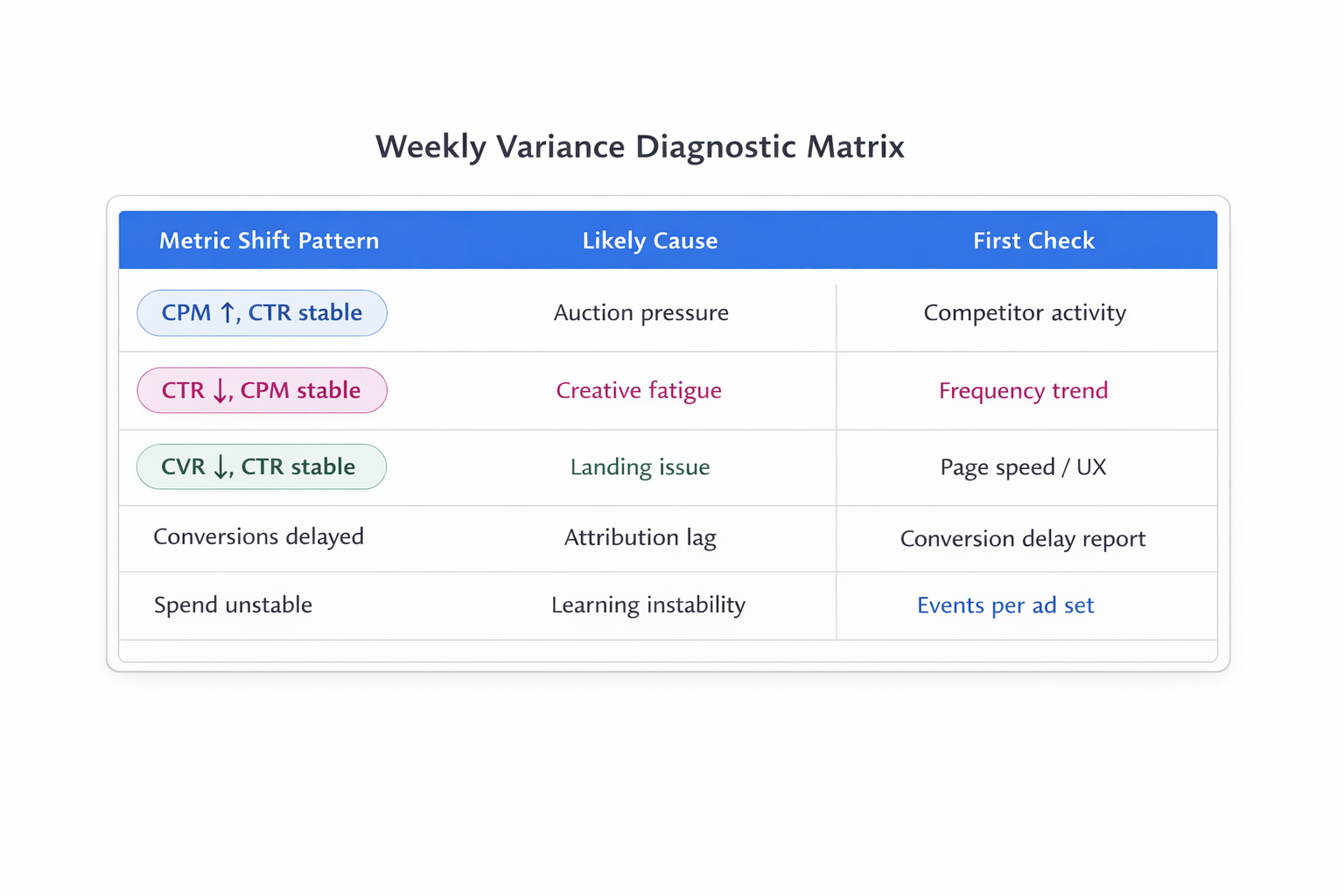

Before making structural changes, check whether CPM shifted significantly. If CPM is stable and CPA rose, the problem is probably downstream. If CPM jumped, the root cause may be competitive pressure rather than creative fatigue.
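One way to make this check concrete is to split a CPA change into its CPM, CTR, and conversion-rate components, using the identity CPA = CPM / (1000 × CTR × CVR). The sketch below is illustrative only: the `WeekStats` class, the function name, and all figures are hypothetical, and it assumes conversions are click-based.

```python
import math
from dataclasses import dataclass


@dataclass
class WeekStats:
    """Hypothetical weekly totals exported from a reporting tool."""
    spend: float
    impressions: int
    clicks: int
    conversions: int

    @property
    def cpm(self) -> float:
        return self.spend / self.impressions * 1000

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions

    @property
    def cvr(self) -> float:
        return self.conversions / self.clicks

    @property
    def cpa(self) -> float:
        return self.spend / self.conversions


def decompose_cpa_change(prev: WeekStats, curr: WeekStats) -> dict:
    """Split the log change in CPA into CPM, CTR, and CVR contributions.

    Because CPA = CPM / (1000 * CTR * CVR), the three parts sum to the total.
    A positive part pushed CPA up; a negative part pulled it down.
    """
    return {
        "cpm": math.log(curr.cpm / prev.cpm),
        "ctr": -math.log(curr.ctr / prev.ctr),
        "cvr": -math.log(curr.cvr / prev.cvr),
        "total": math.log(curr.cpa / prev.cpa),
    }


# Made-up numbers: same spend, fewer impressions, unchanged CTR and CVR.
last_week = WeekStats(spend=1000.0, impressions=100_000, clicks=1_000, conversions=50)
this_week = WeekStats(spend=1000.0, impressions=80_000, clicks=800, conversions=40)

parts = decompose_cpa_change(last_week, this_week)
for name, value in parts.items():
    print(f"{name:5s} {value:+.3f}")
```

In this made-up case the CPM term accounts for the entire CPA increase, which points at auction pressure rather than creative or landing-page problems.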

If you want a deeper breakdown of cost mechanics, see What Influences CPM on Facebook Ads (and How to Keep It Low).

Learning Phase and Signal Density Create Volatility

Meta’s optimization system depends on conversion signals per ad set. When signal volume drops below stability thresholds, volatility increases.

The common benchmark is roughly 50 optimization events per ad set per week. Below that, delivery becomes statistically unstable.

Here’s what happens when volume is thin:

- The system explores more aggressively.
- Performance appears inconsistent because small sample sizes amplify noise.
- One high-value or low-value conversion can skew reported CPA.
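As a rough illustration of the benchmark and the small-sample effect above, the sketch below flags ad sets under 50 weekly events and estimates how noisy each count is. The ad set names and counts are hypothetical, and the 1/sqrt(n) noise estimate assumes conversions arrive roughly like a Poisson process, which is a simplification.

```python
import math

WEEKLY_EVENT_BENCHMARK = 50  # the rough benchmark cited above


def approx_relative_noise(events: int) -> float:
    """Rough relative noise of a count, assuming Poisson-like arrivals."""
    return 1 / math.sqrt(events) if events > 0 else float("inf")


# Hypothetical weekly optimization events per ad set.
weekly_events = {
    "lookalike_1pct": 18,
    "interest_fitness": 12,
    "retargeting_30d": 22,
    "broad": 64,
}

# Ad sets that fall below the benchmark.
thin = {name: n for name, n in weekly_events.items() if n < WEEKLY_EVENT_BENCHMARK}

# What the thin ad sets would add up to if merged (assumes volume carries over).
consolidated_events = sum(thin.values())

for name, n in weekly_events.items():
    status = "thin" if name in thin else "ok"
    print(f"{name:18s} {n:3d} events  ~{approx_relative_noise(n):.0%} noise  [{status}]")

print(
    f"thin sets merged:  {consolidated_events:3d} events  "
    f"~{approx_relative_noise(consolidated_events):.0%} noise"
)
```

The merged figure assumes the same conversions would still occur after consolidation, which is not guaranteed; treat it as an upper-level sanity check, not a forecast.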

If you’re running many segmented ad sets with low weekly conversions, you’ve essentially engineered instability. The algorithm cannot model efficiently on sparse data.

You can test this directly: consolidate audiences or reduce campaign fragmentation for two weeks and compare variance, not just average CPA. Often, average performance doesn’t change dramatically, but volatility decreases.
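A simple way to compare variance rather than averages is the coefficient of variation (standard deviation divided by the mean). The sketch below uses invented daily CPA values for a fragmented and a consolidated setup; the variable names and numbers are assumptions for illustration only.

```python
from statistics import mean, stdev


def coefficient_of_variation(values: list) -> float:
    """Sample standard deviation divided by the mean (unitless spread)."""
    return stdev(values) / mean(values)


# Hypothetical daily CPA over one week for each structure.
fragmented_cpa = [38.0, 61.0, 44.0, 72.0, 35.0, 58.0, 42.0]
consolidated_cpa = [47.0, 53.0, 49.0, 52.0, 48.0, 51.0, 50.0]

cv_fragmented = coefficient_of_variation(fragmented_cpa)
cv_consolidated = coefficient_of_variation(consolidated_cpa)

print(f"fragmented:   mean CPA {mean(fragmented_cpa):.2f}, CV {cv_fragmented:.1%}")
print(f"consolidated: mean CPA {mean(consolidated_cpa):.2f}, CV {cv_consolidated:.1%}")
```

Both invented series have the same average CPA, so only the spread differs. With real data, compute CPA per period from spend and conversions before comparing.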

For a detailed explanation of how stability relates to learning status, read What Does “Learning Limited” Mean in Facebook Ads?

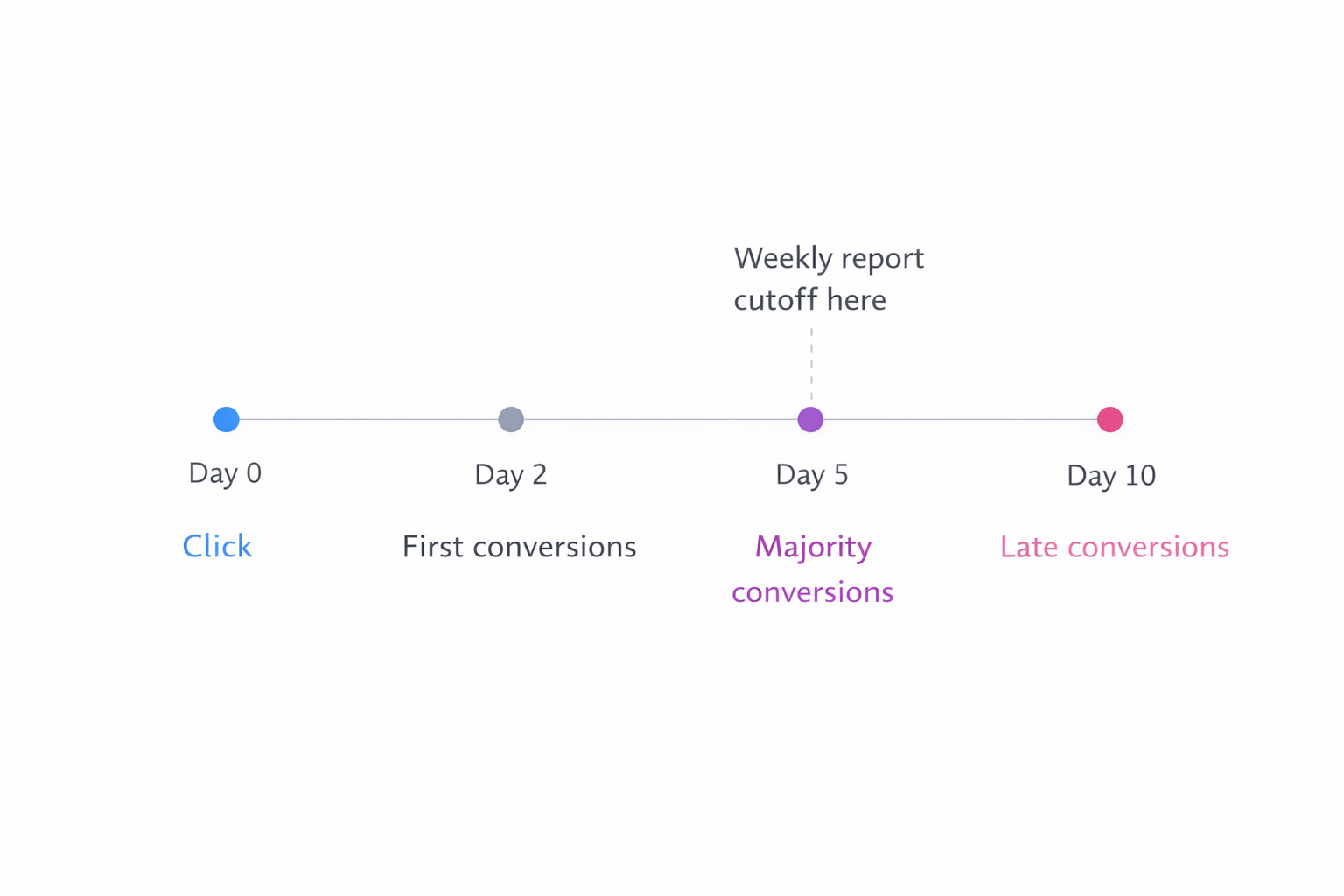

Attribution Windows Distort Short-Term Comparisons

Week-to-week analysis assumes clean boundaries. In reality, conversions bleed across reporting windows.

For example:

- A click from last week converts this week.
- A view-through conversion appears after delayed consideration.
- Longer sales cycles shift revenue recognition across periods.

If your sales cycle exceeds a few days, weekly CPA or ROAS snapshots become noisy. You’re comparing partially matured cohorts to fully matured ones.

Instead of reacting to raw weekly ROAS, track:

- Blended 14-day or 28-day rolling performance.
- Lag-adjusted conversion cohorts.
- Click-to-conversion delay distribution.

If 40% of your conversions occur 5–10 days after the click, weekly reporting will inherently fluctuate. That’s structural, not tactical.
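To see how much of a week is still immature when it closes, you can combine a click-to-conversion delay distribution with the age of each day’s clicks. The sketch below is a minimal illustration: the delay distribution is invented (built so that 40% of conversions land 5–10 days after the click), and it assumes clicks are spread evenly across the week and that the distribution is stable over time.

```python
# Hypothetical share of eventual conversions by days between click and conversion.
delay_distribution = {
    0: 0.45, 1: 0.10, 2: 0.05,          # 60% within the first few days
    5: 0.08, 6: 0.08, 7: 0.08, 8: 0.08,  # 40% between day 5 and day 10
    9: 0.04, 10: 0.04,
}


def matured_share(distribution: dict, days_since_click: int) -> float:
    """Share of eventual conversions already recorded N days after the click."""
    return sum(p for delay, p in distribution.items() if delay <= days_since_click)


def week_maturity(distribution: dict, week_length: int = 7) -> float:
    """Average maturity of a week's clicks at the moment the week closes.

    Assumes clicks are spread evenly over the days of the week.
    """
    shares = [
        matured_share(distribution, week_length - 1 - day)
        for day in range(week_length)
    ]
    return sum(shares) / week_length


def lag_adjusted(observed_conversions: float, maturity: float) -> float:
    """Scale observed conversions up to an estimate of the matured total."""
    return observed_conversions / maturity


maturity = week_maturity(delay_distribution)
print(f"share of the week's conversions visible at week close: {maturity:.0%}")
print(f"lag-adjusted estimate for 30 observed conversions: {lag_adjusted(30, maturity):.1f}")
```

Under these assumptions a sizeable share of the week’s conversions has not been recorded yet when the week closes, so comparing it directly with a fully matured earlier week overstates the drop.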

For a deeper look at reporting delays, see Attribution Lag in Facebook Ads: Why Results Look Better (or Worse) Days Later.

Creative Fatigue Is Gradual, Not Binary

Many advertisers blame performance drops on fatigue. Sometimes that’s correct. Often it’s oversimplified.

Creative performance declines when:

- Frequency increases within a constrained audience.
- Engagement rate decreases relative to historical benchmarks.
- Competing creatives in the auction outperform yours on predicted action rate.

But fatigue is not a switch. It’s a probability shift.

If your CTR and conversion rate both decline while CPM remains stable, creative degradation is likely. If CTR holds but CPM rises, the issue is more likely competitive pressure.

You can isolate this by comparing:

- CTR trend.
- Conversion rate trend.
- CPM trend.

Only when CTR and CVR deteriorate simultaneously does fatigue become the primary suspect.
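The three-trend comparison above can be written as a small decision rule. This is a sketch, not a definitive classifier: the function name and the 10% threshold are assumptions, and the third branch borrows the post-click check discussed later in this article.

```python
def primary_suspect(
    ctr_change: float,
    cvr_change: float,
    cpm_change: float,
    threshold: float = 0.10,  # hypothetical cutoff for a "material" move
) -> str:
    """Map relative changes in CTR, CVR, and CPM to a likely cause.

    Changes are fractions, e.g. -0.20 means the metric fell 20%.
    """
    ctr_down = ctr_change <= -threshold
    cvr_down = cvr_change <= -threshold
    cpm_up = cpm_change >= threshold

    if ctr_down and cvr_down and not cpm_up:
        return "creative fatigue"
    if cpm_up and not ctr_down:
        return "competitive pressure"
    if cvr_down and not ctr_down and not cpm_up:
        return "post-click friction"
    return "inconclusive"


print(primary_suspect(ctr_change=-0.20, cvr_change=-0.15, cpm_change=0.02))
print(primary_suspect(ctr_change=0.01, cvr_change=-0.02, cpm_change=0.25))
print(primary_suspect(ctr_change=-0.20, cvr_change=-0.20, cpm_change=0.30))
```

Mixed signals deliberately fall through to "inconclusive", since several forces can move at once and a single rule should not force a verdict.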

If you want a practical breakdown of fatigue signals, review Creative Fatigue: Early Signals and Fixes.

Budget Changes Reshape the Learning Environment

Small budget adjustments can create disproportionate impact.

Increasing budget:

- Expands reach into less responsive audience segments.
- Alters bid aggressiveness.
- Forces the system to re-explore inventory.

Decreasing budget:

- Constrains learning.
- Reduces optimization flexibility.
- May trap delivery inside high-frequency pockets.

Even a 20–30% budget shift can temporarily destabilize results if the campaign operates near learning thresholds.

If performance dropped immediately after a scaling move, consider whether the system is rebalancing rather than failing.

In practice, gradual scaling reduces variance. Abrupt scaling increases it.
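If you want to plan a gradual move instead of a single jump, you can break the change into capped steps. The sketch below is one possible approach: the 15% cap per step is a hypothetical value chosen to stay under the 20–30% range mentioned above, not a platform rule, and how long to wait between steps is left to you.

```python
def scaling_steps(current: float, target: float, max_step: float = 0.15) -> list:
    """Return the intermediate budgets from current to target.

    Each step changes the budget by at most `max_step` (a fraction) relative
    to the previous step. Works for both increases and decreases.
    """
    if current <= 0 or target <= 0:
        raise ValueError("budgets must be positive")
    if not 0 < max_step < 1:
        raise ValueError("max_step must be between 0 and 1")

    budget, target = float(current), float(target)
    steps = []
    while budget != target:
        if target > budget:
            budget = min(target, budget * (1 + max_step))
        else:
            budget = max(target, budget * (1 - max_step))
        steps.append(round(budget, 2))
    return steps


# Hypothetical example: doubling a daily budget of 100.
plan = scaling_steps(100, 200)
for i, budget in enumerate(plan, start=1):
    print(f"step {i}: {budget:.2f}")
```

The final step is clamped to the target, so the last change is usually smaller than the cap.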

Audience Overlap and Internal Competition

Week-to-week fluctuation sometimes comes from inside your own account.

If multiple ad sets target overlapping audiences:

- They compete in the same auctions.
- Delivery shifts unpredictably between them.
- Performance attribution becomes inconsistent.

One week, Ad Set A wins more auctions. The next week, Ad Set B does. Results for each ad set may look unstable even if the combined system is steady.
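To check whether overlap is shuffling delivery rather than changing total results, compare the spread of each ad set with the spread of their sum. The weekly conversion counts below are invented for illustration, and the ad set names are hypothetical.

```python
from statistics import mean, pstdev


def spread(values: list) -> float:
    """Population standard deviation relative to the mean."""
    return pstdev(values) / mean(values)


# Hypothetical weekly conversions for two overlapping ad sets.
weekly_conversions = {
    "ad_set_a": [60, 35, 58, 30],
    "ad_set_b": [30, 56, 33, 61],
}

# Week-by-week total across both ad sets.
combined = [sum(week) for week in zip(*weekly_conversions.values())]

for name, series in weekly_conversions.items():
    print(f"{name}: spread {spread(series):.1%}")
print(f"combined: spread {spread(combined):.1%}")
```

When the individual series swing widely but the combined series barely moves, the instability is mostly delivery shifting between ad sets, not a change in overall performance.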

If you suspect this, read Why Audience Overlap Is Killing Your Facebook Ad Performance and simplify where possible. Structural clarity reduces internal volatility.

External Conversion Friction

Not all variance originates inside Meta.

Performance can fluctuate because of:

- Landing page load speed changes.
- Form bugs.
- CRM processing delays.
- Sales team response time variance.
- Payment gateway instability.

If conversion rate drops without any auction metric change, inspect the post-click environment.

You’d be surprised how often a minor technical issue creates what looks like algorithm instability.

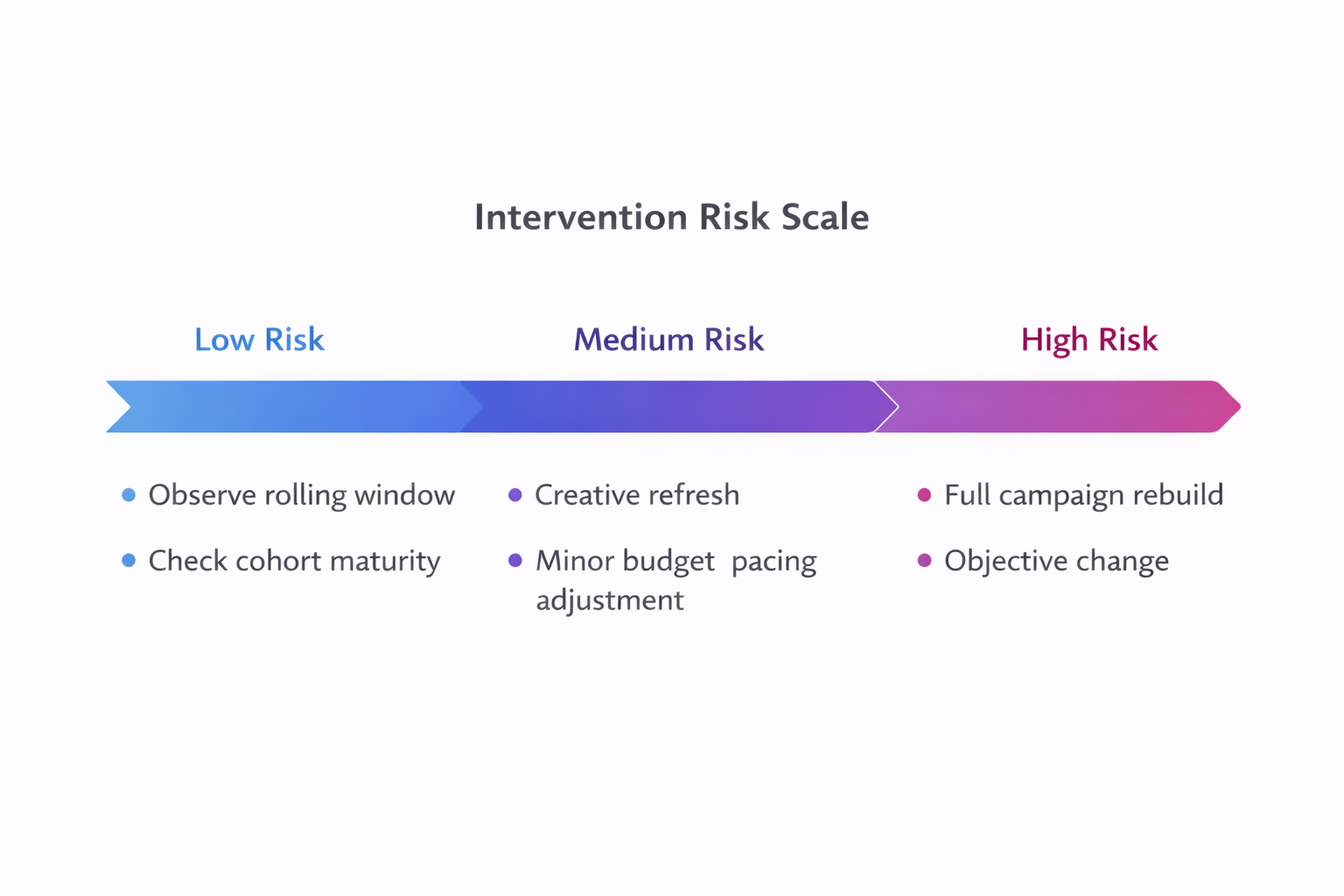

The Real Problem: Overreacting to Noise

The most expensive consequence of weekly fluctuation is not the fluctuation itself. It’s reactive optimization.

Common mistakes include:

- Killing ad sets after 5–7 days of weak data.
- Rebuilding campaigns during temporary CPM spikes.
- Rotating creatives prematurely.
- Changing targeting before lag-adjusted results mature.

When you intervene too frequently, you introduce additional learning resets. That compounds volatility.

A more disciplined approach:

- Identify whether the variance is auction-driven, signal-driven, or structural.
- Confirm changes across at least one full optimization window.
- Adjust only one variable at a time.

Stability often improves not because you “fixed” performance, but because you stopped interrupting the system.
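Before acting on a weak week, it can help to check whether the change is distinguishable from sampling noise. One standard option, swapped in here as an illustration and not something Meta reports, is a two-proportion z-test on conversion rate. The sketch treats clicks as independent trials, which is a simplification, and all figures are hypothetical.

```python
import math


def two_proportion_z(conv_a: int, clicks_a: int, conv_b: int, clicks_b: int) -> tuple:
    """Two-sided z-test for a difference in conversion rate between two periods.

    Returns (z, p_value). A large p-value means the difference is compatible
    with random variation at this sample size.
    """
    rate_a = conv_a / clicks_a
    rate_b = conv_b / clicks_b
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    z = (rate_b - rate_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Same rates (5.0% vs 3.8%), two very different sample sizes.
z_small, p_small = two_proportion_z(50, 1_000, 38, 1_000)
z_large, p_large = two_proportion_z(500, 10_000, 380, 10_000)

print(f"1,000 clicks per week:  z = {z_small:.2f}, p = {p_small:.3f}")
print(f"10,000 clicks per week: z = {z_large:.2f}, p = {p_large:.5f}")
```

The same drop in conversion rate is weak evidence at the smaller volume and strong evidence at the larger one, which is why thin weekly data is a poor trigger for structural changes.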

How to Think About Weekly Fluctuation Strategically

Instead of asking, “Why did performance drop this week?”, ask:

- Did signal density change?
- Did CPM shift materially?
- Did budget or structure change?
- Are we evaluating immature cohorts?
- Did post-click conversion conditions change?

This reframing shifts you from reactive mode to diagnostic mode.

Meta performance is probabilistic. Weekly variance is normal within statistical bounds. What matters is whether the underlying system remains efficient over rolling windows.

When you design campaigns with the following, you reduce unnecessary volatility:

- Consolidated signal flow.
- Clear objective alignment.
- Stable budget pacing.
- Cohort-aware reporting.

Performance still fluctuates. But it becomes interpretable. And once it’s interpretable, it’s manageable.