Lead magnets sit at the critical conversion point between anonymous traffic and identified prospects. Even marginal improvements in opt-in rates can produce significant downstream revenue impact.

According to multiple industry benchmarks:

- Businesses that run structured A/B tests see conversion rate improvements of 20–30% on average.
- Companies that test consistently are twice as likely to achieve significant increases in lead volume.
- A 1% improvement in landing page conversion can translate into thousands of additional leads annually for high-traffic campaigns.

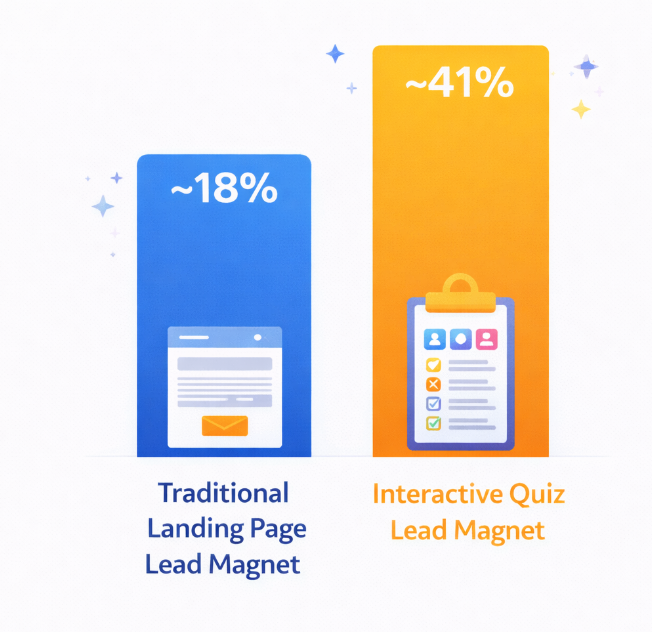

Conversion rate comparison between typical lead generation landing pages and interactive quiz-based lead magnets

Despite these gains, most teams limit experimentation to headline swaps or button color changes. Advanced split testing goes far beyond surface-level optimization and focuses on behavioral psychology, segmentation, statistical validity, and funnel alignment.

From Basic A/B Testing to Experimental Architecture

Move Beyond Single-Variable Testing

Traditional A/B testing isolates one element at a time. While methodologically clean, this approach can be slow. Advanced experimentation incorporates:

- Multivariate testing for interaction effects
- Sequential testing frameworks
- Adaptive traffic allocation models
- Behavioral segmentation splits
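As an illustration of adaptive traffic allocation, the sketch below uses Thompson sampling, which is one common approach and not necessarily what any particular testing platform implements. The variant names, conversion rates, and function names are hypothetical, and the simulation assumes each visitor converts independently.

```python
import random


def choose_variant(stats, rng):
    """Pick the variant to show next using Thompson sampling.

    stats maps variant name -> (opt_ins, visitors).
    """
    best_name, best_draw = None, -1.0
    for name, (opt_ins, visitors) in stats.items():
        # Beta(1 + successes, 1 + failures) is the posterior under a uniform prior.
        draw = rng.betavariate(1 + opt_ins, 1 + visitors - opt_ins)
        if draw > best_draw:
            best_name, best_draw = name, draw
    return best_name


def simulate(true_rates, visitors, seed=0):
    """Simulate traffic allocation against hypothetical true opt-in rates."""
    rng = random.Random(seed)
    stats = {name: (0, 0) for name in true_rates}
    for _ in range(visitors):
        name = choose_variant(stats, rng)
        opt_ins, seen = stats[name]
        converted = rng.random() < true_rates[name]
        stats[name] = (opt_ins + int(converted), seen + 1)
    return stats


if __name__ == "__main__":
    # Hypothetical rates for two framing variants.
    result = simulate({"pain_framing": 0.10, "outcome_framing": 0.14}, visitors=5000)
    for name, (opt_ins, seen) in result.items():
        print(f"{name}: {seen} visitors, {opt_ins} opt-ins")
```

Over time, more traffic tends to flow to the stronger variant, which reduces the cost of exploration. The trade-off is that classical fixed-sample significance calculations no longer apply directly to the resulting data.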

Instead of testing “Headline A vs. Headline B,” test structured hypotheses such as:

- Pain-driven framing vs. outcome-driven framing
- Immediate download vs. gated multi-step qualification
- Short-form opt-in vs. micro-commitment funnel

The objective is not cosmetic improvement but structural performance enhancement.

Designing High-Impact Hypotheses

Advanced split testing starts with disciplined hypothesis formation.

A strong hypothesis includes:

- Clear independent variable
- Defined dependent metric (e.g., conversion rate, lead quality score, pipeline velocity)
- Behavioral reasoning
- Expected magnitude of change

Example framework:

“If we reposition the lead magnet as a solution to a specific operational bottleneck rather than a general resource, conversion rates will increase because specificity reduces cognitive friction.”

Avoid vague test rationales. Hypothesis quality strongly correlates with test ROI.
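One way to enforce this discipline is to record each hypothesis in a fixed structure before the test launches. The sketch below is a minimal example of that idea. The class name, field names, and the 15% expected lift are illustrative assumptions, not part of any standard tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A test hypothesis with the four components listed above."""

    independent_variable: str      # what is being changed
    dependent_metric: str          # what is being measured
    behavioral_reasoning: str      # why the change should matter
    expected_relative_lift: float  # e.g. 0.15 for an expected +15%

    def is_complete(self) -> bool:
        """True only if every text field is filled in and a nonzero lift is stated."""
        text_fields = (
            self.independent_variable,
            self.dependent_metric,
            self.behavioral_reasoning,
        )
        return all(f.strip() for f in text_fields) and self.expected_relative_lift != 0


# The example hypothesis from this section; the 0.15 lift is a placeholder.
positioning_test = Hypothesis(
    independent_variable="Positioning: specific operational bottleneck vs. general resource",
    dependent_metric="conversion rate",
    behavioral_reasoning="Specificity reduces cognitive friction",
    expected_relative_lift=0.15,
)
print(positioning_test.is_complete())
```

Stating the expected lift up front also provides the input needed to estimate sample size before the test starts.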

Segment-Based Split Testing

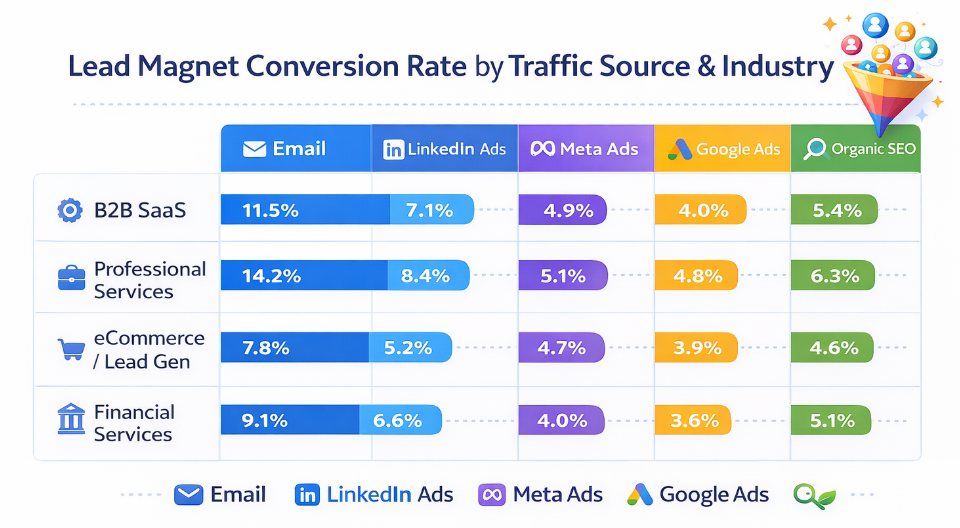

Comparison of landing page conversion rates to lead magnet opt-ins by traffic source and industry, highlighting variation in channel performance

Not all traffic behaves equally. High-performing teams test lead magnets across segmented audiences such as:

- Traffic source (organic, paid, outbound)
- Industry vertical
- Company size
- Job title or seniority
- Geographic region

Segment-level testing often reveals 2–3x variation in opt-in performance. What converts at 18% for paid traffic may convert at 6% for cold outbound audiences.

Instead of optimizing for blended averages, optimize per acquisition channel.
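The sketch below shows why a blended average can mislead. It uses hypothetical visitor counts chosen to reproduce the 18% and 6% rates mentioned above. The function names and the equal traffic split are assumptions made for illustration.

```python
def segment_rates(counts):
    """Return the opt-in rate for each segment.

    counts maps segment name -> (opt_ins, visitors).
    """
    return {
        segment: opt_ins / visitors
        for segment, (opt_ins, visitors) in counts.items()
        if visitors > 0
    }


def blended_rate(counts):
    """Return the single opt-in rate across all segments combined."""
    total_opt_ins = sum(opt_ins for opt_ins, _ in counts.values())
    total_visitors = sum(visitors for _, visitors in counts.values())
    return total_opt_ins / total_visitors if total_visitors else 0.0


# Hypothetical counts: 1,000 visitors per channel.
counts = {"paid": (180, 1000), "cold_outbound": (60, 1000)}

for segment, rate in segment_rates(counts).items():
    print(f"{segment}: {rate:.1%}")
print(f"blended: {blended_rate(counts):.1%}")
```

With these inputs the blended rate is 12%, a figure that describes neither channel. The blended number also shifts whenever the traffic mix changes, even if no variant has actually improved.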

Testing for Lead Quality, Not Just Volume

High opt-in rates do not always translate into revenue impact.

Advanced testing integrates downstream metrics:

- SQL rate (sales-qualified lead rate)
- Meeting booking rate
- Opportunity creation rate
- Pipeline value per lead

In many B2B campaigns, reducing opt-in volume by 10% while increasing qualification rate by 25% produces superior pipeline efficiency.

Use CRM-integrated analytics to measure the true performance of each lead magnet variant.
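The trade-off between volume and quality can be checked with simple arithmetic. The sketch below applies the 10% volume reduction and 25% qualification increase described above. The traffic figure and both baseline rates are hypothetical.

```python
def qualified_leads(visitors, opt_in_rate, qualification_rate):
    """Expected number of sales-qualified leads from a given amount of traffic."""
    return visitors * opt_in_rate * qualification_rate


# Hypothetical baseline: 10,000 visitors, 12% opt-in rate, 20% SQL rate.
VISITORS = 10_000
baseline = qualified_leads(VISITORS, opt_in_rate=0.12, qualification_rate=0.20)

# Variant: 10% fewer opt-ins, 25% higher qualification rate.
variant = qualified_leads(
    VISITORS, opt_in_rate=0.12 * 0.90, qualification_rate=0.20 * 1.25
)

print(f"baseline SQLs: {baseline:.0f}")
print(f"variant SQLs:  {variant:.0f}")
print(f"relative change: {variant / baseline - 1:+.1%}")
```

Because 0.90 × 1.25 = 1.125, the variant yields 12.5% more qualified leads from fewer total opt-ins, regardless of the baseline rates chosen.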

Statistical Discipline and Sample Size

One of the most common failures in split testing is drawing conclusions prematurely.

Best practices include:

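- Set the significance threshold before launch (typically a 95% confidence level, i.e. α = 0.05)
- Pre-calculate the minimum detectable effect
- Avoid peeking bias (stopping a test as soon as an interim result looks significant)
- Run tests for full traffic cycles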

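For example, if your baseline conversion rate is 12%, detecting a 15% relative lift (from 12% to 13.8%) typically requires several thousand visitors per variant at 95% confidence and 80% power. Underpowered tests tend to miss real effects and to overstate the ones they do detect, which leads to unreliable scaling decisions.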
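The sample size in this example can be estimated with the standard normal-approximation formula for comparing two proportions. The sketch below assumes a two-sided test, equal traffic per variant, and 80% power. The function names are illustrative, and dedicated tools may use slightly different formulas.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant to detect the given relative lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


if __name__ == "__main__":
    # 12% baseline, 15% relative lift, 95% confidence, 80% power.
    print(sample_size_per_variant(0.12, 0.15))
    # Hypothetical final counts: 120/1000 vs. 180/1000 opt-ins.
    print(two_proportion_p_value(120, 1000, 180, 1000))
```

For a 12% baseline and a 15% relative lift, the estimate lands between 5,000 and 6,000 visitors per variant. The p-value function is meant to be applied once, after the planned sample has been collected. Checking it repeatedly during the test reintroduces peeking bias.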

Multi-Step Lead Magnet Experiments

Advanced testing often focuses on funnel structure rather than static pages.

Experiment with:

- Single-step vs. two-step opt-ins
- Progressive profiling forms
- Personalized dynamic content blocks
- Exit-intent gated assets

Two-step opt-ins, in particular, frequently outperform single-step forms due to micro-commitment psychology. In many controlled experiments, this structure increases conversions by 10–30%.

Behavioral Framing Experiments

Test framing at a strategic level:

- Data-driven authority positioning vs. tactical checklist positioning
- Scarcity-based messaging vs. exclusivity-based messaging
- Immediate value vs. long-term strategic gain

Behavioral economics principles such as loss aversion, social proof, and specificity bias can significantly influence opt-in performance.

Avoid random experimentation. Anchor tests in cognitive science principles.

Iterative Optimization Framework

Advanced split testing is not a one-time initiative. It operates in cycles:

- Baseline measurement
- Hypothesis creation
- Controlled experiment
- Data analysis
- Rollout and documentation
- Next iteration

Organizations that formalize this loop often see sustained annual conversion improvements exceeding 30%.

Document every experiment. Institutional learning compounds over time.

Common Advanced Testing Mistakes

Even experienced teams encounter structural errors:

- Testing too many variables without traffic volume
- Ignoring segmentation
- Over-optimizing for top-of-funnel metrics
- Stopping tests early due to initial spikes
- Failing to validate technical implementation

Testing maturity requires discipline, not creativity alone.

Integrating Split Testing with Data Infrastructure

High-performing teams connect experimentation platforms with:

- CRM systems
- Lead scoring models
- Revenue attribution frameworks
- Behavioral analytics tools

This integration allows marketers to evaluate not only immediate opt-ins but lifetime value, deal velocity, and revenue contribution per lead magnet variant.

Advanced testing becomes a revenue optimization system, not just a UX exercise.

Strategic Takeaways

Advanced split testing for lead magnets requires:

- Structured hypothesis design
- Segment-based experimentation
- Statistical rigor
- Downstream revenue measurement
- Iterative documentation

When executed systematically, optimization efforts can produce measurable increases in both lead volume and sales efficiency.
