Marketers often rely on platform-reported conversions, but those numbers rarely show the true impact of advertising. Incrementality testing helps determine whether Meta Ads actually drive additional results or simply capture conversions that would have happened anyway.

What Incrementality Testing Means

Incrementality testing measures the lift that advertising actually creates by comparing two groups: one exposed to ads and one that is not. The difference in outcomes between the groups represents the incremental impact of advertising.

Traditional attribution models frequently overestimate performance. Studies from Meta show that standard attribution can overstate campaign impact by more than 30% in some cases. Incrementality testing isolates the true causal effect of advertising by using control groups.

For teams without analysts or advanced data infrastructure, structured experiments inside Meta Ads Manager provide a practical way to measure incremental performance.

Why Incrementality Matters for Meta Ads

Performance metrics such as ROAS or cost per acquisition often rely on attribution models that cannot fully separate correlation from causation. Incrementality testing addresses this issue.

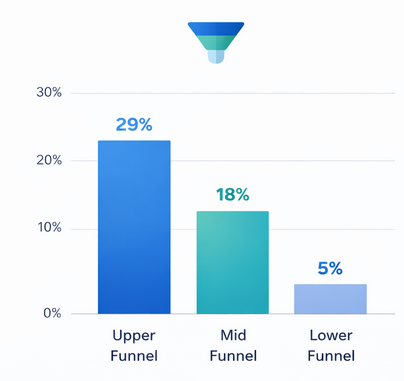

Incremental lift can vary significantly by funnel stage, with experiments showing higher lift in upper-funnel outcomes compared with lower-funnel conversions

Research by Nielsen has shown that nearly 60% of digital advertising spend can be misattributed when relying only on platform reporting. Incrementality testing provides a clearer view of what advertising actually generates.

When marketers run incrementality experiments regularly, they typically find that 10% to 40% of conversions would have occurred without advertising. Recognizing this gap helps teams reallocate budget toward campaigns that truly drive new demand.

Step 1: Define a Clear Test Objective

Before launching a test, determine the exact business question you want to answer. Common objectives include:

- Determining whether prospecting campaigns generate new customers
- Measuring incremental conversions from retargeting
- Evaluating whether a new creative concept increases incremental revenue

The key is to test one primary variable at a time. This ensures the results can be interpreted without complex statistical modeling.

Step 2: Create Control and Test Groups

Incrementality testing requires two groups:

Test group: Users who see the ads.

Control group: Users intentionally excluded from the ads.

In Meta Ads, this can be implemented using audience splits or geo-based segmentation. A simple approach is to exclude a portion of your audience from campaigns and compare outcomes.

For example, if a campaign targets 100,000 users, reserve 10–20% of them as a control group that receives no ads. The remaining users receive the campaign normally.
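If you build the split yourself rather than relying on a platform-managed holdout, the assignment should be random and stable. The sketch below assumes you start from a first-party list of customer IDs that you would upload as two separate custom audiences, excluding the control audience from the campaign. It hashes each salted ID into a bucket between 0 and 1 and places the lowest 15% in the control group. The function names, the salt, and the 15% default are illustrative assumptions, not part of any Meta tool.

```python
import hashlib


def assign_group(user_id: str, holdout_share: float = 0.15, salt: str = "lift-test-1") -> str:
    """Deterministically place one user in 'control' or 'test' (hypothetical helper).

    Hashing the salted ID yields a stable, roughly uniform number in [0, 1),
    so a user always lands in the same group for a given salt.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 2**32  # first 32 bits mapped to [0, 1)
    return "control" if bucket < holdout_share else "test"


def split_audience(user_ids, holdout_share=0.15, salt="lift-test-1"):
    """Split IDs into (test_ids, control_ids); the control group receives no ads."""
    test_ids, control_ids = [], []
    for uid in user_ids:
        if assign_group(uid, holdout_share, salt) == "control":
            control_ids.append(uid)
        else:
            test_ids.append(uid)
    return test_ids, control_ids


if __name__ == "__main__":
    # Hypothetical IDs standing in for a 100,000-person customer list.
    ids = [f"customer-{i}" for i in range(100_000)]
    test_ids, control_ids = split_audience(ids, holdout_share=0.15)
    print(f"test: {len(test_ids)}, control: {len(control_ids)}")
```

Keeping the salt fixed means a rebuilt list produces the same assignments during a test; changing it for the next test avoids holding out the same people every time.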

Step 3: Keep Campaign Conditions Stable

A common mistake in incrementality testing is changing campaign variables during the experiment. Budget, creatives, and targeting should remain constant throughout the test period.

Consistency ensures that differences between groups reflect advertising exposure rather than other variables. Most incrementality tests should run for at least two weeks to collect enough data.

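Statistical best practice is to reach at least several hundred conversions before drawing conclusions. The exact number depends on the baseline conversion rate, the size of the lift you expect to detect, and how much of the audience is held out.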
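To see where "several hundred conversions" comes from, a standard power calculation helps. This is a minimal sketch assuming a two-sided two-proportion z-test at 5% significance and 80% power, with a control group smaller than the test group. The baseline rate and expected lift are your own estimates, and the function name is hypothetical. It uses `statistics.NormalDist` from the Python standard library (3.8+).

```python
import math
from statistics import NormalDist


def required_sample_sizes(p_control, expected_lift, holdout_share=0.15,
                          alpha=0.05, power=0.80):
    """Users needed in each group to detect `expected_lift` with a two-sided
    two-proportion z-test, allowing a smaller control group.

    Returns (n_control, n_test, expected_total_conversions).
    """
    p_test = p_control * (1 + expected_lift)
    k = (1 - holdout_share) / holdout_share  # test users per control user

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)

    # Spread of the rate difference under "no lift" and under the expected lift,
    # expressed per control-group user.
    p_pooled = (p_control + k * p_test) / (1 + k)
    sd_null = math.sqrt(p_pooled * (1 - p_pooled) * (1 + 1 / k))
    sd_alt = math.sqrt(p_control * (1 - p_control) + p_test * (1 - p_test) / k)

    n_control = math.ceil(((z_alpha * sd_null + z_beta * sd_alt) / (p_test - p_control)) ** 2)
    n_test = math.ceil(k * n_control)
    expected_conversions = n_control * p_control + n_test * p_test
    return n_control, n_test, round(expected_conversions)


if __name__ == "__main__":
    # Assumed inputs: 3% baseline rate, 33% expected lift, 15% holdout.
    print(required_sample_sizes(p_control=0.03, expected_lift=1 / 3, holdout_share=0.15))
```

With these inputs the sketch asks for roughly 3,200 control users and 18,300 test users, around 830 conversions in total. Smaller expected lifts need far more data, because the required sample grows roughly with the inverse square of the difference between the two rates.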

Step 4: Measure Conversion Lift

After the test period, compare conversion rates between the test group and the control group.

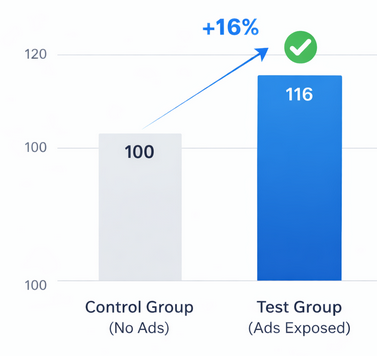

Example of incremental conversion lift: campaigns can generate around 16% additional conversions that would not occur without advertising exposure

Incremental lift can be calculated as:

Incremental Lift = (Conversion Rate of Test Group − Conversion Rate of Control Group) / Conversion Rate of Control Group

For instance:

- Test group conversion rate: 4.0%
- Control group conversion rate: 3.0%

Incremental lift = (4.0 − 3.0) / 3.0 = 33% lift

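This means the test group converted about one-third more often than the control group. Put another way, 1.0 of every 4.0 percentage points of the test group's conversion rate, or 25% of its conversions, would not have occurred without the ads; the other 75% would have happened anyway.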
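The calculation above can be sketched in a few lines. Lift is measured relative to the control rate, while the share of test-group conversions caused by the ads is measured relative to the test rate, which is why the same 4.0% vs 3.0% example gives 33% and 25%. The sketch also adds a pooled two-proportion z-test, one common way to check that the difference is unlikely to be noise; the article does not prescribe a specific test. The user and conversion counts are hypothetical.

```python
import math
from statistics import NormalDist


def lift_report(test_users, test_conversions, control_users, control_conversions):
    """Relative lift, incremental share, and a two-sided two-proportion z-test."""
    rate_test = test_conversions / test_users
    rate_control = control_conversions / control_users

    lift = (rate_test - rate_control) / rate_control            # relative to control
    incremental_share = (rate_test - rate_control) / rate_test  # share of test conversions caused by ads

    # Pooled z-test for the difference in conversion rates.
    pooled = (test_conversions + control_conversions) / (test_users + control_users)
    se = math.sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))
    z = (rate_test - rate_control) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    return {"lift": lift, "incremental_share": incremental_share, "z": z, "p_value": p_value}


if __name__ == "__main__":
    # Hypothetical counts that reproduce the 4.0% vs 3.0% example.
    report = lift_report(test_users=85_000, test_conversions=3_400,
                         control_users=15_000, control_conversions=450)
    for key, value in report.items():
        print(f"{key}: {value:.4f}")
```

A small p-value suggests the lift is real; with a much smaller sample, the same 4.0% vs 3.0% rates could easily fail the test.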

Step 5: Translate Results Into Budget Decisions

The primary goal of incrementality testing is not just measurement but optimization.

If a campaign produces high incremental lift, scaling spend may be justified. If the difference between test and control groups is minimal, the campaign may mainly capture existing demand.
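One way to turn lift into a budget signal is to compare cost per conversion with cost per incremental conversion. This is a minimal sketch assuming you know the spend delivered to the test group; it estimates the conversions that would have happened anyway by applying the control group's rate to the test group. The metric names and the $20,000 spend figure are illustrative assumptions.

```python
def incremental_economics(spend, test_users, test_conversions,
                          control_users, control_conversions):
    """Compare cost per conversion with cost per incremental conversion (hypothetical helper)."""
    # Conversions the test group would likely have produced anyway,
    # estimated by applying the control group's rate to the test group.
    baseline = control_conversions * test_users / control_users
    incremental = test_conversions - baseline

    cost_per_conversion = spend / test_conversions
    # If there is no measurable lift, each incremental conversion is effectively unaffordable.
    cost_per_incremental = spend / incremental if incremental > 0 else float("inf")
    return {
        "baseline_conversions": baseline,
        "incremental_conversions": incremental,
        "cost_per_conversion": cost_per_conversion,
        "cost_per_incremental_conversion": cost_per_incremental,
    }


if __name__ == "__main__":
    # Hypothetical: $20,000 spent on the test group from the 4.0% vs 3.0% example.
    result = incremental_economics(spend=20_000, test_users=85_000, test_conversions=3_400,
                                   control_users=15_000, control_conversions=450)
    for key, value in result.items():
        print(f"{key}: {value:,.2f}")
```

Here every conversion appears to cost about $5.88, but each incremental conversion costs about $23.53. Comparing the second figure with your target acquisition cost (a business input the article does not specify) is a more direct basis for deciding whether to scale.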

According to eMarketer research, advertisers who regularly run incrementality tests improve marketing efficiency by up to 25% through smarter budget allocation.

Common Pitfalls to Avoid

Even simple incrementality tests can fail if key principles are ignored.

Running tests for too short a period often produces unreliable results due to insufficient sample size.

Changing campaign settings mid-test introduces variables that invalidate comparisons.

Using audiences that overlap between test and control groups can contaminate results.

Maintaining strict experimental conditions ensures that the observed lift accurately reflects advertising impact.
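The overlap pitfall is easy to check before launch when both groups come from first-party lists. This sketch assumes the groups are email lists; it trims and lowercases each address so trivial variants count as the same person, then intersects the two sets. It only catches overlap within the lists you control, not people reached through other campaigns or under a different email. The function names are hypothetical.

```python
def normalize_email(email: str) -> str:
    """Trim whitespace and lowercase so trivial variants compare equal."""
    return email.strip().lower()


def find_overlap(test_emails, control_emails):
    """Return normalized emails present in both groups (should be empty)."""
    test_set = {normalize_email(e) for e in test_emails}
    control_set = {normalize_email(e) for e in control_emails}
    return test_set & control_set


if __name__ == "__main__":
    # Hypothetical lists exported before upload.
    test_list = ["ana@example.com", "Ben@Example.com "]
    control_list = ["ben@example.com", "chloe@example.com"]
    overlap = find_overlap(test_list, control_list)
    if overlap:
        print(f"{len(overlap)} user(s) in both groups: {sorted(overlap)}")
    else:
        print("No overlap between test and control lists.")
```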

How Often You Should Run Incrementality Tests

Incrementality testing should not be a one-time exercise. Consumer behavior, competition, and creative performance change constantly.

Many performance teams run incrementality experiments every quarter. This cadence lets marketers reassess which campaigns truly generate new demand and which only look effective because of attribution bias.

Over time, these insights build a clearer understanding of how advertising contributes to growth.

Final Thoughts

Incrementality testing is one of the most reliable methods for measuring the true effectiveness of Meta Ads. Even without a data team, marketers can run structured experiments by splitting audiences, maintaining stable campaign conditions, and comparing conversion rates between exposed and control groups.

The insights gained from these tests often reveal hidden inefficiencies in advertising strategies and help redirect budgets toward campaigns that generate real business impact.