In performance marketing, creative testing has become a core discipline. Teams continuously experiment with new messaging, visuals, formats, and hooks to discover what resonates with their audience. While this approach can unlock significant gains, it can also introduce inefficiencies, confusion, and diminishing returns if not managed properly.

Understanding when creative testing becomes counterproductive is essential for maintaining both performance and operational efficiency.

The Promise of Creative Testing

Creative testing allows marketers to:

- Identify high-performing messaging faster
- Adapt to audience preferences and market shifts
- Improve key metrics such as CTR, conversion rate, and ROI
- Reduce reliance on guesswork

According to industry benchmarks, structured creative testing can improve conversion rates by up to 30% and reduce customer acquisition costs by 20–25%. Additionally, campaigns that refresh creatives regularly can see engagement increases of 15–20% compared to static campaigns.

Despite these advantages, there is a tipping point where testing no longer delivers incremental value.

When Testing Starts to Backfire

1. Excessive Volume Without Clear Hypotheses

Running too many tests simultaneously without a clear framework leads to noisy data and inconclusive results. Instead of learning what works, teams end up with conflicting signals.

Research shows that marketers who test more than 10 variables at once without structured hypotheses are 40% more likely to misinterpret performance outcomes.
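One mechanism behind these conflicting signals is the multiple-comparisons problem: every extra test evaluated at a fixed significance level adds another chance of a false winner. The sketch below assumes independent tests, each judged at α = 0.05, with no true difference between variants. It shows how fast the chance of at least one spurious "winner" grows, and the Bonferroni-corrected per-test threshold that would hold it down. The function names are illustrative, not from any library.

```python
def family_wise_error_rate(k: int, alpha: float = 0.05) -> float:
    """P(at least one false positive) across k independent tests with no real effect."""
    return 1 - (1 - alpha) ** k


def bonferroni_alpha(k: int, alpha: float = 0.05) -> float:
    """Per-test threshold that keeps the family-wise error rate at or below alpha."""
    return alpha / k


if __name__ == "__main__":
    for k in (1, 5, 10, 20):
        print(f"{k:>2} tests: P(>=1 false winner) = {family_wise_error_rate(k):.0%}, "
              f"Bonferroni per-test alpha = {bonferroni_alpha(k):.4f}")
```

Under these assumptions, twenty simultaneous tests carry roughly a 64% chance that at least one "win" is pure noise. Bonferroni is deliberately conservative. Less strict corrections such as Holm or Benjamini-Hochberg exist, but all of them make each individual test harder to pass as the number of tests grows.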

2. Insufficient Time for Learning

Creative tests require enough time and data to reach statistical significance. Cutting tests short—or rotating creatives too quickly—prevents reliable insights.

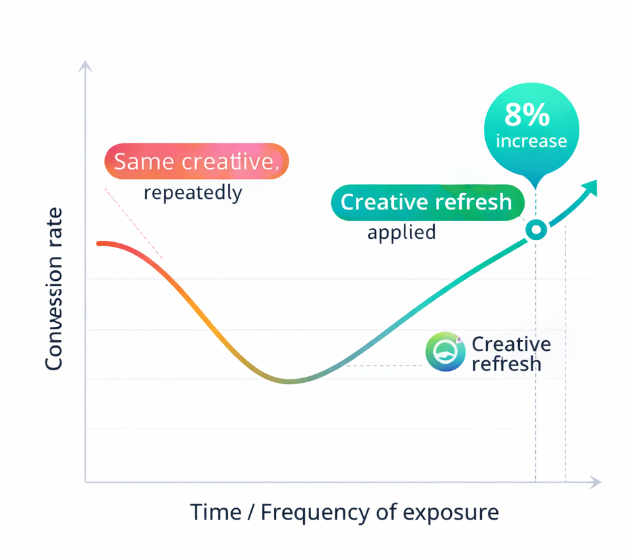

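A common issue is "creative fatigue anxiety": teams replace assets prematurely, before any real fatigue has set in. In reality, many creatives reach peak performance only after exiting the ad platform's learning phase, the initial period in which the delivery algorithm is still calibrating.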
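How much data counts as "enough" can be estimated before launch. The sketch below uses the standard normal-approximation sample-size formula for a two-sided, two-proportion z-test. It assumes the metric is a per-impression rate such as CTR, with a 5% significance level and 80% power. The function name and the example inputs (a 1% baseline CTR and a 10% relative lift) are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Impressions needed per variant to detect a relative lift in a rate.

    Normal approximation for a two-sided two-proportion z-test (no continuity
    correction).
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


if __name__ == "__main__":
    # Hypothetical inputs: 1% baseline CTR, aiming to detect a 10% relative lift.
    print(sample_size_per_variant(0.01, 0.10))
```

With those inputs, the estimate comes out at roughly 163,000 impressions per variant. Small relative lifts on low baseline rates therefore take far longer to confirm than many rotation schedules allow. Halving the detectable lift roughly quadruples the required sample.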

3. Budget Fragmentation

Fewer, well-funded creative tests generate clearer and more statistically reliable insights than fragmented testing across too many variations.

Spreading budget across too many variations reduces the impact of each test. As a result, none of the creatives receive enough impressions to generate meaningful data.

Studies indicate that campaigns with concentrated budgets achieve up to 35% more reliable performance insights compared to heavily fragmented testing setups.
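The fragmentation effect is simple arithmetic. A fixed budget buys a fixed number of impressions, and every added variant shrinks each one's share. This sketch assumes impressions are bought at a flat CPM (cost per 1,000 impressions) and split evenly across variants. The budget, CPM, and per-variant requirement below are hypothetical placeholders. In practice, the requirement would come from a sample-size calculation.

```python
def total_impressions(budget: float, cpm: float) -> float:
    # CPM is the cost per 1,000 impressions.
    return budget / cpm * 1000


def impressions_per_variant(budget: float, cpm: float, variants: int) -> int:
    """Impressions each variant receives under an even split."""
    return int(total_impressions(budget, cpm) // variants)


def max_powered_variants(budget: float, cpm: float, required_per_variant: int) -> int:
    """Largest number of variants that can each reach the required sample."""
    return int(total_impressions(budget, cpm) // required_per_variant)


if __name__ == "__main__":
    BUDGET, CPM = 10_000, 10.0  # hypothetical: $10,000 at a $10 CPM
    REQUIRED = 160_000          # hypothetical per-variant sample requirement
    for n in (2, 4, 8, 16):
        per = impressions_per_variant(BUDGET, CPM, n)
        status = "ok" if per >= REQUIRED else "underpowered"
        print(f"{n:>2} variants -> {per:,} impressions each ({status})")
    print("max well-powered variants:", max_powered_variants(BUDGET, CPM, REQUIRED))
```

Under these assumptions, a $10,000 budget at a $10 CPM supports at most six well-powered variants. At eight or more, every variant falls short and none can produce a conclusive result.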

4. Over-Optimization and Creative Burnout

Constant iteration can lead to over-optimization, where creatives become too similar or overly tuned to short-term performance metrics.

This often results in:

- Loss of originality
- Reduced brand differentiation
- Audience fatigue

In fact, over-tested creative sets can see engagement drops of 10–15% over time due to lack of novelty.

5. Misalignment with Strategic Goals

Not all tests contribute to broader marketing objectives. When teams focus only on micro-metrics (like CTR), they may overlook long-term value indicators such as customer lifetime value or brand perception.
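A quick calculation shows how a CTR winner can lose on business value. The sketch assumes value can be approximated as impressions × CTR × conversion rate × average customer lifetime value, ignoring media cost and margin. The `CreativeResult` type and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CreativeResult:
    name: str
    ctr: float              # clicks / impressions
    conversion_rate: float  # conversions / clicks
    avg_ltv: float          # average lifetime value per converted customer


def value_per_mille(c: CreativeResult) -> float:
    """Expected customer lifetime value generated per 1,000 impressions."""
    return 1000 * c.ctr * c.conversion_rate * c.avg_ltv


if __name__ == "__main__":
    # Hypothetical results for two creatives.
    creatives = [
        CreativeResult("A", ctr=0.020, conversion_rate=0.03, avg_ltv=120.0),
        CreativeResult("B", ctr=0.015, conversion_rate=0.04, avg_ltv=150.0),
    ]
    print("CTR winner:  ", max(creatives, key=lambda c: c.ctr).name)
    print("Value winner:", max(creatives, key=value_per_mille).name)
    for c in creatives:
        print(f"{c.name}: {value_per_mille(c):.2f} per 1,000 impressions")
```

Here creative A wins on CTR (2.0% vs 1.5%). However, B produces about 90 in lifetime value per 1,000 impressions against A's 72, because its clicks convert better and bring in higher-value customers.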

Signs Your Creative Testing Is Counterproductive

You may be over-testing if:

- Results are inconsistent or difficult to interpret
- Winning creatives fail to scale
- Testing cycles are too short to gather meaningful data
- Teams spend more time producing variations than analyzing results
- Performance improvements plateau despite increased testing

How to Regain Control

1. Prioritize Hypothesis-Driven Testing

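Every test should start from a clear hypothesis. Instead of testing random variations, define what you are changing, which metric you expect to move, and why. For example: "Leading the headline with a price anchor will raise conversion rate, because our audience is price-sensitive."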
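One way to enforce this is to record the hypothesis as data before launch and then judge the result only against it. The sketch below pairs a hypothetical `Hypothesis` record with a pooled two-proportion z-test, a standard test for comparing two rates that is used here as one reasonable choice. A result counts as support only if it is significant and moves the metric in the predicted direction. The field names, the 5% threshold, and the example counts are assumptions.

```python
import math
from dataclasses import dataclass
from statistics import NormalDist
from typing import Tuple


@dataclass
class Hypothesis:
    change: str     # what differs between control and variant
    metric: str     # the one metric expected to move
    direction: int  # +1 if the variant should raise the metric, -1 if it should lower it
    rationale: str  # why the change is expected to work


def two_proportion_z_test(x_a: int, n_a: int, x_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for H0: rate_a == rate_b, pooled standard error."""
    p_a, p_b = x_a / n_a, x_b / n_b
    pooled = (x_a + x_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def supports(h: Hypothesis, x_a: int, n_a: int, x_b: int, n_b: int,
             alpha: float = 0.05) -> bool:
    """True only if the result is significant AND in the predicted direction."""
    z, p_value = two_proportion_z_test(x_a, n_a, x_b, n_b)
    return p_value < alpha and (z > 0) == (h.direction > 0)


if __name__ == "__main__":
    # Hypothetical hypothesis and results (control = A, variant = B).
    h = Hypothesis(
        change="Headline leads with a price anchor",
        metric="conversion rate",
        direction=+1,
        rationale="Audience research suggests strong price sensitivity",
    )
    z, p = two_proportion_z_test(1_000, 50_000, 1_150, 50_000)
    print(f"z = {z:.2f}, p = {p:.4f}, supported: {supports(h, 1_000, 50_000, 1_150, 50_000)}")
```

With these hypothetical counts (2.0% vs 2.3% conversion), z is about 3.3 and the hypothesis is supported. A significant drop would count against the hypothesis rather than being reinterpreted after the fact.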

2. Limit Concurrent Tests

Focus on fewer, high-quality experiments. This improves data clarity and ensures each test receives sufficient budget and exposure.

3. Establish Testing Cycles

Allow enough time for creatives to exit the learning phase before making decisions. Structured cycles lead to more reliable insights.
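Cycle length can be planned up front from expected traffic. This sketch assumes impressions are split evenly across variants and that the per-variant requirement is already known. The one-week floor is a hypothetical default meant to cover weekday and weekend patterns, not a platform rule. Platform learning phases are not modeled, so treat the result as a lower bound.

```python
import math


def estimate_cycle_days(required_per_variant: int, variants: int,
                        daily_impressions: int, min_days: int = 7) -> int:
    """Days until every variant reaches its required sample, with a minimum floor.

    Assumes an even traffic split; min_days is a hypothetical floor, not a platform rule.
    """
    per_variant_daily = daily_impressions / variants
    days_for_sample = math.ceil(required_per_variant / per_variant_daily)
    return max(days_for_sample, min_days)


if __name__ == "__main__":
    for variants in (2, 3, 4, 6):
        days = estimate_cycle_days(160_000, variants, daily_impressions=60_000)
        print(f"{variants} variants -> {days} days")
```

With a hypothetical 160,000-impression requirement and 60,000 impressions per day, three variants need 8 days and four need 11. Each added variant directly lengthens the cycle.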

4. Balance Exploration and Exploitation

Dedicate part of your budget to scaling proven creatives while reserving a smaller portion for experimentation.
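The split can be expressed as a simple allocation rule. This sketch assumes a fixed exploration share (20% here, a hypothetical default rather than a benchmark). It spreads the exploitation budget across proven creatives in proportion to an observed performance score and divides the exploration budget evenly among new tests. The function and parameter names are illustrative. Adaptive approaches such as multi-armed bandits (for example, Thompson sampling) shift the split automatically as evidence accumulates.

```python
from typing import Dict, List


def split_budget(total: float, proven: Dict[str, float], experiments: List[str],
                 explore_share: float = 0.2) -> Dict[str, float]:
    """Split spend between scaling proven creatives and testing new ones."""
    if not experiments:
        explore_share = 0.0  # nothing to test: all spend goes to proven creatives
    elif not proven:
        explore_share = 1.0  # nothing proven yet: all spend goes to tests
    explore_budget = total * explore_share
    exploit_budget = total - explore_budget

    allocation: Dict[str, float] = {}
    score_sum = sum(proven.values())
    for name, score in proven.items():
        # Proportional to observed score; fall back to an even split if all scores are 0.
        share = score / score_sum if score_sum > 0 else 1 / len(proven)
        allocation[name] = exploit_budget * share
    for name in experiments:
        allocation[name] = explore_budget / len(experiments)
    return allocation


if __name__ == "__main__":
    # Hypothetical scores, e.g. value per 1,000 impressions from past tests.
    plan = split_budget(10_000, proven={"A": 90.0, "B": 60.0}, experiments=["C", "D"])
    for name, amount in plan.items():
        print(f"{name}: {amount:,.0f}")
```

For a budget of 10,000, proven creatives scoring 90 and 60 receive 4,800 and 3,200, while the two new tests receive 1,000 each.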

5. Align Testing with Business Outcomes

Ensure that creative testing supports broader goals such as revenue growth, retention, and brand positioning—not just short-term engagement metrics.

Conclusion

Creative testing remains a powerful tool, but only when applied with discipline. Without structure, it can quickly become a source of inefficiency and misleading insights.

The key is not to test more, but to test smarter—focusing on meaningful experiments, clear hypotheses, and sustainable performance improvements.
