Creative testing is widely regarded as one of the most effective levers for improving Facebook Ads performance. Marketers iterate on visuals, copy, and formats to identify winning combinations that drive engagement and conversions. However, in accounts with low conversion volume, creative testing frequently delivers inconclusive or misleading results.

The issue is not the concept of creative testing itself; it is the lack of sufficient data and structural readiness within the account. Without these, even the most sophisticated testing strategies become unreliable.

The Data Threshold Problem

Facebook’s algorithm relies heavily on conversion data to optimize delivery. According to Meta’s guidance, an ad set generally exits the learning phase after about 50 optimization events within 7 days of its last significant edit. Ad sets that fall short of this threshold are flagged as "Learning limited" and keep delivering without the signal needed to stabilize.
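
As a rough check, you can estimate whether an ad set's budget can reach that threshold at all. The Python sketch below assumes the daily budget is fully spent and the cost per optimization event stays constant. The helper names and the $40 cost per purchase are hypothetical, and the 50-event figure is Meta's approximate guideline rather than a hard cutoff.

```python
# Hypothetical helpers for a back-of-the-envelope learning-phase check.
# Assumptions: the daily budget is fully spent and cost per event stays constant,
# neither of which real delivery guarantees.

LEARNING_PHASE_EVENTS = 50  # approximate events per 7 days, per Meta's guidance


def weekly_events(daily_budget: float, cost_per_event: float) -> float:
    """Expected optimization events over 7 days at a constant cost per event."""
    return daily_budget * 7 / cost_per_event


def likely_to_exit_learning(daily_budget: float, cost_per_event: float) -> bool:
    """True if expected weekly events reach the ~50-event guideline."""
    return weekly_events(daily_budget, cost_per_event) >= LEARNING_PHASE_EVENTS


def min_daily_budget(cost_per_event: float) -> float:
    """Daily budget needed to expect ~50 events in 7 days at this cost per event."""
    return LEARNING_PHASE_EVENTS * cost_per_event / 7


# Example: $50/day with a hypothetical $40 cost per purchase
print(weekly_events(50, 40))            # 8.75 purchases per week
print(likely_to_exit_learning(50, 40))  # False
print(round(min_daily_budget(40), 2))   # 285.71
```

At a hypothetical $40 cost per purchase, a $50/day ad set can expect fewer than 9 purchases a week, far below the guideline; it would need roughly $286/day to get there.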

In low-conversion accounts:

- The algorithm cannot accurately evaluate creative performance
- Results fluctuate due to statistical noise
- Winning creatives cannot be reliably identified

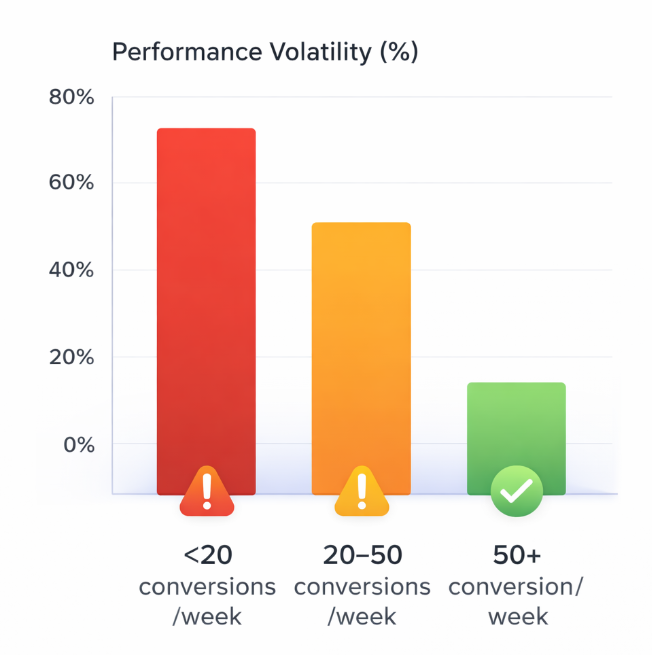

Performance marketing benchmarks suggest that campaigns with fewer than 30 conversions per week can see performance swings of more than 50%, which leaves most test results statistically inconclusive.
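
One way to see why low volume is so noisy: if conversions are modeled as a Poisson process (an assumption, and a simplification of real ad delivery), the standard deviation of a weekly count equals the square root of its mean. The sketch below uses only the standard library and prints the relative noise and a rough 95% range for a few weekly rates.

```python
import math


def relative_noise(expected_per_week: float) -> float:
    """Std / mean of a Poisson count, i.e. 1 / sqrt(mean)."""
    return 1 / math.sqrt(expected_per_week)


def plausible_range(expected_per_week: float, z: float = 1.96) -> tuple[float, float]:
    """Rough 95% range of observed weekly counts (normal approximation to Poisson)."""
    spread = z * math.sqrt(expected_per_week)
    return max(0.0, expected_per_week - spread), expected_per_week + spread


for expected in (5, 10, 30, 100):
    low, high = plausible_range(expected)
    print(f"{expected:>3}/week: +/-{relative_noise(expected):.0%} per std dev, "
          f"observed counts from about {low:.0f} to {high:.0f}")
```

At a true rate of 10 conversions per week, any observed count from about 4 to 16 is consistent with nothing having changed, so a swing of 50% or more between weeks can be pure noise. The normal approximation is crude at very low counts, but it is enough to show the scale of the problem.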

Misattribution of Creative Performance

When conversion volume is low, marketers often attribute poor performance directly to creative quality. In reality, the issue is frequently rooted in:

- Audience targeting inefficiencies
- Weak offer-market fit
- Poor landing page experience

Research indicates that landing page experience alone can change conversion rates by a factor of 2 to 5. If the funnel is broken downstream, no creative variation will perform consistently.

As a result, creatives are prematurely discarded or incorrectly labeled as ineffective, leading to wasted time and budget.
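
A simple multiplicative funnel model shows why a weak landing page can swamp creative differences. The sketch below assumes conversions equal impressions times CTR times landing-page conversion rate; the function name and all numbers are hypothetical.

```python
def expected_conversions(impressions: int, ctr: float, landing_cvr: float) -> float:
    """Conversions = impressions x click-through rate x landing-page conversion rate."""
    return impressions * ctr * landing_cvr


IMPRESSIONS = 100_000  # hypothetical weekly impressions

# Two hypothetical creatives (1% vs 2% CTR) against a weak and a fixed landing page
for landing_cvr in (0.005, 0.02):
    for ctr in (0.01, 0.02):
        conv = expected_conversions(IMPRESSIONS, ctr, landing_cvr)
        print(f"landing CVR {landing_cvr:.1%}, CTR {ctr:.0%}: {conv:.0f} conversions")
```

Doubling CTR only doubles conversions (5 to 10), while fixing the landing page (0.5% to 2.0%) quadruples them for every creative, so the weaker creative on the fixed page (20) beats the stronger creative on the broken page (10).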

The Learning Phase Trap

In low-conversion accounts, ad sets rarely exit the learning phase. Adding a new creative counts as a significant edit, so each launch restarts learning and further delays optimization.

Low conversion volume keeps campaigns in the learning phase, which leads to significantly higher performance volatility.

This creates a cycle:

- New creatives are launched
- Insufficient data prevents stabilization
- Performance appears inconsistent
- Creatives are replaced too quickly

According to Meta, frequent changes during the learning phase can increase CPA by up to 20–30% due to delivery instability.

Budget Fragmentation

Creative testing often involves splitting budgets across multiple ad sets or ads. In low-conversion accounts, this fragmentation reduces the already limited data per variation.

For example:

- A $50/day budget split evenly across 5 creatives leaves only $10/day per creative
- With low conversion rates, some creatives may generate zero conversions during the test period

This leads to false negatives, where potentially strong creatives are paused due to lack of visible results.
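
The chance of such a false negative can be estimated directly. The sketch below assumes spend is split evenly across creatives (Meta's delivery usually is not), conversions follow a Poisson distribution, and a hypothetical $40 CPA over a 7-day test; the function names are illustrative.

```python
import math


def expected_conversions_per_creative(daily_budget: float, n_creatives: int,
                                      days: int, cpa: float) -> float:
    """Expected conversions for one creative, assuming an even budget split."""
    return daily_budget / n_creatives * days / cpa


def p_zero(expected: float) -> float:
    """Poisson probability of observing zero conversions."""
    return math.exp(-expected)


def p_any_creative_zero(expected: float, n_creatives: int) -> float:
    """Chance that at least one of n equally good creatives records zero conversions."""
    return 1 - (1 - p_zero(expected)) ** n_creatives


# $50/day across 5 creatives for 7 days, with a hypothetical $40 CPA
lam = expected_conversions_per_creative(50, 5, 7, 40)  # 1.75 conversions each
print(f"P(one creative shows 0): {p_zero(lam):.1%}")
print(f"P(at least one of 5 shows 0): {p_any_creative_zero(lam, 5):.1%}")
```

Under these assumptions each creative expects only 1.75 conversions, so any single creative has about a 17% chance of showing zero, and there is better than a 60% chance that at least one of five equally good creatives shows zero and gets paused.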

Overreliance on Short-Term Metrics

In the absence of conversions, marketers often rely on proxy metrics such as CTR, CPC, or engagement rates. While useful, these metrics do not necessarily correlate with conversion performance.

Industry data shows that high CTR creatives do not always translate into higher conversion rates, especially when the messaging attracts low-intent users.

Optimizing for clicks instead of conversions can further degrade account performance over time.
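
Ranking by CTR and ranking by conversions can disagree. In the sketch below, the `CreativeStats` class and all rates are hypothetical; conversions per 1,000 impressions is simply CTR multiplied by the click-to-conversion rate.

```python
from dataclasses import dataclass


@dataclass
class CreativeStats:
    name: str
    ctr: float            # clicks / impressions
    click_to_conv: float  # conversions / clicks

    def conversions_per_1000_impressions(self) -> float:
        return 1000 * self.ctr * self.click_to_conv


# Hypothetical numbers: A draws more clicks, B draws higher-intent clicks
creatives = [
    CreativeStats("A", ctr=0.025, click_to_conv=0.008),
    CreativeStats("B", ctr=0.012, click_to_conv=0.030),
]

best_by_ctr = max(creatives, key=lambda c: c.ctr)
best_by_conversions = max(creatives, key=lambda c: c.conversions_per_1000_impressions())
print(best_by_ctr.name, best_by_conversions.name)  # A B
```

Creative A wins on CTR (2.5% vs 1.2%) but produces 0.2 conversions per 1,000 impressions against B's 0.36.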

Structural Issues in Low‑Conversion Accounts

In these accounts, creative testing usually fails because of structural limitations rather than execution errors:

- Insufficient conversion volume
- Lack of event prioritization
- Weak signal quality for optimization
- Inefficient campaign architecture

Without addressing these foundational issues, testing more creatives only amplifies inefficiency.

How to Fix Creative Testing in Low‑Conversion Accounts

1. Consolidate Campaign Structure

Reduce the number of ad sets and creatives to concentrate data. Fewer variables allow the algorithm to gather meaningful signals faster.

2. Optimize for Higher-Frequency Events

Instead of optimizing for purchases, consider optimizing for higher-volume events such as add-to-cart or landing page views. This provides more data for the algorithm to learn from.
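
To choose the event, one option is to estimate weekly volume at each funnel stage and pick the deepest event that still clears the roughly 50-events-per-week guideline. The stage rates and helper names below are hypothetical assumptions for illustration.

```python
from typing import Optional

THRESHOLD = 50  # approximate weekly events needed to exit the learning phase


def weekly_funnel_volume(landing_page_views: float, atc_rate: float,
                         purchase_rate: float) -> dict[str, float]:
    """Weekly event counts per funnel stage, ordered shallowest to deepest."""
    add_to_cart = landing_page_views * atc_rate
    purchases = add_to_cart * purchase_rate
    return {
        "landing_page_view": landing_page_views,
        "add_to_cart": add_to_cart,
        "purchase": purchases,
    }


def deepest_viable_event(volumes: dict[str, float],
                         threshold: float = THRESHOLD) -> Optional[str]:
    """Return the deepest funnel event that still clears the weekly threshold."""
    viable = [event for event, count in volumes.items() if count >= threshold]
    return viable[-1] if viable else None


volumes = weekly_funnel_volume(landing_page_views=1200, atc_rate=0.08, purchase_rate=0.25)
print(deepest_viable_event(volumes))  # add_to_cart
```

With 1,200 landing page views, an 8% add-to-cart rate, and a 25% purchase rate, add-to-cart (about 96 per week) clears the threshold while purchases (about 24) do not. The trade-off is that shallower events are weaker proxies for revenue, the same risk described for CTR and CPC above.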

3. Increase Budget per Test

Ensure each creative receives enough spend to generate statistically meaningful results. As a rule of thumb, allocate enough budget to reach at least 20–30 events per variation before making decisions.
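
The budget implied by that rule of thumb is easy to compute, and it shows why higher-frequency events matter. The sketch assumes a constant cost per event and uses hypothetical costs of $40 per purchase and $8 per add-to-cart; the function names are illustrative.

```python
import math


def required_test_budget(events_per_variation: int, cost_per_event: float,
                         variations: int) -> float:
    """Total spend needed for every variation to reach the target event count."""
    return events_per_variation * cost_per_event * variations


def required_test_days(events_per_variation: int, cost_per_event: float,
                       variations: int, daily_budget: float) -> int:
    """Whole days of spend needed at a given daily budget."""
    total = required_test_budget(events_per_variation, cost_per_event, variations)
    return math.ceil(total / daily_budget)


# 30 events per variation, 3 variations, $50/day, hypothetical costs per event
print(required_test_budget(30, 40, 3), required_test_days(30, 40, 3, 50))  # 3600 72
print(required_test_budget(30, 8, 3), required_test_days(30, 8, 3, 50))    # 720 15
```

Testing three creatives to 30 purchases each needs about $3,600, or 72 days at $50/day; the same test on add-to-cart events needs $720, or 15 days.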

4. Fix the Funnel First

Before testing creatives, audit the entire conversion funnel:

- Offer clarity
- Landing page speed and UX
- Trust signals and messaging consistency

Even small improvements in funnel efficiency can significantly increase conversion volume.
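
Because funnel stages multiply, modest gains at several steps compound. The sketch assumes each stage's rate improves independently; the 10% uplifts are hypothetical.

```python
from math import prod


def conversion_multiplier(stage_uplifts: list[float]) -> float:
    """Overall conversion multiplier when independent funnel-stage rates each improve."""
    return prod(1 + uplift for uplift in stage_uplifts)


# Hypothetical: 10% relative gains from offer clarity, page speed/UX, and trust signals
print(f"{conversion_multiplier([0.10, 0.10, 0.10]):.3f}")  # 1.331
```

Three 10% improvements raise conversion volume by about 33% without any change to the creatives.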

5. Extend Testing Duration

Avoid making decisions too early. Low-conversion accounts require longer testing windows to accumulate enough data for reliable conclusions.
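
Before declaring a winner, it helps to check whether the observed gap could be noise. The article does not prescribe a test; the sketch below uses a standard pooled two-proportion z-test as one common choice, with hypothetical conversion and click counts.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value of a pooled two-proportion z-test (normal approximation)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation in either group: no evidence of a difference
    z = (p_a - p_b) / se
    return math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))


# Same conversion rates (1.5% vs 2.5% of clicks), one week vs two weeks of data
print(round(two_proportion_p_value(12, 800, 20, 800), 3))
print(round(two_proportion_p_value(24, 1600, 40, 1600), 3))
```

With the same 1.5% vs 2.5% conversion rates, one week of data gives p ≈ 0.15 (inconclusive), while two weeks gives p ≈ 0.04. The normal approximation is unreliable when a variation has only a handful of conversions; Fisher's exact test (for example `scipy.stats.fisher_exact`) is a safer choice there.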

Conclusion

Creative testing is a powerful tool, but it depends on data density and account stability. In low-conversion Facebook Ads accounts, the lack of sufficient signals prevents the algorithm from making accurate optimizations, leading to misleading test outcomes.

Instead of continuously rotating creatives, advertisers should focus on improving conversion volume, consolidating campaign structure, and strengthening the overall funnel. Once these elements are in place, creative testing becomes significantly more effective and actionable.
