In modern marketing and content ecosystems, creative output is often judged by performance metrics tied to reach, impressions, and engagement. Yet a significant number of creatives never receive sufficient delivery to generate statistically meaningful results. As a consequence, their work is prematurely labeled as underperforming, and opportunities diminish before real potential can be proven.

This problem is not purely a question of creative quality. It is frequently driven by structural inefficiencies, testing biases, and limitations in campaign execution.

The Delivery Gap: A Hidden Bottleneck

According to industry benchmarks, it can take between 10,000 and 50,000 impressions for a creative asset to reach performance stability in paid campaigns. However, many organizations terminate or deprioritize creatives long before they reach even 5,000 impressions.
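To see why thousands of impressions are needed, the standard sample-size formula for comparing two proportions can be applied to click-through rate (CTR). The sketch below is a minimal illustration, assuming a two-sided test at a 5% significance level with 80% power. The function name and the CTR values are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def impressions_per_creative(p1: float, p2: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Impressions each creative needs for a two-sided two-proportion z-test
    to detect a CTR difference between rates p1 and p2 (hypothetical helper)."""
    if p1 == p2:
        raise ValueError("p1 and p2 must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    p_bar = (p1 + p2) / 2                          # average rate under the null hypothesis
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p1 - p2) ** 2)


# Hypothetical example: a baseline CTR of 1.0% versus a challenger at 1.3%.
print(impressions_per_creative(0.010, 0.013))
```

With these assumed rates, each creative needs on the order of 20,000 impressions, which falls inside the range quoted above. Smaller expected differences raise the requirement quickly, because the sample size scales with the inverse square of the gap between the two rates.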

A study by Nielsen found that nearly 47% of ad performance is driven by creative quality, yet only a fraction of tested creatives receive enough delivery to accurately measure that quality.

This mismatch creates a “delivery gap”—a situation where evaluation occurs without sufficient exposure.

Why Creatives Don’t Get Enough Delivery

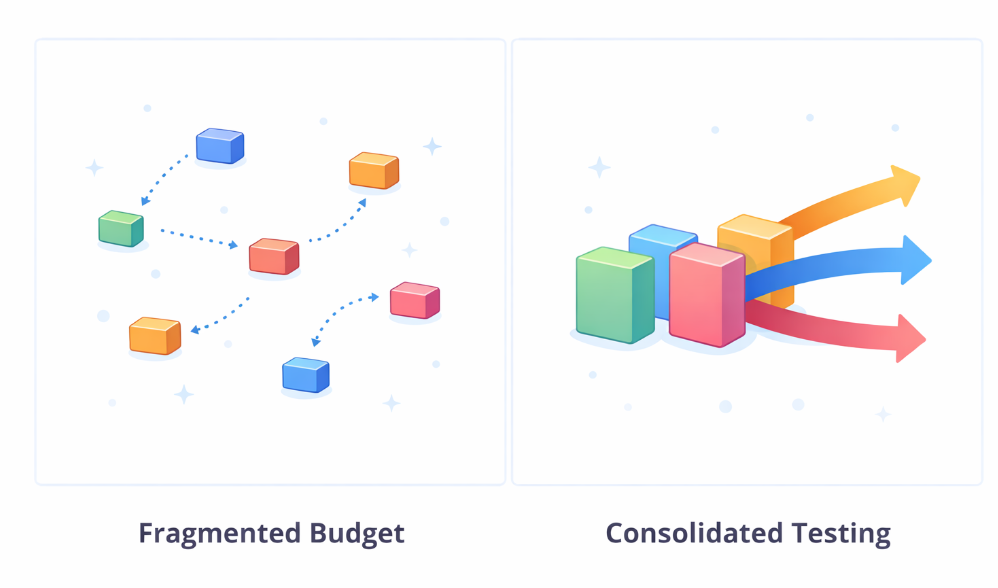

1. Budget Fragmentation

Marketing teams frequently distribute budgets across too many campaigns, audiences, and creative variants. While diversification is important, excessive fragmentation reduces the delivery each creative receives.

When budgets are spread thin, algorithms cannot gather enough data to optimize effectively, resulting in inconclusive or misleading performance signals.
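The arithmetic of fragmentation is easy to sketch. The example assumes a fixed budget, a flat CPM (cost per 1,000 impressions), and an even split across variants. These are hypothetical simplifications, since real platforms do not split delivery evenly. Under those assumptions, average delivery per variant falls in direct proportion to the number of variants:

```python
def impressions_per_variant(budget: float, cpm: float, n_variants: int) -> float:
    """Average impressions per variant if a budget is split evenly (hypothetical helper).

    budget: total spend in currency units; cpm: cost per 1,000 impressions.
    """
    total_impressions = budget / cpm * 1000
    return total_impressions / n_variants


# Hypothetical figures: a budget of 5,000 at a CPM of 10 buys 500,000 impressions.
for n in (5, 20, 100):
    print(n, "variants ->", round(impressions_per_variant(5_000, 10, n)), "impressions each")
```

With these figures, 5 variants receive 100,000 impressions each, while 100 variants receive only 5,000 each. That is well below the level at which results stabilize.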

2. Premature Optimization Decisions


Many teams make optimization decisions within the first 24–72 hours of launching a campaign. During this period, performance data is often volatile and not statistically reliable.

Meta's advertiser documentation indicates that an ad set typically needs around 50 optimization events within a seven-day window to exit the learning phase. Ending a creative's run before it reaches this threshold prevents the system from stabilizing.
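A quick calculation shows why a 24 to 72 hour review is usually too early. The sketch below uses the 50-event threshold mentioned above. The seven-day window is an assumption based on Meta's published learning-phase guidance and should be checked against current documentation. The helper names and the input figures are hypothetical.

```python
from math import ceil

LEARNING_EVENTS = 50  # optimization events per ad set, as cited above
WINDOW_DAYS = 7       # assumption: seven-day window from Meta's learning-phase guidance


def min_daily_budget(cost_per_event: float, events: int = LEARNING_EVENTS,
                     window_days: int = WINDOW_DAYS) -> float:
    """Daily spend needed to accumulate `events` optimization events within `window_days`."""
    return events * cost_per_event / window_days


def days_to_threshold(daily_events: float, events: int = LEARNING_EVENTS) -> int:
    """Whole days needed to reach the event threshold at a steady daily rate."""
    if daily_events <= 0:
        raise ValueError("daily_events must be positive")
    return ceil(events / daily_events)


# Hypothetical ad set producing 4 conversions per day.
print(days_to_threshold(4))
# Hypothetical cost per conversion of 20 currency units.
print(round(min_daily_budget(20.0), 2))
```

At 4 conversions per day, the ad set needs 13 days to reach 50 events, far beyond a 72-hour decision point. Reaching the threshold within seven days at a cost of 20 per conversion would take roughly 143 per day in spend.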

3. Algorithmic Bias Toward Early Winners

Ad platforms are designed to allocate more delivery to creatives that show early positive signals. While efficient in theory, this mechanism can suppress potentially strong creatives that require more time to resonate with audiences.

As a result, a small subset of creatives captures most of the delivery, while others remain underexposed.
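The suppression effect can be illustrated with a deliberately simplified winner-take-all rule. The rule gives all remaining delivery to whichever creative has the highest observed CTR so far. This is a hypothetical toy model, not the allocation algorithm of any real ad platform, which is more sophisticated. It shows how a noisy early read can starve a creative of further delivery:

```python
from typing import Dict


def greedy_allocation(clicks: Dict[str, int], impressions: Dict[str, int],
                      remaining: int) -> Dict[str, int]:
    """Give all remaining impressions to the creative with the highest observed CTR.

    Hypothetical toy rule for illustration only; ties go to the first creative listed.
    """
    ctr = {name: clicks[name] / impressions[name] for name in impressions}
    leader = max(ctr, key=ctr.get)
    return {name: (remaining if name == leader else 0) for name in impressions}


# Hypothetical early data: after 500 impressions each, A has 4 clicks and B has 2.
print(greedy_allocation({"A": 4, "B": 2}, {"A": 500, "B": 500}, remaining=9_000))
```

A gap of two clicks on 500 impressions is well within normal noise. Under this rule, however, B receives no further impressions and its true performance is never measured.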

4. Lack of Structured Testing Frameworks

Without a clear testing methodology, creatives are often launched in uncontrolled environments where multiple variables change simultaneously. This makes it difficult to isolate performance drivers and leads to inconsistent delivery.

A structured approach—such as isolating variables and using controlled experiments—can significantly improve both delivery distribution and insight quality.
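One common way to isolate variables is a one-factor-at-a-time design. Each test cell changes exactly one element relative to a control creative. The sketch below builds such cells. The variable names (`hook`, `visual`, `cta`) and their values are hypothetical placeholders for whatever creative elements a team actually tests.

```python
from typing import Dict, List


def one_factor_variants(control: Dict[str, str],
                        alternatives: Dict[str, List[str]]) -> List[Dict[str, str]]:
    """Build test cells that each differ from the control in exactly one variable."""
    cells = [dict(control)]  # the control itself is the first cell
    for variable, options in alternatives.items():
        for option in options:
            if option == control[variable]:
                continue  # skip options identical to the control
            cell = dict(control)
            cell[variable] = option
            cells.append(cell)
    return cells


control = {"hook": "question", "visual": "product", "cta": "Shop now"}
alternatives = {"hook": ["statistic"], "cta": ["Learn more", "Get started"]}
for cell in one_factor_variants(control, alternatives):
    print(cell)
```

Because each cell differs from the control in a single element, any performance gap can be attributed to that element. This holds only once every cell has received enough delivery.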

The Cost of Under-Delivery

Failing to provide adequate delivery has measurable consequences:

- Misallocation of budget toward short-term performers
- Loss of potentially high-performing creative concepts
- Reduced innovation due to risk-averse decision-making
- Lower overall return on ad spend (ROAS)

According to Deloitte, companies that implement disciplined testing strategies can improve marketing ROI by up to 30%.

How to Ensure Creatives Get a Fair Chance

1. Consolidate Budgets Strategically

Instead of spreading budgets across numerous small experiments, focus on fewer, well-structured tests. This ensures each creative receives sufficient impressions to generate reliable data.
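Consolidation can be planned backwards from a per-variant delivery floor. The sketch below assumes an even split and a flat CPM, both simplifications. It computes how many variants a budget can support if each variant must reach a chosen minimum number of impressions. The 20,000-impression floor and the budget figures are hypothetical.

```python
from math import floor


def max_variants(budget: float, cpm: float, min_impressions: int) -> int:
    """Largest number of variants that can each reach `min_impressions` on an even split."""
    total_impressions = budget / cpm * 1000
    return floor(total_impressions / min_impressions)


# Hypothetical: budget of 5,000, CPM of 10, and a 20,000-impression floor per variant.
print(max_variants(5_000, 10, 20_000))  # 25
```

Capping the test at this number of variants keeps every creative above the chosen floor. Adding more variants pushes some of them below it.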

2. Define Clear Evaluation Thresholds

Set minimum delivery requirements—such as impressions, clicks, or conversions—before making performance decisions. This prevents premature conclusions.
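Evaluation thresholds can be enforced as a simple gate that runs before any performance decision. In the sketch below, the threshold values and helper names are hypothetical and should be set from a team's own volumes and goals. A creative is considered ready only when it meets every configured minimum.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical minimums; tune them to your own funnel and volumes.
    impressions: int = 10_000
    clicks: int = 100
    conversions: int = 50


def unmet_thresholds(impressions: int, clicks: int, conversions: int,
                     t: Optional[Thresholds] = None) -> List[str]:
    """Return the names of any delivery minimums a creative has not yet reached."""
    t = t or Thresholds()
    observed = {"impressions": impressions, "clicks": clicks, "conversions": conversions}
    required = {"impressions": t.impressions, "clicks": t.clicks, "conversions": t.conversions}
    return [name for name in observed if observed[name] < required[name]]


# Hypothetical creative after two days: enough impressions and clicks, too few conversions.
missing = unmet_thresholds(impressions=12_000, clicks=140, conversions=18)
print("evaluate now" if not missing else f"keep running; below minimum on: {missing}")
```

Returning the list of unmet minimums, rather than a single yes or no, makes it clear which signal is still too thin to judge.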

3. Implement Phased Testing

Adopt a two-stage approach: initial exploration followed by scaling. In the exploration phase, allow creatives to reach data sufficiency. In the scaling phase, allocate more budget to validated performers.
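The two phases can be expressed as two allocation rules. This is a minimal sketch under hypothetical assumptions. Exploration splits the budget evenly across variants. Scaling backs only the variants already marked as validated, again evenly among them. A production version might instead weight scaling budget by observed performance. The function names and figures are illustrative.

```python
from typing import Dict, List


def exploration_split(variants: List[str], budget: float) -> Dict[str, float]:
    """Phase 1: split evenly so every variant can reach data sufficiency."""
    share = budget / len(variants)
    return {v: share for v in variants}


def scaling_split(variants: List[str], validated: List[str], budget: float) -> Dict[str, float]:
    """Phase 2: put the scaling budget behind validated variants only."""
    if not validated:
        return {v: 0.0 for v in variants}  # nothing validated yet: allocate no scaling budget
    share = budget / len(validated)
    return {v: (share if v in validated else 0.0) for v in variants}


variants = ["A", "B", "C", "D"]
print(exploration_split(variants, 2_000))
# Hypothetical outcome: only B and D passed the evaluation thresholds and significance test.
print(scaling_split(variants, ["B", "D"], 8_000))
```

Keeping the two phases as separate rules makes the decision point explicit. Budget moves to scaling only after a variant has been validated, not when it first looks promising.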

4. Monitor Statistical Significance

Use statistical tests, such as a two-proportion z-test on click-through or conversion rates, to determine whether performance differences are meaningful rather than noise. Avoid relying solely on surface-level metrics in the early stages.
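A two-proportion z-test is one standard way to check whether a CTR difference between two creatives is statistically meaningful. The sketch below uses a pooled-variance, two-sided version with the normal approximation, which is reasonable when click counts are not tiny. The function name and the click and impression counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist
from typing import Tuple


def two_proportion_z_test(clicks_a: int, imps_a: int,
                          clicks_b: int, imps_b: int) -> Tuple[float, float]:
    """Two-sided pooled z-test for a difference in CTR between creatives A and B.

    Returns (z statistic, p-value); positive z means B's observed CTR is higher.
    """
    p_a = clicks_a / imps_a
    p_b = clicks_b / imps_b
    pooled = (clicks_a + clicks_b) / (imps_a + imps_b)  # CTR assuming no true difference
    se = sqrt(pooled * (1 - pooled) * (1 / imps_a + 1 / imps_b))
    if se == 0:
        raise ValueError("standard error is zero; need at least one click and one non-click")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical early read: 1.2% vs 1.6% CTR on 2,500 impressions each.
z, p = two_proportion_z_test(30, 2_500, 40, 2_500)
print(f"z = {z:.2f}, p = {p:.3f}")
```

In this example, the challenger shows a one-third relative lift. With 2,500 impressions per creative, the difference is still not significant at the 5% level (p is roughly 0.23). The same rates on ten times the delivery would clear that bar comfortably, which is the delivery gap in miniature.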

Conclusion

Creative success is not solely determined by quality—it is also a function of opportunity. Without adequate delivery, even the most promising ideas can go unnoticed.

Organizations that recognize and address the delivery gap gain a competitive advantage. By aligning budget allocation, testing frameworks, and evaluation criteria, they create an environment where creativity can be accurately assessed and effectively scaled.
